Fionce Siow

Ryan Warrier

Data is central to any business: it powers mission-critical applications, informs business decisions, and supports the growing adoption of AI/ML models. As a result, data volumes are only increasing, and teams rely on engines like Apache Spark and managed platforms like Databricks or Amazon EMR to process this data at scale. These systems process data in parallel on large clusters and enable teams to transform and analyze large datasets with ease and speed—but they also introduce some challenges of their own.

Due to the complexity of these systems, troubleshooting issues in your data pipelines can be tedious. Not only can failures have many potential causes (e.g., insufficient resourcing, bad configuration, or inefficient queries), but it’s also difficult to manually correlate relevant information from the Spark UI, logs, and infrastructure metrics to find the root cause. In addition, teams often lack a comprehensive view into all their jobs and clusters. This can lead to increased time to detect and resolve issues when pipelines are broken or data is missing, as well as higher costs due to the inability to identify and optimize expensive jobs and clusters.

Datadog Data Jobs Monitoring (DJM) helps solve these challenges by enabling data platform teams and data engineers to quickly detect and debug failing or long-running jobs while offering insights into job cost and optimization opportunities. It also gathers performance telemetry from your Spark and Databricks jobs across all accounts and environments, so you have full context to understand the health and efficiency of your data pipelines.

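In this post, we’ll walk through how DJM helps you detect failing and long-running jobs, pinpoint and resolve job issues faster, and reduce costs by optimizing overprovisioned clusters and inefficient jobs.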

Detect failing and long-running jobs

Because data pipelines consist of multiple technologies—including some that are distributed by nature, such as Spark—it can be difficult to monitor all components of the pipeline in a unified way and set up alerting at scale, especially for data pipelines that span cloud providers and accounts. As a result, it’s difficult for teams to have the right detection capabilities in place, and they often find out about downstream data issues impacting stakeholders hours or days after their jobs fail or take too long to complete.

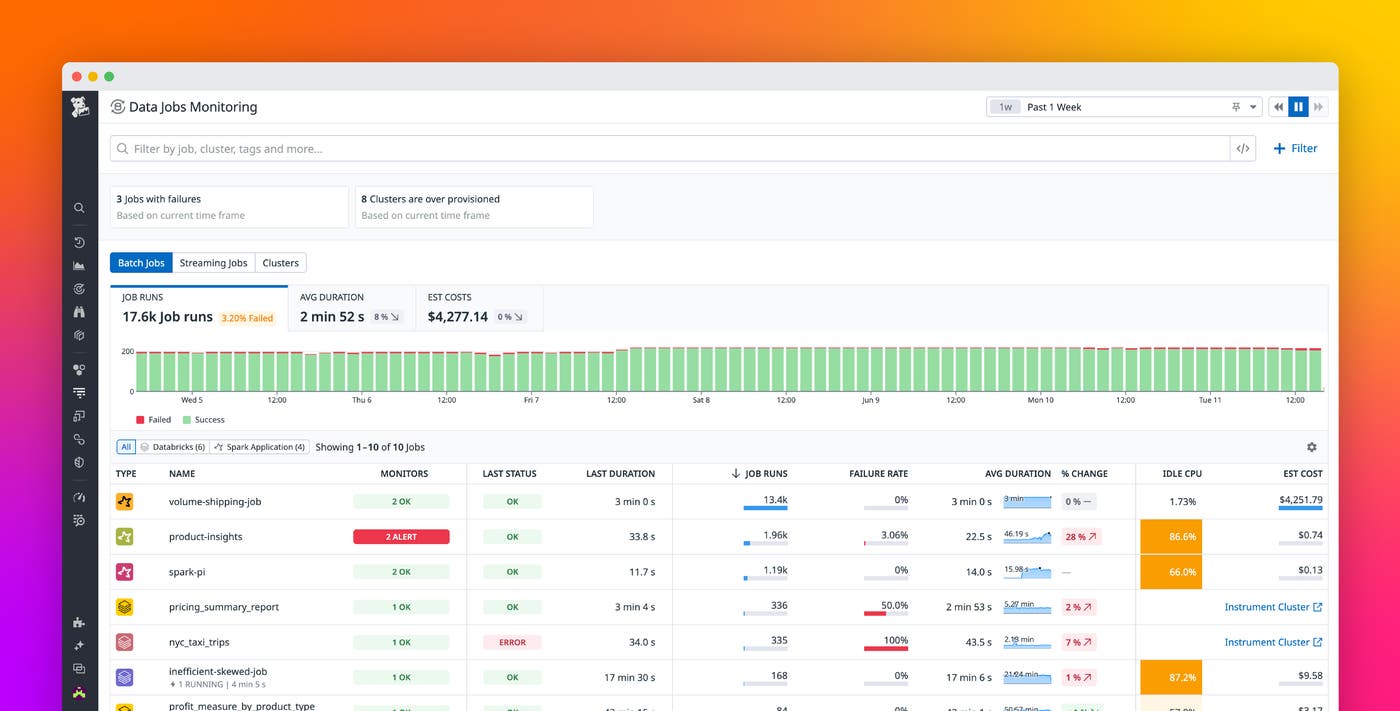

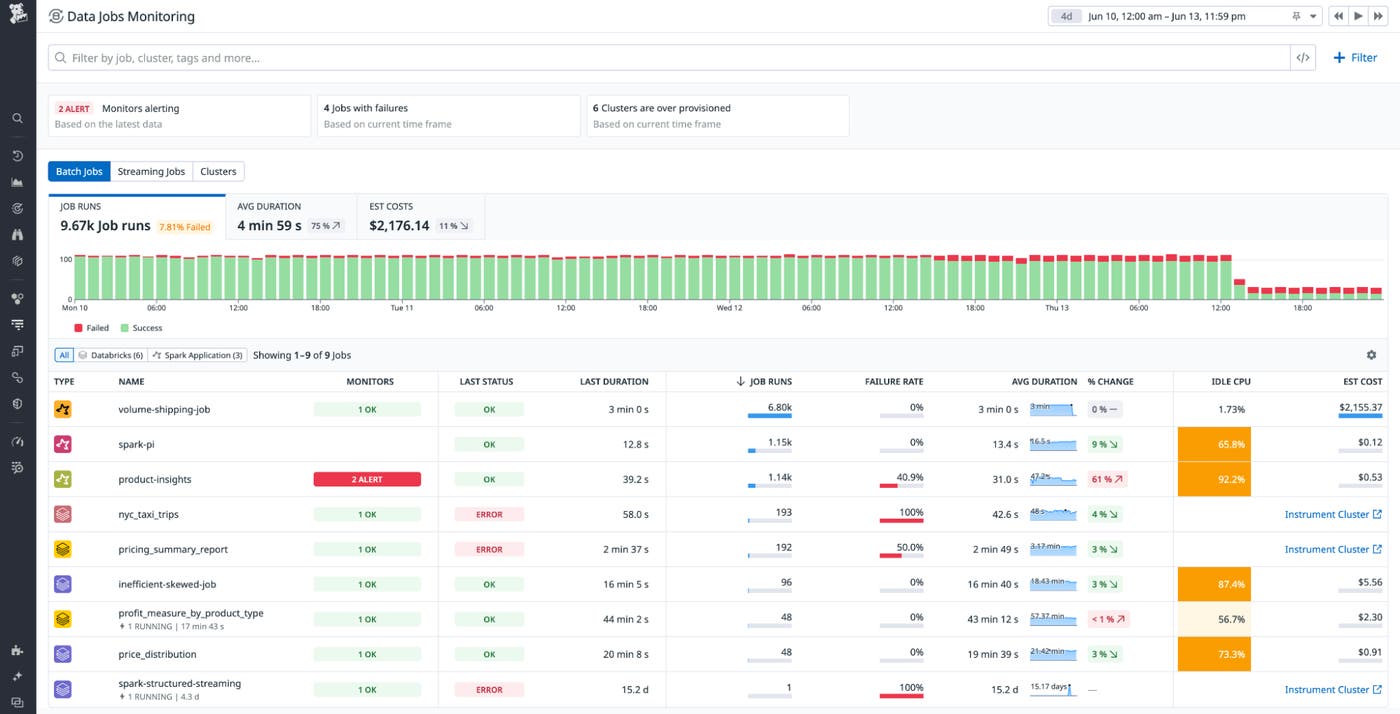

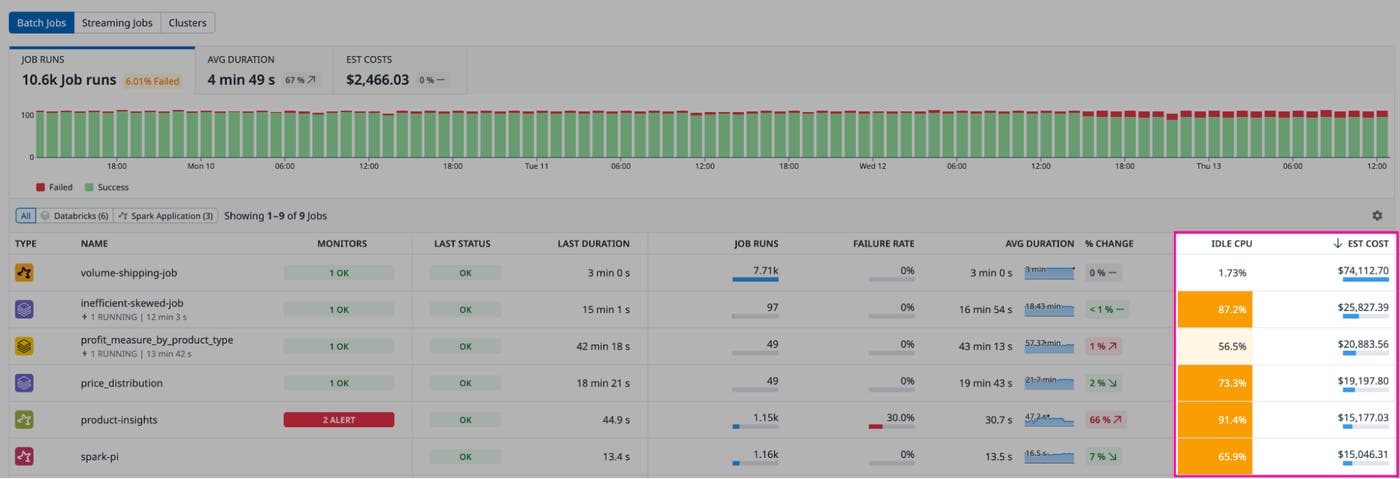

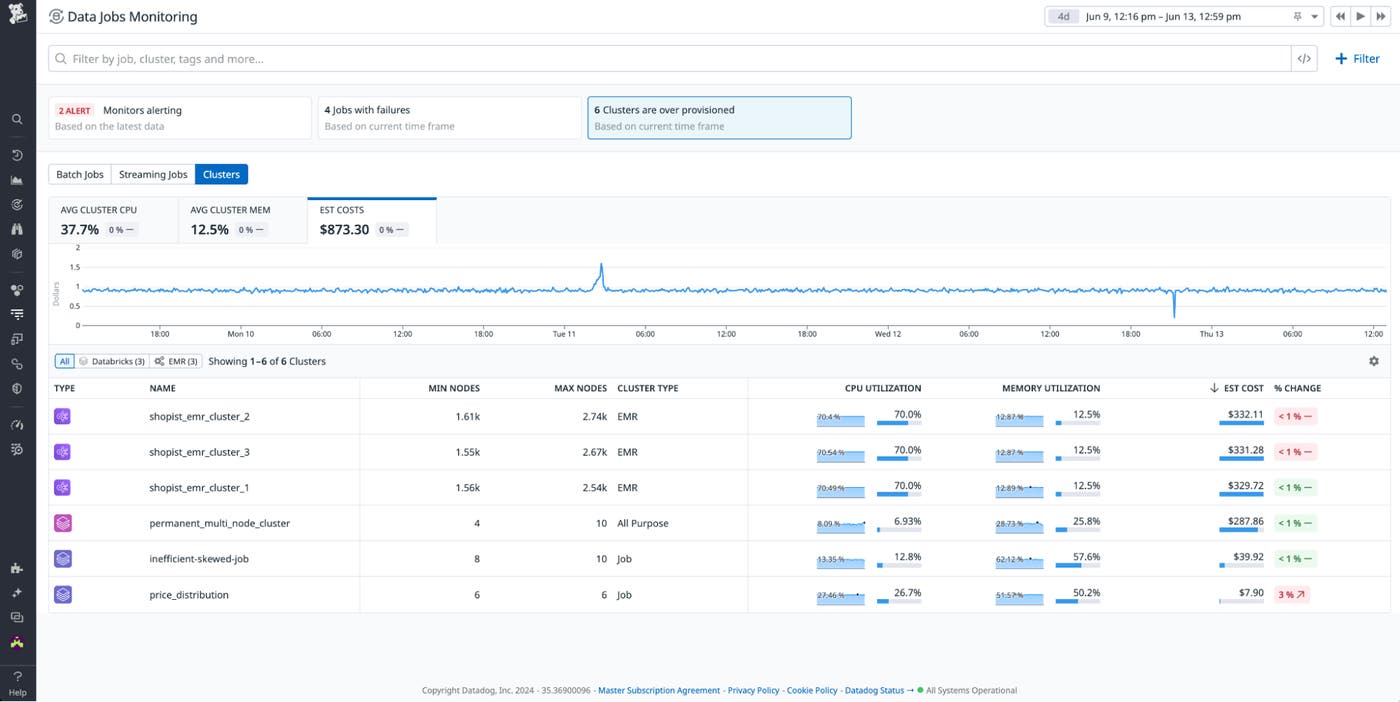

DJM enables you to identify issues with your data processing workloads and dive deeper to troubleshoot without relying on painstaking manual processes. The DJM Overview page provides a single pane of glass where you can see all your batch and streaming Spark and Databricks jobs and clusters across accounts and workspaces, so you can quickly assess job health and performance.

You can use recommended filters that surface actionable issues, such as failed jobs, jobs with run duration spikes, or overprovisioned clusters. You can also filter your jobs to a specific context you’re interested in: by job, status, cluster, environment, workspace, or custom tag; by type of job (i.e., streaming or batch jobs) or cluster; and more.

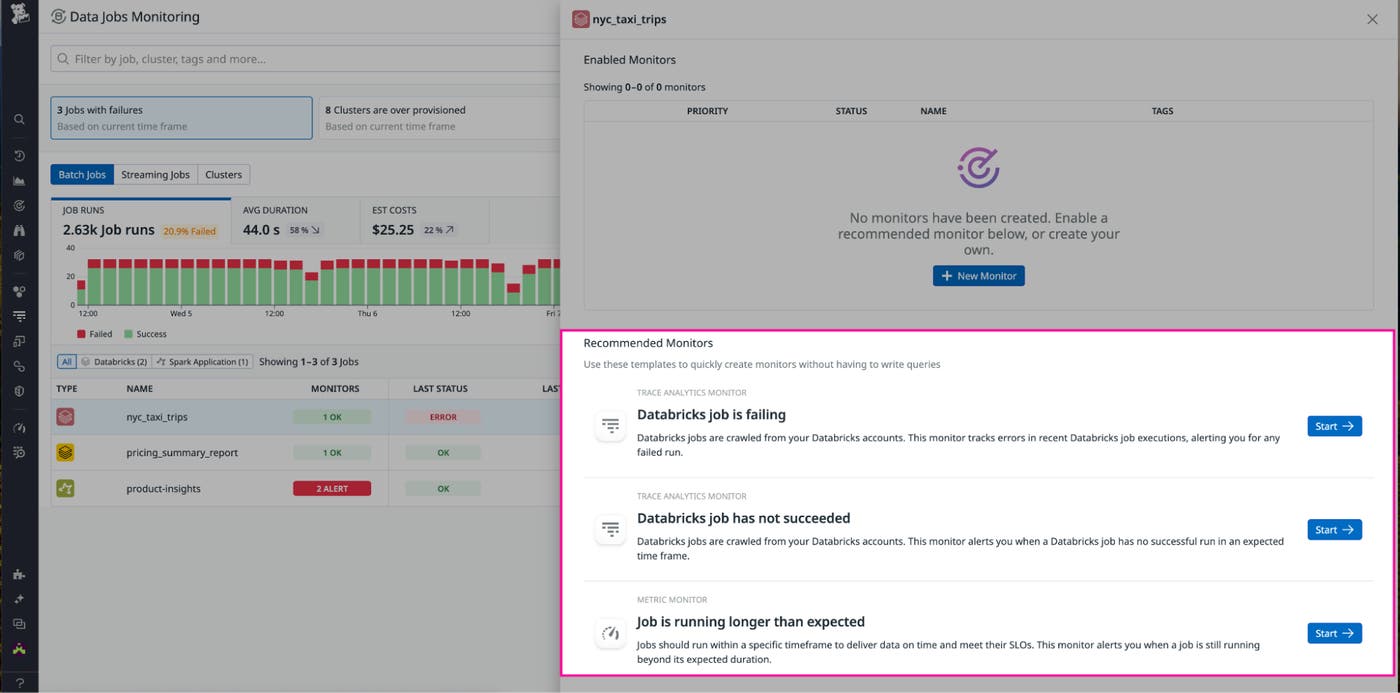

DJM provides preconfigured recommended monitors that notify you when jobs fail or are still running past their expected completion time, so you can set up alerting without writing your own queries. The status of these monitors is visible right from the DJM Overview page.

You can make monitors as broad or specific as you want. For example, you can use tags to configure a single alert that notifies a team if any of their jobs have failed, or you can create an alert on a business-critical job that runs longer than its required two-hour threshold.
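The two monitor shapes described above reduce to simple conditions over job-run records. The sketch below is plain Python, not Datadog's monitor API or query syntax. The `JobRun` fields, the `team:` tag format, the job names, and both helper functions are hypothetical and exist only to illustrate the logic.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class JobRun:
    # Hypothetical record of one job run; not a Datadog data model.
    job_name: str
    status: str  # "running", "succeeded", or "failed"
    started_at: datetime
    tags: set = field(default_factory=set)


def failed_runs_for_team(runs, team_tag):
    """Broad alert: any failed run that carries the team's tag."""
    return [r for r in runs if r.status == "failed" and team_tag in r.tags]


def overdue_runs(runs, job_name, max_duration, now):
    """Specific alert: a named job that is still running past its threshold."""
    return [
        r for r in runs
        if r.job_name == job_name
        and r.status == "running"
        and now - r.started_at > max_duration
    ]


# Made-up sample data for illustration.
now = datetime(2024, 1, 1, 12, 0)
runs = [
    JobRun("nightly-billing", "running", now - timedelta(hours=3), {"team:analytics"}),
    JobRun("daily-export", "failed", now - timedelta(minutes=30), {"team:analytics"}),
    JobRun("ad-hoc-report", "failed", now - timedelta(minutes=10), {"team:growth"}),
]

team_failures = failed_runs_for_team(runs, "team:analytics")
late = overdue_runs(runs, "nightly-billing", timedelta(hours=2), now)
print([r.job_name for r in team_failures])
print([r.job_name for r in late])
```

The tag-based check covers every job a team owns with one rule, while the duration check targets a single job with its own threshold.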

Pinpoint and resolve job issues faster

Remediating issues in Spark jobs can be complex, as teams need to track multiple points of failure and inefficiencies—the root cause could lie in your code or query logic, the Spark configuration, or the number of nodes or instance types in the infrastructure. Organizations are often forced to switch between different tools just to access a limited view of this data that still requires manual correlation.

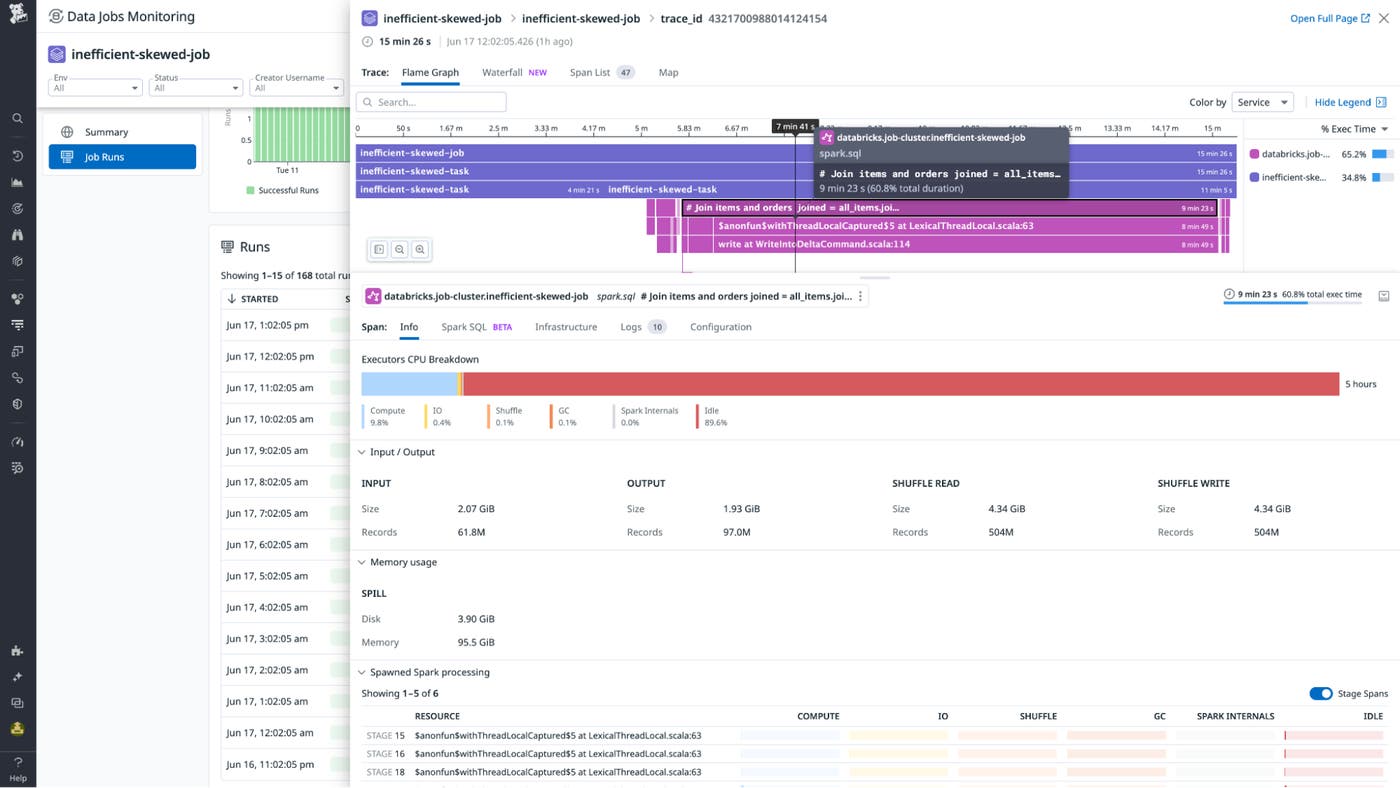

DJM provides a detailed job execution trace that brings together your Spark metrics, Spark configuration, cloud infrastructure metrics, stack traces, and logs to help you localize and remediate issues faster.

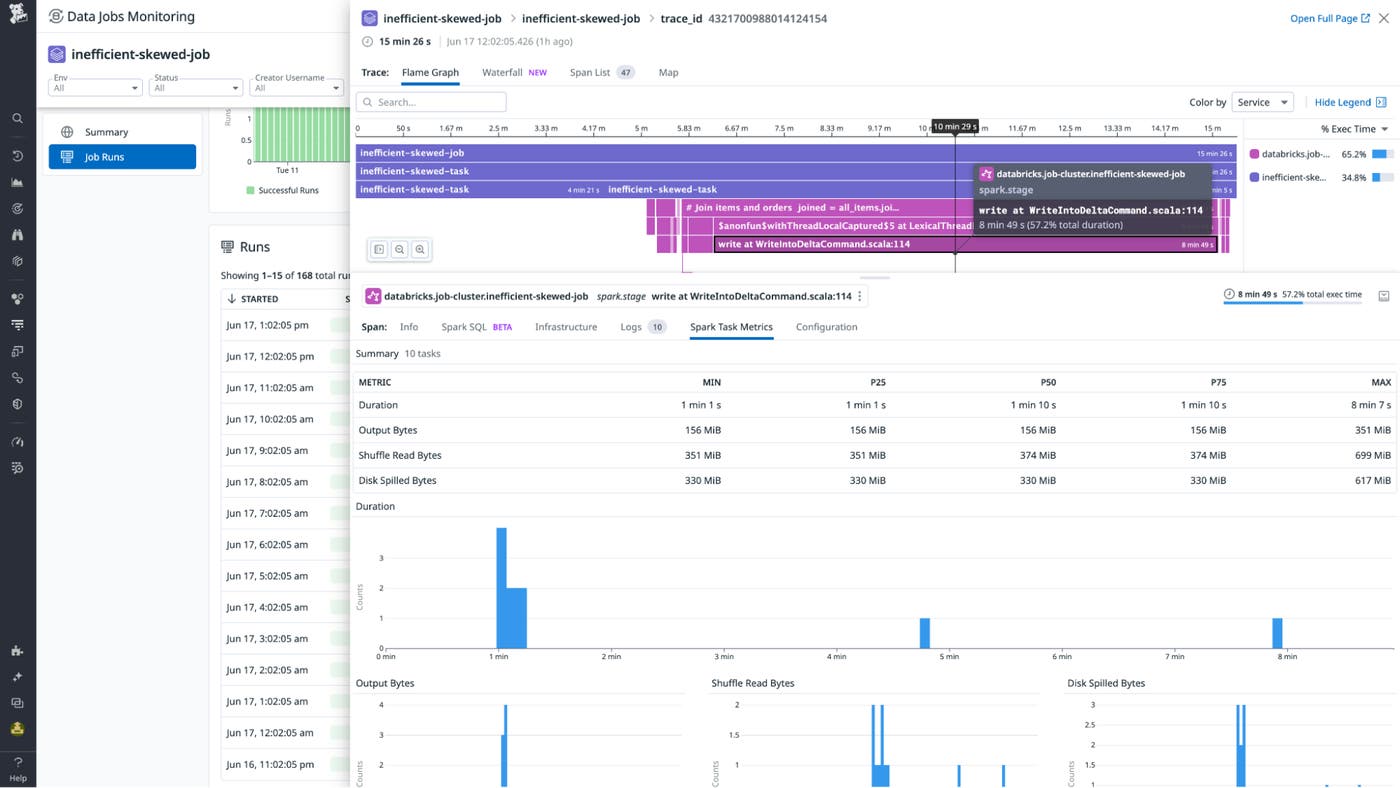

Once you’re notified of job failures or delays, the DJM trace view shows the full execution flow of the job. You can also see the specific query stage that threw the error that caused the Spark job to fail, as well as the performance details of the underlying Spark tasks. This context helps you identify exactly where the job failed or slowed down. You can then correlate each step of the job with your cluster resource utilization, configuration, and Spark application metrics for full troubleshooting context.

Let’s say DJM alerts you to a job that’s still running beyond its required completion time. From the alert, you open the job execution trace. This job usually takes five minutes but is now taking 15 minutes. You notice that your Spark SQL query is taking almost nine minutes, far longer than usual, and your Spark metrics show that the executor CPU is spending almost 90 percent of its time idle.

You click on the longest-running Spark stage of the query, where you see one of the Spark tasks is taking more than seven minutes to run when it usually takes under two minutes, indicating a data skew issue (i.e., uneven partitioning of your data). With this knowledge in hand, you inspect your data and the partition key to make the job run faster.
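One rough way to reason about this pattern is to compare the slowest task in a stage against the median task. The sketch below is an illustration in plain Python, not how DJM itself detects skew. The task durations and the 3x threshold are made-up assumptions.

```python
from statistics import median


def skew_ratio(task_durations_s):
    """Ratio of the slowest task to the median task within one stage."""
    return max(task_durations_s) / median(task_durations_s)


def looks_skewed(task_durations_s, threshold=3.0):
    # The threshold is an arbitrary illustrative cutoff,
    # not a Spark or DJM default.
    return skew_ratio(task_durations_s) >= threshold


# Hypothetical per-task durations (seconds) for one stage: most tasks finish
# in about a minute, but one task runs for more than seven minutes.
stage_tasks = [55, 60, 58, 62, 61, 57, 430, 59]

ratio = skew_ratio(stage_tasks)
print(f"slowest/median = {ratio:.1f}, skewed = {looks_skewed(stage_tasks)}")
```

A single straggler like this keeps the stage open while the other executors sit idle, which is consistent with the high idle CPU seen in the scenario above.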

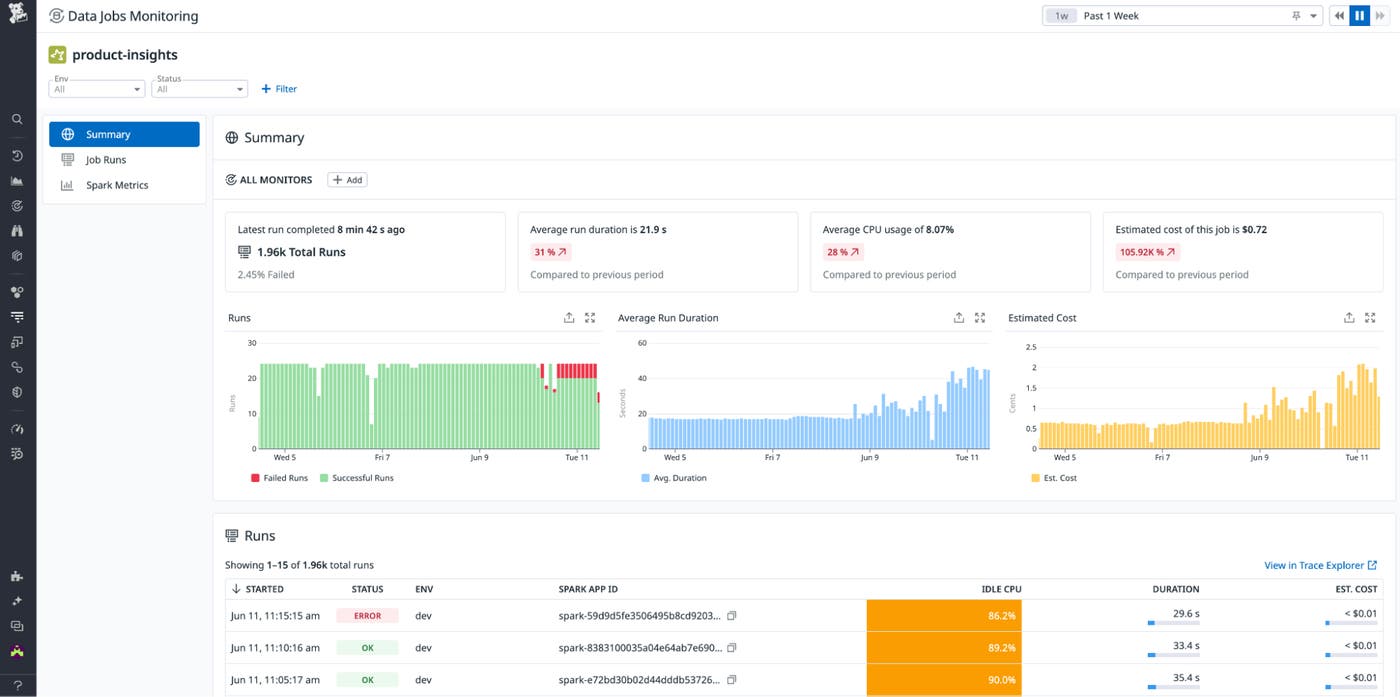

In addition to looking at individual job runs, DJM also enables you to expedite root cause analysis for job failures by observing trends in job performance across runs using the job detail page. Here, you click into a specific job—in this case, the product-insights job—where you see how related job performance metrics and Spark metrics have changed over time. This enables you to identify when a job started to behave differently and the likely cause of the issue.

Reduce costs by optimizing overprovisioned clusters and inefficient jobs

DJM also helps you reduce your data platform costs by identifying the most expensive and inefficient jobs and clusters. It provides you with metrics about cluster resource utilization and Spark application performance, so you can optimize your infrastructure and Spark code and configuration.

From the DJM Overview page, you can quickly spot your most expensive jobs by sorting them by estimated cost, then use each job’s idle CPU percentage to see which of them are using compute inefficiently.

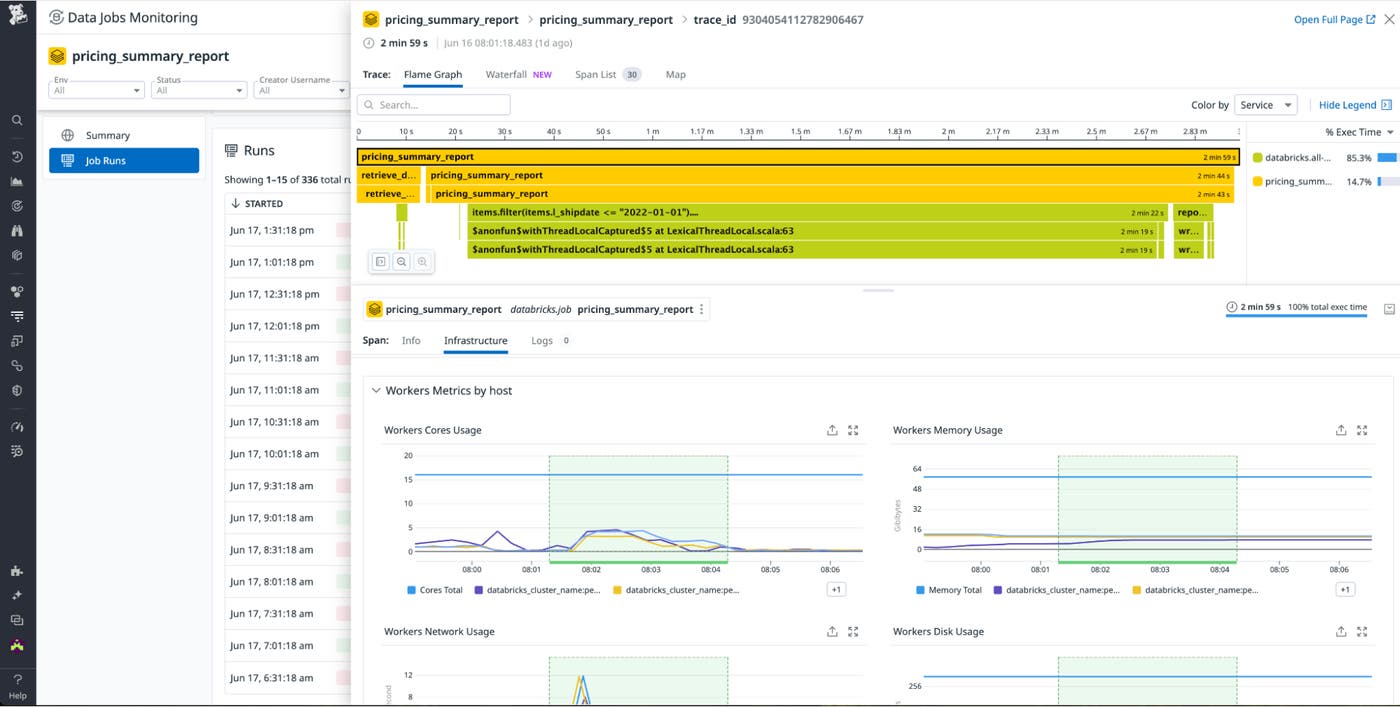

Once you identify a specific job to optimize, you can go to the Infrastructure tab in one of the recent job runs to understand the extent of the overprovisioning by looking at the cluster memory and CPU usage during the job execution. You can use this data to determine whether you can reduce the number of nodes or switch to smaller instance types to lower your costs.
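As a back-of-the-envelope illustration of that decision, the sketch below estimates how many nodes a job would need if its peak utilization were spread across fewer identical nodes while keeping some headroom. The function name, the 30 percent headroom, and the sample numbers are assumptions for illustration only, and the calculation is not something DJM performs for you.

```python
import math


def suggested_node_count(current_nodes, peak_cpu_pct, peak_mem_pct, headroom_pct=30.0):
    """Estimate a node count that keeps the busier resource under (100 - headroom)%.

    Assumes load redistributes evenly across identical nodes, which real
    Spark workloads only approximate.
    """
    busiest = max(peak_cpu_pct, peak_mem_pct)
    target = 100.0 - headroom_pct
    needed = math.ceil(current_nodes * busiest / target)
    # Never suggest more nodes than the cluster already has, and keep at least one.
    return max(1, min(current_nodes, needed))


# Hypothetical job run: 20 nodes, CPU peaks at 25%, memory peaks at 35%.
print(suggested_node_count(20, peak_cpu_pct=25.0, peak_mem_pct=35.0))
```

Sizing against the busier of the two resources avoids trimming a cluster that looks idle on CPU but is close to its memory limit.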

In addition to looking at costs associated with specific jobs, you can also look at your most expensive clusters and use the recommended filter that identifies overprovisioned clusters to pinpoint those that have low CPU and memory utilization over time, so you can right-size resourcing for these clusters.

Start monitoring your data processing jobs from a centralized view

Data Jobs Monitoring (DJM) helps teams simplify the complex task of monitoring their data jobs, bringing telemetry about job runs and underlying infrastructure together into a single pane of glass. With full context into how your jobs are performing, you can localize and remediate issues with your processing jobs quickly before they obstruct the flow of data across your distributed environment. DJM also enables you to identify inefficient or overprovisioned clusters so you can optimize them and reduce costs. You can use DJM alongside Datadog’s integrations for Kafka, Snowflake, Airflow, and other technologies in your data stack to get a full view of how your data processing infrastructure is performing.

DJM is now available for Databricks jobs and for Spark jobs on Amazon EMR or Kubernetes. Check out our documentation to get started. If you’re not already using Datadog, you can also sign up for a free 14-day trial.