Mallory Mooney

Editor’s note: This is Part 2 of a five-part cloud security series that covers protecting an organization’s network perimeter, endpoints, application code, sensitive data, and service and user accounts from threats.

In Part 1 of this series, we looked at the importance of securing the perimeter of an organization’s network, especially in cloud environments. Cloud infrastructure can be complex and large in scope, comprising multiple private and public clouds, datacenters, and on-premises servers. Because of their size and configuration, cloud environments have a larger attack surface, which means that there are more entry points for a threat actor to exploit. Taking the time to identify these points of entry and implement controls like the Zero Trust model can help strengthen the security of an organization’s network.

In this post, we’ll explore how organizations can secure their endpoints, which are all the resources and devices connected to either an organization’s network or managed services, regardless of where they are deployed. More specifically, we’ll look at how to map all connected endpoints, implement effective management practices to control access, and monitor all connected endpoints and their activity.

But first, we’ll briefly look at the complexities of endpoint management and how endpoints can easily become vulnerable to an attack.

A primer on endpoints

As previously mentioned, endpoints comprise resources and devices that can connect to an organization’s network or access managed resources. For the sake of this post, endpoints exclude any devices that manage traffic, such as firewalls, routers, and gateways. For on-premises environments, endpoints are typically physical devices that range from in-house office equipment like workstations, phones, internet-connected printers, and point-of-sale systems to infrastructure components like servers and databases.

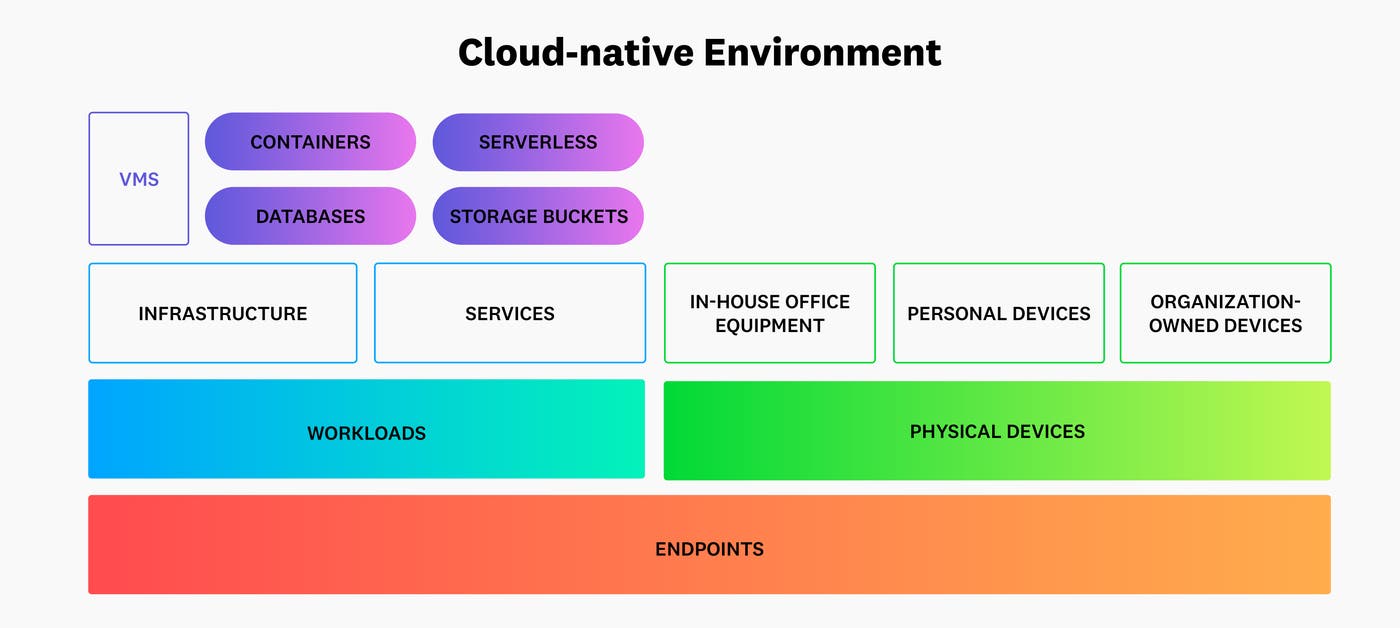

As networks evolve to support cloud-based infrastructure, the number and variety of managed endpoints has grown significantly. The following diagram shows how endpoints in cloud environments have moved away from just traditional sources like workstations to cloud workloads:

Cloud workloads, which we’ll focus on in this post, comprise all of an application’s underlying processes and compute resources. Because physical hardware is often replaced with virtualization or containerization to reduce overhead costs, workloads can include virtual machines, serverless functions, and containers, in addition to cloud-hosted resources like managed databases.

Endpoints vary in hardware, operating systems, and settings, so there is no one-size-fits-all solution for managing them. For instance, the underlying resources that support a production workload or service each require different configurations based on industry and regulatory standards. On top of that, some teams may be more diligent about keeping their services up to date than others. These complexities can lead to costly data breaches because they create vulnerabilities that are easy to overlook and that can give threat actors unfettered access to an otherwise secure network.

Common endpoint weaknesses and threats

Endpoint vulnerabilities can include scenarios such as out-of-date or unpatched software, weak passwords, services exposed over the network, and user or service accounts with elevated privileges. To find these kinds of vulnerabilities, threat actors may employ techniques like active scanning, which enables them to probe a network for open ports and other endpoint information that can be exploited. For example, active scanning may reveal an endpoint’s assigned IP address, operating system, and role, all of which a threat actor can use to identify weaknesses to target in an attack.
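To see what active scanning looks like from the defender’s side, the following Python sketch performs its simplest form: a TCP connect check that reports which ports on a host accept connections. Teams can run checks like this against endpoints they own to catch services that are exposed unintentionally. The function name, port list, and timeout are illustrative assumptions; dedicated scanners such as Nmap gather far more detail, such as operating system and service versions.

```python
import socket


def scan_ports(host: str, ports: list[int], timeout: float = 0.5) -> list[int]:
    """Return the subset of `ports` on `host` that accept a TCP connection."""
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 when the TCP handshake succeeds, meaning
            # something is listening, instead of raising an exception.
            if sock.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports


if __name__ == "__main__":
    # Hypothetical port list; only scan hosts you are authorized to test.
    print(scan_ports("127.0.0.1", [22, 80, 443, 5432]))
```

Any port in the result that the endpoint’s owner did not expect to be open is a candidate for closing or firewalling.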

Threat actors also use phishing to gain direct access to an endpoint. Phishing methods attempt to trick an organization’s employees or users into giving threat actors sensitive information, such as an account’s login credentials. They typically accomplish this by sending emails or text messages that look like they come from a legitimate source but contain links to malicious sites that are designed to steal an unsuspecting user’s information. This activity is especially dangerous when a threat actor manages to steal credentials to a user or service account with elevated privileges, which can potentially give them instant access to other critical endpoints within a cloud environment.

To mitigate these kinds of endpoint vulnerabilities and threats, it’s important to create effective policies for identifying active endpoints, keeping them up to date, and monitoring their activity. Next, we’ll look at how organizations can implement these steps in order to build an effective endpoint security strategy.

Map all connected endpoints

Much like inventorying any entry points along a network’s perimeter, which we discussed in Part 1, mapping all of the endpoints that interact with an organization’s services is a critical part of cloud security. As organizations create their inventory, the following questions can help them understand what needs to be protected:

Which cloud provider is hosting the endpoint?

Which endpoints are container-based versus built on serverless architecture?

Which operating systems need to be supported?

Is the endpoint deployed via platforms like AWS Fargate or Amazon Elastic Kubernetes Service (EKS)?

For Kubernetes architecture, which versions of the platform need to be supported?

These questions give organizations a better understanding of the composition of their cloud environments so they can make informed decisions when implementing their management policies. For example, the following table shows a sample inventory of an organization’s endpoints, including whether or not they communicate with the public internet:

| Endpoint | Underlying Infrastructure | Access to Public Internet |
|---|---|---|
| web-server-a | Google Compute Engine, Apache | Yes |
| customer-database-a | Amazon RDS, PostgreSQL, Graviton1 | No |
| storage-bucket-a | Azure, .NET 6.0 | No |
| service-a | EKS, Kubernetes v1.24, Windows Server 2019 | Yes |
| service-b | EKS, Kubernetes v1.23, Amazon Linux 2 | Yes |
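Keeping an inventory like this as structured data, rather than only as a table, makes the earlier questions easy to answer programmatically. The following Python sketch models a subset of the sample inventory with a hypothetical `Endpoint` record and answers two of those questions: which endpoints communicate with the public internet, and which Kubernetes versions the EKS-hosted services require. The record layout is an assumption for illustration, not a standard inventory format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    infrastructure: tuple[str, ...]  # platform, runtime, and OS details
    public_internet: bool            # communicates with the public internet?


# A subset of the sample inventory table, encoded as data.
INVENTORY = [
    Endpoint("web-server-a", ("Google Compute Engine", "Apache"), True),
    Endpoint("customer-database-a", ("Amazon RDS", "PostgreSQL", "Graviton1"), False),
    Endpoint("service-a", ("EKS", "Kubernetes v1.24", "Windows Server 2019"), True),
    Endpoint("service-b", ("EKS", "Kubernetes v1.23", "Amazon Linux 2"), True),
]


def internet_facing(inventory: list[Endpoint]) -> list[str]:
    """Names of endpoints that communicate with the public internet."""
    return [e.name for e in inventory if e.public_internet]


def kubernetes_versions(inventory: list[Endpoint]) -> list[str]:
    """Kubernetes versions that must be supported across EKS-hosted endpoints."""
    return sorted({
        item
        for e in inventory if "EKS" in e.infrastructure
        for item in e.infrastructure if item.startswith("Kubernetes")
    })


print(internet_facing(INVENTORY))      # ['web-server-a', 'service-a', 'service-b']
print(kubernetes_versions(INVENTORY))  # ['Kubernetes v1.23', 'Kubernetes v1.24']
```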

This type of data can help teams understand which endpoints should be able to send requests to critical resources like customer databases. For example, web-server-a can receive requests from the public internet, so its access to other resources within the network may need to be limited. If threat actors compromise this server, they may be able to move laterally to other endpoints that host valuable data.
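One way to reason about this risk is to treat allowed network connections as a directed graph and ask what an attacker could reach from a compromised endpoint. The following Python sketch runs a breadth-first search over a hypothetical set of allowed connections (the edges are assumptions, not part of the sample inventory) and shows that compromising web-server-a could lead to customer-database-a by way of service-a.

```python
from collections import deque

# Hypothetical allowed connections: source -> destinations it may send requests to.
ALLOWED_CONNECTIONS = {
    "internet": ["web-server-a"],
    "web-server-a": ["service-a"],
    "service-a": ["customer-database-a"],
    "service-b": [],
    "customer-database-a": [],
}


def reachable_from(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Breadth-first search: every endpoint reachable from `start`."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)  # report only the other endpoints
    return seen


exposed = reachable_from("web-server-a", ALLOWED_CONNECTIONS)
print(sorted(exposed))  # ['customer-database-a', 'service-a']
```

Removing the web-server-a to service-a edge, or restricting what service-a accepts from it, empties that set, which is the kind of change least-privilege network policies aim for.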

Once an organization has an inventory of endpoints, it can create effective management practices to control access, which we’ll look at in more detail next.

Implement effective management practices to control access

As cloud-native environments continue to expand, integrate with more third-party services, and support a rapidly growing customer base, organizations face multiple challenges related to keeping their endpoints secure. One primary challenge associated with this growth is ensuring that endpoints meet industry and regulatory standards. This makes governance, risk, and compliance (GRC) a key part of implementing effective endpoint management practices.

GRC-based management practices give organizations a better understanding of how to establish a “defense-in-depth” strategy, which aims to secure endpoints at multiple layers of infrastructure. In this section, we’ll focus on Center for Internet Security (CIS) benchmarks and controls, which provide the baseline settings to ensure that resources are secure.

Create Zero Trust policies for high-level control

CIS recommends using the Zero Trust model, which we described in more detail in Part 1, to control access to endpoints based on identity. The Zero Trust model is not only beneficial for authenticating users within a network; it can also help teams control permissions for their cloud workloads. In addition, it creates the foundation by which organizations can implement fine-grained controls for the different types of endpoints in their environment, such as cloud-based resources.

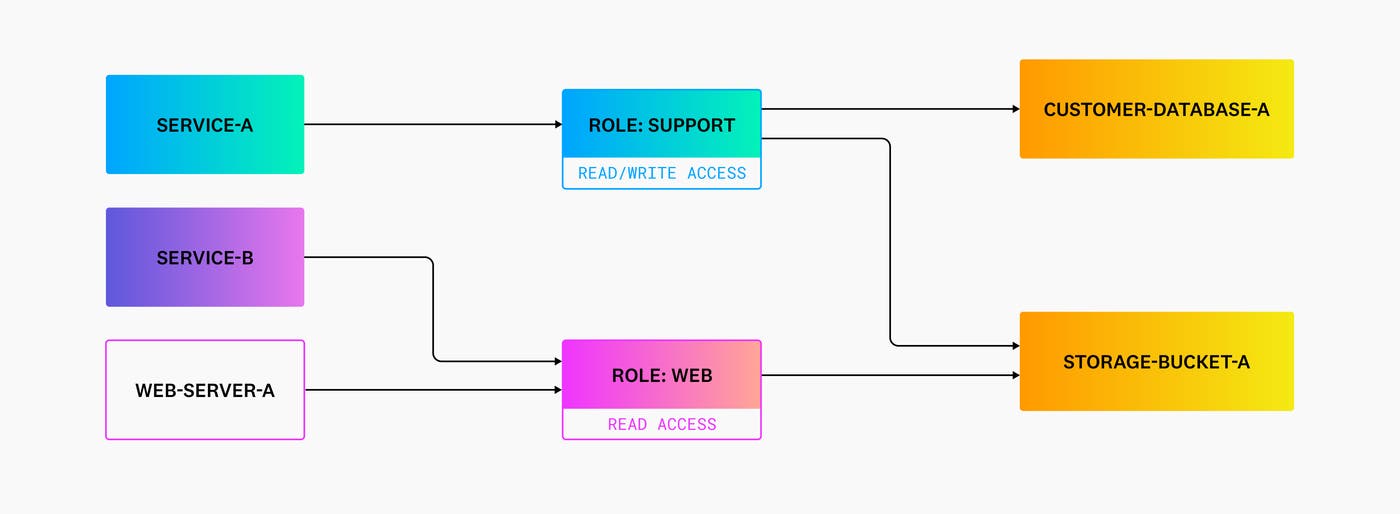

Zero Trust policies use the principle of least privilege to assign only the permissions that are needed to complete a particular task, typically grouped by roles. For example, using the previous inventory of endpoints, the following diagram shows which roles have read and write permissions to an organization’s internal resources:

Based on this model, service-b and web-server-a only have read-level access to storage-bucket-a. If a threat actor gains access to one of these endpoints, their ability to acquire sensitive data from other endpoints, such as the customer database, is limited.
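The principle of least privilege maps naturally to a deny-by-default check: an identity may perform an action on a resource only if the policy explicitly grants it. The following Python sketch illustrates this with a hypothetical policy. The `POLICY` contents and the `is_allowed` function are assumptions for illustration; they do not reproduce the diagram or any cloud provider’s IAM API.

```python
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical least-privilege policy: identity -> resource -> granted actions.
# Anything not listed here is denied.
POLICY: dict[str, dict[str, frozenset[Action]]] = {
    "service-a": {"customer-database-a": frozenset({Action.READ, Action.WRITE})},
    "service-b": {"customer-database-a": frozenset({Action.READ})},
}


def is_allowed(identity: str, resource: str, action: Action) -> bool:
    """Deny by default: allow only actions the policy explicitly grants."""
    return action in POLICY.get(identity, {}).get(resource, frozenset())


print(is_allowed("service-b", "customer-database-a", Action.READ))     # True
print(is_allowed("service-b", "customer-database-a", Action.WRITE))    # False
print(is_allowed("web-server-a", "customer-database-a", Action.READ))  # False
```

Because unknown identities and unlisted resources fall through to an empty set, a newly added endpoint is granted nothing until someone writes a rule for it.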

In addition to using the Zero Trust model to establish high-level access controls, organizations need to individually control the wide variety of endpoints in cloud-native environments. Each type of endpoint has a unique set of configurations to ensure its security. Therefore, it’s important to build the appropriate controls based on those settings.

Fine-tune endpoint configurations with CIS benchmarks

As previously mentioned, the endpoints that support cloud-native applications are made up of hosted resources like storage buckets, Kubernetes clusters, and managed databases. For these, organizations can use CIS’s recommended configurations for various resource functions, such as networking, identity and access management, and logging. The following table shows an example list of configuration best practices for endpoints that are managed by popular architecture and cloud services:

| Endpoint | Benchmark | Category | Rule Number | Rule |
|---|---|---|---|---|
| Storage buckets | CIS Amazon Web Services | Logging | 2.2 | Ensure the S3 bucket used to store CloudTrail logs is not publicly accessible |
| PostgreSQL databases | CIS PostgreSQL 9.6 | Installation and patches | 1.1 | Ensure packages are obtained from authorized repositories |
| Kubernetes clusters | CIS Kubernetes | Worker nodes - Kubelet | 4.2.1 | Ensure that the --anonymous-auth argument is set to false |
| GCP VPCs | CIS Google Cloud Platform | Networking | 3.6 | Ensure that SSH access is restricted from the internet |
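Many of these rules reduce to checks over a resource’s configuration. The following Python sketch evaluates two rules from the table against plain dictionaries: the kubelet rule, using the `authentication.anonymous.enabled` field of a kubelet configuration file, and the GCP SSH rule, using the `direction`, `sourceRanges`, and `allowed` fields of a Compute Engine firewall rule. The function names are hypothetical, and the checks are simplified sketches rather than the official CIS audit procedures (for example, only the IPv4 range 0.0.0.0/0 is considered).

```python
def kubelet_anonymous_auth_disabled(kubelet_config: dict) -> bool:
    """CIS Kubernetes 4.2.1 (sketch): the kubelet should reject anonymous requests.

    `kubelet_config` is a parsed kubelet configuration file. This sketch
    treats a missing value as a failure so the safe setting is explicit.
    """
    anonymous = kubelet_config.get("authentication", {}).get("anonymous", {})
    return anonymous.get("enabled") is False


def _covers_port(port_spec: str, port: int) -> bool:
    """True if a port spec such as "22" or "20-30" includes `port`."""
    low, _, high = port_spec.partition("-")
    return int(low) <= port <= int(high or low)


def ssh_open_to_internet(rule: dict) -> bool:
    """CIS GCP 3.6 (sketch): flag enabled ingress rules allowing TCP 22 from 0.0.0.0/0."""
    if rule.get("direction", "INGRESS") != "INGRESS" or rule.get("disabled", False):
        return False
    if "0.0.0.0/0" not in rule.get("sourceRanges", []):
        return False
    for allowed in rule.get("allowed", []):
        if allowed.get("IPProtocol") not in ("tcp", "all"):
            continue
        ports = allowed.get("ports")
        # An allow entry without a ports list applies to every port.
        if not ports or any(_covers_port(p, 22) for p in ports):
            return True
    return False


kubelet_config = {"authentication": {"anonymous": {"enabled": True}}}
firewall_rule = {
    "name": "allow-ssh",
    "direction": "INGRESS",
    "sourceRanges": ["0.0.0.0/0"],
    "allowed": [{"IPProtocol": "tcp", "ports": ["22"]}],
}
print("4.2.1 pass:", kubelet_anonymous_auth_disabled(kubelet_config))  # False
print("3.6 pass:", not ssh_open_to_internet(firewall_rule))            # False
```

Running checks like these across every endpoint in the inventory produces a list of failing resources that can be fixed or tracked as exceptions.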

Fine-tuning access and configurations is a key step for endpoint management. But in addition to these practices, organizations need visibility into endpoint activity to ensure that their controls are working as expected. We’ll take a look at implementing monitoring solutions in more detail next.

Monitor all connected endpoints and their activity

Monitoring endpoints and their activity is one of the final steps of implementing an effective endpoint security strategy. As organizations continue to adopt cloud technologies, visibility into their environments is becoming more of a priority for cloud security. Many security vendors are evolving to meet organizations’ security goals by combining security and observability tools to provide a unified system for threat detection, response, and monitoring. This enables organizations to correlate suspicious activity found in sources like logs and workload processes with other observability data, such as traces and metrics for affected endpoints.

There are several different types of security monitoring tools that not only provide insight into malicious activity but also enable organizations to act on active threats. For example, Endpoint Protection Platforms (EPPs) allow organizations to scan for and automatically block threats like malware and ransomware with built-in firewalls and antivirus software. EPPs are typically cloud-based and can deploy lightweight agents to all of an organization’s physical and cloud-native endpoints, such as an Amazon Elastic Compute Cloud (EC2) instance, in order to monitor them for threats.

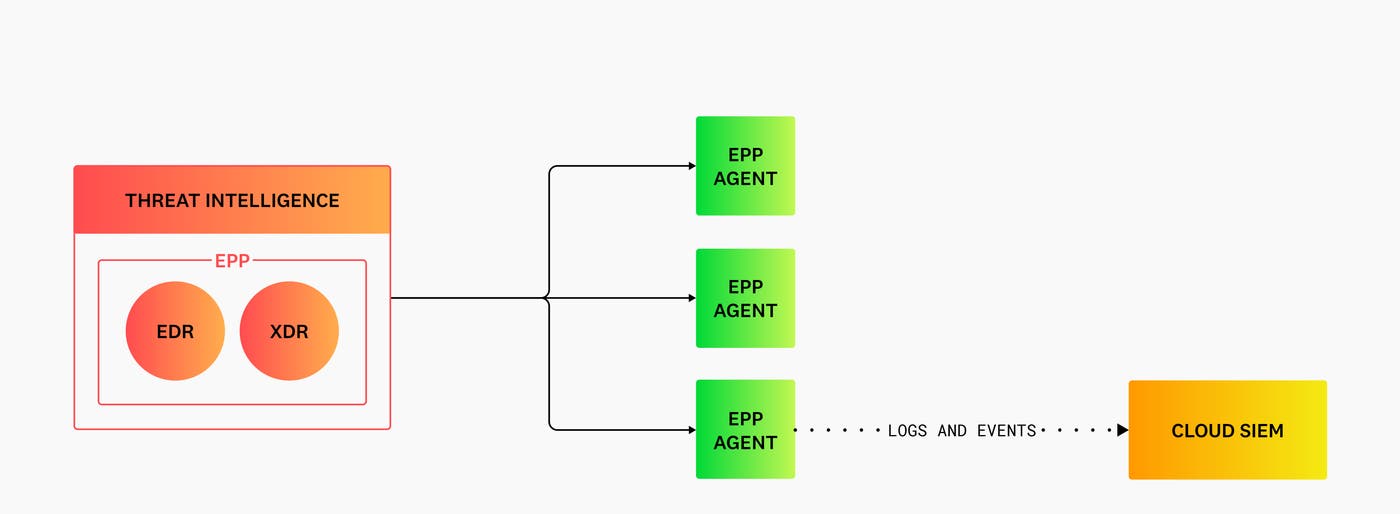

Modern EPPs may also include Endpoint Detection and Response (EDR) or Extended Detection and Response (XDR) tools, which use features like threat intelligence to scan for malicious activity across all of an organization’s endpoints, as seen in the following diagram:

But as previously mentioned, the shift to the cloud requires expanded visibility into individual workloads, not just physical devices. To accomplish this, organizations can use EPPs that also integrate with other solutions like Security Information and Event Management (SIEM), Cloud Workload Security (CWS), and Cloud Security Posture Management (CSPM) platforms. SIEMs monitor endpoint logs and event data across an organization’s cloud and multi-cloud environments, as seen in the previous diagram. CWS tools monitor workload files and process activity for unusual behavior. Finally, CSPM tools track endpoints for configuration issues that could introduce risk.
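To illustrate the kind of baseline comparison that workload monitoring relies on, the following Python sketch flags processes that fall outside a per-workload allowlist. The `BASELINE` contents and function name are hypothetical, and CWS tools typically draw on richer signals, such as file activity and process lineage, than process names alone.

```python
# Hypothetical baseline: the processes each workload is expected to run.
BASELINE: dict[str, set[str]] = {
    "web-server-a": {"apache2", "rotatelogs"},
    "service-b": {"java"},
}


def unexpected_processes(workload: str, observed: list[str]) -> list[str]:
    """Processes observed on `workload` that fall outside its baseline.

    Workloads without a baseline have every process flagged, so gaps in
    coverage surface instead of passing silently.
    """
    return sorted(set(observed) - BASELINE.get(workload, set()))


print(unexpected_processes("web-server-a", ["apache2", "sh", "curl"]))  # ['curl', 'sh']
```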

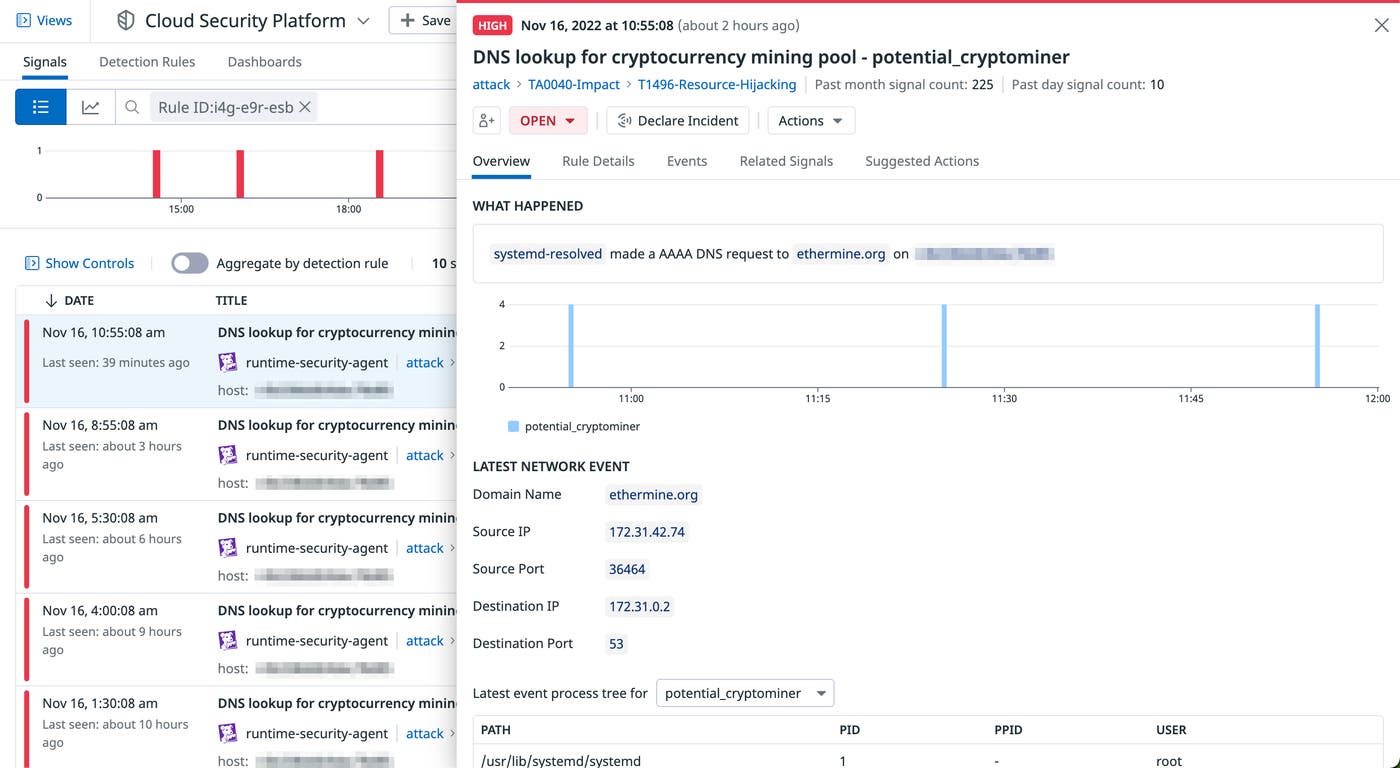

This type of integration allows organizations to collect alerts from their EPP tools and monitor them alongside activity logs and events from other platforms in order to confirm malicious activity on any connected endpoint. For example, Datadog Workload Protection provides agent-based monitoring and DNS-layer threat detection, enabling organizations to scan files, process activity, and connections across workloads for threats. As seen in the screenshot below, Workload Protection can detect if a process within a workload performed potentially harmful activity like a DNS lookup for a domain associated with an external crypto mining pool.

This activity is often a sign of cryptojacking, which involves threat actors using endpoint resources to mine for cryptocurrency. Organizations can then use Datadog Cloud SIEM to aggregate and analyze all related log data in order to confirm the source of this activity.
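The detect-then-confirm flow described above can be sketched in a few lines of Python: match DNS queries against a list of known mining-pool domains (including their subdomains), then pull log entries from the same host within a short time window to find the likely source. The domains use the reserved `.test` top-level domain as placeholders, and the event fields, five-minute window, and function names are assumptions for illustration, not Datadog’s detection logic or log schema.

```python
from datetime import datetime, timedelta

# Placeholder threat-intelligence list; a real feed would supply actual domains.
MINING_POOL_DOMAINS = {"pool.example-mining.test"}


def matches_blocklist(query: str, blocklist: set[str]) -> bool:
    """True if `query` is a listed domain or any subdomain of one."""
    labels = query.rstrip(".").lower().split(".")
    # Check the full name and each parent: a.b.c -> a.b.c, b.c, c
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


def related_logs(alert: dict, logs: list[dict],
                 window: timedelta = timedelta(minutes=5)) -> list[dict]:
    """Log entries from the alert's host within `window` of the alert time."""
    return [
        log for log in logs
        if log["host"] == alert["host"]
        and abs(log["timestamp"] - alert["timestamp"]) <= window
    ]


# Hypothetical events with illustrative field names.
dns_events = [
    {"host": "service-b", "timestamp": datetime(2024, 1, 1, 12, 0),
     "query": "eu1.pool.example-mining.test."},
    {"host": "service-b", "timestamp": datetime(2024, 1, 1, 12, 1),
     "query": "api.example.com"},
]
process_logs = [
    {"host": "service-b", "timestamp": datetime(2024, 1, 1, 11, 58),
     "message": "exec /tmp/.x/miner --pool eu1.pool.example-mining.test"},
    {"host": "service-a", "timestamp": datetime(2024, 1, 1, 11, 59),
     "message": "exec /usr/bin/java -jar app.jar"},
    {"host": "service-b", "timestamp": datetime(2024, 1, 1, 9, 0),
     "message": "exec /usr/bin/java -jar app.jar"},
]

# Step 1: detect suspicious lookups. Step 2: correlate each one with nearby logs.
alerts = [e for e in dns_events if matches_blocklist(e["query"], MINING_POOL_DOMAINS)]
for alert in alerts:
    for log in related_logs(alert, process_logs):
        print(alert["query"], "<-", log["message"])
```

Only the process log from the same host, two minutes before the lookup, is returned; the log from another host and the one from hours earlier are filtered out.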

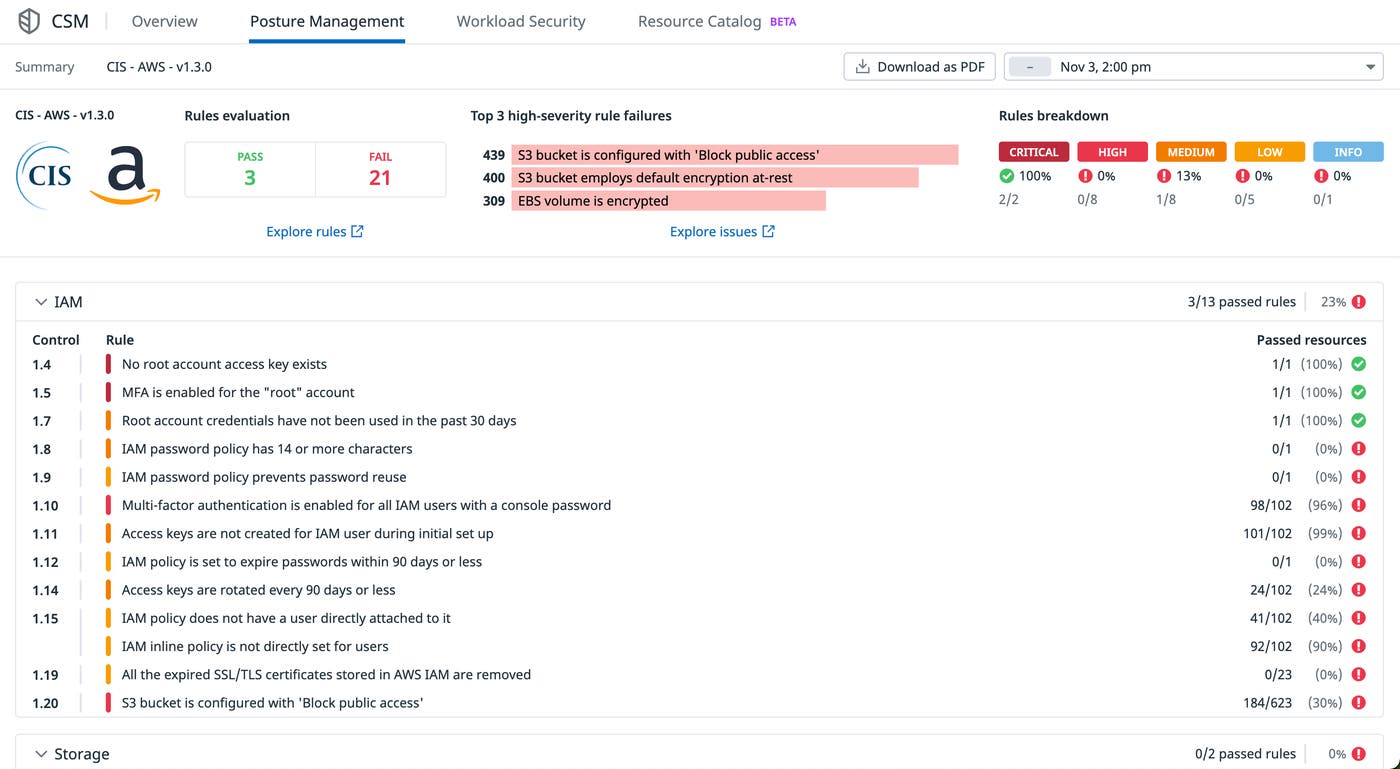

Threats like these can occur when an endpoint’s configuration deviates from an organization’s management controls. CSPM platforms help organizations surface any misconfigurations that could leave their endpoints vulnerable. For example, Datadog Cloud Security Misconfigurations provides built-in detection rules that are mapped to CIS controls for workloads hosted on AWS, GCP, Azure, and other infrastructure. Organizations can review these rules in a high-level report, as seen in the following screenshot, and determine which endpoints do not have the necessary configurations:

Implementing monitoring solutions like EPPs and integrating them with other security tools like Cloud SIEM, Workload Protection, and Cloud Security Misconfigurations gives organizations improved visibility into their endpoints and their configurations. This helps organizations efficiently identify vulnerabilities before threat actors can use them to compromise application services and resources.

Complete endpoint security for cloud-native infrastructure

In this post, we looked at the importance of securing endpoints, as well as best practices for implementing effective management controls. Similar to securing the boundaries of a network, endpoint security focuses on mapping all cloud workloads, their resources, and their traffic, as well as locking down their access and configurations. In Part 3 of this series, we’ll look at how to protect application code and infrastructure. To learn more about Datadog’s security offerings, check out our documentation, or you can sign up for a 14-day free trial today.