John Matson

What do you do when your customer base is growing by more than 300 percent year over year and delivering a flawless real-time video experience is at the core of your product? At Peloton Cycle, full-stack observability, from client-side metrics to code-level tracing, has helped engineers scale the business rapidly and improve the user experience at the same time.

About Peloton

Based in New York City, Peloton is a technology company that is revolutionizing the fitness industry. Peloton sells an indoor-cycling bike equipped with a 22-inch HD touchscreen that allows users to join live and on-demand cycling classes from the comfort and convenience of their homes. Founded in 2012, Peloton aims to bring the same sense of community and motivation that people find in group exercise classes into homes across the world. During and after the class, riders can follow their own performance metrics and see how they rank on a leaderboard of riders.

Real-time rides

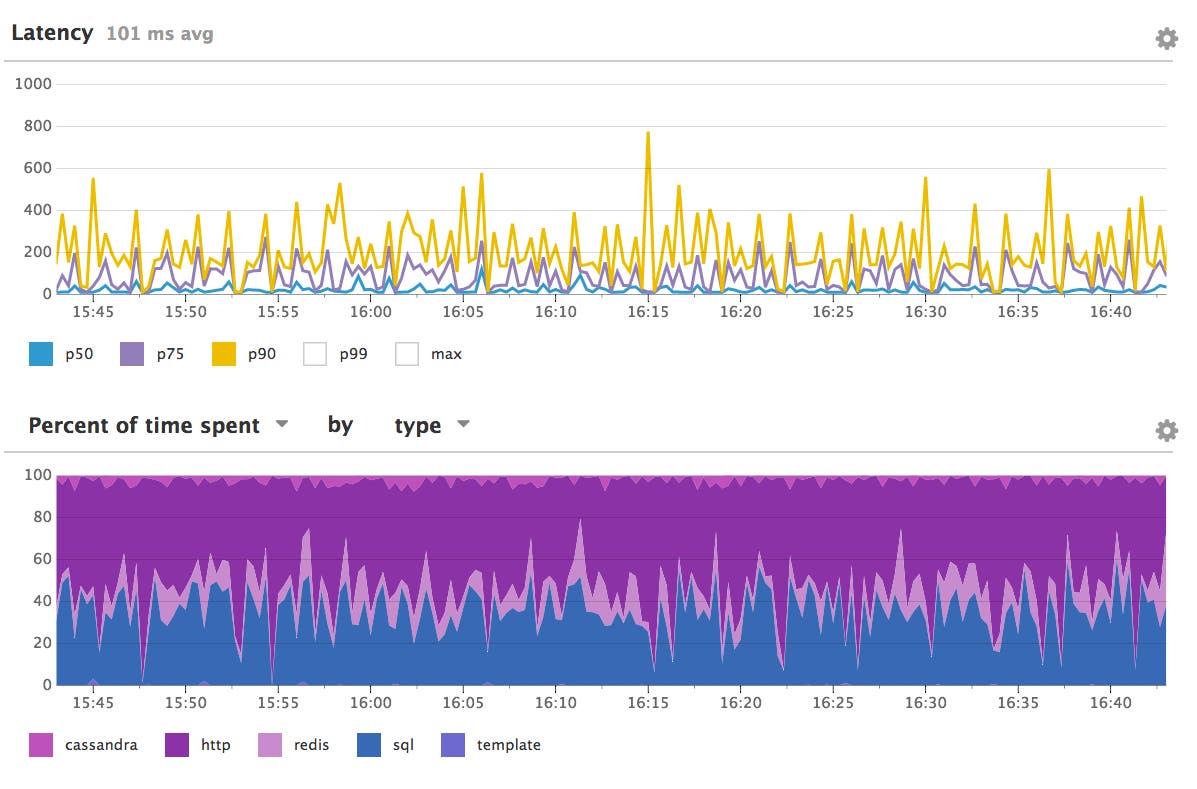

To create the sense of engagement and energy that you’d find in a cycling studio, Peloton needs to deliver a high-quality video feed to their users and minimize any perceived lag. “Because of the real-time nature of our platform, low latency is a critical factor for us,” says Peloton co-founder and CTO Yony Feng. But with nearly half a million users, and some classes drawing thousands of simultaneous riders, maintaining low latency for real-time features like the rider leaderboard can be a challenge.

For years, Peloton has used Datadog to monitor a primarily AWS-based cloud infrastructure and to alert on availability and performance issues in the components supporting their Python-based Bottle application. They also collect custom user-experience metrics from the bikes themselves, such as video lag and Wi-Fi strength.

To keep pace with the rapidly expanding customer base and continually improve the experience for their riders, Peloton began evaluating application performance monitoring platforms in late 2016. They found that Datadog APM was easy to roll out across their stack, with built-in support for Python apps and integrations with the libraries they rely on, such as gevent and the AWS boto library. “We looked at a few other APM products, and they were tougher to integrate with our stack,” Feng says.

Tracing code in the wild

Once they deployed APM, Peloton engineers were able to trace user requests in their application and identify inefficiencies. “Having APM measure the relevant pieces of our flow gave us some insight into our API endpoints,” Feng says. “It actually helped us trace some of our application logic errors that we didn’t know we had.”

With so many users, and a rapidly expanding selection of live and recorded classes to choose from, APM allows the engineering team to identify performance issues that are very hard to foresee, says Peloton software engineer Chris Mohr. “We have a very data-driven platform, so a lot of what we’re doing is searching through a library of content for our users,” he says. “Just looking through the code, it’s not always obvious how that’s going to work.”

The open-ended exploration made possible by tracing meant that the Peloton team could identify hotspots and inefficient code by inspecting individual requests from real users. In some cases, the Peloton team found, a request might generate hundreds of individual database calls, which could be streamlined considerably for better performance. “The tracing made all that evident even though we weren’t looking for that in particular,” Mohr says.
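To make the "hundreds of individual database calls" pattern concrete, here is a minimal sketch of one common way it arises: a lookup issued once per result row, compared with a single joined query. This is not Peloton's code. The schema (`classes`, `instructors`), the `CountingConnection` helper, and the function names are all hypothetical, and an in-memory SQLite database stands in for whatever datastore a real application would use.

```python
import sqlite3


def build_demo_db():
    """Create a throwaway in-memory database with a hypothetical schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE instructors (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE classes (
            id INTEGER PRIMARY KEY,
            title TEXT,
            instructor_id INTEGER
        );
        """
    )
    conn.executemany(
        "INSERT INTO instructors VALUES (?, ?)",
        [(i, f"Instructor {i}") for i in range(1, 6)],
    )
    conn.executemany(
        "INSERT INTO classes VALUES (?, ?, ?)",
        [(i, f"Ride {i}", (i % 5) + 1) for i in range(1, 201)],
    )
    conn.commit()
    return conn


class CountingConnection:
    """Hypothetical helper that counts queries, standing in for what a trace would reveal."""

    def __init__(self, conn):
        self.conn = conn
        self.query_count = 0

    def query(self, sql, params=()):
        self.query_count += 1
        return self.conn.execute(sql, params).fetchall()


def search_one_query_per_row(db, term):
    """One query for the matching classes, then one more query per class."""
    classes = db.query(
        "SELECT id, title, instructor_id FROM classes "
        "WHERE title LIKE ? ORDER BY id",
        (f"%{term}%",),
    )
    results = []
    for _class_id, title, instructor_id in classes:
        (name,) = db.query(
            "SELECT name FROM instructors WHERE id = ?", (instructor_id,)
        )[0]
        results.append((title, name))
    return results


def search_joined(db, term):
    """The same result set fetched with a single joined query."""
    return db.query(
        "SELECT c.title, i.name FROM classes c "
        "JOIN instructors i ON i.id = c.instructor_id "
        "WHERE c.title LIKE ? ORDER BY c.id",
        (f"%{term}%",),
    )


if __name__ == "__main__":
    slow_db = CountingConnection(build_demo_db())
    fast_db = CountingConnection(build_demo_db())
    assert search_one_query_per_row(slow_db, "Ride") == search_joined(fast_db, "Ride")
    print("per-row lookups:", slow_db.query_count, "queries")
    print("joined query:", fast_db.query_count, "queries")
```

With 200 matching classes in the demo data, the per-row version issues 201 queries while the joined version issues one. In a request trace, the first shape shows up as a long run of near-identical database spans, which is the kind of hotspot that is hard to spot by reading code alone.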

Scaling with speed

Within 30 days of implementing APM, the Peloton team was able to reduce the response times of a dozen endpoints by a factor of three or more. For instance, Mohr and his colleagues were able to significantly speed up user-facing search to improve rider engagement. “One of the important things for us is how quickly we can respond when a user searches for a class, and we cut that by a factor of four with the insights that we got from using APM,” Mohr says. “If users can’t search for classes quickly, they’ll just give up and might turn off their bike.”

The observability made possible by deploying a single monitoring platform across the stack has helped Peloton to improve application performance while serving an ever-growing number of riders. “It’s helped our user experience in terms of our bikes,” Feng says. “It’s helped us scale.”