Kai Xin Tai

Serverless computing continues to be a growing trend, with AWS Lambda as a main driver of adoption. Today, AWS released Provisioned Concurrency, a new feature that makes AWS Lambda more resilient to cold starts during bursts of network traffic. If you’re running a consumer-facing application, slow page loads and request timeouts can degrade the user experience and lead to significant revenue loss. Now, with Provisioned Concurrency, you can ensure that your functions remain initialized and ready to handle requests in milliseconds.

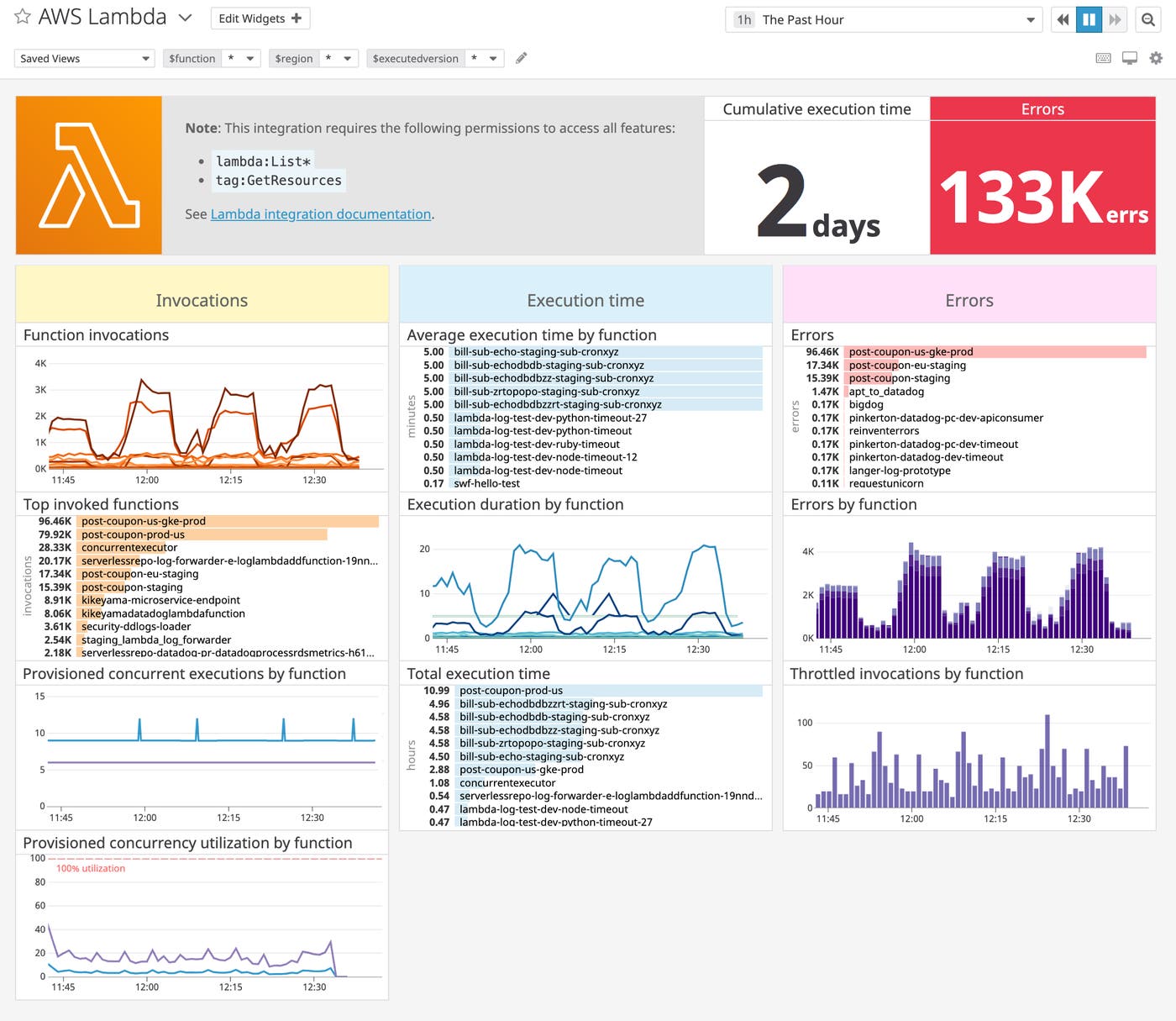

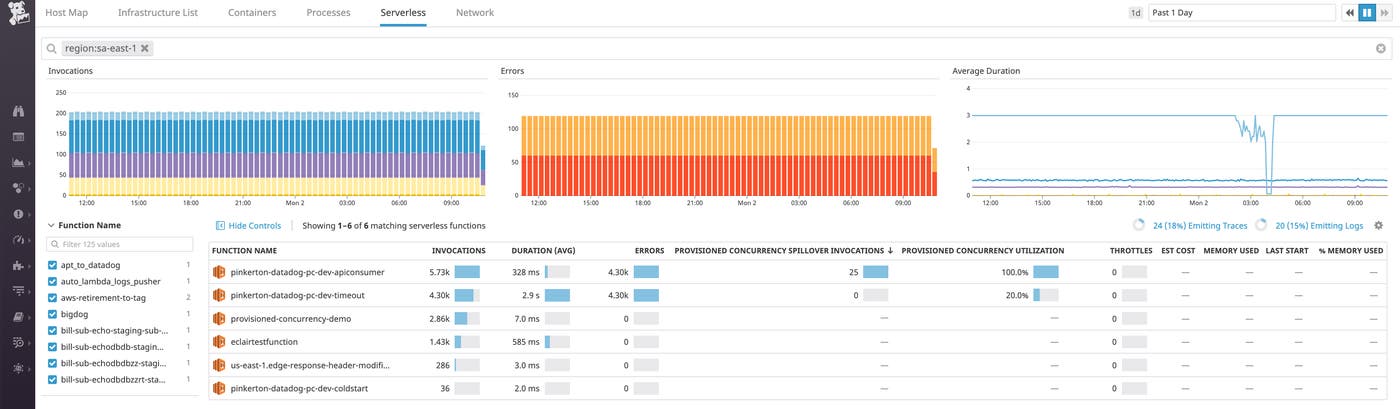

We are happy to announce that we’ve updated our AWS Lambda integration to include Provisioned Concurrency metrics so you can monitor your functions running with this new configuration from day one. By navigating between our out-of-the-box Lambda dashboard and Serverless view, you can get comprehensive visibility into all your Lambda functions—including their Provisioned Concurrency usage—through a single integration.

How Provisioned Concurrency works

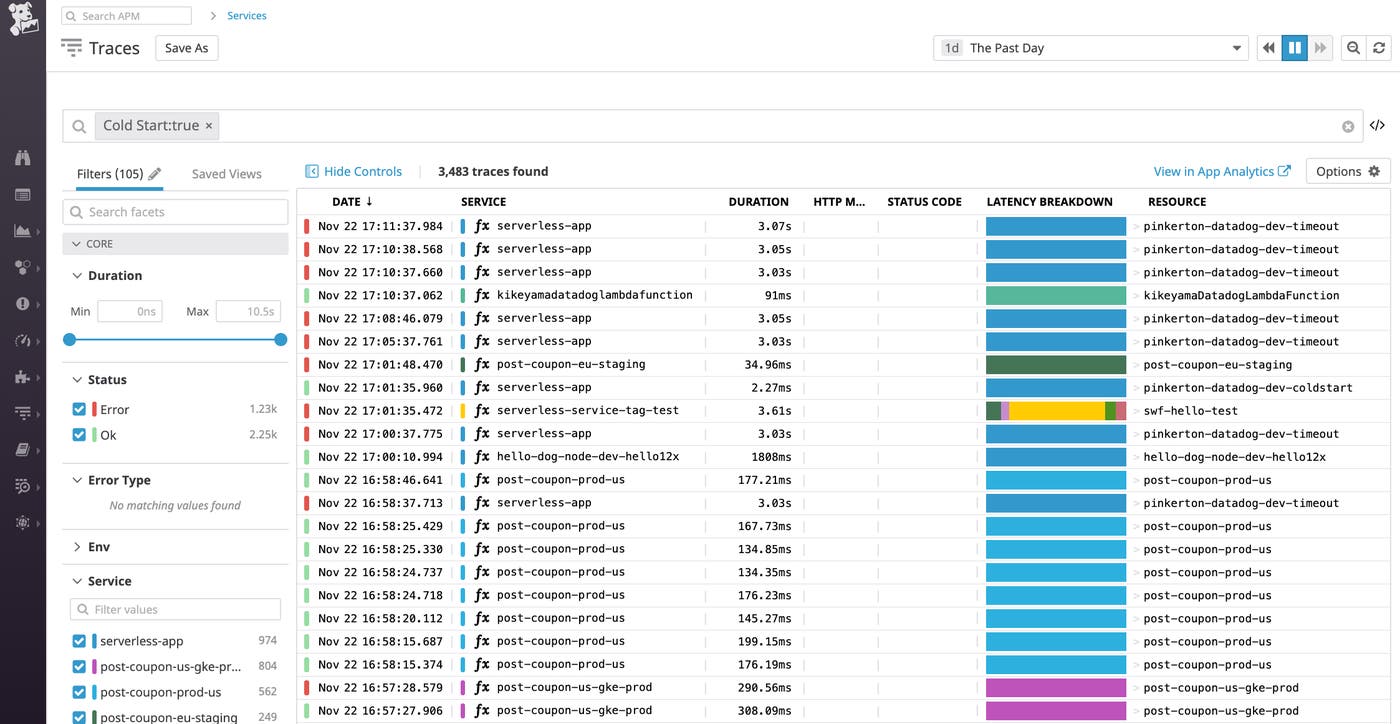

In AWS Lambda, a cold start refers to the initial increase in response time that occurs when a Lambda function is invoked for the first time, or after a period of inactivity. During this time, AWS has to set up the function’s execution context (e.g., by provisioning a runtime container and initializing any external dependencies) before it is able to respond to requests. When your serverless functions depend on each other, such as in a microservices environment, spikes in traffic can cause cold starts to cascade, resulting in extended periods of user-facing latency. With Provisioned Concurrency, you can mitigate cold starts and optimize the performance of your serverless applications, at any scale.

To understand Provisioned Concurrency, it is helpful to first understand Lambda concurrency limits. Reserving concurrency for a given Lambda function guarantees that the specified number of instances of your function will always be available to serve requests at any given time. Lambda automatically throttles a function when it reaches its concurrency limit to prevent a single function from exhausting resources that other functions may need.

In the same way that reserving concurrency ensures that instances are available to respond to requests, configuring Lambda functions with Provisioned Concurrency gives you greater control over their start times by keeping them initialized. Once you set up Provisioned Concurrency, AWS runs the initialization code for the specified functions ahead of time, so that they’ll be ready to respond to incoming requests in milliseconds.
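
To make the relationship between the concurrency limit and Provisioned Concurrency concrete, here is a minimal sketch in Python. It is a simplified model, not how AWS implements scheduling: it assumes a single burst of simultaneous requests, and the names `split_concurrent_requests` and `ConcurrencySplit` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConcurrencySplit:
    provisioned: int  # served by pre-initialized instances (no cold start)
    on_demand: int    # served by on-demand instances (may incur a cold start)
    throttled: int    # rejected because the function hit its concurrency limit


def split_concurrent_requests(demand: int, provisioned: int, limit: int) -> ConcurrencySplit:
    """Simplified model of how `demand` simultaneous requests are handled.

    `provisioned` is the Provisioned Concurrency setting and `limit` is the
    function's concurrency limit. Both are illustrative inputs.
    """
    if min(demand, provisioned, limit) < 0:
        raise ValueError("all arguments must be non-negative")
    if provisioned > limit:
        raise ValueError("provisioned concurrency cannot exceed the concurrency limit")
    admitted = min(demand, limit)        # requests beyond the limit are throttled
    warm = min(admitted, provisioned)    # warm instances are used first
    return ConcurrencySplit(
        provisioned=warm,
        on_demand=admitted - warm,
        throttled=demand - admitted,
    )


if __name__ == "__main__":
    # Hypothetical numbers: 12 simultaneous requests, 5 provisioned, limit of 10.
    print(split_concurrent_requests(demand=12, provisioned=5, limit=10))
```

In this model, the requests counted under `on_demand` are the ones that spill over the provisioned capacity and risk a cold start.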

You can manage Provisioned Concurrency through common AWS interfaces, including the AWS Management Console, Lambda API, AWS CLI, and AWS CloudFormation. To configure Provisioned Concurrency for a stable version of your Lambda function, you can specify its alias. You will see this alias tagged as executedVersion on your Provisioned Concurrency metrics in Datadog. Provisioned Concurrency can be configured on a schedule (e.g., to accommodate periods of high traffic) or through target tracking. AWS will use Application Auto Scaling to adjust your Provisioned Concurrency limit dynamically, either according to your configured schedule or, if you’re using target tracking, in response to the incoming load and your target utilization level.
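
As a rough illustration of the API route, the sketch below builds the request parameters for the Lambda `PutProvisionedConcurrencyConfig` operation and for an Application Auto Scaling target tracking policy. The helper names, the policy name, and the sample values (`hello`, `live`, 0.7) are assumptions made for this example. The block only constructs dictionaries and does not call AWS.

```python
from typing import Any, Dict


def provisioned_concurrency_request(function_name: str, alias: str, instances: int) -> Dict[str, Any]:
    """Keyword arguments for the Lambda PutProvisionedConcurrencyConfig operation."""
    if instances < 1:
        raise ValueError("instances must be at least 1")
    return {
        "FunctionName": function_name,
        "Qualifier": alias,  # the alias (or version) to keep initialized
        "ProvisionedConcurrentExecutions": instances,
    }


def target_tracking_requests(function_name: str, alias: str, min_instances: int,
                             max_instances: int, target_utilization: float) -> Dict[str, Dict[str, Any]]:
    """Keyword arguments for the two Application Auto Scaling calls."""
    if not 0 < target_utilization < 1:
        raise ValueError("target_utilization must be a fraction between 0 and 1")
    common = {
        "ServiceNamespace": "lambda",
        "ResourceId": f"function:{function_name}:{alias}",
        "ScalableDimension": "lambda:function:ProvisionedConcurrency",
    }
    return {
        "register_scalable_target": {
            **common,
            "MinCapacity": min_instances,
            "MaxCapacity": max_instances,
        },
        "put_scaling_policy": {
            **common,
            "PolicyName": f"{function_name}-{alias}-utilization",  # hypothetical name
            "PolicyType": "TargetTrackingScaling",
            "TargetTrackingScalingPolicyConfiguration": {
                "TargetValue": target_utilization,
                "PredefinedMetricSpecification": {
                    "PredefinedMetricType": "LambdaProvisionedConcurrencyUtilization",
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical function name and alias, for illustration only.
    print(provisioned_concurrency_request("hello", "live", 5))
    print(target_tracking_requests("hello", "live", 1, 10, 0.7))
```

With boto3 installed and credentials configured, the first dictionary could be passed as `boto3.client("lambda").put_provisioned_concurrency_config(**request)`, and the other two to the `register_scalable_target` and `put_scaling_policy` methods of `boto3.client("application-autoscaling")`.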

Optimize your Provisioned Concurrency usage

Understanding your Provisioned Concurrency usage is crucial for determining whether you need to scale your configuration up or down. Datadog’s AWS Lambda integration automatically collects the following four new metrics:

- the sum of concurrent executions using Provisioned Concurrency for a given function (aws.lambda.provisioned_concurrent_executions)
- the sum of invocation requests for functions using Provisioned Concurrency (aws.lambda.provisioned_concurrent_invocations)
- the number of invocations over the Provisioned Concurrency limit (aws.lambda.provisioned_concurrent_spillover_invocations)
- the fraction of Provisioned Concurrency used by a given function (aws.lambda.provisioned_concurrent_utilization)

Our integration collects and tags Provisioned Concurrency metrics by metadata from AWS (e.g., function name, executed version, and region) in the same way as other Lambda metrics. You can use these tags—and add custom tags—to slice and dice your data across any dimension, giving you deep visibility into all your AWS Lambda functions in one place, regardless of their concurrency configuration.

Identify underprovisioned functions

You can add any of the four metrics to the Serverless view by clicking on the gear icon in the upper-right corner of the table and selecting your desired metric. For example, by adding the count of invocations over the configured limit (aws.lambda.provisioned_concurrent_spillover_invocations) and sorting your functions by this metric, you can identify which functions are underprovisioned and risk experiencing cold starts. You can also set up an alert to get automatically notified when the Provisioned Concurrency utilization level is approaching the limit. This way, you can determine whether you want to increase the limit before your application suffers any performance bottlenecks.
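
The triage described above can be sketched in a few lines of Python. This is only an illustration of the logic, not Datadog's implementation: the function names, the sample values, and the 0.8 alert threshold are all hypothetical, and the inputs stand in for per-function values of aws.lambda.provisioned_concurrent_spillover_invocations and aws.lambda.provisioned_concurrent_utilization.

```python
from typing import Dict, List, Tuple


def rank_by_spillover(spillover: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return functions with any spillover, highest count first."""
    flagged = [(name, count) for name, count in spillover.items() if count > 0]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


def nearing_limit(utilization: Dict[str, float], threshold: float = 0.8) -> List[str]:
    """Return functions whose utilization is at or above the alert threshold."""
    return sorted(name for name, value in utilization.items() if value >= threshold)


if __name__ == "__main__":
    # Hypothetical per-function values over some time window.
    spillover = {"checkout": 42, "search": 0, "login": 7}
    utilization = {"checkout": 1.0, "search": 0.35, "login": 0.85}
    print(rank_by_spillover(spillover))
    print(nearing_limit(utilization))
```

Functions that appear in the first list are already exceeding their provisioned capacity, while those that appear only in the second are candidates for a higher limit before spillover begins.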

Adjust your allocation in real time

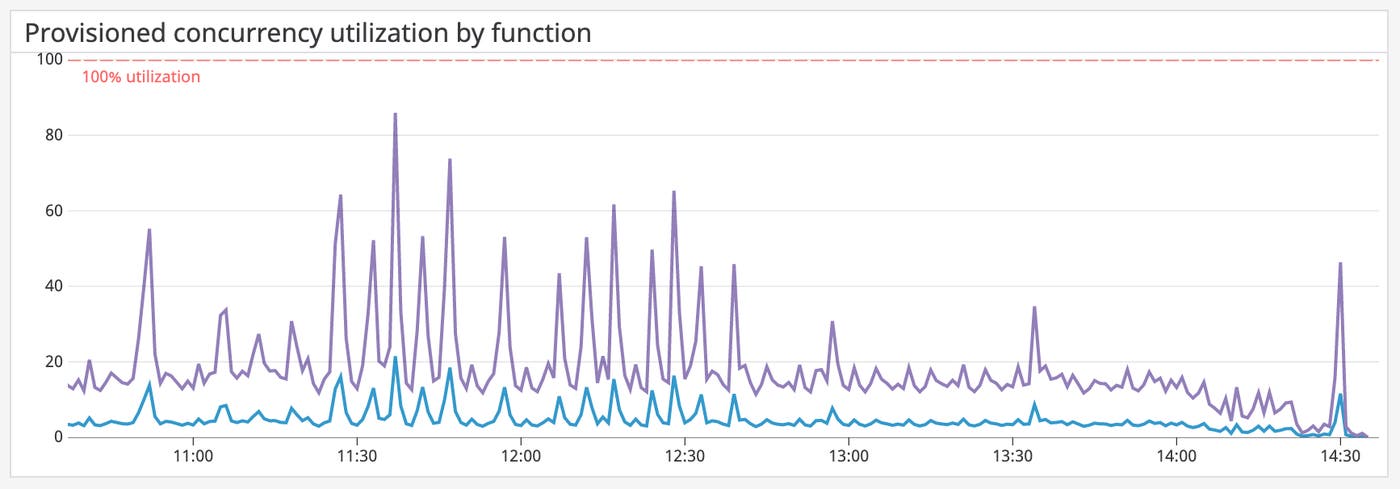

In addition, our out-of-the-box dashboard displays a graph of Provisioned Concurrency utilization (aws.lambda.provisioned_concurrent_utilization) by function. If you observe that a function is consistently using only a fraction of its Provisioned Concurrency during certain periods of time, you can safely remove those times from the schedule to avoid paying for unused resources.
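
One way to turn that observation into schedule changes is sketched below. It assumes you have exported an average utilization value per hour of the day. The 0.2 threshold, the function names, and the sample data are hypothetical and should be adjusted to your own workload.

```python
from typing import Dict, List, Tuple


def underused_hours(hourly_utilization: Dict[int, float], threshold: float = 0.2) -> List[int]:
    """Hours of the day (0-23) whose average utilization is below the threshold."""
    return sorted(hour for hour, value in hourly_utilization.items() if value < threshold)


def contiguous_ranges(hours: List[int]) -> List[Tuple[int, int]]:
    """Group hours into inclusive (start, end) ranges of consecutive hours."""
    ranges: List[Tuple[int, int]] = []
    for hour in sorted(hours):
        if ranges and hour == ranges[-1][1] + 1:
            ranges[-1] = (ranges[-1][0], hour)  # extend the current range
        else:
            ranges.append((hour, hour))         # start a new range
    return ranges


if __name__ == "__main__":
    # Hypothetical averages of aws.lambda.provisioned_concurrent_utilization per hour.
    hourly = {0: 0.05, 1: 0.04, 2: 0.06, 9: 0.75, 12: 0.9, 18: 0.6, 23: 0.1}
    print(contiguous_ranges(underused_hours(hourly)))
```

The resulting ranges are candidates for removal from a scheduled configuration. It is worth checking them against several days of data before changing the schedule.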

Getting started

You can enable Provisioned Concurrency either by configuring it directly through the AWS Lambda console or, if you’re using the Serverless Framework, by modifying the serverless.yml file. Simply add the provisionedConcurrency property to your desired functions, as shown in the example below:

    functions:
      hello:
        handler: handler.hello
        events:
          - http:
              path: /hello
              method: get
        provisionedConcurrency: 5

This example configures AWS to keep five concurrent instances of the hello function initialized and ready to respond to requests.

Serverless monitoring made seamless

With Datadog, you can monitor all your AWS Lambda functions and troubleshoot performance issues in real time with custom metrics, distributed traces, logs, and more. The latest updates to our Lambda integration, made possible through our strong partnership with AWS, can help you optimize your Provisioned Concurrency configuration for both cost and performance. If you’re already using Datadog, head over to our documentation to start monitoring your Provisioned Concurrency usage along with the rest of your serverless environment. Otherwise, sign up today for a 14-day free trial.