Christophe Tafani-Dereeper

Cloud Security Researcher and Advocate

We recently released the State of DevSecOps study, in which we analyzed tens of thousands of applications and cloud environments to assess the adoption of best practices that are at the core of DevSecOps today. In particular, we found that:

- Java services are the most impacted by third-party vulnerabilities
- Attack attempts from automated security scanners are mostly unactionable noise
- Only a small portion of identified vulnerabilities are worth prioritizing
- Lightweight container images contain fewer vulnerabilities
- Adoption of infrastructure as code is high, but varies across cloud providers
- Manual cloud deployments are still widespread
- Usage of short-lived credentials in CI/CD pipelines is still too low

In this post, we provide key recommendations based on these findings, and we explain how you can leverage Datadog App and API Protection (AAP) and Cloud Security to improve your security posture.

Gain visibility into third-party vulnerabilities in your applications

Based on our telemetry, an application has, on average, 160 third-party dependencies. Many of these come from open source ecosystems, and many are indirect (transitive) dependencies, which makes them more difficult to monitor. These dependencies can introduce vulnerabilities that attackers actively exploit, such as Spring4Shell, Log4Shell, or CVE-2023-46604 in Apache ActiveMQ. It’s therefore important to gain visibility into all of the third-party libraries that your services rely on and the vulnerabilities these libraries might bring.

The process typically involves three steps. The first is to generate a software bill of materials (SBOM), a recursive list of all of your application’s dependencies, including indirect ones. SBOMs commonly use the OWASP CycloneDX format, which can be represented in JSON. You can generate them using tools such as Google’s osv-scanner or OWASP’s Dependency-Check.

```json
{
  "$schema": "http://cyclonedx.org/schema/bom-1.5.schema.json",
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "version": 1,
  "components": [
    {
      "bom-ref": "pkg:maven/org.junit.jupiter/junit-jupiter@5.10.2",
      "type": "library",
      "name": "org.junit.jupiter:junit-jupiter",
      "version": "5.10.2",
      "purl": "pkg:maven/org.junit.jupiter/junit-jupiter@5.10.2",
      "evidence": { "occurrences": [] }
    },
    {
      "bom-ref": "pkg:maven/org.junit.platform/junit-platform-engine@1.10.2",
      "type": "library",
      "name": "org.junit.platform:junit-platform-engine",
      "version": "1.10.2",
      "purl": "pkg:maven/org.junit.platform/junit-platform-engine@1.10.2",
      "evidence": { "occurrences": [] }
    },
    [...]
```

Once you have an SBOM, the second step is to match its dependencies against a database of known vulnerable components. Although commercial databases exist, the Open Source Vulnerabilities (OSV) database is the most popular choice and offers a public API.
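To make the second step more concrete, here is a minimal Go sketch that reads a CycloneDX SBOM like the one above and builds the JSON body for OSV’s batch query endpoint (a POST to https://api.osv.dev/v1/querybatch), identifying each package by its Package URL (purl). It is an illustration under simplifying assumptions: it models only the SBOM fields it needs, skips components without a purl, and does not send the request or process the response. The type and function names are our own.

```go
package main

import (
	"encoding/json"
	"fmt"
	"log"
)

// component holds the subset of a CycloneDX component used here.
type component struct {
	Name    string `json:"name"`
	Version string `json:"version"`
	PURL    string `json:"purl"`
}

// bom holds the subset of a CycloneDX document used here.
type bom struct {
	Components []component `json:"components"`
}

// osvQuery is one query in an OSV batch request. The purl already encodes
// the version, so no separate "version" field is sent.
type osvQuery struct {
	Package struct {
		PURL string `json:"purl"`
	} `json:"package"`
}

type osvBatchRequest struct {
	Queries []osvQuery `json:"queries"`
}

// buildOSVBatch converts SBOM components into an OSV querybatch request body.
func buildOSVBatch(sbom []byte) ([]byte, error) {
	var doc bom
	if err := json.Unmarshal(sbom, &doc); err != nil {
		return nil, fmt.Errorf("parsing SBOM: %w", err)
	}
	req := osvBatchRequest{Queries: []osvQuery{}}
	for _, c := range doc.Components {
		if c.PURL == "" {
			continue // without a purl, the package ecosystem is unknown
		}
		var q osvQuery
		q.Package.PURL = c.PURL
		req.Queries = append(req.Queries, q)
	}
	return json.Marshal(req)
}

func main() {
	sbom := []byte(`{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"name": "org.junit.jupiter:junit-jupiter", "version": "5.10.2",
     "purl": "pkg:maven/org.junit.jupiter/junit-jupiter@5.10.2"},
    {"name": "org.junit.platform:junit-platform-engine", "version": "1.10.2",
     "purl": "pkg:maven/org.junit.platform/junit-platform-engine@1.10.2"}
  ]
}`)
	body, err := buildOSVBatch(sbom)
	if err != nil {
		log.Fatal(err)
	}
	// This body would be sent with an HTTP POST to https://api.osv.dev/v1/querybatch.
	fmt.Println(string(body))
}
```

According to the OSV API documentation, the batch endpoint answers with the IDs of matching vulnerabilities for each query, whose full details can then be retrieved individually.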

As a final step, it’s critical to store the vulnerabilities you have identified in your services in an easy-to-access place. This enables teams to gain visibility, track remediation progress, and maintain a centralized understanding of your application security posture.

Use Datadog SCA to surface third-party vulnerabilities

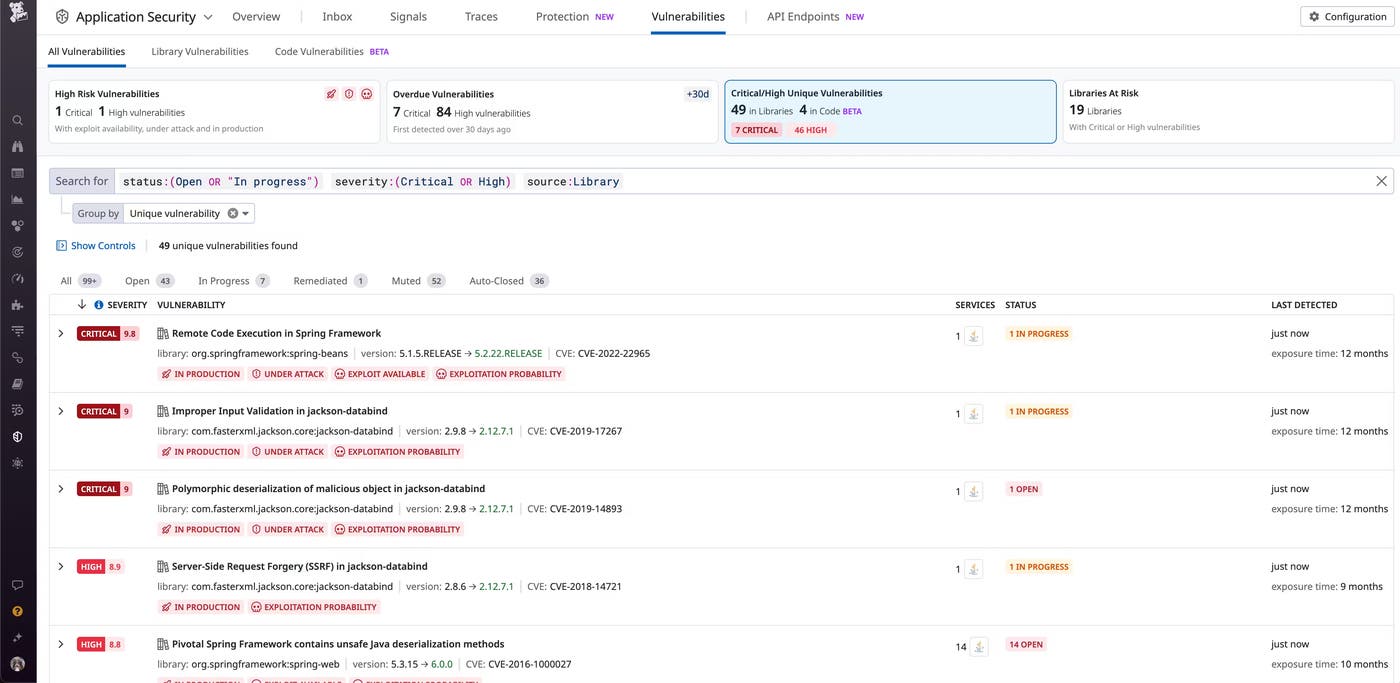

You can use Datadog Software Composition Analysis (SCA) to identify third-party vulnerabilities in your applications, both by analyzing source code in your IDE and by analyzing your services at runtime. Catching issues in the IDE effectively enables you to “shift left” on security.

For each vulnerability that SCA identifies, Datadog presents developers with a clear impact report that includes:

- When the vulnerability was first identified
- Which services and environments are affected
- How to fix the vulnerability using their favorite build tool
- The ability to quickly jump to related service and infrastructure observability data in Datadog

Read more: Mitigate vulnerabilities from third-party libraries with Datadog Software Composition Analysis

Implement a framework to prioritize vulnerability and threat remediation

The volume of alerts triggered by the detection of vulnerabilities and threats in modern environments can become noisy and overwhelming. Given that the vast majority of attack attempts are harmless, it’s important to implement a framework for prioritizing security concerns and determining where best to focus response and remediation efforts. This is critical to getting the most business value out of your security work.

There are several industry standards for rating the severity of known vulnerabilities. For example, the Common Vulnerability Scoring System (CVSS) provides a base score that reflects how severe a vulnerability is. The Exploit Prediction Scoring System (EPSS) estimates the probability that a vulnerability will be exploited in the wild within the next 30 days, which makes it particularly useful for identifying the most urgent threats.

These scores, however, don’t take your specific environment into account. When prioritizing vulnerability remediation and threat investigation, it’s also useful to consider the following questions:

- Is the asset (e.g., host or application) part of a production environment? Production environments typically contain sensitive or personal data and can be more critical than development or pre-production deployments.
- Is the asset exposed publicly? Internet-facing assets are continuously probed by automated scanners and are therefore much more likely to be targeted by real attacks.
- Is the asset tied to a known privileged identity, such as a privileged AWS IAM role? This makes the impact of potential exploitation much higher, as it would allow a successful attacker to pivot from a single vulnerable asset to a full cloud environment.
- Does the vulnerability have known public exploit code? Such vulnerabilities come with readily available instructions for exploiting them, making it more likely that an attacker, even one with minimal skills, takes advantage of the weakness.

Using these factors alongside CVSS and EPSS scores, you can better prioritize remediation and investigation efforts.

Use Datadog to prioritize vulnerabilities and threats using runtime context

Datadog App and API Protection, Software Composition Analysis (SCA), and Cloud Security leverage runtime insights from your services and cloud environments to help you better understand the actual potential impact of vulnerabilities and threats, so that you can focus on what matters.

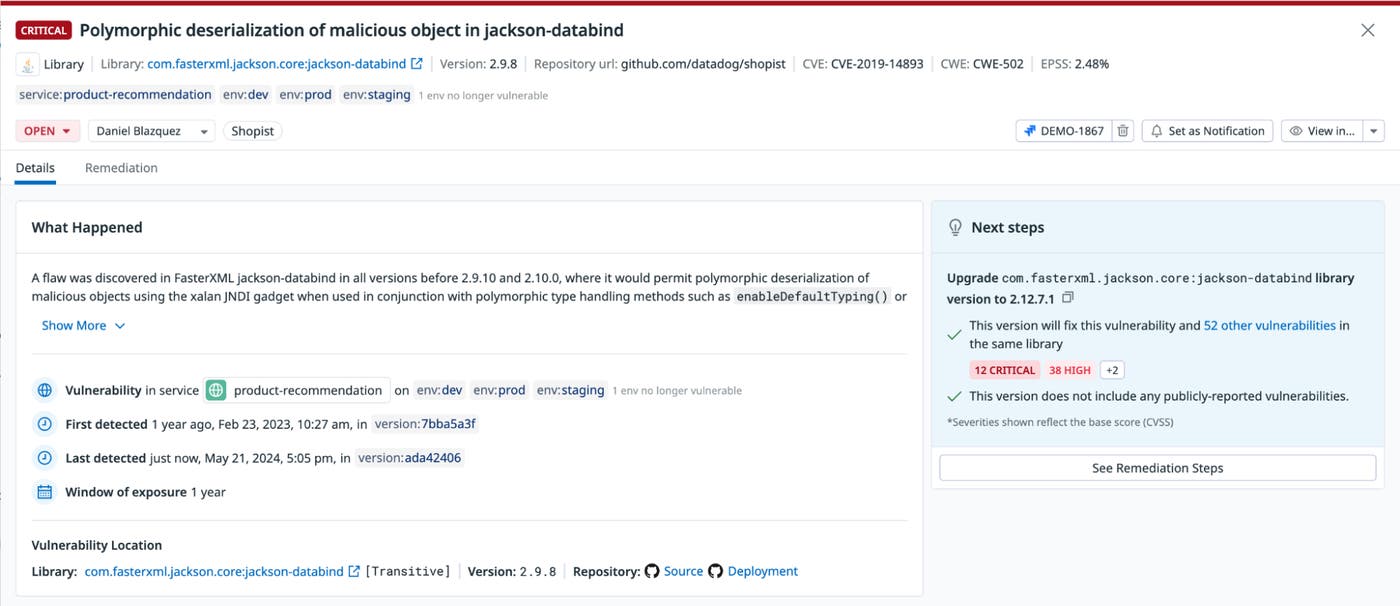

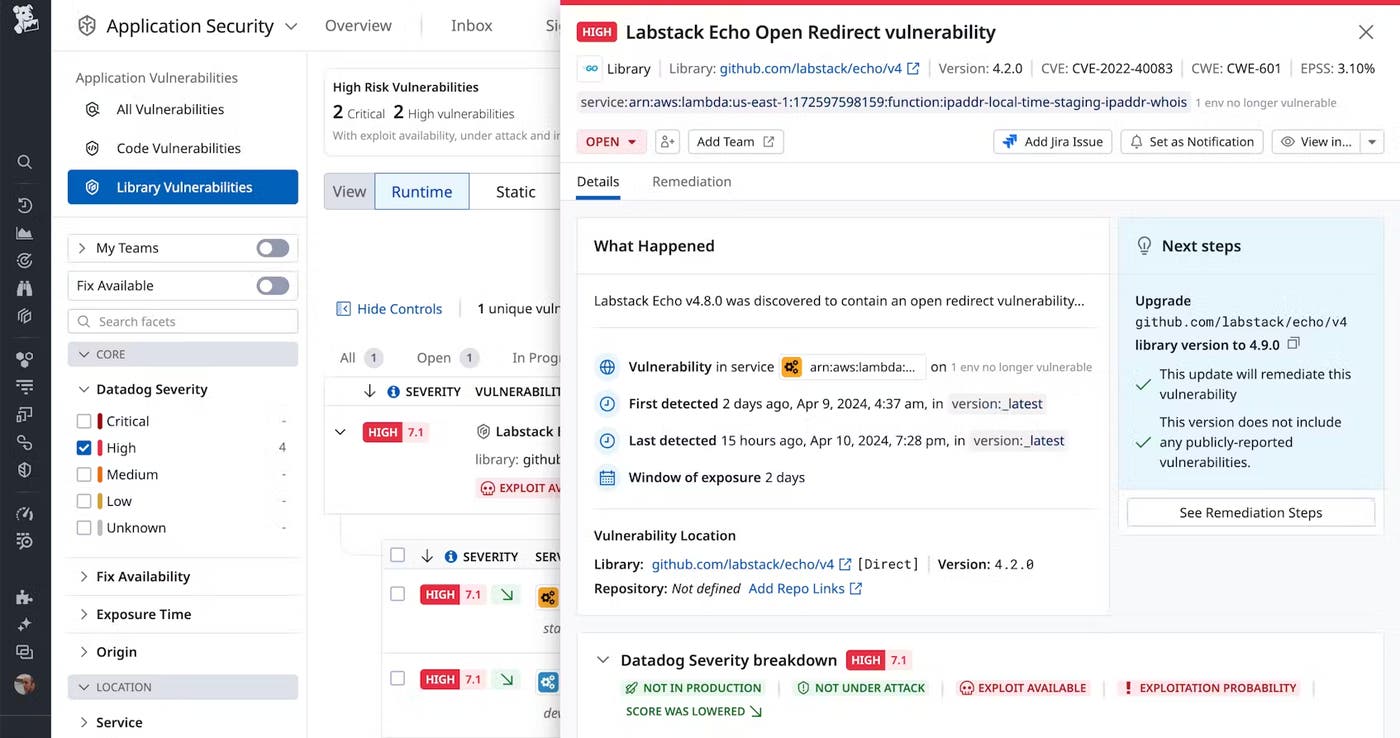

As an example, the vulnerability in the screenshot below has a CVSS base score of 9.6/10 (critical). After analyzing a variety of factors unique to the environment, Datadog determined that the affected service is not in a production environment and does not seem to be internet-facing. Datadog then automatically lowered the score to 7.1/10 (high), helping you decide whether to prioritize its remediation over other security issues.

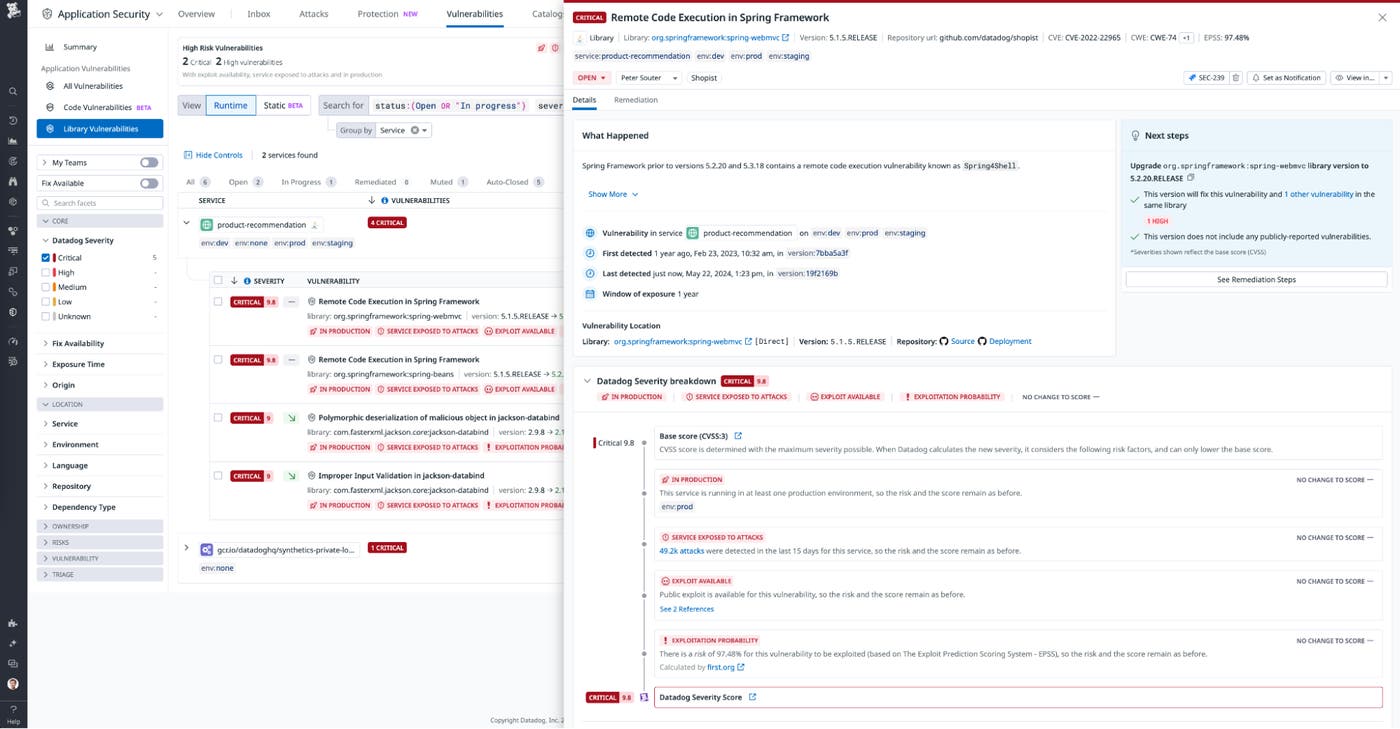

Conversely, the vulnerability below affects a service that runs in a production environment and is internet-facing. In addition, its EPSS score indicates a high likelihood that attackers will exploit this vulnerability. In that case, Datadog keeps the original vulnerability score, here 9.8/10 (critical).

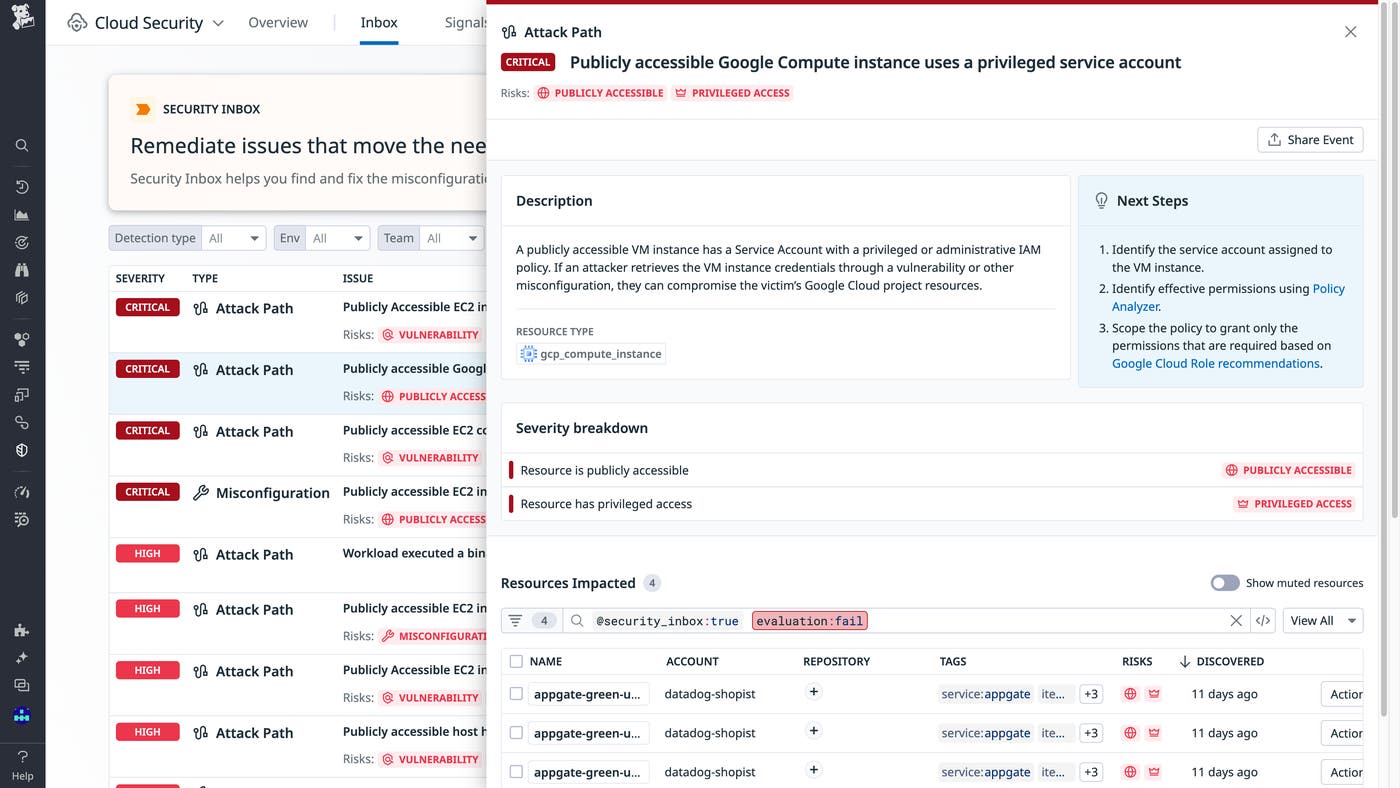

At the cloud layer, Datadog Cloud Security also considers whether a cloud resource is publicly accessible and whether it’s privileged to help you prioritize remediation efforts. For instance, the security issue below indicates that a Google Compute Engine instance is both internet-facing and tied to a privileged identity, resulting in a critical severity rating.

You can learn more about how to prioritize vulnerability remediation with Datadog SCA, or see how Datadog Security Inbox helps correlate risks and surface the most relevant ones.

Package your applications in minimal container images

Most modern applications run in cloud-native containerized systems. Whether they run on top of Kubernetes or on provider-specific services such as Amazon ECS or Azure Container Instances, these applications are packaged within a container image. When building such images, it’s important to keep them small and minimal: they are faster to deploy, contain less complexity, and drastically reduce the number of OS-level vulnerabilities. However, building minimal container images can require additional effort, as we’ll see in the example below.

The code below represents a small web application that serves content through an HTTP endpoint:

```go
package main

import (
	"fmt"
	"net/http"
)

func helloWorldHandler(w http.ResponseWriter, r *http.Request) {
	fmt.Fprintf(w, "hello world")
}

func main() {
	http.HandleFunc("/", helloWorldHandler)
	fmt.Println("Starting server on :8000")
	err := http.ListenAndServe(":8000", nil)
	if err != nil {
		fmt.Println("Error starting server:", err)
	}
}
```

A simple Dockerfile for this application might look like the following:

```dockerfile
FROM golang:1.22
WORKDIR /app
COPY . /app
RUN go build -o main-application main.go
EXPOSE 8000
CMD ["/app/main-application"]
```

Although the compiled application weighs only 6.6 MB and is largely self-contained (Go compiles its runtime and all Go dependencies into a single binary), the resulting container image weighs over 900 MB and contains thousands of known vulnerabilities, including 2 critical and 72 high-severity ones (at the time of writing). This is because most widely used Docker images are based on a complete Linux distribution such as Debian. In our case, golang:1.22 is based on Debian 12 “bookworm.”

As a first improvement, we can use the golang:1.22-alpine base image, which, as its name suggests, uses the lightweight Alpine Linux distribution. This lowers the image size from 900 MB down to 300 MB, and the number of vulnerabilities down to just 16, with no critical or high-severity ones.

However, we can go a step further. A Go binary built without cgo is statically linked and needs no runtime dependencies, which lets us use a multi-stage build: we first build the binary from the golang:1.22-alpine base image as before, and then copy it into a minimal “distroless” container image where it will run:

```dockerfile
# Build stage: compile the application
FROM golang:1.22-alpine AS builder
WORKDIR /app
COPY . /app
# Disable cgo so that the binary is statically linked and needs no C library
RUN CGO_ENABLED=0 go build -o main-application main.go

# Runtime stage: copy only the compiled binary into a minimal image
FROM gcr.io/distroless/static-debian11
COPY --from=builder /app/main-application /
EXPOSE 8000
CMD ["/main-application"]
```

Our container image is now down to just 9 MB (one hundredth of the original size!) and contains no known vulnerabilities at all. The key to achieving this was to separate the image that performs the build (our go build command) from the image where our application runs, as the latter typically requires far fewer dependencies. You can achieve a similar outcome for other languages, including Java, Python, and Node.js, by using, for instance, Google’s distroless images.

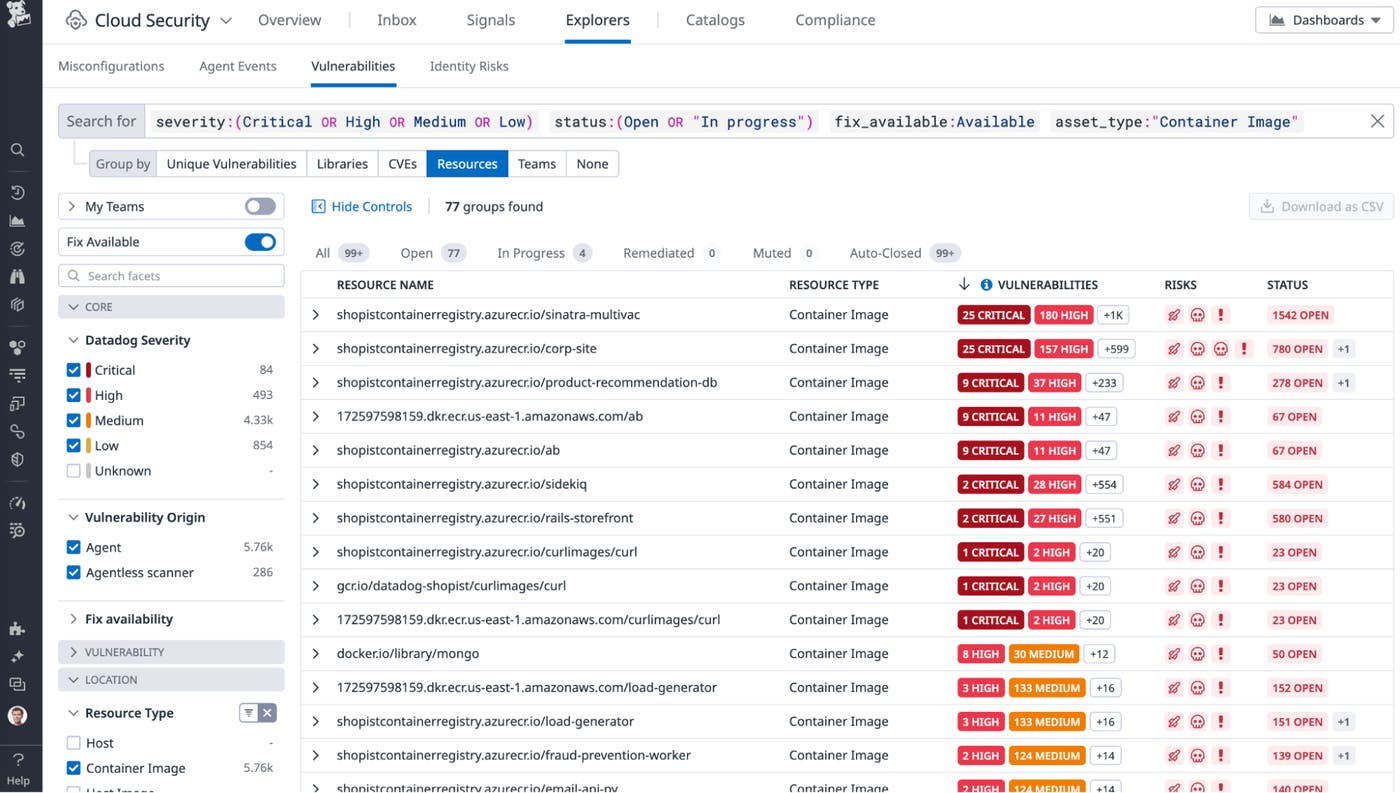

Monitor the security of your container images with Datadog

You can use Datadog Cloud Security to identify vulnerabilities in container images. Datadog can scan your container images and alert you when vulnerabilities are identified, either with the Datadog Agent deployed to your clusters or through Agentless Scanning. This gives you a centralized view of vulnerabilities in your container images across your infrastructure and environments, so you can assign them to the right team for remediation and better understand your risk posture.

You can also use the Container Images view to identify container images based on their size, the number of vulnerabilities they have, when they were last built, or the number of containers running this specific image.

Implement “zero-touch” production environments using infrastructure-as-code

The concept of zero-touch production environments was first introduced in 2019 by Michał Czapiński and Rainer Wolafka at a USENIX conference. At a high level, these environments rely on reliable automation rather than manual, error-prone actions performed by humans. Zero-touch production also promotes the principle of least privilege: when automation can perform a workflow (such as deploying a cloud workload), human operators don’t need direct access to the production environment and can instead rely on safe, indirect, and user-friendly interfaces.

The first step toward implementing zero-touch (or “low-touch”) production environments is to automate the deployment process, typically using infrastructure-as-code (IaC) technologies. Tools like Terraformer can also help convert an existing environment into its code representation.

Once automation is in place, operators typically need only minimal permissions (such as read-only access) to the production environment, since provisioning new infrastructure now happens through automation. It’s helpful to plan “break-glass” roles ahead of time: these are roles that can be activated, with approvals and auditing, when a specific situation, such as an incident, requires an operator to use privileged permissions in a production environment.

For additional information on implementing zero-touch production environments, you can watch a free livestream of Rami McCarthy’s talk at this year’s upcoming fwd:cloudsec North America. fwd:cloudsec is the first independent cloud security conference for practitioners, and Datadog has sponsored it for multiple years.

Track manual cloud actions with Datadog

It’s important to be notified of manually initiated changes in your cloud environment. You can use the following query in Datadog Cloud SIEM to identify and alert on manual actions performed from the AWS Console (adapted from Arkadiy Tetelman’s methodology):

```
source:cloudtrail @http.useragent:("console.amazonaws.com" OR "Coral/Jakarta" OR "Coral/Netty4" OR "AWS CloudWatch Console" OR S3Console/* OR \[S3Console* OR Mozilla/* OR onsole.*.amazonaws.com OR aws-internal*AWSLambdaConsole/*) -@evt.name:(Get* OR Describe* OR List* OR Head* OR DownloadDBLogFilePortion OR TestScheduleExpression OR TestEventPattern OR LookupEvents OR listDnssec OR Decrypt OR REST.GET.OBJECT_LOCK_CONFIGURATION OR ConsoleLogin) -@userIdentity.invokedBy:"AWS Internal"
```

Use short-lived cloud credentials in your CI/CD pipelines

Research has repeatedly shown that leaked long-lived cloud credentials are one of the most common causes of data breaches in cloud environments. These credentials, which often have privileged permissions, don’t expire on their own and are easy to inadvertently leak in source code, container images, configuration files, or logs. As a consequence, using short-lived cloud credentials to authenticate humans and workloads is one of the most valuable investments a cloud security program can make.
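Part of what makes leaked keys easy to find, for defenders and attackers alike, is that AWS access key IDs have a recognizable 20-character format: long-lived IAM user keys start with AKIA, while temporary STS credentials start with ASIA. The following Go sketch, a deliberately simplified take on what secret scanners do, finds candidate access key IDs in arbitrary text such as a configuration file or a log line. It only looks for key IDs (not secret access keys) and uses a simplified pattern, so it will miss cases that dedicated tools catch.

```go
package main

import (
	"fmt"
	"regexp"
)

// accessKeyIDPattern matches 20-character AWS access key IDs with the AKIA
// (long-lived IAM user key) or ASIA (temporary STS credential) prefix.
var accessKeyIDPattern = regexp.MustCompile(`\b(AKIA|ASIA)[A-Z0-9]{16}\b`)

// KeyMatch is a candidate access key ID found in text.
type KeyMatch struct {
	ID        string
	LongLived bool
}

// FindAccessKeyIDs returns every candidate access key ID in text, in order.
func FindAccessKeyIDs(text string) []KeyMatch {
	var matches []KeyMatch
	for _, m := range accessKeyIDPattern.FindAllStringSubmatch(text, -1) {
		matches = append(matches, KeyMatch{ID: m[0], LongLived: m[1] == "AKIA"})
	}
	return matches
}

func main() {
	// AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
	config := "[default]\naws_access_key_id = AKIAIOSFODNN7EXAMPLE\n"
	for _, k := range FindAccessKeyIDs(config) {
		kind := "temporary"
		if k.LongLived {
			kind = "long-lived"
		}
		fmt.Printf("found %s access key ID %s\n", kind, k.ID)
	}
}
```

Running it prints a single line reporting the long-lived key ID AKIAIOSFODNN7EXAMPLE from the sample configuration.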

Continuous integration and continuous deployment (CI/CD) pipelines are a type of workload that often runs on external infrastructure and needs to authenticate to cloud environments to deploy resources, typically through infrastructure-as-code or some sort of automation. In these pipelines as well, it’s critical to leverage short-lived credentials to minimize the impact of accidental credential exposure.

Until as recently as 2021, external systems (such as CI/CD pipelines) that connected to cloud environments usually required hardcoded, long-lived credentials. Thankfully, cloud providers and CI/CD providers now support issuing short-lived cloud credentials through the OpenID Connect (OIDC) protocol. The process works as follows:

1. The CI/CD provider injects into the running pipeline a signed JSON Web Token (JWT) that provides a verifiable identity to the pipeline.
2. The CI/CD pipeline uses this JWT to request cloud credentials. How this is done depends on the cloud provider; on AWS, for instance, the pipeline calls AssumeRoleWithWebIdentity.
3. If the cloud environment has been properly configured to trust this pipeline, it returns short-lived credentials that expire automatically (after one hour by default on AWS).

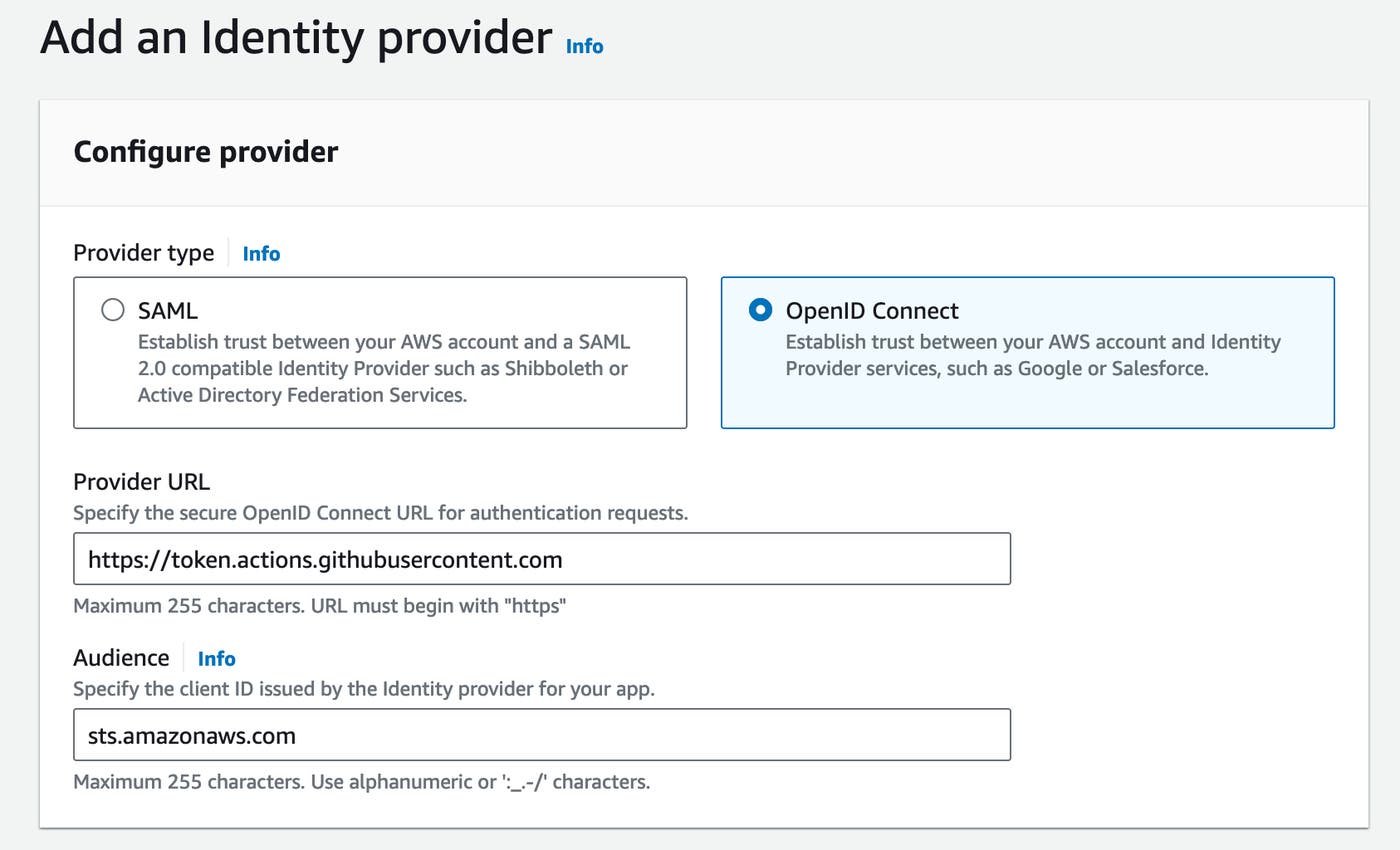

Let’s see an example of how to configure this for a GitHub Actions workflow that deploys cloud resources to AWS. First, create an OIDC provider in the target AWS account with the URL https://token.actions.githubusercontent.com and audience sts.amazonaws.com.

Then, create an IAM role for your pipeline to use, with the following trust policy:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::123456789012:oidc-provider/token.actions.githubusercontent.com"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "token.actions.githubusercontent.com:aud": "sts.amazonaws.com"
        },
        "StringLike": {
          "token.actions.githubusercontent.com:sub": "repo:your-organization/your-repository:*"
        }
      }
    }
  ]
}
```

Finally, allow the workflow to request an OIDC token by granting it the id-token: write permission, and add a step that uses the aws-actions/configure-aws-credentials GitHub Action to assume the IAM role:

```yaml
# Job-level settings (inside jobs.<job-id>)
permissions:
  id-token: write # required to request the OIDC JWT from GitHub
  contents: read

steps:
  - name: Configure AWS credentials
    uses: aws-actions/configure-aws-credentials@v4
    with:
      aws-region: us-east-1
      role-to-assume: arn:aws:iam::123456789012:role/github-actions-role
      role-session-name: github-actions
```

Use Datadog to monitor authentication events

Datadog provides multiple ways to monitor your cloud environment for suspicious authentication activity or potential misconfigurations. For example, Cloud Security provides out-of-the-box rules to identify risky IAM users, such as those with long-lived, unused credentials.

Datadog Cloud SIEM can help you identify compromised access keys through the following rules:

- TruffleHog user agent observed in AWS, which identifies an attacker who successfully uses the popular TruffleHog tool to discover leaked access keys
- The AWS managed policy AWSCompromisedKeyQuarantineV2 has been attached, which identifies when AWS itself quarantines an IAM user whose access key was publicly leaked
- Impossible travel event leads to permission enumeration, which correlates an Impossible user travel event with multiple AccessDenied errors

In addition, you can create custom Cloud SIEM rules using new value and anomaly detection methods to identify, for instance, when a specific IAM user authenticates from a new, previously unseen country.

You can also use Cloud SIEM to query CloudTrail logs for unusual activity, such as the use of long-lived access keys (whose IDs start with AKIA):

```
source:cloudtrail @userIdentity.type:IAMUser @userIdentity.accessKeyId:AKIA*
```

Or when new access keys are created:

```
source:cloudtrail @eventSource:iam.amazonaws.com @evt.name:CreateAccessKey
```

Finally, you can use Cloud Security to create a custom rule that flags IAM users with active access keys, using the Rego code below on the aws_iam_user and aws_iam_credential_report resource types:

```rego
package datadog

import data.datadog.output as dd_output

import future.keywords.contains
import future.keywords.if
import future.keywords.in

one_access_key_is_active(credential_report) if {
	credential_report.access_key_1_active
} else if {
	credential_report.access_key_2_active
}

eval(iam_user) := "fail" if {
	some credential_report in input.resources.aws_iam_credential_report
	credential_report.arn == iam_user.arn
	one_access_key_is_active(credential_report)
} else := "pass"

# This part remains unchanged for all rules
results contains result if {
	some resource in input.resources[input.main_resource_type]
	result := dd_output.format(resource, eval(resource))
}
```

Conclusion

Our 2024 State of DevSecOps study shows that it’s not enough just to monitor for security vulnerabilities and threats. Rather, it’s essential to understand how severe each threat is within the context of your environment in order to effectively prioritize remediation efforts. Additionally, while organizations have made progress in hardening their systems, there are still some key security best practices—such as implementing low- or zero-touch production environments and short-lived credentials—that can help reduce your overall risk.

In this post, we looked at how the Datadog security platform can help you effectively identify and prioritize vulnerabilities and threats across both your cloud infrastructure and your applications. To get started with the Datadog security suite, check out our documentation. If you’re new to Datadog, sign up for a 14-day free trial.