Mohammad Jama

As AI adoption accelerates on Google Cloud, the challenge for most teams today is no longer just building AI-powered applications. It’s also managing the full AI stack from end to end, including data pipelines, infrastructure, release processes, and security operations. Many teams monitor these layers with separate tools, which adds complexity, fragments visibility, and slows decisions about what to do next.

Addressing these challenges is at the heart of Datadog’s long-standing collaboration with Google Cloud. And as a recipient of two 2026 Google Cloud Partner of the Year awards in the categories of AIOps (Technology) and Infrastructure Modernization (Marketplace), Datadog is thrilled to be on site at Google Cloud Next this year.

In this post, we’ll show how Datadog gives teams building AI applications and agents on Google Cloud a single platform to:

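- Evaluate and troubleshoot AI applications and agents on Google Cloud
- Optimize cost and performance across GPUs and TPUs
- Improve data reliability and visibility across your Google Cloud AI stack
- Strengthen security with AI-powered investigation and response

Evaluate and troubleshoot AI applications and agents on Google Cloud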

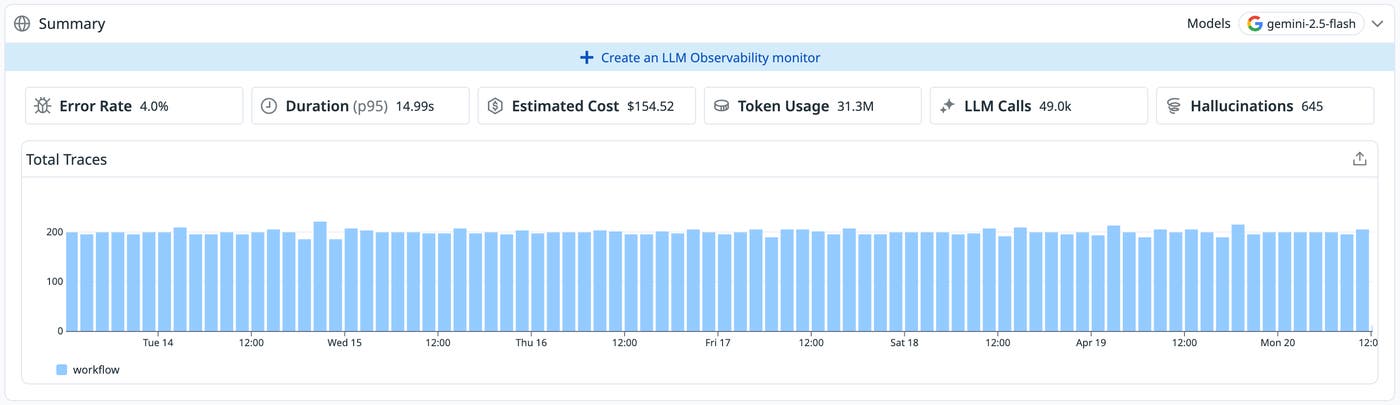

Teams building on Google Cloud need visibility into every step of agent behavior, not just model outputs. That includes visibility into what prompts are sent, which tools are called, how long each step takes, and what everything costs. Datadog LLM Observability supports auto-instrumentation for Google ADK-based agents, giving teams a faster, lower-friction path to full observability of agents built on the Gemini Enterprise Agent Platform. Once instrumented, teams can debug agentic workflows step by step, inspect inputs and outputs at each stage, analyze latency and token usage, and iterate faster without writing custom instrumentation from scratch.
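To make the idea of step-level visibility concrete, here is a minimal, standard-library-only sketch of what recording an agent run step by step can look like. It is an illustration, not Datadog’s instrumentation or API: the `Step` and `AgentRun` names, the token counts, and the per-token prices are all hypothetical.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Step:
    """One recorded step of an agent run (hypothetical structure)."""
    name: str
    kind: str  # for example "llm" or "tool"
    duration_s: float = 0.0
    input_tokens: int = 0
    output_tokens: int = 0


@dataclass
class AgentRun:
    """Collects steps so latency, token usage, and cost can be inspected."""
    steps: list = field(default_factory=list)

    @contextmanager
    def step(self, name, kind):
        # Time the wrapped block and record it, even if it raises.
        record = Step(name=name, kind=kind)
        start = time.perf_counter()
        try:
            yield record
        finally:
            record.duration_s = time.perf_counter() - start
            self.steps.append(record)

    def total_tokens(self):
        return sum(s.input_tokens + s.output_tokens for s in self.steps)

    def estimated_cost(self, usd_per_1k_input, usd_per_1k_output):
        # Prices are supplied by the caller; none are assumed here.
        return sum(
            s.input_tokens / 1000 * usd_per_1k_input
            + s.output_tokens / 1000 * usd_per_1k_output
            for s in self.steps
        )


run = AgentRun()
with run.step("plan", kind="llm") as s:
    s.input_tokens, s.output_tokens = 1200, 300  # made-up usage
with run.step("search_docs", kind="tool"):
    time.sleep(0.01)  # stand-in for a real tool call
with run.step("answer", kind="llm") as s:
    s.input_tokens, s.output_tokens = 2000, 500  # made-up usage

for s in run.steps:
    print(f"{s.kind:>4} {s.name:<12} {s.duration_s * 1000:.1f} ms")
print("total tokens:", run.total_tokens())
```

Auto-instrumentation removes the need to write and maintain this kind of bookkeeping by hand.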

Shipping AI applications with confidence also means evaluating them before issues surface in production, not just reacting after they do. LLM Observability Experiments gives teams a structured way to test prompts, compare models, and assess output quality before any change goes live.

Even with strong instrumentation and pre-production evaluation, production issues still happen, and when they do, the speed of investigation matters. The Datadog MCP Server brings Datadog’s observability context directly into AI-assisted development environments like Gemini CLI, so engineers can query metrics, traces, and logs without leaving their workflow. And for alerts that require deeper investigation, Bits AI SRE can autonomously analyze the full-stack telemetry data behind an incident and surface likely root causes.

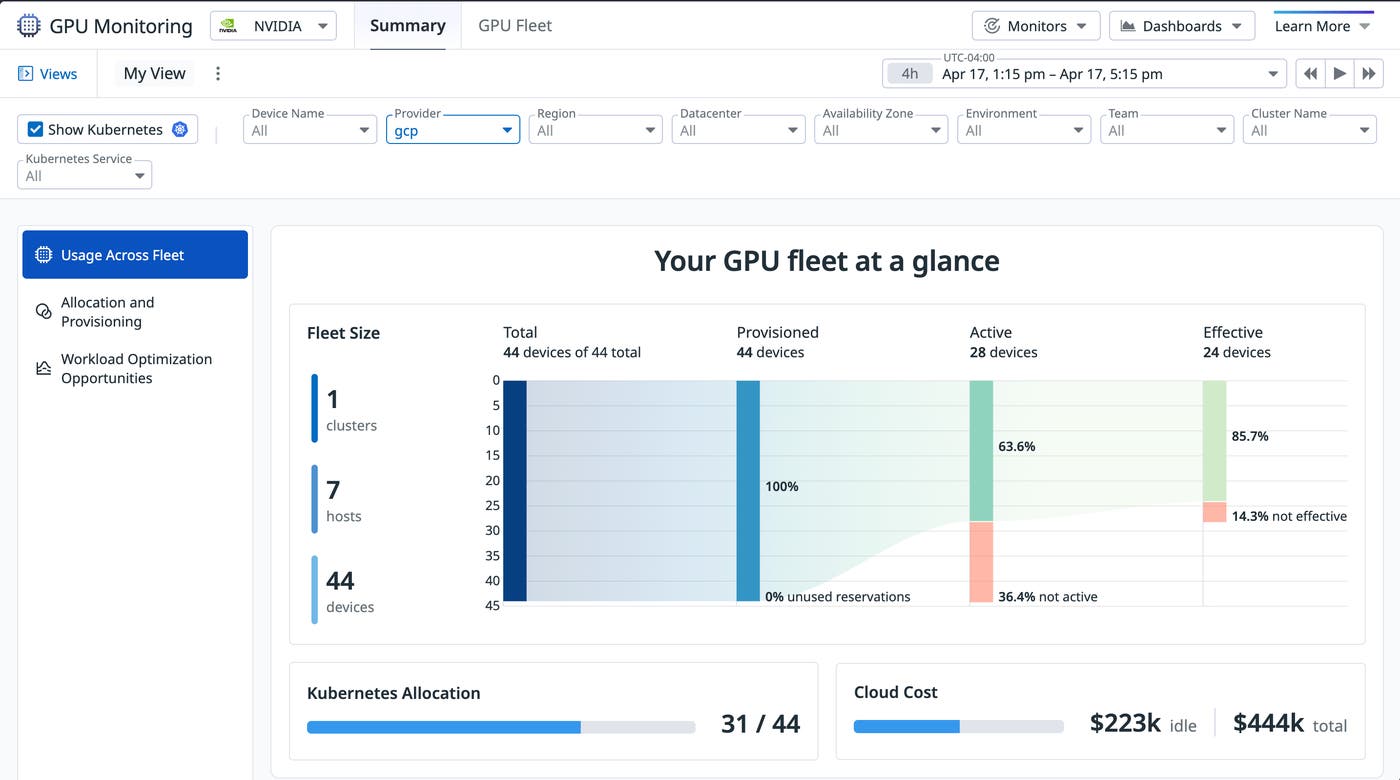

Optimize cost and performance across GPUs and TPUs

AI infrastructure is expensive, and understanding whether it is being used efficiently is harder than it should be. Datadog GPU Monitoring gives teams visibility into the performance and utilization of GPUs running on Google Cloud, making it easier to surface workload inefficiencies and hardware bottlenecks before they drive up costs. Teams can spot underutilized GPUs, identify memory bottlenecks, and understand which workloads are getting the most out of their hardware. For teams running inference on Google’s custom TPU accelerators, Datadog’s Google Cloud TPU integration provides similar visibility into TPU workloads.
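As a rough illustration of what spotting underutilized GPUs means in practice, the sketch below averages utilization samples per device and flags those below a threshold. The sample data, the device names, and the 30 percent threshold are assumptions chosen for the example, not values or logic taken from Datadog GPU Monitoring.

```python
from statistics import mean


def find_underutilized(samples, threshold_pct=30.0):
    """Return (gpu_id, mean_utilization) pairs below threshold_pct, lowest first.

    samples maps a GPU id to a list of utilization percentages (0-100).
    """
    averages = {gpu: mean(values) for gpu, values in samples.items() if values}
    low = [(gpu, avg) for gpu, avg in averages.items() if avg < threshold_pct]
    return sorted(low, key=lambda item: item[1])


# Hypothetical utilization samples, in percent.
samples = {
    "gpu-0": [92.0, 88.0, 95.0],
    "gpu-1": [12.0, 8.0, 10.0],
    "gpu-2": [25.0, 35.0, 27.0],
}

underutilized = find_underutilized(samples)
for gpu, avg in underutilized:
    print(f"{gpu}: {avg:.1f}% average utilization")
```

A single average hides bursty workloads, so a real analysis would also look at memory pressure and at how utilization changes over time.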

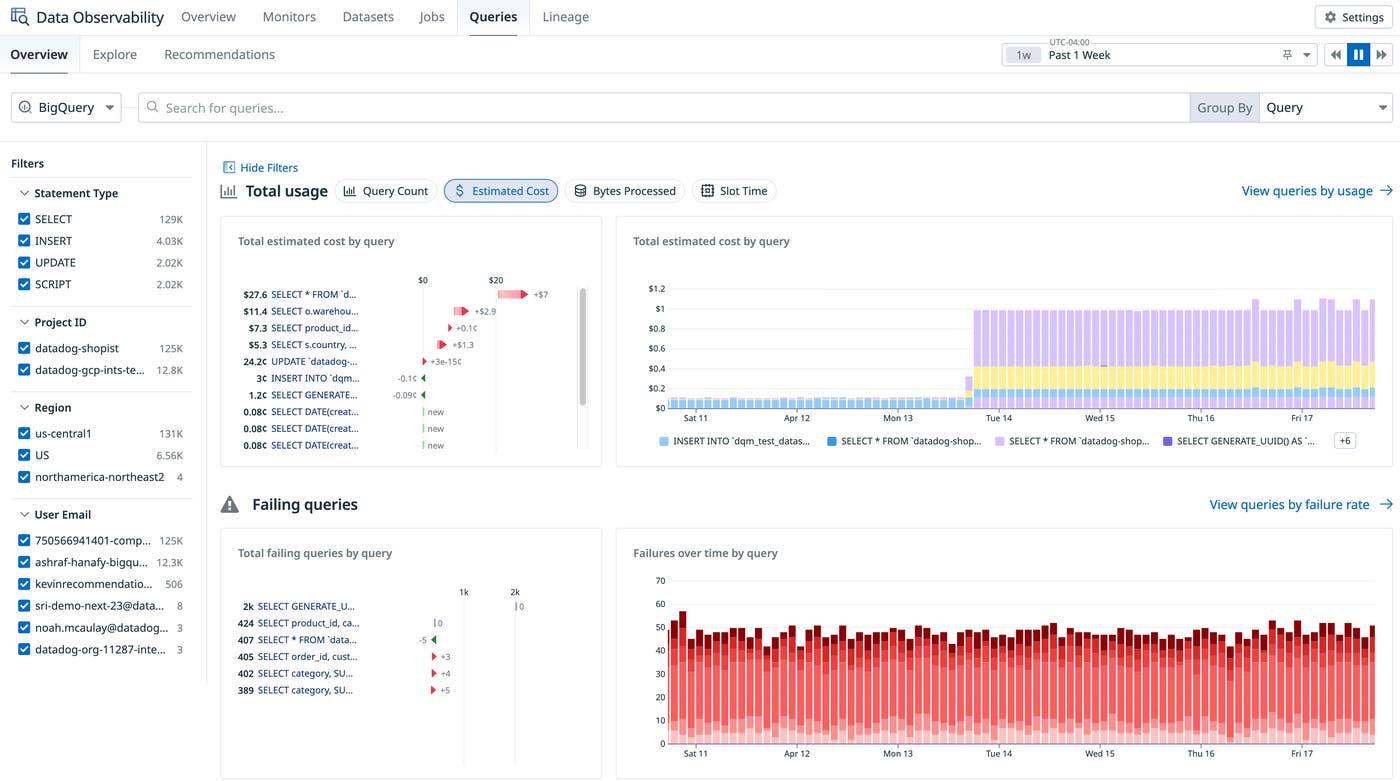

Improve data reliability and visibility

AI applications are only as reliable as the data pipelines, warehouses, and transformations behind them. As BigQuery becomes more central to analytics, ML, and AI workflows on Google Cloud, teams need visibility into both data health and query-level performance and cost. Datadog Data Observability gives teams a single place to monitor data quality, detect anomalies, analyze lineage, and prevent issues from reaching downstream BI and AI applications. Teams can use Datadog to detect failures early, catching bad data in BigQuery warehouses through ML-powered monitors before AI models, applications, or end users are impacted. They can also detect upstream pipeline failures in jobs run on Databricks, Spark, Airflow, or dbt.
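To show the kind of signal a data quality monitor looks for, here is a deliberately simple sketch that flags an unusual daily row count with a z-score check. This is a basic statistical stand-in, not the ML-powered monitors described above, and the row counts and threshold are invented for the example.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag latest if it is more than z_threshold standard deviations
    away from the mean of history."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily row counts for one table.
daily_row_counts = [10_120, 9_980, 10_050, 10_200, 9_940, 10_075, 10_010]

print(is_anomalous(daily_row_counts, 10_100))  # a typical day
print(is_anomalous(daily_row_counts, 4_200))   # a sharp drop in volume
```

A fixed threshold like this ignores seasonality and trends, which is one reason production monitors rely on more adaptive models.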

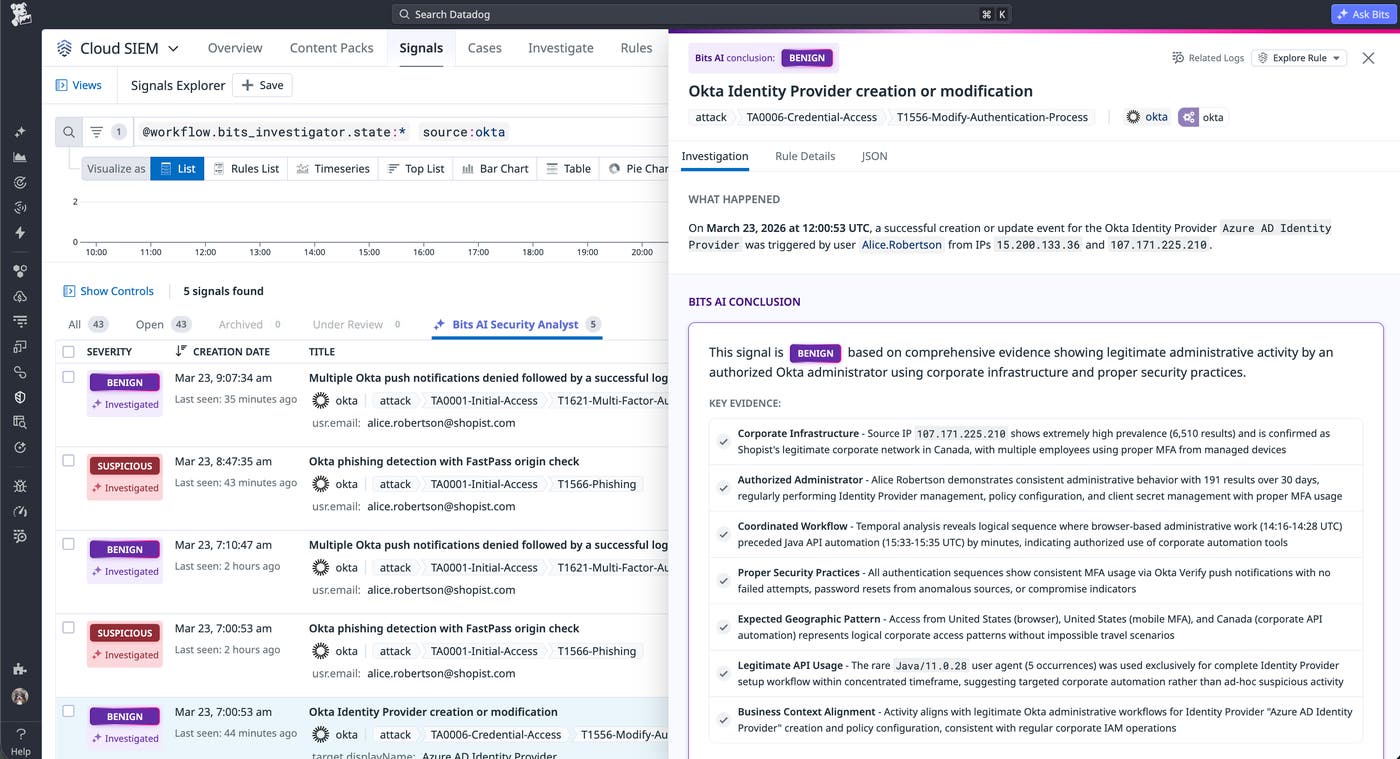

Strengthen security with AI-powered investigation and response

As cloud and AI environments expand, security teams face more signals, more surface area, and more pressure to quickly separate real threats from noise. Bits AI Security Analyst acts as an always-on SOC teammate for Datadog Cloud SIEM investigations, picking up each signal, querying relevant context, applying data-based reasoning, and recommending a verdict so analysts know where to focus. The result is faster triage, less manual effort, and quicker escalation of the alerts that actually require human attention. Because the investigation happens inside the same platform where Google Cloud infrastructure, application, and telemetry context already lives, Bits AI Security Analyst can reason over a broader set of signals than a standalone tool can.

And with Cloud SIEM Content Packs for Google Cloud sources such as Google Workspace, Security Command Center, and Google Cloud Audit Logs, teams can start identifying security threats in Datadog out of the box.

Get started faster with extensive support for Google Cloud

AI success on Google Cloud depends on more than model access. Teams need a single source of truth across application behavior, agents, data systems, infrastructure, release workflows, and security operations. Whether you’re just getting started or scaling an existing AI stack, Datadog gives Google Cloud users the tools to reduce complexity, improve reliability, optimize cost, and move faster. To learn more, visit our Google Cloud solutions page, read our documentation to get started, or sign up for a 14-day free trial. We look forward to seeing you at Google Cloud Next!