Michael Gerstenhaber

In a Kubernetes cluster, the machines are divided into two main groups: worker nodes and control plane nodes. Worker nodes run your pods and the applications within them, whereas control plane nodes run the Kubernetes Control Plane, which manages the worker nodes. The Control Plane makes scheduling decisions, monitors the cluster, and implements changes to move the cluster toward its desired state.

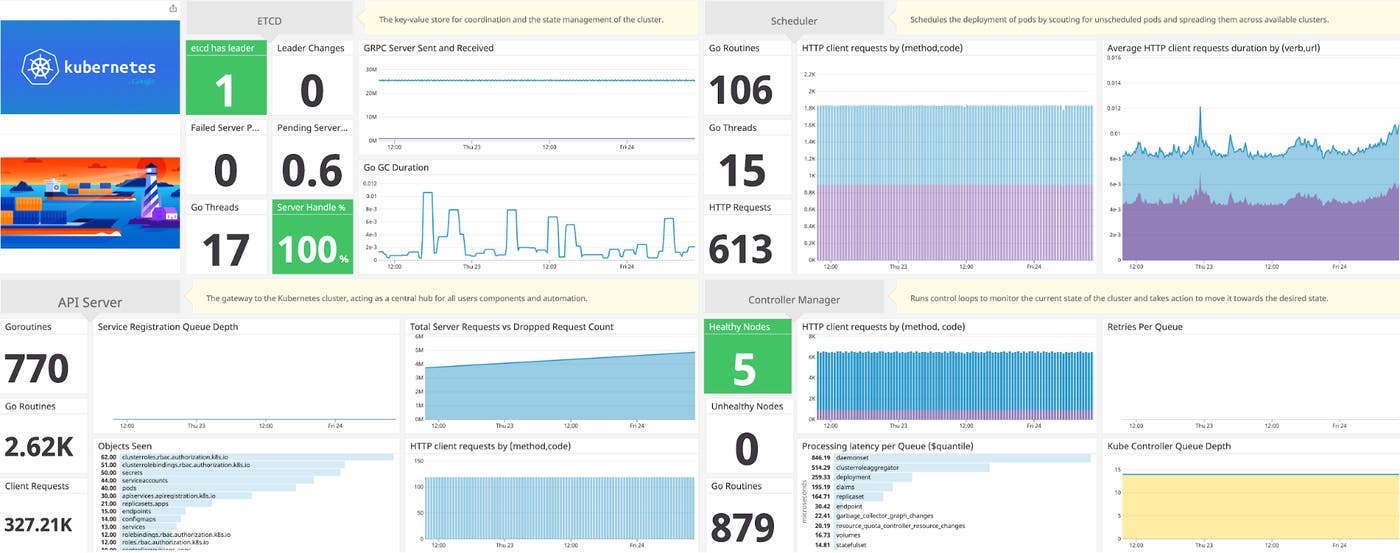

Datadog's Kubernetes integrations have always enabled you to monitor your worker nodes and the applications running in your cluster. Now we are pleased to announce new integrations specifically for monitoring the Kubernetes Control Plane as well. Combined with the NGINX ingress controller integration, these new integrations deliver complete visibility into how Kubernetes orchestrates your cluster.

Monitoring every part of the Control Plane

In an airport, the air traffic controller tells pilots when to take off or land, monitors which runways are open, and keeps tabs on the status of every plane. The Kubernetes Control Plane plays a similar role: it is the source of truth and the channel of communication for the entire Kubernetes technology stack. And just like air traffic control, if the Control Plane goes offline or fails to carry out its duties, traffic starts to back up, which results in delayed scheduling of pods.

Datadog's Kubernetes integrations now provide out-of-the-box telemetry for the four main components of the Control Plane so you can monitor this critical piece of cluster infrastructure in detail:

The API Server, which acts as a communication hub that all components and developers must use to communicate with the cluster.

The Controller Manager, which watches the state of the cluster while attempting to make changes to move the cluster towards the desired state.

The Scheduler, which is responsible for maintaining the state of the cluster by assigning workloads to nodes.

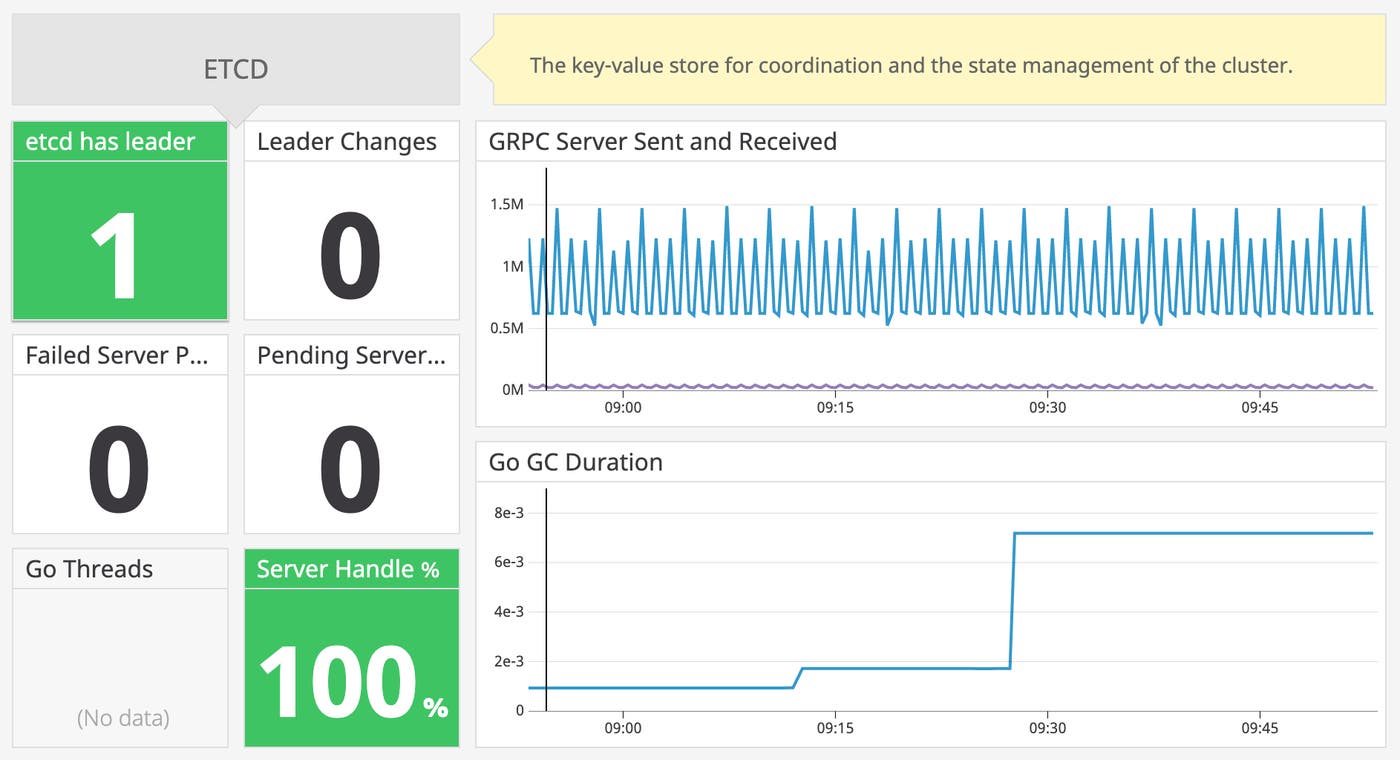

etcd, Kubernetes’ key-value store for storing data about cluster configuration, the current and desired state of all the components running in the cluster, and more.

With three new integrations for the API Server, Controller Manager, and Scheduler, alongside Datadog's existing etcd integration, you can collect key metrics from all four components of the Control Plane and visualize them in one place.

Key metrics for monitoring the Kubernetes Control Plane

Monitoring the components of the Control Plane allows you to more rapidly troubleshoot scheduling and orchestration issues that arise in your cluster. The metrics surrounding the Control Plane give a detailed view into its performance, including real-time data on its overall workload and latencies.

To get a better understanding of the Control Plane and how to monitor it, below we'll dig a bit deeper into the inner workings of each component.

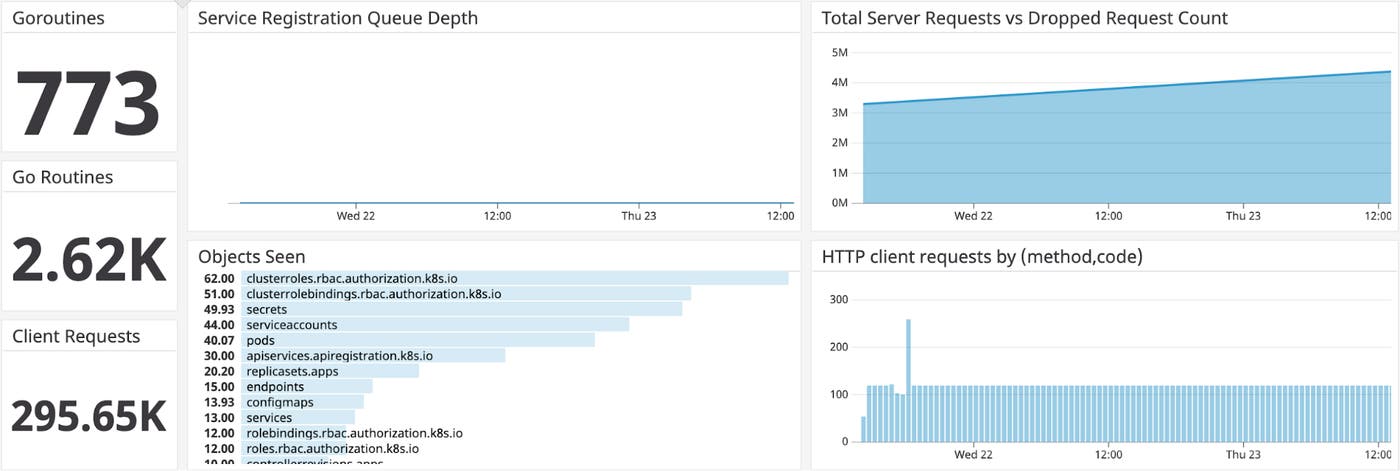

API Server

The API Server is the gateway to the Kubernetes cluster and acts as a central hub for all users, components, and automation processes. Alongside gRPC communication, the API Server implements a RESTful API over HTTP and is responsible for storing API objects in etcd. It serves the Kubernetes API and handles a number of request verbs, including:

GET: retrieves specific information about a resource (e.g., data from a specific pod)

LIST: retrieves an inventory of Kubernetes objects (e.g., a list of all pods in a given namespace)

POST: creates a new resource based on a JSON object sent with the request

DELETE: removes a resource from the cluster (e.g., deleting a pod)
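To make the verbs above concrete, here is a minimal Python sketch of how each one maps to an HTTP method and URL path for pods in the core `v1` API. The helper name `pod_request` is hypothetical and exists only for illustration; the sketch builds the request line and does not contact a cluster.

```python
from typing import Optional, Tuple


def pod_request(verb: str, namespace: str, name: Optional[str] = None) -> Tuple[str, str]:
    """Return the (HTTP method, URL path) used for a verb applied to pods.

    Hypothetical helper for illustration; it only builds strings.
    """
    collection = f"/api/v1/namespaces/{namespace}/pods"
    if verb == "LIST":
        # LIST is an HTTP GET against the collection, not a single object.
        return ("GET", collection)
    if verb == "POST":
        # POST creates a new object; the JSON body is sent to the collection.
        return ("POST", collection)
    if verb in ("GET", "DELETE"):
        # GET and DELETE address one named object.
        if name is None:
            raise ValueError(f"{verb} needs a pod name")
        return (verb, f"{collection}/{name}")
    raise ValueError(f"unsupported verb: {verb}")


if __name__ == "__main__":
    for verb, pod in [("GET", "web-0"), ("LIST", None), ("POST", None), ("DELETE", "web-0")]:
        method, path = pod_request(verb, "default", pod)
        print(f"{verb:6} -> {method} {path}")
```

Note that LIST and GET share the same HTTP method; the API Server distinguishes them by whether the path names a single object or a collection, which is why request metrics are broken down by verb rather than by HTTP method.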

Key metrics to monitor

Acting as the gateway between cluster components and pods, the API Server is especially important to monitor. Datadog collects metrics that allow you to quantify the server's workload and its supporting resources, such as the number of requests (broken down by verb), goroutines, and threads. You can also monitor the depth of the registration queue, which tracks queued requests from the Controller or Scheduler and can reveal if the API Server is falling behind in its work. In addition to tracking the total number of server requests, the new integration enables you to monitor for an increase in the number of dropped requests, which is a strong signal of resource saturation.

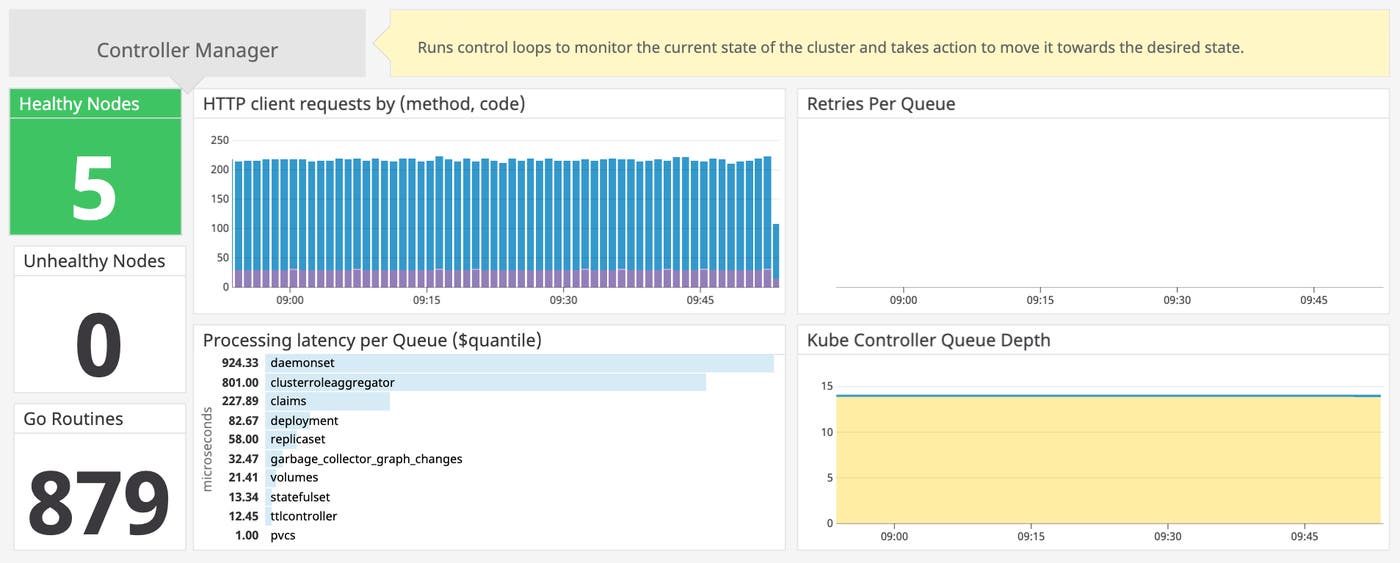
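As a rough illustration of what "requests broken down by verb" means at the metric level, the sketch below sums a request counter by its `verb` label from text in the Prometheus exposition format. It assumes the counter is named `apiserver_request_total` (older Kubernetes releases used a different name), and the sample values are invented for the example rather than taken from a real cluster.

```python
import re
from collections import defaultdict
from typing import Dict

# Invented sample in Prometheus text format; the numbers are illustrative only.
SAMPLE = """
# HELP apiserver_request_total Counter of apiserver requests.
# TYPE apiserver_request_total counter
apiserver_request_total{code="200",resource="pods",verb="GET"} 120
apiserver_request_total{code="200",resource="pods",verb="LIST"} 30
apiserver_request_total{code="404",resource="pods",verb="GET"} 5
apiserver_request_total{code="201",resource="pods",verb="POST"} 12
go_goroutines 87
"""

# Matches lines of the form: name{label="value",...} number
LINE_RE = re.compile(r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)\{(?P<labels>[^}]*)\}\s+(?P<value>\S+)$')
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def requests_by_verb(text: str, metric: str = "apiserver_request_total") -> Dict[str, float]:
    """Sum every sample of `metric` by its `verb` label."""
    totals: Dict[str, float] = defaultdict(float)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = LINE_RE.match(line)
        if match is None or match.group("name") != metric:
            continue  # unlabeled samples and other metrics are ignored
        labels = dict(LABEL_RE.findall(match.group("labels")))
        totals[labels.get("verb", "unknown")] += float(match.group("value"))
    return dict(totals)


if __name__ == "__main__":
    for verb, count in sorted(requests_by_verb(SAMPLE).items()):
        print(verb, count)
```

The regular expressions here handle only simple label values; a production parser would need to cope with escaped quotes and timestamps, which this sketch deliberately leaves out.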

Controller Manager

The Controller Manager runs controllers that continually monitor the current state of the cluster and take action to maintain the desired state. For instance, the node controller monitors the health of all the nodes in the cluster and can automatically evict pods from failing nodes, whereas the replication controller ensures that the number of pods running in the cluster matches the desired count. Monitoring the Controller Manager therefore provides insight into the overall state of the cluster, as well as the performance of the Controller Manager itself.
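The idea of driving current state toward desired state can be sketched as a small reconcile function. This is a simplified, in-memory illustration and not how the Controller Manager is implemented; the `ReplicaState` type and the `pod-N` naming scheme are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReplicaState:
    """Hypothetical in-memory stand-in for a replicated workload."""
    desired: int
    running: List[str] = field(default_factory=list)
    next_id: int = 0  # used to generate unique pod names


def reconcile(state: ReplicaState) -> List[str]:
    """Compare desired and current pod counts and return the actions taken."""
    actions: List[str] = []
    diff = state.desired - len(state.running)
    if diff > 0:
        # Too few pods: create the missing ones.
        for _ in range(diff):
            name = f"pod-{state.next_id}"
            state.next_id += 1
            state.running.append(name)
            actions.append(f"create {name}")
    elif diff < 0:
        # Too many pods: remove the most recently created ones.
        for _ in range(-diff):
            name = state.running.pop()
            actions.append(f"delete {name}")
    return actions


if __name__ == "__main__":
    state = ReplicaState(desired=3)
    print(reconcile(state))  # creates three pods
    print(reconcile(state))  # nothing to do once the states match
    state.desired = 1
    print(reconcile(state))  # scales down by two
```

A real controller runs this kind of comparison continuously and places each pending action on a work queue, which is why queue depth, latency, and retries are useful signals of controller performance.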

Key metrics to monitor

Because the Controller Manager has context about the state of all nodes in the cluster, monitoring the number of healthy versus unhealthy nodes can point to cluster-wide issues or the Controller Manager's failure to correctly handle failing nodes. On the pre-built Controller Manager dashboard in Datadog, you can track the number of HTTP requests from the manager to the API Server to help ensure that the two components are communicating normally. You can also monitor the manager's queues, where each actionable item (such as replication of a pod) is placed before it's carried out. Datadog's Controller Manager dashboard provides metrics on latency per queue, retries per queue, and depth per queue to track the performance of the manager.

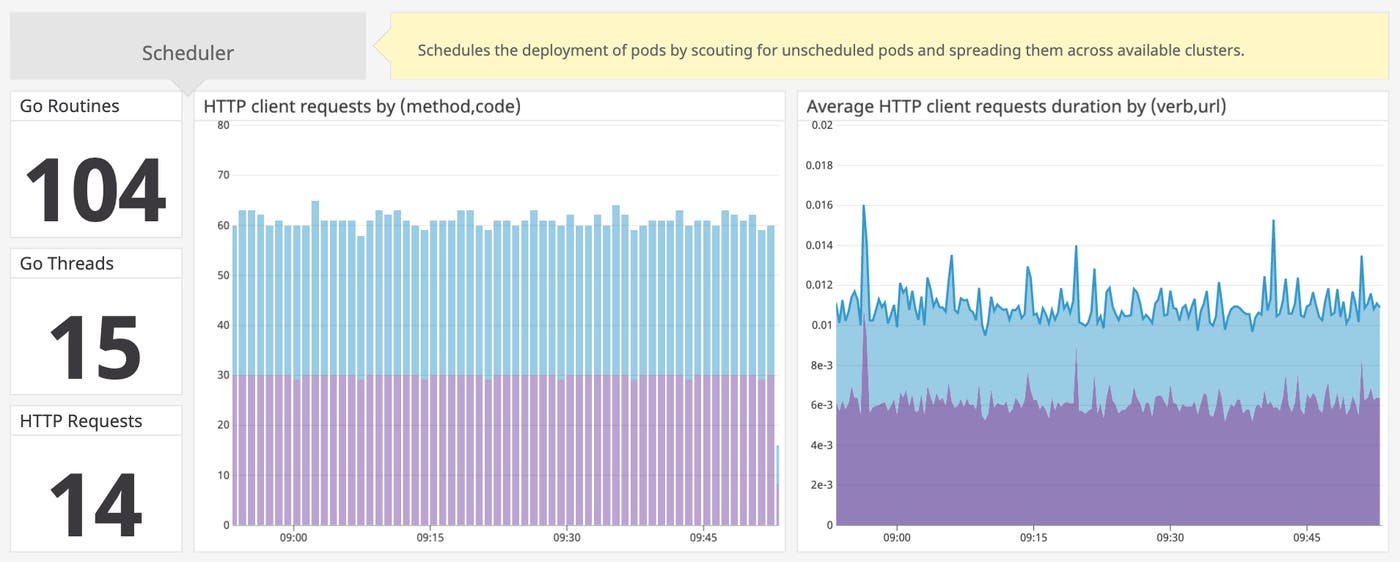

Scheduler

The Kubernetes Scheduler helps control the state of the cluster by communicating directly with the API Server, scouting for unscheduled pods, and then spreading those pods across the available nodes. The Scheduler weighs a number of criteria in placing workloads, including resource availability on the nodes and configured constraints that specify which node types can run certain pods.
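The two kinds of criteria mentioned above, resource availability and placement constraints, can be sketched as a filter step followed by a scoring step. This is a toy model under stated assumptions: the `Node` and `Pod` types are hypothetical, constraints are reduced to exact label matches, and the score simply prefers the node with the most CPU left after placement. The real Scheduler applies many more rules.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Node:
    name: str
    free_cpu_millis: int
    free_memory_mib: int
    labels: Dict[str, str] = field(default_factory=dict)


@dataclass
class Pod:
    name: str
    cpu_millis: int
    memory_mib: int
    node_selector: Dict[str, str] = field(default_factory=dict)


def fits(pod: Pod, node: Node) -> bool:
    """Filter step: the node must have room and match every selector label."""
    has_room = (node.free_cpu_millis >= pod.cpu_millis
                and node.free_memory_mib >= pod.memory_mib)
    matches = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return has_room and matches


def score(pod: Pod, node: Node) -> Tuple[int, int]:
    """Score step: prefer the node with the most CPU, then memory, left over."""
    return (node.free_cpu_millis - pod.cpu_millis,
            node.free_memory_mib - pod.memory_mib)


def schedule(pod: Pod, nodes: List[Node]) -> Optional[str]:
    """Return the chosen node name, or None if the pod cannot be placed."""
    candidates = [n for n in nodes if fits(pod, n)]
    if not candidates:
        return None  # the pod stays pending
    best = max(candidates, key=lambda n: score(pod, n))
    # Reserve the resources so later placements see the updated capacity.
    best.free_cpu_millis -= pod.cpu_millis
    best.free_memory_mib -= pod.memory_mib
    return best.name


if __name__ == "__main__":
    nodes = [
        Node("a", 500, 1024, {"disk": "ssd"}),
        Node("b", 2000, 4096, {"disk": "hdd"}),
        Node("c", 1000, 2048, {"disk": "ssd"}),
    ]
    print(schedule(Pod("web", 400, 512, {"disk": "ssd"}), nodes))
    print(schedule(Pod("huge", 4000, 512), nodes))
```

A pod for which `schedule` returns `None` corresponds to a pod that remains unscheduled, which is the condition that Scheduler monitoring is meant to surface.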

Key metrics to monitor

On Datadog's pre-built Kubernetes Scheduler dashboard, you can visualize a number of key metrics for monitoring the Scheduler, including the number of goroutines, threads, and HTTP requests to and from the API Server. Monitoring the count of goroutines and threads provides a high-level indication of the overall workload of your Scheduler. Metrics on client request rates and duration show in more detail what type of calls the Scheduler is making and how efficiently those calls are being handled. Anomalously high request durations or an excess of non-200 HTTP response codes can alert you that you may need to take action to ensure that your workloads continue to be scheduled properly.

Etcd

Etcd is a key-value store used for cluster coordination and for managing the state of the cluster. It holds the state of all running components and coordinates the proposals needed to maintain consensus and replicate data across the cluster.

Etcd is built on the Raft consensus protocol, which it uses to manage leadership in the cluster. The etcd leader is responsible for managing proposals (requests that go through the Raft protocol) with the other nodes in the cluster. When a leader node restarts or fails, a new leader is chosen from the other members in the cluster.
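Because Raft needs a majority of members to agree before a leader can be elected or a proposal committed, the number of member failures a cluster can survive follows directly from its size. The short sketch below works through that arithmetic; the function names are chosen for this example only.

```python
def quorum(members: int) -> int:
    """Smallest number of members that forms a majority."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def failure_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for size in (1, 3, 4, 5):
        print(f"{size} members: quorum {quorum(size)}, "
              f"tolerates {failure_tolerance(size)} failure(s)")
```

A four-member cluster tolerates the same single failure as a three-member one, which is why etcd clusters are usually sized with an odd number of members.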

To learn more about our etcd integration, visit our etcd blog post.

Key metrics to monitor

As a general rule, every cluster should always have a leader, so the best way to monitor the health of the etcd service is to ensure that each cluster has one. Although not necessarily detrimental, frequent leader changes can also alert you to issues within the cluster. Monitoring the number of pending and failed proposals can alert you to any issues with changes to your data propagating across the cluster.
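These checks can be expressed as a comparison between two successive metric scrapes from an etcd member. The sketch below assumes the metric names that etcd exposes for these signals (`etcd_server_has_leader`, `etcd_server_leader_changes_seen_total`, `etcd_server_proposals_failed_total`, and `etcd_server_proposals_pending`); the thresholds and the `etcd_warnings` helper are hypothetical and would need tuning for a real cluster.

```python
from typing import Dict, List

Snapshot = Dict[str, float]  # metric name -> value from one scrape


def etcd_warnings(previous: Snapshot, current: Snapshot,
                  max_leader_changes: int = 3,
                  max_pending: int = 10) -> List[str]:
    """Return human-readable warnings based on two successive scrapes.

    The default thresholds are illustrative assumptions, not recommendations.
    """
    warnings: List[str] = []

    def increase(name: str) -> float:
        # Counters only grow, so the difference is the activity between scrapes.
        return current.get(name, 0) - previous.get(name, 0)

    # Gauge: 1 if this member currently sees a leader, 0 otherwise.
    if current.get("etcd_server_has_leader", 0) != 1:
        warnings.append("member reports no leader")

    changes = increase("etcd_server_leader_changes_seen_total")
    if changes > max_leader_changes:
        warnings.append(f"{changes:g} leader changes since last scrape")

    failed = increase("etcd_server_proposals_failed_total")
    if failed > 0:
        warnings.append(f"{failed:g} failed proposals since last scrape")

    pending = current.get("etcd_server_proposals_pending", 0)
    if pending > max_pending:
        warnings.append(f"{pending:g} proposals pending")

    return warnings


if __name__ == "__main__":
    before = {"etcd_server_has_leader": 1,
              "etcd_server_leader_changes_seen_total": 2,
              "etcd_server_proposals_failed_total": 0,
              "etcd_server_proposals_pending": 0}
    after = {"etcd_server_has_leader": 0,
             "etcd_server_leader_changes_seen_total": 7,
             "etcd_server_proposals_failed_total": 4,
             "etcd_server_proposals_pending": 25}
    for warning in etcd_warnings(before, after):
        print(warning)
```

In practice the same logic would typically live in monitor definitions rather than in a script, but the comparison of counters across scrapes is the same.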

Etcd relies on a gRPC proxy that acts as a gateway between the client and the etcd server. Monitoring the amount of data sent and received by the gRPC proxy can help you ensure that the downstream and upstream connections are alive and that etcd is communicating properly.

Start monitoring the Control Plane

These new integrations provide detailed insights into the health of your Kubernetes infrastructure, so you can monitor your container workloads alongside all the components of your Kubernetes cluster. The Control Plane integrations are pre-packaged with the Datadog Agent as of version 6.12.

To learn more about these integrations and how to configure the metrics being collected from the Control Plane, consult our docs.

If you’re not yet using Datadog, you can start a free trial to get deeper visibility into your cloud infrastructure, applications, and services.