Anthonin Bonnefoy

At Datadog, we track the life cycle of millions of ephemeral hosts that report telemetry data to our platform. When a host stops emitting data, we eventually need to clean it up to avoid bloating our metadata store.

To detect inactive hosts, the Datadog team that manages the host metadata store introduced a new upsert to track the last time a host was seen. We expected this new query to have minimal impact. Each host would be updated at most once a day, so even at 25,000 upserts per second, most queries should have been no-ops.

But when we rolled out the new query, disk writes doubled and Write-Ahead Logging (WAL) syncs quadrupled. We discovered that even when an upsert doesn’t change any values, it still locks the conflicting row, which is recorded in the WAL. Given that a Postgres cluster can only have a single writer, there’s a hard limit to how many writes it can handle. The increase in disk writes introduced by the new query was consuming too much of this limited budget and had to be fixed.

In this post, we’ll walk through how we diagnosed the unexpected overhead by inspecting Postgres’s WAL and how we rewrote the query to eliminate the cost without sacrificing correctness.

Designing a low-overhead upsert in Postgres

When the Datadog Agent runs, it collects host metadata—such as host_id, availability zone, and tags—and sends it to Datadog, where we store it in a Postgres database. Because hosts have a limited lifetime, they eventually terminate and stop emitting metrics. At that point, we can safely delete them from the database to keep it from growing indefinitely.

However, we don’t have a specific signal when a host has been terminated, other than the fact that it has stopped sending data. We decided that if a host hasn’t emitted metrics for 7 days, we can safely delete it. To support that, we needed a way to track the last time a host was seen. Updating a row every time we see a host is prohibitively expensive: In our largest data centers, this means more than 25,000 updates per second.

We needed to design our table and queries to avoid overloading our database, with three constraints in mind:

- Reduce update cost. Postgres uses MultiVersion Concurrency Control (MVCC), so every update creates a new row version. Adding a

last_ingestedcolumn to the existing host table would copy all host metadata on each update. Moving this field to a dedicated table will reduce the amount of data written per update. - Minimize write amplification by relying on Heap-Only Tuples (HOT) updates. By default, updates add entries to all indexes on the table. If an update does not modify indexed columns and there is enough free space on the page, Postgres can apply a HOT update, avoiding index writes and making updates significantly cheaper.

- Accept coarse-grained freshness. We only need to know whether a host has emitted data within the past 7 days, so 1-day granularity is sufficient.

With those constraints, we created the following table:

CREATE TABLE host_last_ingested ( host_id BIGINT PRIMARY KEY, last_ingested TIMESTAMP WITH TIME ZONE NOT NULL default now()) WITH (fillfactor=80);We didn’t create an index on the last_ingested column so that updates only modify unindexed columns, allowing Postgres to apply HOT updates. We also set the table’s fillfactor to 80%. This means inserts will only fill 80% of an 8 kB page, leaving free space on each page so that subsequent updates can stay on the same page and qualify for HOT updates.

For the query, we used this upsert:

INSERT INTO host_last_ingested as t VALUES($1, now()) ON CONFLICT (host_id) DO UPDATE SET last_ingested = EXCLUDED.last_ingested WHERE t.last_ingested < EXCLUDED.last_ingested - '1 day'::interval;This is a standard ON CONFLICT DO UPDATE upsert. If the host doesn’t exist, we insert it. Otherwise, we run the update. The WHERE condition limits updates to a host to once a day.

If we run the query twice on a nonexistent host:

<query>INSERT 0 1<query>INSERT 0 0The INSERT command outputs the number of processed_rows in the second integer (the first one is always 0). The first query inserts 1 row, and the second reports 0 processed rows because the WHERE condition prevents the update from being applied.

With this query, we expected that most upserts would behave like no-ops. But that assumption turned out to be false.

What we saw when we rolled out the upsert: Writes without updates

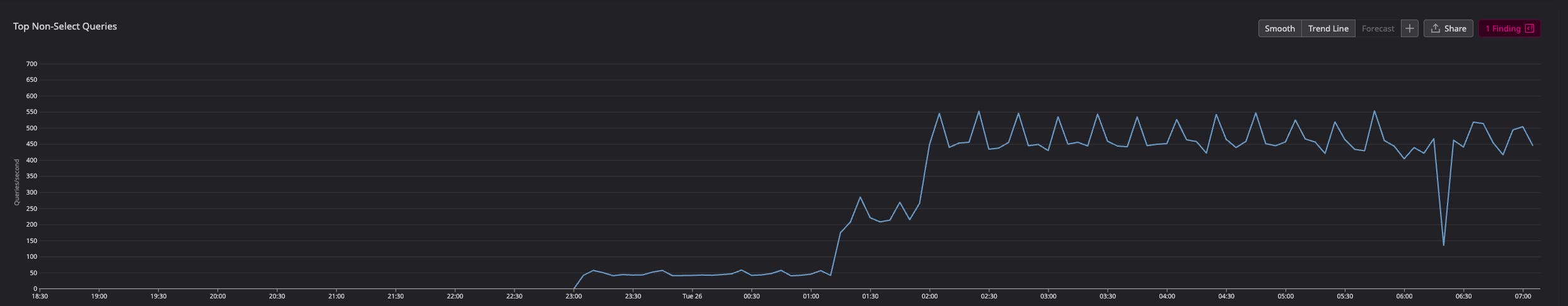

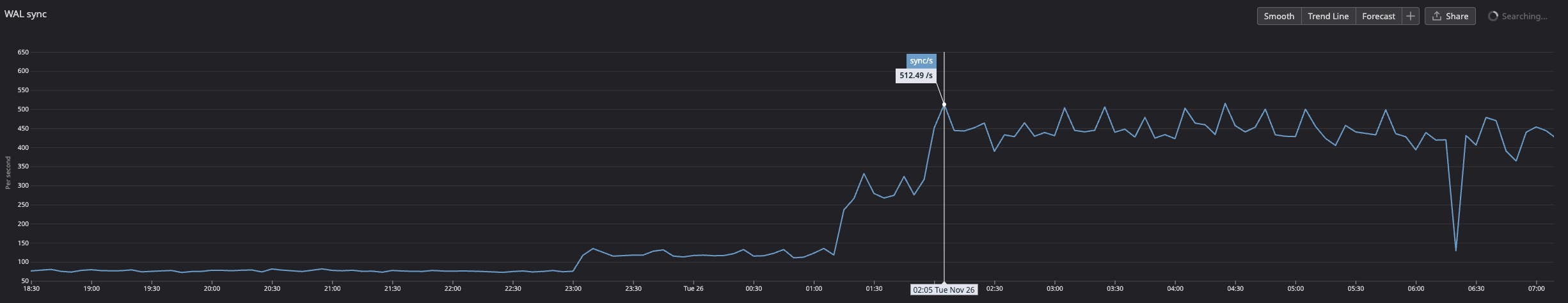

The new upsert was gradually rolled out on our smaller data centers until we reached about 500 upserts per second:

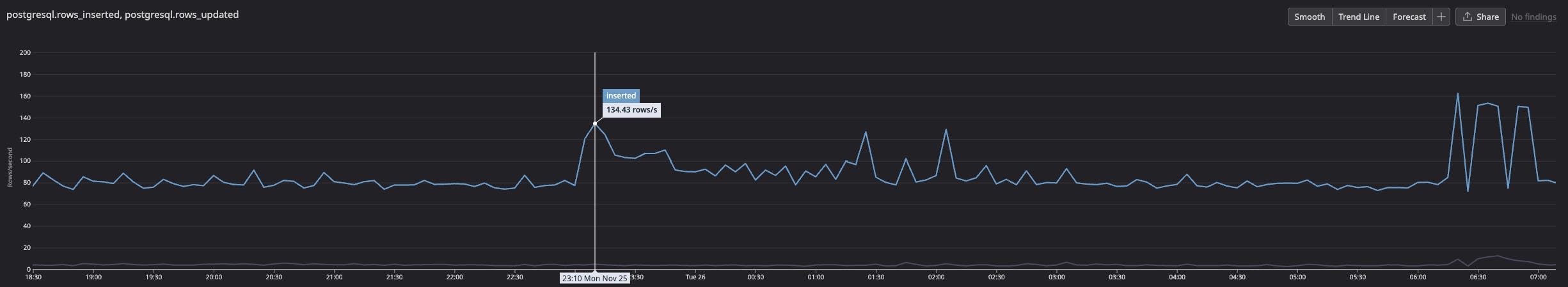

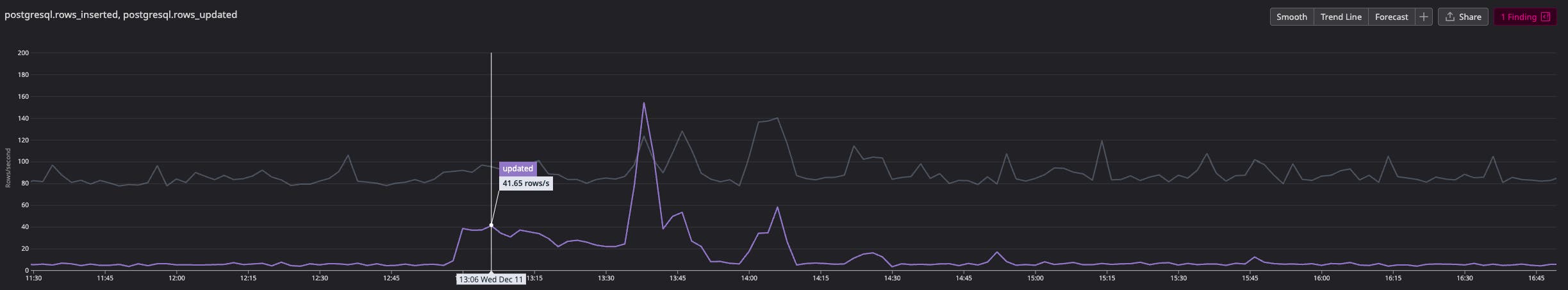

We saw an initial spike in insertions and no updated rows, which matched our expectation that most upserts would be no-ops:

However, the number of write IOPS more than doubled, even though the update rate remained flat:

Thankfully, it was a small data center and 500 IOPS was still within manageable limits. More importantly, WAL syncs increased by roughly the same amount:

In Postgres, all changes—such as inserting rows or modifying indexes—are recorded in the WAL. When a transaction commits, the WAL is flushed to disk to ensure durability, while table and index pages are flushed later by the background writer or during checkpoints. If a crash occurs, Postgres can replay changes from the WAL to restore consistency. The WAL sync metric reports the number of times these logs are flushed to disk.

Since the only change we’d introduced was the new query, it was likely the source of the WAL syncs, even though the rows weren’t actually being updated. To understand why, we needed to look deeper into what the upsert was writing to the WAL.

Digging into Postgres WAL to explain the IOPS spike

Starting in Postgres 15, the pg_walinspect extension provides debugging functions to inspect the WAL. Since our metrics were pointing squarely at WAL activity, this was the most direct way to see what the upsert was actually doing. We installed the extension with:

CREATE EXTENSION pg_walinspect;Once installed, the extension exposes the pg_get_wal_records_info function, which returns all WAL records between two log sequence numbers (LSNs). To make the extension easier to work with, we added the following shortcuts to our ~/.psqlrc:

\set wal_lsn_start 'SELECT pg_current_wal_lsn() \\gset'\set wal_records 'SELECT * FROM pg_get_wal_records_info(:''pg_current_wal_lsn'', ''FFFFFFFF/FFFFFFFF'');':wal_lsn_startsaves the current LSN to thepg_current_wal_lsnvariable.:wal_recordsdisplays all WAL records between the saved LSN and the WAL end, usingFFFFFFFF/FFFFFFFFas the upper bound (the maximum valid LSN value).

With those shortcuts available, we could reproduce the upsert and check whether it was generating WAL records:

:wal_lsn_startINSERT INTO host_last_ingested as t VALUES(1, now()) ON CONFLICT (host_id) DO UPDATE SET last_ingested = EXCLUDED.last_ingested WHERE t.last_ingested < EXCLUDED.last_ingested - '1 day'::interval;INSERT 0 0:wal_recordsThe query didn’t update any rows, but pg_walinspect still showed WAL activity:

-[ RECORD 1 ]----+-----------------------------------------------------------------start_lsn | 0/02469D30end_lsn | 0/02469D68prev_lsn | 0/02469CF8xid | 818resource_manager | Heaprecord_type | LOCKrecord_length | 54main_data_length | 8fpi_length | 0description | xmax: 818, off: 2, infobits: [LOCK_ONLY, EXCL_LOCK], flags: 0x00block_ref | blkref #0: rel 1663/5/16426 fork main blk 0-[ RECORD 2 ]----+-----------------------------------------------------------------start_lsn | 0/02469D68end_lsn | 0/02469D98prev_lsn | 0/02469D30xid | 818resource_manager | Transactionrecord_type | COMMITrecord_length | 46main_data_length | 20fpi_length | 0description | 2025-10-16 15:33:10.406748+00block_ref | <null>Two WAL records were written:

- A

Heap LOCKrecord indicates a row lock on the relation16426, which happens to be our newhost_last_ingestedtable. - A

COMMIT recordis written because a transaction ID (xid) was assigned to the transaction, and it must either be committed or rolled back.

To confirm what was generating the LOCK record, we attached a debugger to the Postgres backend process (lldb -p $backend_pid) and added a breakpoint on WALInsertLockAcquire. The backtrace (abridged) showed:

#0: postgres`WALInsertLockAcquire at xlog.c:1391:6#1: postgres`XLogInsertRecord(...) at xlog.c:823:3#2: postgres`XLogInsert(...) at xloginsert.c:524:12#3: postgres`heap_lock_tuple(...) at heapam.c:5244:12#4: postgres`heapam_tuple_lock(...) at heapam_handler.c:380:11#5: postgres`table_tuple_lock(...) at tableam.h:1554:9#6: postgres`ExecOnConflictUpdate(...) at nodeModifyTable.c:2749:9#7: postgres`ExecInsert(...) at nodeModifyTable.c:1152:10Looking at the code around the backtrace, we could trace what happens:

- The upsert checks for the existence of the tuple.

- The tuple exists, so ExecOnConflictUpdate runs and locks the row.

- Locking the row modifies its metadata to include the locking transaction ID, assigning a transaction ID (

xid). - A LOCK record is written to the WAL.

- The

WHEREcondition evaluates to false, so the row is not updated. - Because a transaction ID was assigned, the transaction must be committed

- A

COMMITrecord is written, and the WAL is flushed to disk.

Postgres’s INSERT documentation explicitly confirms this lock behavior for ON CONFLICT DO UPDATE:

Only rows for which this expression returns true will be updated, although all rows will be locked when the

ON CONFLICT DO UPDATEaction is taken. Note thatconditionis evaluated last, after a conflict has been identified as a candidate to update.

This explains the surge in IOPS and WAL syncs we saw. Each upsert triggers a WAL sync, and each sync writes an 8 kB page to disk. With 500 upserts per second, we had 500 WAL syncs per second using 500 IOPS.

There’s only a limited amount of sync a disk can handle. We used pg_test_fsync to estimate how many WAL syncs per second the hardware could sustain. On a gp3 EBS disk, we reach around 1,000 syncs per second using 8 kB writes. Once that limit is reached, Postgres starts batching commits, which introduces latency.

This particular cluster handled 500 upserts per second just fine. But our biggest clusters will add 25,000 upserts per second. That would overwhelm the sync capacity for a query that was expected to behave like a no-op.

We needed to change our approach to avoid this overhead.

Rewriting the query to avoid upsert overhead

We couldn’t rely on ON CONFLICT DO UPDATE, but we still needed to track each host’s last ingested time. The application also has strict latency requirements, so everything needs to happen in a single query. To do that, we emulated an upsert by using a data-modifying Common Table Expression (CTE).

Here’s how it works:

- The CTE attempts to insert the row using

ON CONFLICT DO NOTHING, which avoids locking existing rows. - If the insertion succeeds, the CTE returns the inserted row.

- If no insertion occurs, the CTE returns nothing.

- The outer

UPDATEuses the presence (or absence) of a returned row to decide whether to run.

WITH insert_attempt AS ( -- Try to insert INSERT INTO host_last_ingested (host_id) -- Values to insert VALUES (:host_id) -- Only try to insert if row doesn't already exist ON CONFLICT DO NOTHING RETURNING *)-- Do the updateUPDATE host_last_ingested SET last_ingested=now() WHERE host_id=:host_id -- Only modify if last_ingested is older than 1 day AND last_ingested < now() - '1 day'::interval -- And only if no insertion was done AND NOT EXISTS (SELECT * FROM insert_attempt);Compared to ON CONFLICT DO UPDATE, this version introduces potential race conditions. For example:

- Tx1: The insert attempt fails because the row already exists.

- Tx2: Deletes the row.

- Tx1: Attempts to update the row that was deleted.

This happens because we’re not locking the row. This trade-off is acceptable for our use case: Host tracking can be imprecise, and the likelihood of seeing a host again after 7 days of inactivity is low.

We then checked the WAL records generated by this query to confirm that it behaved as expected.

WAL record behavior

If the row doesn’t exist, we see the following records:

| xid | resource_manager | record_type |

|---|---|---|

| 798 | Heap | INSERT+INIT |

| 798 | Btree | NEWROOT |

| 798 | Btree | INSERT_LEAF |

| 798 | Heap | HEAP_CONFIRM |

| 798 | Transaction | COMMIT |

Those WAL records add a new row to the table (Heap INSERT+INIT) and a new entry in the index (Btree NEWROOT + INSERT_LEAF). Because the insert uses ON CONFLICT DO NOTHING, it is performed speculatively and needs to be confirmed via the HEAP_CONFIRM WAL record.

If the row already exists and last_ingested is older than 1 day, we see the following records:

| xid | resource_manager | record_type |

|---|---|---|

| 800 | Heap | HOT_UPDATE |

| 800 | Transaction | COMMIT |

In this case, the update qualifies as a HOT update and does not require any index changes.

And if the row exists and last_ingested age is less than 1 day, no WAL records are generated.

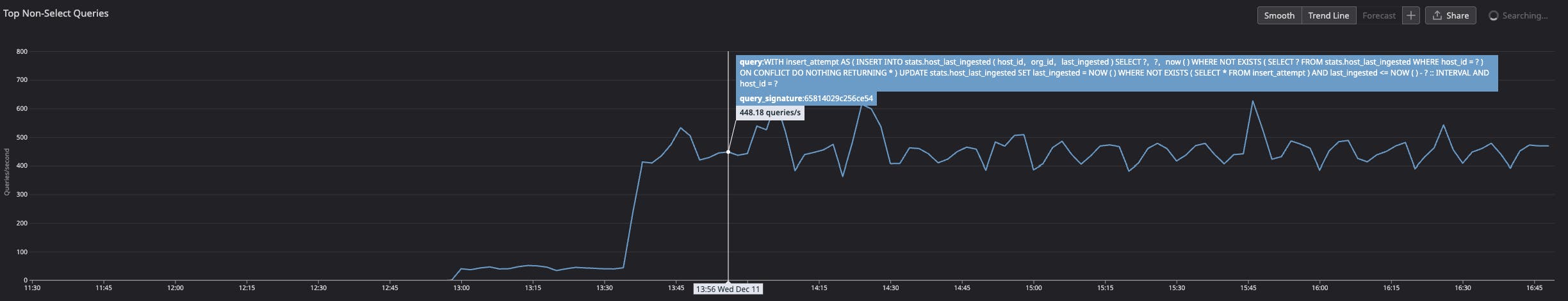

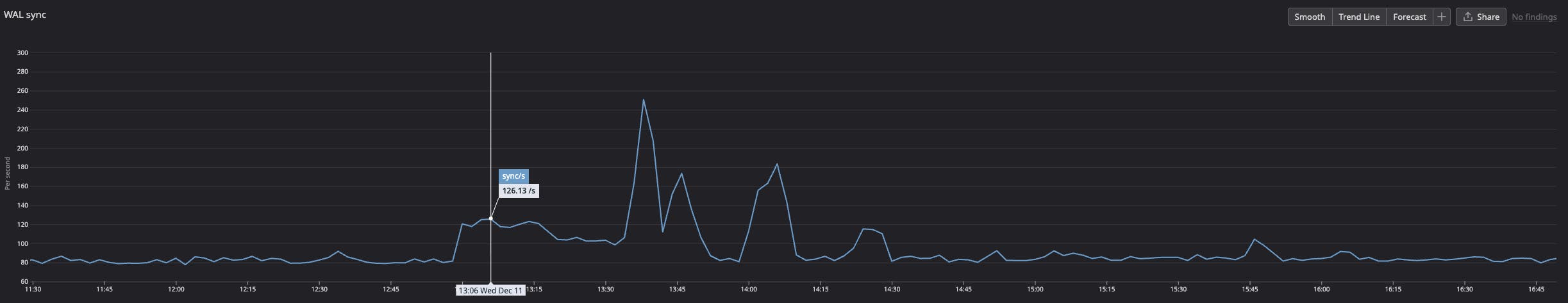

After confirming that the new query avoided unnecessary WAL records, we rolled it out again to our small data center:

We saw the expected spike in update queries during the rollout:

This time, the increase in WAL syncs closely matched the actual row updates. When no rows were modified, the WAL sync rate remained steady:

Lessons learned: WAL and query optimization

By using pg_walinspect, we were able to pinpoint the source of our unexpected overhead: During conflict resolution, an upsert locks the row, even if it doesn’t update anything. That lock modifies the row’s metadata with a transaction ID. Once a transaction ID is allocated, the transaction must be committed—even if no row was updated—triggering a WAL sync.

Thanks to this information, we:

- Rewrote the query to avoid the implicit row lock

- Validated the new behavior by inspecting the resulting WAL records

- Successfully removed the unnecessary overhead while preserving correctness

Postgres’s WAL tooling has broader applications, too. For example, pg_get_wal_stats provides insight into the types and sizes of WAL records, which can help quantify the cost of index maintenance or the impact of full page images.

When you’re operating databases at scale, even “no-ops” queries can turn into performance surprises. Tools like pg_walinspect help make those surprises visible and fixable.

If you enjoy digging into systems like this to uncover hidden performance costs and building scalable solutions to fix them, we’re hiring! Explore open roles on our engineering team.