AJ Stuyvenberg

AJ Stuyvenberg is a Staff Engineer at Datadog and an AWS Serverless Hero. A version of this post was originally published on his blog.

In AWS Lambda, a cold start occurs when a function is invoked and an idle, initialized sandbox is not ready to receive the request. Features like Provisioned Concurrency and SnapStart are designed to reduce cold starts by pre-initializing execution environments. But did you know that AWS may warm your Lambda functions to reduce the impact and frequency of cold starts, even if you’re not using features like Provisioned Concurrency?

This is known as proactive initialization of Lambda functions—but until recently, it hadn’t been documented anywhere. In this post, I’ll share the backstory of how I worked with the AWS Lambda service team to document this little-known feature. We’ll also explore how proactive initialization works, how it differs from a cold start, and how frequently it occurs. But first, I’ll share a quick summary of this post.

TL;DR

AWS Lambda occasionally pre-initializes execution environments to reduce the number of cold start invocations.

This does NOT mean you’ll never have a cold start.

The ratio of true cold start initializations to proactive initializations varies depending on many factors, but you can clearly observe it.

Depending on your workload and latency tolerance, you may need Provisioned Concurrency.

Proactive initialization, as defined in the docs

In March 2023, AWS updated the Lambda documentation to include an interesting new detail:

“For functions using unreserved (on-demand) concurrency, Lambda may proactively initialize a function instance, even if there’s no invocation. When this happens, you can observe an unexpected time gap between your function’s initialization and invocation phases. This gap can appear similar to what you would observe when using provisioned concurrency.”

This excerpt is buried in the docs, and it indicates something not widely known about AWS Lambda: AWS may warm your functions to reduce the impact and frequency of cold starts, even when you’re using on-demand concurrency.

Last week, they clarified this with further details:

“For functions using unreserved (on-demand) concurrency, Lambda occasionally pre-initializes execution environments to reduce the number of cold start invocations. For example, Lambda might initialize a new execution environment to replace an execution environment that is about to be shut down. If a pre-initialized execution environment becomes available while Lambda is initializing a new execution environment to process an invocation, Lambda can use the pre-initialized execution environment.”

This update is no accident—I spent several months working closely with the AWS Lambda service team to make it happen.

The rest of this post will walk through everything you need to know about this feature—and how I learned about it in the first place.

Tracing proactive initialization

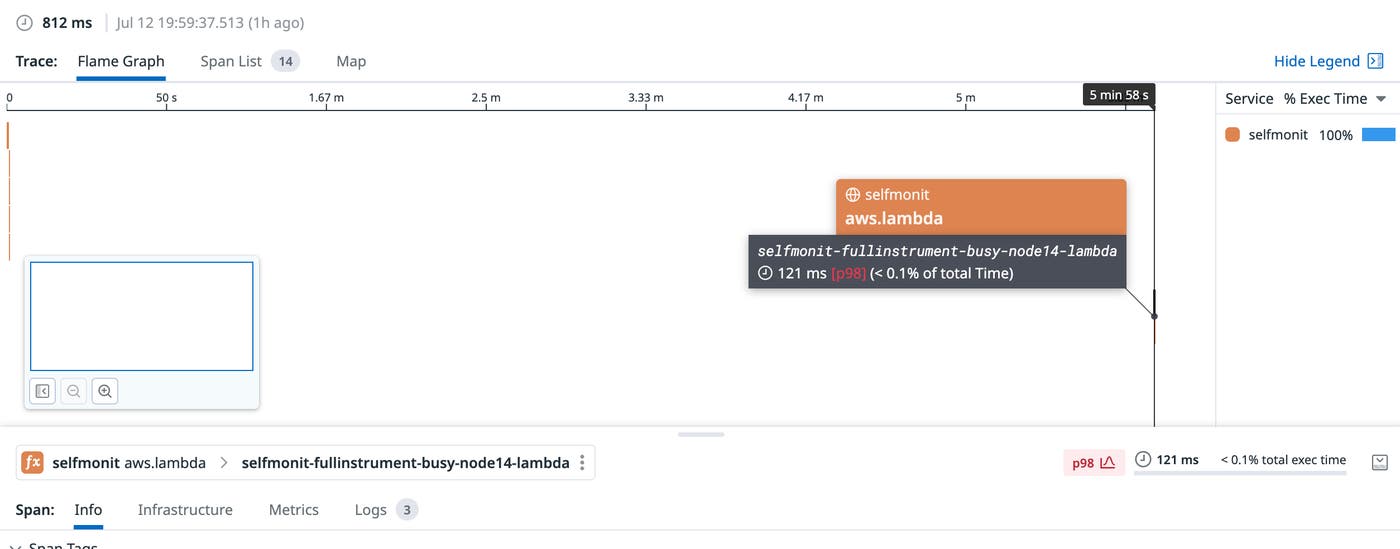

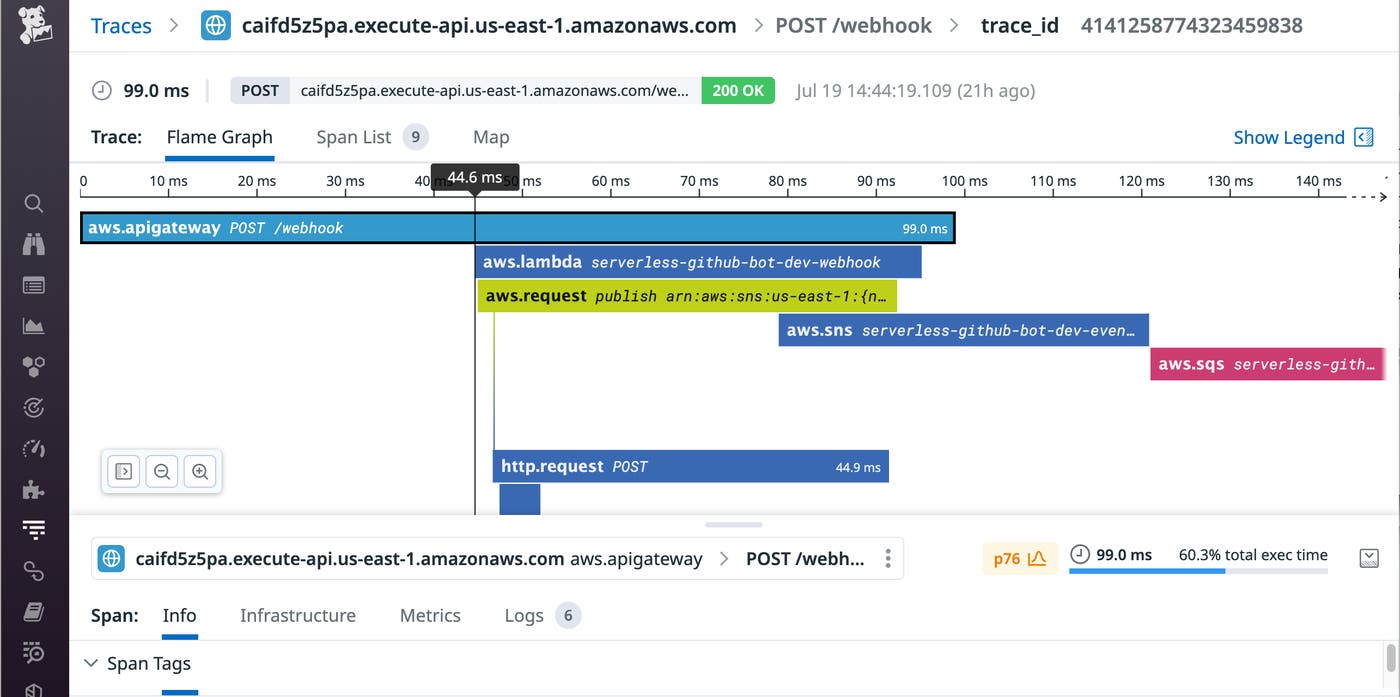

This adventure began when I noticed what appeared to be a bug in a distributed trace. The trace correctly measured the Lambda initialization phase, but it appeared to show the first invocation occurring several minutes after initialization. This can happen with SnapStart or Provisioned Concurrency, but this function wasn’t using either of these capabilities and was otherwise entirely unremarkable.

Here’s what the flame graph looked like:

We can see a massive gap between function initialization and invocation. In this case, the invocation request wasn’t even made by the client until ~12 seconds after the sandbox was warmed up.

We’ve also observed cases where initialization occurs several minutes before the first invocation. In this case, the gap was nearly six minutes:

After much discussion with the AWS Lambda support team, I learned that I was observing what is known as a proactively initialized Lambda sandbox. It’s difficult to discuss proactive initialization without first defining a cold start, so let’s start there.

What is a cold start?

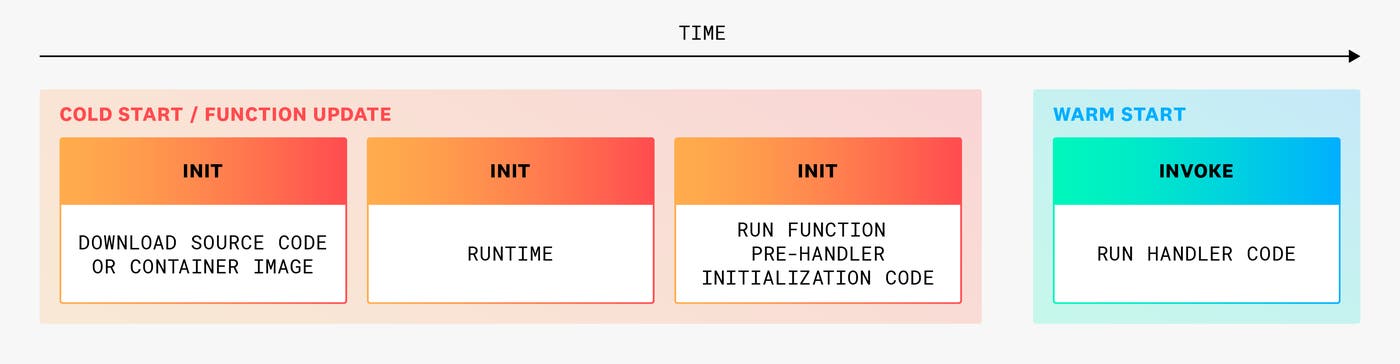

AWS Lambda defines a cold start as the time it takes to download your application code and start the application runtime.

When a function invocation experiences a cold start, users see added latency ranging from about 100 ms to several seconds, and developers observe an Init Duration reported in the CloudWatch logs for the invocation.
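To make the Init Duration check concrete, here is a minimal sketch that pulls the value out of a REPORT log line. It assumes Lambda's default plain-text log format. The sample lines are illustrative rather than captured from a real function, and the helper name `init_duration_ms` is hypothetical.

```python
import re
from typing import Optional

# Matches the "Init Duration: <number> ms" field of a REPORT line.
_INIT_DURATION = re.compile(r"Init Duration: ([\d.]+) ms")


def init_duration_ms(report_line: str) -> Optional[float]:
    """Return the Init Duration in milliseconds, or None if the field is absent."""
    match = _INIT_DURATION.search(report_line)
    return float(match.group(1)) if match else None


# Illustrative lines, not captured from a real function.
cold = ("REPORT RequestId: example-1 Duration: 12.34 ms Billed Duration: 13 ms "
        "Memory Size: 128 MB Max Memory Used: 60 MB Init Duration: 187.50 ms")
warm = ("REPORT RequestId: example-2 Duration: 2.10 ms Billed Duration: 3 ms "
        "Memory Size: 128 MB Max Memory Used: 60 MB")

print(init_duration_ms(cold))
print(init_duration_ms(warm))
```

A line without the field returns `None`, which is how this sketch distinguishes an invocation served by an already-warm sandbox.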

Now that we’ve defined cold starts, let’s expand this to understand the definition of proactive initialization.

What is proactive initialization?

Proactive initialization occurs when a Lambda function sandbox is initialized without a pending Lambda invocation. As a developer, this is desirable because each proactively initialized sandbox means one fewer cold start, which would otherwise lead to a painful user experience. For a user of the application powered by Lambda, it’s as if the cold start never happened.

When a function is proactively initialized, the user making the first request to the sandbox does not experience a cold start (similar to Provisioned Concurrency, but for free).

Proactive initialization serves the interests of both the team running AWS Lambda and developers running applications on Lambda. From an economic perspective, we know that AWS Lambda wants to run as many functions on the same server as possible (yes, serverless has servers…). We also know that developers want their cold starts to be as infrequent and fast as possible.

If we consider the fact that cold starts absorb valuable CPU time in a shared, multi-tenant system (time that is currently not billed), it’s clear that minimizing this time is mutually beneficial for AWS and its customers.

Now let’s consider how this applies specifically to AWS Lambda. Because Lambda is a distributed service, Worker fleets need to be redeployed, scaled out, and scaled in, and they must respond to failures in the underlying hardware. After all, everything fails all the time.

This means that even with steady-state throughput, Lambda needs to rotate function sandboxes for users over the course of hours or days. AWS does not publish minimum or maximum lease durations for function sandboxes, but in practice, I’ve observed ~7 minutes on the low side and several hours on the high side.

The service also needs to run efficiently. AWS Lambda is a multi-tenant system, so creating a heterogeneous workload is key to load balancing the service at scale. In distributed systems parlance, this is known as bin packing (aka shoving as much as possible into the same bucket). The less time AWS spends on initializing functions that it knows will serve invocations, the better—for everyone.

When does AWS proactively initialize Lambda functions?

There are two logical conditions which can lead to proactive initialization: deployments and eager assignments. We’ll cover both cases in more detail below.

Proactive initialization for deployments

First, let’s talk about deployments. Suppose you’re working with a function that, at steady state, experiences 100 concurrent invocations. When you deploy a change to your function (or function configuration), AWS can reasonably guess that you’ll continue to invoke that same function 100 times concurrently after the deployment finishes. Instead of waiting for each invocation to trigger a cold start, AWS will automatically reprovision (roughly) 100 sandboxes to absorb that load after the deployment finishes. Some users will still experience the full cold start duration, but others won’t.

This can similarly occur when Lambda needs to rotate or roll out new hosts into the Worker fleet.

These aren’t novel optimizations in the realm of distributed systems, but this is the first time AWS has confirmed they make these optimizations.

Proactive initialization for eager assignments

In certain cases, proactive initialization is a consequence of natural traffic patterns in your application. More specifically, an internal system (known as the AWS Lambda Placement Service) will assign pending Lambda invocation requests to sandboxes as they become available.

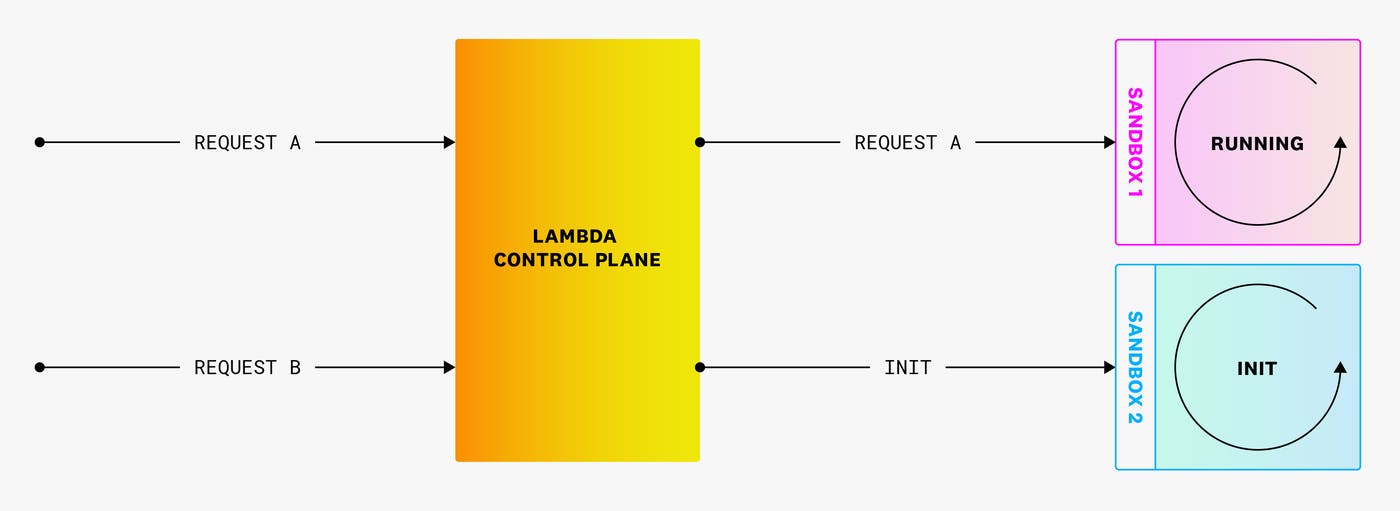

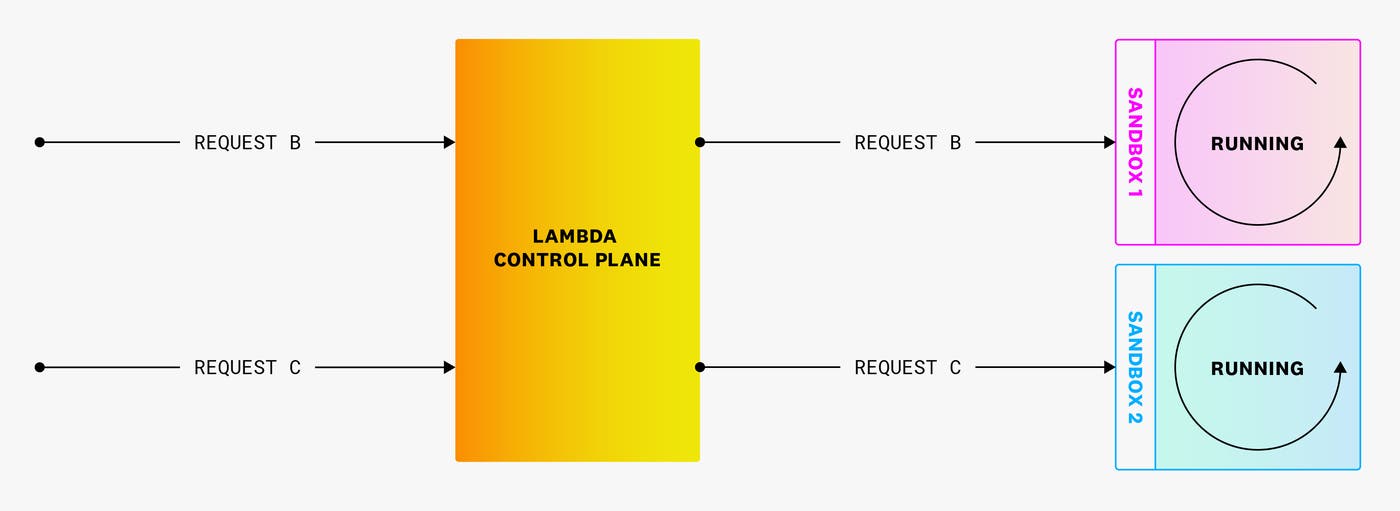

Here’s how it works. Consider a Lambda function that is currently processing a request (request A in the following diagram). In this case, only one sandbox is running. When a new request (request B) arrives, AWS’s Lambda Control Plane checks whether any warm sandboxes are available to run it. If none are available, the Control Plane initializes a new sandbox, as shown in the following diagram.

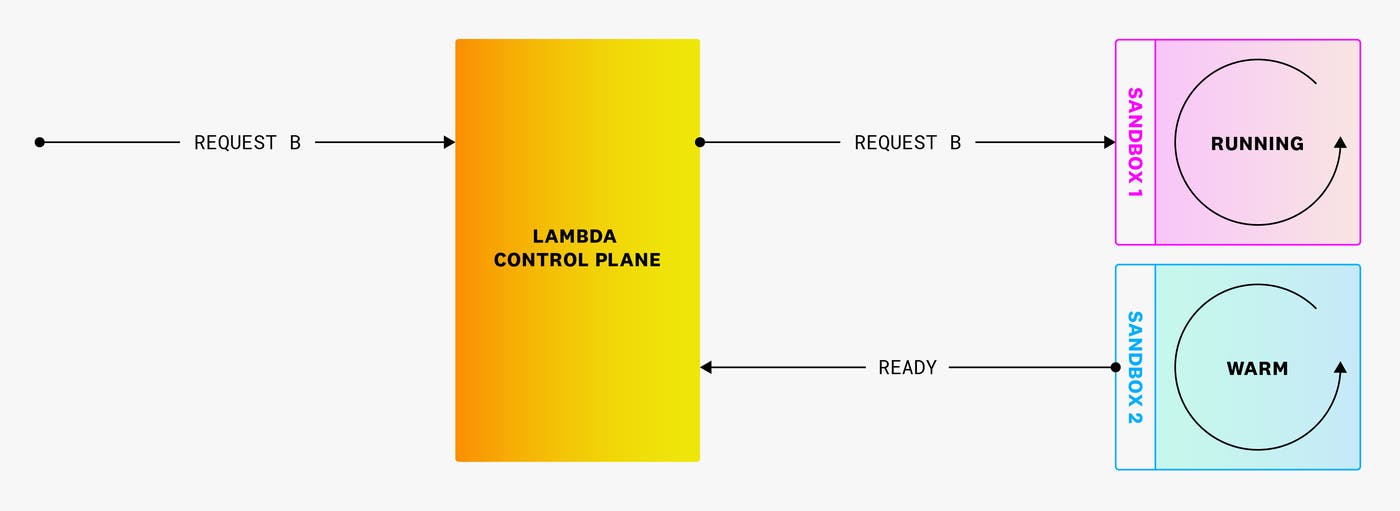

However, it’s possible that during this time, the warm sandbox completes request A and is ready to receive a new one. In this case, Lambda will assign request B to this newly available, warm sandbox.

This means that the new sandbox that was just created doesn’t have a request to serve. When this happens, AWS may still keep it warm, so it can serve new requests. This is known as proactive initialization.

When a new request (request C) arrives, it can be routed to this warm sandbox without delay, so the user does not need to wait for the sandbox to warm up.

In this example, Request B did spend some time waiting for a sandbox (but less than the full duration of a cold start). This latency is not reflected in the duration metric, which is why it’s important to monitor the end-to-end latency of any synchronous request through the calling service (like API Gateway). In the following screenshot, you can see 45 ms of latency between API Gateway and Lambda.

Request duration plays a factor in determining whether users will experience a full cold start duration or less latency (due to proactive initialization). In a normal cold start, a user must wait for the sandbox to be warm before the request can be served.

With the new eager-assignment algorithm, there is now a race between an existing sandbox finishing its current request and a new sandbox finishing its initialization.

This means that if request durations are typically very long, users will see more cold starts, as initializations are shorter than the median request duration. If requests are very short, but initializations are very long, then users will see fewer cold starts.
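This race can be expressed as a toy model. The sketch below is built only on the description above and is not Lambda's actual placement logic. The function name, parameters, and result fields are hypothetical, the timings are made up for illustration, and it assumes a single busy sandbox plus a new sandbox that starts initializing the moment request B arrives.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    wait_ms: float            # how long request B waits before it starts running
    full_cold_start: bool     # True if B waited for the whole initialization
    spare_warm_sandbox: bool  # True if the new sandbox ends up initialized but idle


def eager_assignment(remaining_request_ms: float, init_ms: float) -> Outcome:
    """Toy model: request B is served by whichever sandbox becomes ready first.

    remaining_request_ms: time until the busy sandbox finishes request A.
    init_ms: time the new sandbox needs to initialize.
    """
    if remaining_request_ms < init_ms:
        # The existing sandbox frees up first, so B runs there and the
        # new sandbox finishes initializing with no request to serve.
        return Outcome(remaining_request_ms, False, True)
    # Initialization wins the race, so B pays the full cold start.
    return Outcome(init_ms, True, False)


# Short request, slow initialization: B waits briefly, one warm sandbox is left over.
print(eager_assignment(remaining_request_ms=40, init_ms=800))
# Long request, fast initialization: B waits for the full initialization.
print(eager_assignment(remaining_request_ms=5000, init_ms=300))
```

In the first call the leftover sandbox is the proactively initialized one that a later request C could use. In the second, no spare sandbox exists because request B consumed the new one.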

Detecting proactive initializations

Now that we understand how proactive initialization works, it’s natural to wonder how often it actually occurs in the real world. To find the answer to this question, we can leverage the fact that AWS Lambda functions must initialize within 10 seconds (otherwise, the Lambda runtime is re-initialized from scratch). Using this fact, we can safely infer that a Lambda sandbox is proactively initialized when both of the following are true:

A function’s first invocation was processed more than 10 seconds after the sandbox was first initialized.

A sandbox is processing its first invocation.

Both of these can easily be tested. Here’s the code for Node.js:

    // Runs once per sandbox, during the init phase.
    const coldStartSystemTime = new Date()
    let functionDidColdStart = true

    export async function handler(event, context) {
      if (functionDidColdStart) {
        // First invocation in this sandbox: measure the gap since init.
        const handlerWrappedTime = new Date()
        const proactiveInitialization = handlerWrappedTime - coldStartSystemTime > 10000
        // Log as JSON so CloudWatch Logs Insights can discover the field.
        console.log(JSON.stringify({ proactiveInitialization }))
        functionDidColdStart = false
      }
      return {
        statusCode: 200,
        body: JSON.stringify({ success: true })
      }
    }

And here’s the code for Python:

    import json
    import time

    # Runs once per sandbox, during the init phase (milliseconds since epoch).
    init_time = time.time_ns() // 1_000_000
    cold_start = True

    def hello(event, context):
        global cold_start
        if cold_start:
            # First invocation in this sandbox: measure the gap since init.
            now = time.time_ns() // 1_000_000
            cold_start = False
            proactive_initialization = (now - init_time) > 10_000
            # Log as JSON so CloudWatch Logs Insights can discover the field.
            print(json.dumps({"proactiveInitialization": proactive_initialization}))

        body = {
            "message": "Go Serverless v1.0! Your function executed successfully!",
            "input": event
        }

        response = {
            "statusCode": 200,
            "body": json.dumps(body)
        }

        return response

How often does AWS proactively initialize Lambda functions?

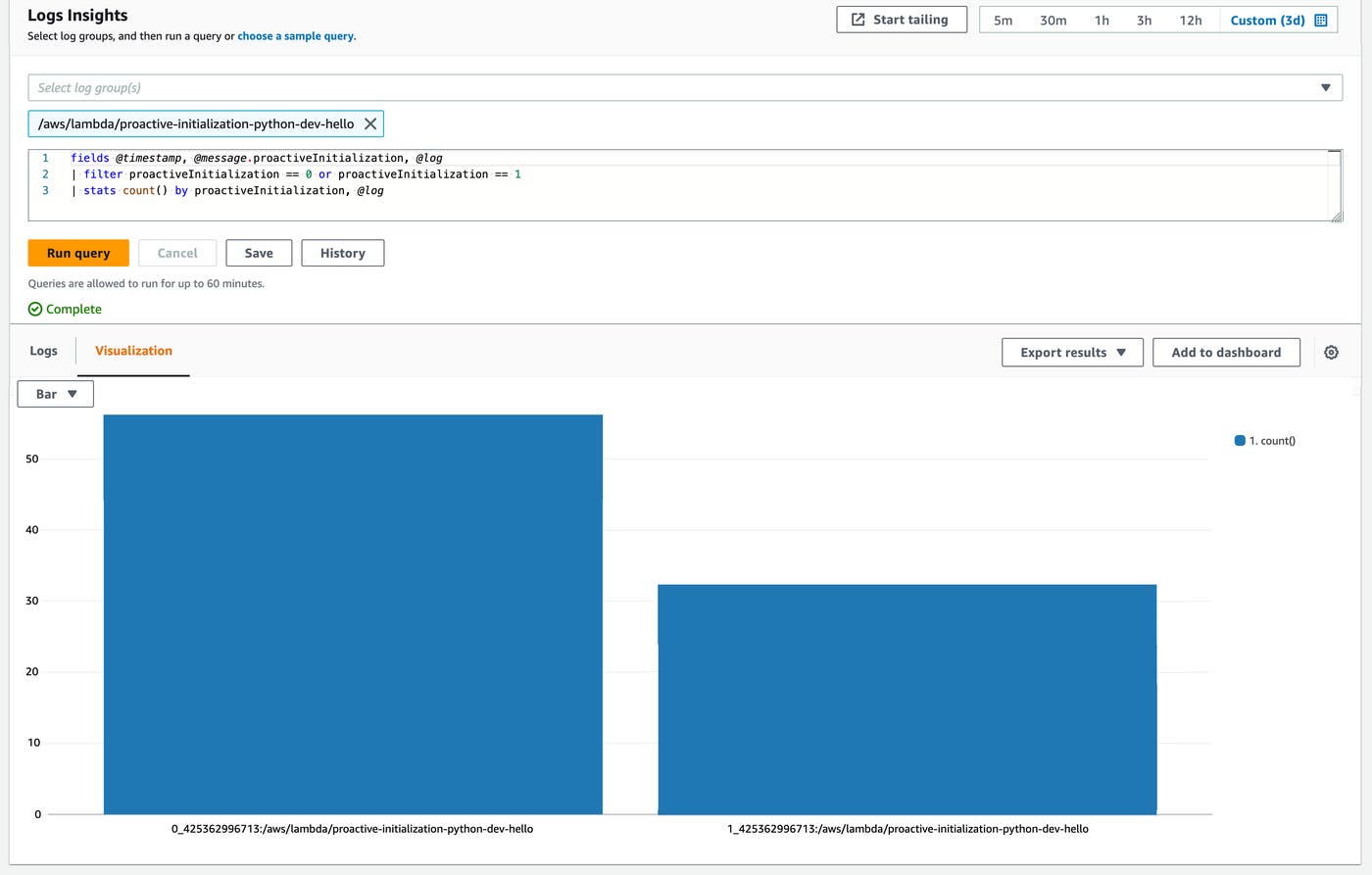

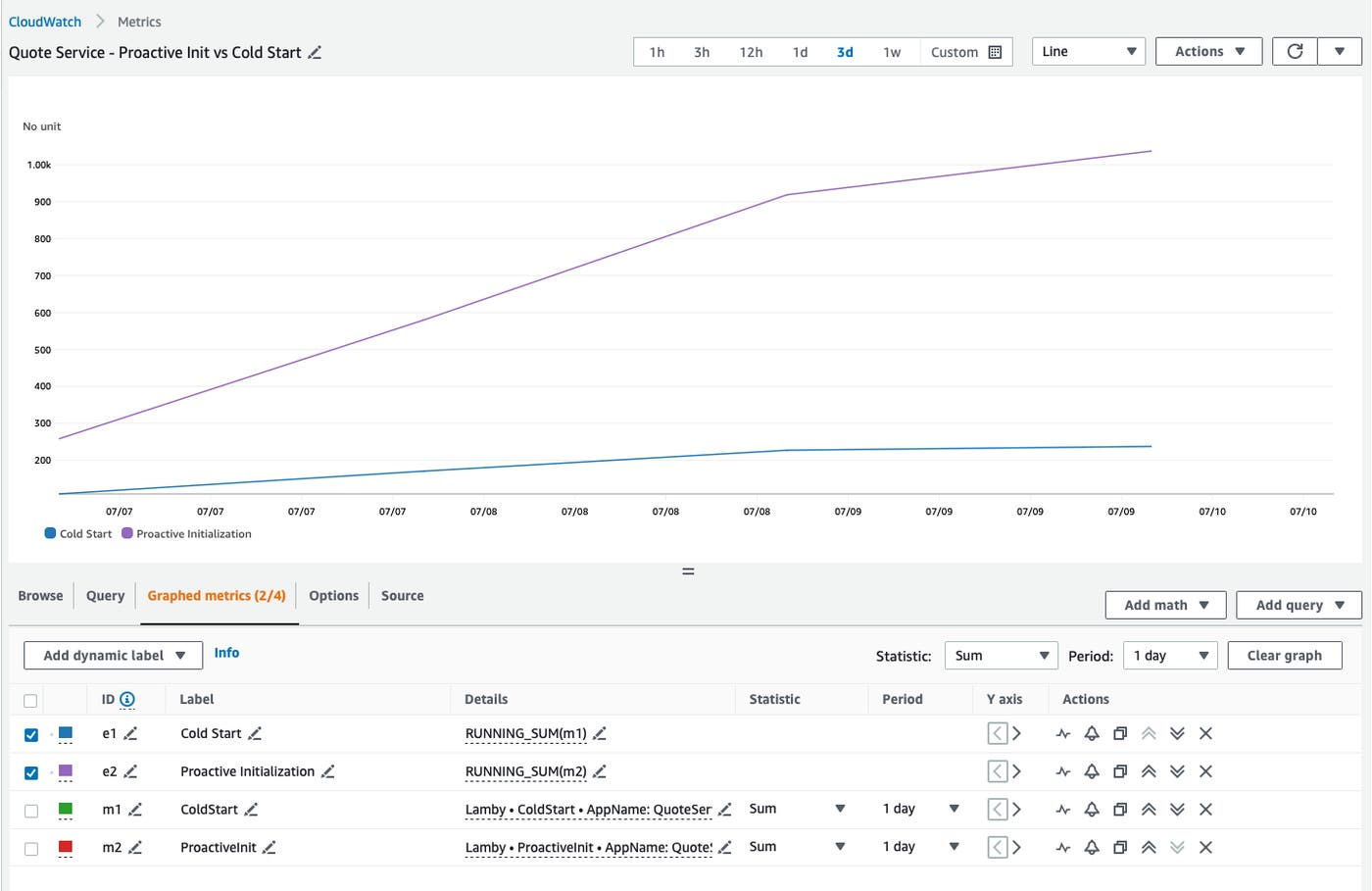

AWS Lambda functions without steady traffic do not typically benefit from proactive initializations. In order to test out just how often they occur in functions that receive more traffic, I called some simple functions over and over in an endless loop (thanks to AWS credits provided by the AWS Community Builder program). The results indicated that almost 65 percent of my cold starts were actually proactive initializations, so they did not contribute to user-facing latency. Here’s the CloudWatch Logs Insights query:

    fields @timestamp, @message.proactiveInitialization
    | filter proactiveInitialization == 0 or proactiveInitialization == 1
    | stats count() by proactiveInitialization

The following screenshot shows the detailed breakdown of proactive initializations (left) vs. cold starts (right). Note that each bar reflects the sum of initializations.

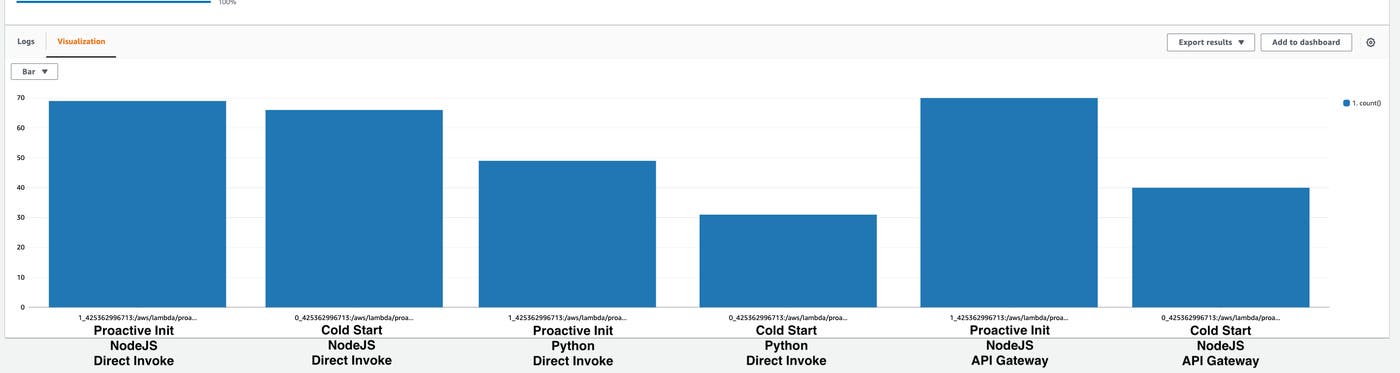

When I ran this query over several days across multiple runtimes and invocation methods, I observed that between 50 and 75 percent of initializations were proactive (whereas 25 to 50 percent were true cold starts):

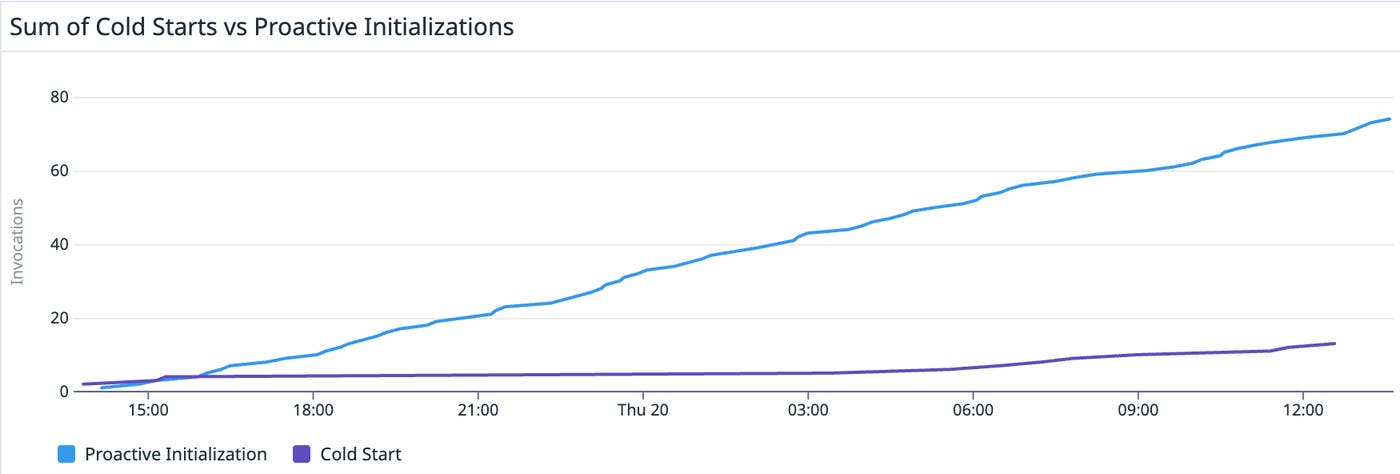

We can see this reflected in the cumulative sum of invocations over a one-day window. Here’s a Python function invoked at a very high frequency:

After one day, we had 78 proactive initializations and only 18 cold starts. That means that 81 percent of initializations were proactive!
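The percentage follows directly from the two counts, and the same tally can be reproduced offline. The sketch below assumes each sandbox logs one JSON line of the form `{"proactiveInitialization": true}` on its first invocation. The helper names and the sample log lines are hypothetical; only the 78 and 18 counts come from the test above.

```python
import json
from collections import Counter
from typing import Iterable


def tally_initializations(log_lines: Iterable[str]) -> Counter:
    """Count proactive initializations and cold starts from JSON log lines."""
    counts = Counter()
    for line in log_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip non-JSON lines such as START, END, and REPORT
        if not isinstance(record, dict) or "proactiveInitialization" not in record:
            continue
        key = "proactive" if record["proactiveInitialization"] else "cold_start"
        counts[key] += 1
    return counts


def proactive_share(proactive: int, cold_starts: int) -> float:
    """Fraction of all initializations that were proactive."""
    return proactive / (proactive + cold_starts)


# Made-up log lines, for illustration only.
sample = [
    '{"proactiveInitialization": true}',
    '{"proactiveInitialization": false}',
    "START RequestId: example",
    '{"proactiveInitialization": true}',
]
print(tally_initializations(sample))

# Counts reported for the one-day Python test: 78 proactive, 18 cold starts.
print(f"{proactive_share(78, 18):.0%}")
```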

AWS Serverless Hero Ken Collins maintains a very popular Rails-Lambda package. After some discussion, he added the capability to track proactive initializations and came to a similar conclusion. When he ran a three-day test using Ruby with a custom runtime, he observed that 80 percent of initializations were proactive, as shown in the following graph.

Proactive initialization takeaways

This post confirms what we’ve all speculated but never knew with certainty: AWS Lambda is warming your functions. We’ve demonstrated how you can observe this behavior, and we’ve followed this through until the public documentation was updated.

But that raises the question: What should you do about AWS Lambda proactive initialization?

Nothing.

This fulfills the promise of serverless in a big way. You get to focus on your own application while AWS improves the underlying infrastructure. Cold starts become something managed out by the cloud provider, and you never have to think about them.

We use serverless because it enables us to offload undifferentiated heavy lifting to cloud providers. Your autoscaling needs and my autoscaling needs probably aren’t that similar, but by taking all these workloads in aggregate with millions of functions across thousands of customers, AWS can predictively scale out functions and improve performance for everyone involved.

Start monitoring your proactively initialized functions

This post provided a first look at proactive initialization, and explored how to observe and understand your workloads on AWS Lambda. For more details about AWS Lambda, check out our in-depth monitoring guide.

Metrics and APM traces for proactively initialized functions are available to anyone using Datadog. You can use the proactive_initialization facet to find proactively initialized functions. If you aren’t already using Datadog, sign up for a 14-day free trial.