David M. Lentz

Editor’s note: We’re happy to announce that Datadog’s integration now supports Vertica versions 10 through 12!

Vertica is a platform that uses machine learning capabilities to help you analyze large amounts of data. Vertica provides high availability and parallel processing by replicating data onto multiple nodes in a cluster, and uses a column-based data store for efficient querying. You can deploy Vertica in the cloud, on premises, or as a hybrid of the two. It works with standard ETL and business intelligence tools, and you can query your data using standard SQL over database drivers like ODBC, JDBC, ADO.NET, and more. We’re pleased to announce that Datadog now integrates with Vertica to help you monitor the performance and availability of your Vertica infrastructure as well as the other big data technologies in your stack.

How the Vertica analytics platform works

Vertica stores data in projections, which contain a subset of the data in a table. Vertica automatically optimizes query efficiency by analyzing your data and creating one or more projections for each table in your database. You can also provide sample queries and hints to improve these projections. When you query your data, Vertica uses whichever projection is most efficient—for example, the one that contains only the columns it needs, or that contains data whose sort order matches that required by the query. Vertica automatically keeps all projections up to date as data changes.

Projections can be segmented, that is, divided evenly into subsets of data that can be stored on different nodes. Vertica can query segmented projections efficiently by using the computing power of multiple nodes in parallel. Each node executes the query on its segment of the data, and Vertica returns the aggregated results.
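To make the idea of segmented, parallel execution concrete, here is a minimal sketch in Python. It is a toy model, not Vertica’s implementation: the function names, the CRC32-based row assignment, and the thread pool standing in for cluster nodes are all assumptions made for illustration.

```python
import zlib
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def segment_rows(rows, key, node_count):
    """Assign each row to a node by hashing its segmentation key.

    Hypothetical helper: CRC32 is used only to get a stable hash here.
    """
    segments = defaultdict(list)
    for row in rows:
        node = zlib.crc32(str(row[key]).encode()) % node_count
        segments[node].append(row)
    return segments


def local_sum(segment, column):
    """The work one node does: aggregate only its own segment."""
    return sum(row[column] for row in segment)


def parallel_sum(rows, key, column, node_count):
    """Run the per-segment aggregation in parallel, then combine the partial results."""
    segments = segment_rows(rows, key, node_count)
    with ThreadPoolExecutor(max_workers=node_count) as pool:
        partials = list(pool.map(lambda seg: local_sum(seg, column), segments.values()))
    return sum(partials)


# Example data: 100 orders spread across a three-node "cluster".
orders = [{"order_id": i, "amount": i * 10} for i in range(1, 101)]
total = parallel_sum(orders, key="order_id", column="amount", node_count=3)
```

Each worker sees only its own segment, and the final answer is the combination of the partial results, which mirrors the scatter-and-aggregate pattern described above.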

To ensure the availability of your data, you can deploy Vertica as a multi-node cluster and configure it to automatically replicate data on multiple nodes. The fault tolerance of your Vertica cluster is expressed as a K-safety value, which signifies the number of times the data is replicated. Vertica allows you to configure the following K-safety values:

- 0: Segmented projections are not replicated.

- 1: Each segment of a projection is replicated to one other node. (Vertica calls this copy of the data a buddy projection.) Vertica recommends that you configure your database with 1 as your intended K-safety value.

- 2: Each segment is replicated to two buddy projections on two different nodes.

If a projection is small, you can leave it unsegmented, in which case the entire projection is replicated to all nodes in the cluster regardless of the K-safety value of the database.
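The effect of each K-safety value can be sketched with a small model. The code below assumes one segment per node and places each buddy copy on the next node around a ring; that placement rule and both function names are illustrative assumptions, not a description of how Vertica actually lays out data.

```python
def place_copies(node_count, k_safety):
    """Map each segment to the nodes that hold a copy of it.

    Assumes one segment per node and a simple ring layout: the primary copy
    of segment i lives on node i, and each buddy lives on the next node over.
    """
    if k_safety not in (0, 1, 2):
        raise ValueError("K-safety must be 0, 1, or 2")
    if k_safety >= node_count:
        raise ValueError("need more nodes than the K-safety value")
    return {
        segment: [(segment + offset) % node_count for offset in range(k_safety + 1)]
        for segment in range(node_count)
    }


def data_available(placement, down_nodes):
    """True if every segment still has at least one copy on a node that is up."""
    down = set(down_nodes)
    return all(
        any(node not in down for node in nodes) for nodes in placement.values()
    )


# With K-safety 1 on three nodes, any single node can fail without losing access to data.
k1 = place_copies(node_count=3, k_safety=1)
survives_one_failure = data_available(k1, down_nodes=[0])

# With K-safety 0, losing any node makes its segment unavailable.
k0 = place_copies(node_count=3, k_safety=0)
survives_without_buddies = data_available(k0, down_nodes=[1])
```

In this model, a K-safety value of K means every segment exists on K + 1 distinct nodes, so the data stays reachable as long as no segment loses all of its copies.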

Make sure your data’s available

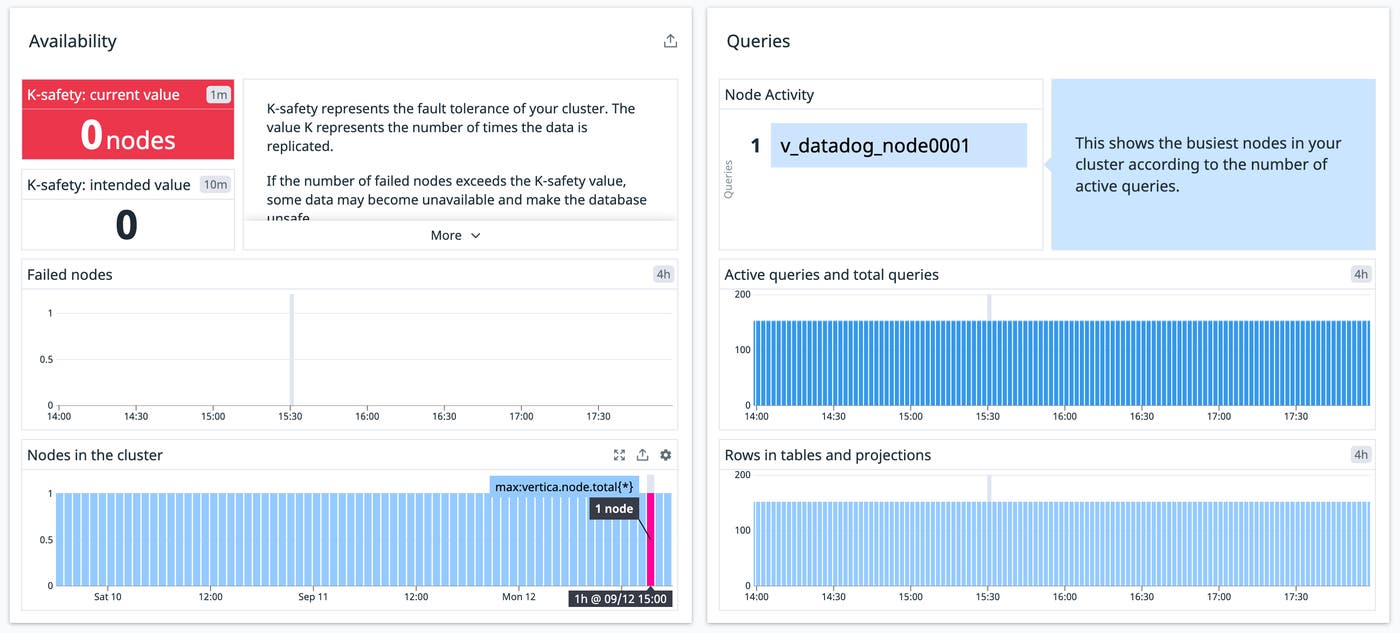

The K-safety value of your database can vary from its intended value as nodes go down and come back up. You should keep an eye on it to understand whether you’re at risk of your data becoming unavailable. A Datadog dashboard makes it easy to see your cluster’s current K-safety value and compare it to the intended K-safety value.

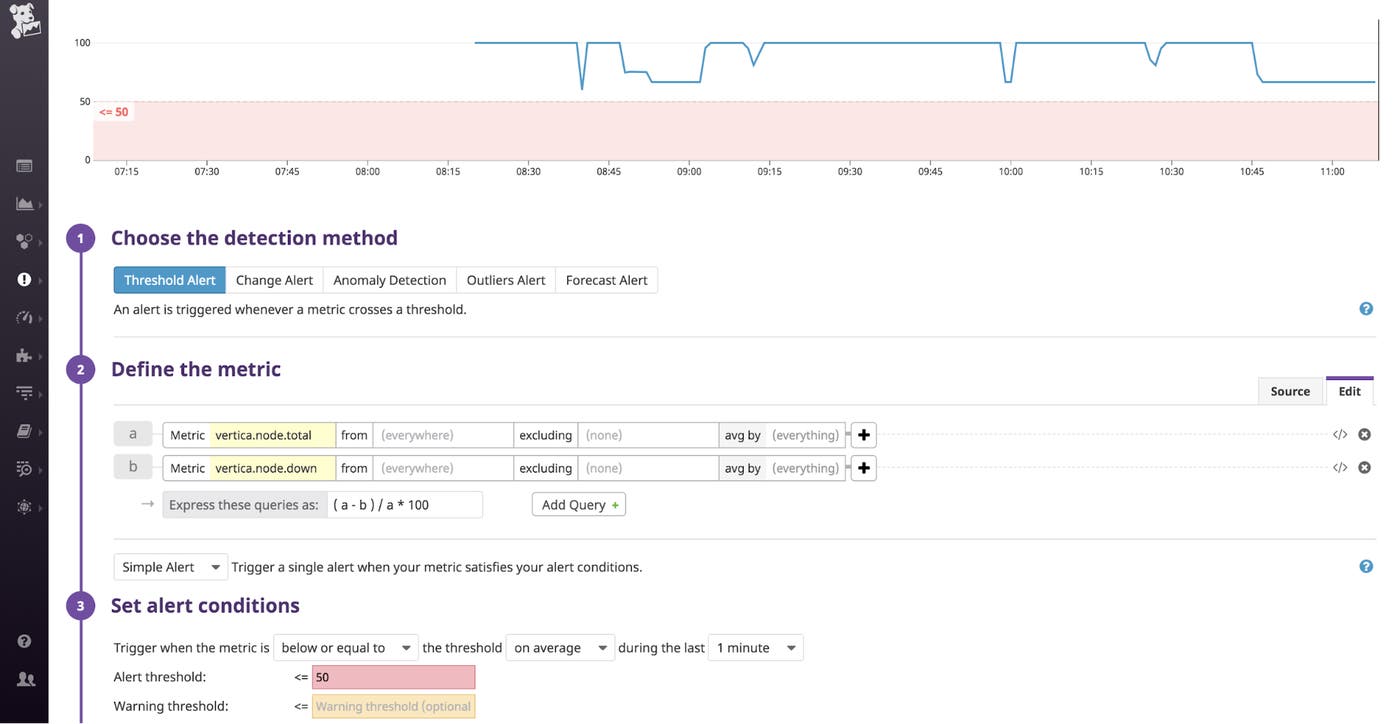

If half or more of your nodes become unavailable, Vertica will stop your database, even if you haven’t lost any data. You can easily monitor vertica.node.down (the number of nodes that are currently down) and vertica.node.total (the number of nodes in the cluster, whether they’re up or down) to ensure that the share of healthy nodes in your cluster stays safely above 50 percent. The screenshot below illustrates how you can create an alert that will automatically notify you if the percentage of healthy nodes indicates a problem.
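The arithmetic behind such an alert is simple enough to sketch. The Python below derives the percentage of healthy nodes from the two metric values and classifies it; the function names and the 75 percent warning threshold are hypothetical choices for illustration, and only the 50 percent cutoff comes from the behavior described above.

```python
def healthy_node_percent(node_down, node_total):
    """Percentage of nodes that are up, given the two metric values."""
    if node_total <= 0:
        raise ValueError("node_total must be positive")
    return 100.0 * (node_total - node_down) / node_total


def node_health_status(node_down, node_total, warn_percent=75.0, critical_percent=50.0):
    """Classify cluster health; the warning threshold is an illustrative assumption."""
    percent = healthy_node_percent(node_down, node_total)
    if percent <= critical_percent:
        return "critical"
    if percent <= warn_percent:
        return "warning"
    return "ok"


# A four-node cluster with one node down is at 75 percent healthy nodes.
status = node_health_status(node_down=1, node_total=4)
```

Setting the warning threshold well above 50 percent leaves time to bring nodes back before the cluster reaches the point where the database stops.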

Watch your cluster’s resource usage

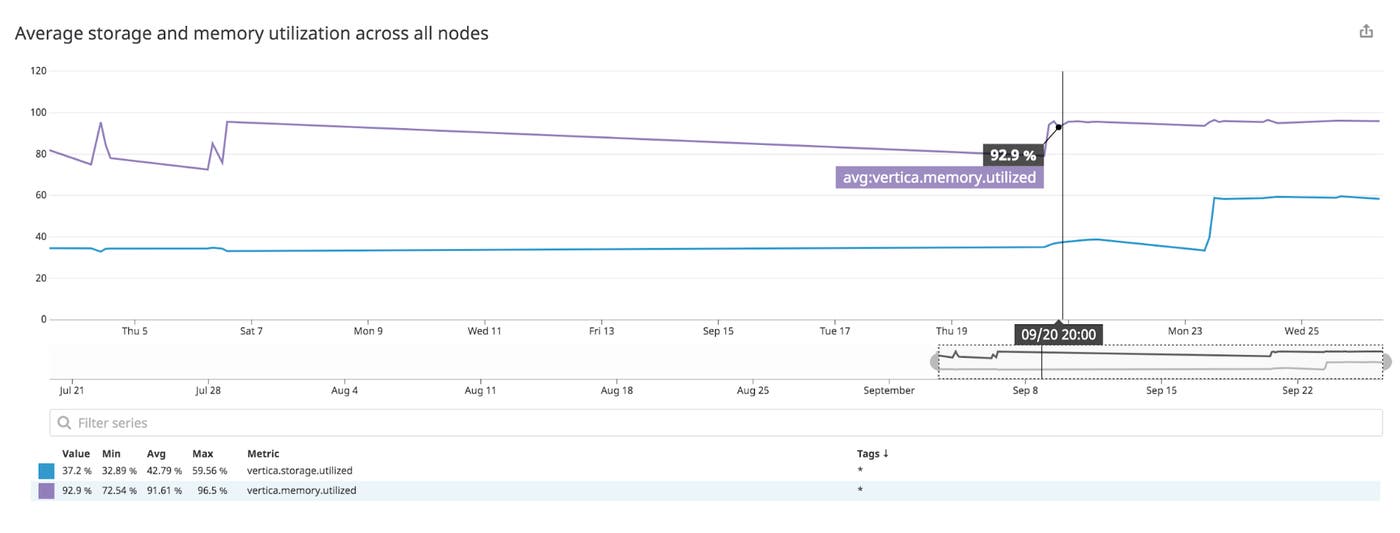

To ensure your Vertica infrastructure is healthy and meeting the needs of your users, you should monitor Vertica’s use of storage space and memory. You can monitor the vertica.storage.utilized metric to see the percentage of a node’s available storage that’s in use. When disk space becomes limited, Vertica will begin to reject transactions, so you need to monitor your storage space to keep your database executing queries successfully.

A growing database can also mean that Vertica needs more memory as it queries the data. A query that initially retrieved a small amount of data will require increasing memory as the amount of data grows. It’s important to monitor vertica.memory.utilized, which shows you the percentage of a node’s available memory that’s in use. As your database grows and your queries require increasing memory, you can ensure the health of your cluster by pruning your data, redesigning your projections, and/or adding memory to your nodes.
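As a rough illustration of how these two percentages might be checked together, the sketch below flags nodes whose storage or memory utilization crosses a limit. The function name, the shape of the input dictionary, and the 80 and 90 percent limits are all assumptions made for this example rather than recommended values.

```python
def nodes_over_threshold(readings, storage_limit=80.0, memory_limit=90.0):
    """Return {node: [resources over their limit]} for nodes that need attention.

    `readings` maps a node name to its latest utilization percentages.
    Both limits are illustrative defaults, not values recommended by Vertica.
    """
    flagged = {}
    for node, usage in readings.items():
        reasons = []
        if usage["storage_utilized"] >= storage_limit:
            reasons.append("storage")
        if usage["memory_utilized"] >= memory_limit:
            reasons.append("memory")
        if reasons:
            flagged[node] = reasons
    return flagged


# Hypothetical readings for a three-node cluster.
latest = {
    "node01": {"storage_utilized": 62.5, "memory_utilized": 71.0},
    "node02": {"storage_utilized": 84.0, "memory_utilized": 93.5},
    "node03": {"storage_utilized": 55.0, "memory_utilized": 91.0},
}
needs_attention = nodes_over_threshold(latest)
```

A node flagged for storage is a candidate for pruning data, while one flagged for memory may call for redesigned projections or more memory, in line with the options listed above.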

Collect and analyze Vertica logs

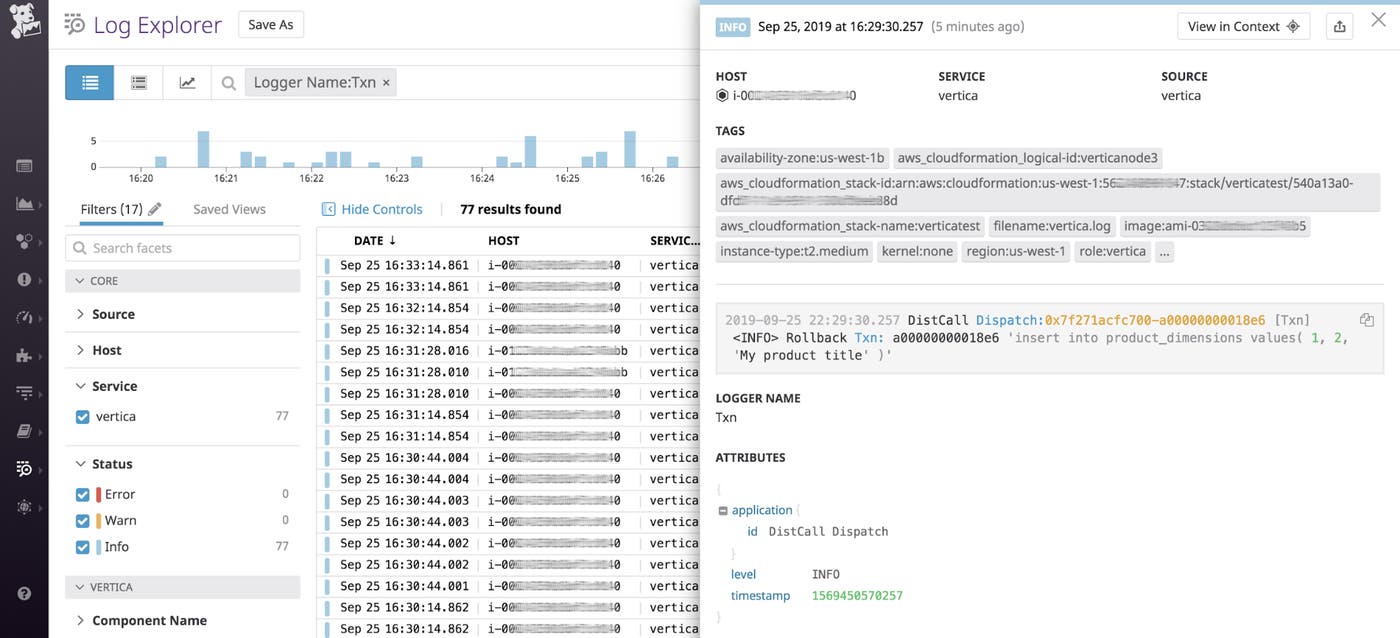

Vertica logs can be a valuable source of information when you’re troubleshooting an issue such as a change in your current K-safety value. When you configure the Vertica integration to collect logs, you can analyze them in Datadog and correlate them with your Vertica metrics to understand the factors that affect your cluster’s performance. Datadog automatically tags your logs with metadata from your host and, if applicable, your cloud provider, and parses them into facets that you can use for search and analysis. For example, if you filter by the Logger Name facet to view only Resource Manager logs, you can understand how Vertica allocated shared resources to execute multiple queries. Logs like these could reveal queries that Vertica rejected due to insufficient memory on the node, or that had to be queued because the node’s memory was in use executing other queries.

Or, as shown in the screenshot below, you can filter to find logs from the Txn logger to see the SQL statements from any transactions Vertica had to roll back. This gives you valuable context if you need to investigate issues caused by problematic queries.

Start monitoring Vertica

Tracking the real-time status of your Vertica infrastructure is critical for ensuring that your queries execute efficiently and that your data is available. Enable the integration to start monitoring Vertica metrics and logs, as well as related technologies like Kafka and Hadoop, all in a single platform. If you’re not already using Datadog, you can start by signing up for a free 14-day trial.