Julien Delange

Bahar Shah

Static application security testing (SAST) tools help developers quickly catch potential vulnerabilities as they code. However, these tools rely on inflexible rules that often generate a high number of false positives, reducing trust in their accuracy and slowing adoption.

To help developers access context-aware vulnerability detection, we’ve released an open source AI-native SAST solution. This tool scans code changes incrementally and surfaces security issues in real time. Our project delivers more accurate vulnerability detection than traditional solutions, outperforming them in most categories of the OWASP Benchmark.

What is AI-native SAST?

Traditional SAST tools analyze source code using static rules and pattern matching to detect potential vulnerabilities. Each rule targets a specific type of vulnerability, such as SQL injection, cross-site scripting (XSS), or command injection. A 2018 study from Google found that false positives and the lack of actionable recommendations are the primary barriers to SAST adoption.

AI-native SAST solutions analyze code using large language models (LLMs) to enhance traditional SAST functionality with more flexible, intelligent vulnerability detection. They can reason about code semantics or execution context, such as call stack details or which services the code is associated with, to more reliably assess whether a potential vulnerability is present. This reasoning enables these tools to better contextualize code, decreasing the rate of false positives compared to traditional approaches.
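To make the false-positive problem concrete, here is a minimal sketch in Python. The regex rule, the function names, and the `USERS_TABLE` constant are all hypothetical and are not taken from any real SAST rule set. The rule flags every `execute()` call that receives an f-string, so it reports both functions, even though only one of them lets user input reach the SQL text.

```python
import re
import sqlite3

# Hypothetical pattern-matching rule: flag any execute() call given an f-string.
NAIVE_RULE = re.compile(r"""execute\(\s*f["']""")

USERS_TABLE = "users"  # constant defined in code, never user-controlled


def find_unsafe(conn, name):
    # User input is interpolated into the SQL text: a real injection risk.
    return conn.execute(f"SELECT name FROM users WHERE name = '{name}'").fetchall()


def find_safe(conn, name):
    # Only a constant is interpolated; user input is bound as a parameter.
    return conn.execute(
        f"SELECT name FROM {USERS_TABLE} WHERE name = ?", (name,)
    ).fetchall()


# The source lines the rule would see for each function (copied from above).
SOURCE_LINES = {
    "find_unsafe": '''conn.execute(f"SELECT name FROM users WHERE name = '{name}'")''',
    "find_safe": '''conn.execute(f"SELECT name FROM {USERS_TABLE} WHERE name = ?", (name,))''',
}

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT)")
conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"  # classic injection string
flagged = {fn: bool(NAIVE_RULE.search(src)) for fn, src in SOURCE_LINES.items()}
rows_unsafe = find_unsafe(conn, payload)  # payload rewrites the WHERE clause
rows_safe = find_safe(conn, payload)      # payload is compared as plain data

print(flagged)
print(len(rows_unsafe), len(rows_safe))
```

The rule flags both call sites, but the payload only changes the result of `find_unsafe`. Telling these two cases apart requires knowing where each interpolated value comes from, which is the kind of context an LLM-based analysis can reason about.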

While AI-native solutions are more accurate, they’re also more expensive to run. Each analysis requires multiple LLM calls, incurring significant token and inference costs. At scale, AI-native scanning becomes prohibitively expensive without the right architecture. Our project helps minimize these costs through incremental analysis, which reduces the number of full-repository scans that need to be performed.

How does Datadog’s AI-native SAST feature work?

Our project relies on four steps:

- Identification: Candidate files are filtered based on heuristics that help us pinpoint code likely to contain risks. For example, when evaluating code that could be vulnerable to SQL injection, the tool looks for database libraries that may be in use, SQL-like strings, and user-controlled input in queries.

- Context retrieval: The tool gathers any additional files or functions invoked by the target code to build the full context for analysis.

- Analysis: The file and its context are sent to the LLM. The LLM processes the file and analyzes it for potential sources of vulnerabilities, such as improperly validated input. It then contextualizes these findings to verify whether a vulnerability is present. For example, if the input is treated as data instead of as an executable command, injection attacks are less likely.

- Post-processing: Results are categorized using two approaches. First, a set of heuristic functions looks for specific words or patterns in the results to identify the vulnerability type. For example, a function may search for language relating to SQL injection. Next, the tool applies LLM-based false positive filtering to determine whether the findings represent genuine issues.
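The four steps above can be sketched as a small pipeline. This is an illustrative outline only, not the project's actual code: the regexes, the keyword map, the `Finding` type, and the stubbed `analyze` and `is_true_positive` callables (which stand in for real LLM calls) are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Step 1 heuristics (hypothetical): a database import plus a SQL-like string.
DB_IMPORT = re.compile(r"^\s*(?:import|from)\s+(sqlite3|psycopg2|pymysql|sqlalchemy)\b", re.M)
SQL_STRING = re.compile(r"\b(SELECT|INSERT|UPDATE|DELETE)\b.+\b(FROM|INTO|SET)\b", re.I)
# Step 2 heuristic (hypothetical): follow `from module import name` to sibling files.
LOCAL_IMPORT = re.compile(r"^\s*from\s+(\w+)\s+import\b", re.M)
# Step 4 keyword map (hypothetical).
CATEGORY_KEYWORDS = {
    "SQL Injection": ("sql injection", "sqli"),
    "Command Injection": ("command injection", "shell injection"),
}


@dataclass
class Finding:
    path: str
    description: str
    category: Optional[str] = None


def is_candidate(source: str) -> bool:
    """Identification: keep only files that look like they build SQL queries."""
    return bool(DB_IMPORT.search(source) and SQL_STRING.search(source))


def retrieve_context(source: str, repo: Dict[str, str]) -> Dict[str, str]:
    """Context retrieval: collect sibling modules imported by the target file."""
    names = LOCAL_IMPORT.findall(source)
    return {f"{n}.py": repo[f"{n}.py"] for n in names if f"{n}.py" in repo}


def categorize(description: str) -> Optional[str]:
    """Post-processing, part 1: keyword-based vulnerability typing."""
    text = description.lower()
    for category, keywords in CATEGORY_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return category
    return None


def scan(
    repo: Dict[str, str],
    analyze: Callable[[str, Dict[str, str]], List[str]],
    is_true_positive: Callable[[Finding, str, Dict[str, str]], bool],
) -> List[Finding]:
    findings = []
    for path, source in repo.items():
        if not is_candidate(source):
            continue
        context = retrieve_context(source, repo)
        for description in analyze(source, context):  # Analysis (LLM call)
            finding = Finding(path, description, categorize(description))
            if is_true_positive(finding, source, context):  # Post-processing, part 2
                findings.append(finding)
    return findings


# Stubs standing in for the LLM calls, so the sketch runs offline.
def stub_analyze(source, context):
    tainted = any("input()" in text for text in context.values())
    return ["SQL injection: user input reaches the query string"] if tainted else []


def stub_filter(finding, source, context):
    return finding.category is not None


repo = {
    "app.py": (
        "import sqlite3\nfrom helpers import get_name\n\n"
        "def find(conn):\n"
        "    return conn.execute(f\"SELECT id FROM users WHERE name = '{get_name()}'\")\n"
    ),
    "helpers.py": "def get_name():\n    return input()\n",
    "README.md": "SELECT your plan FROM the pricing page",
}
findings = scan(repo, stub_analyze, stub_filter)
print(findings)
```

Passing the LLM-backed steps in as callables keeps the cheap heuristics testable on their own and makes it clear which parts of a scan incur token costs.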

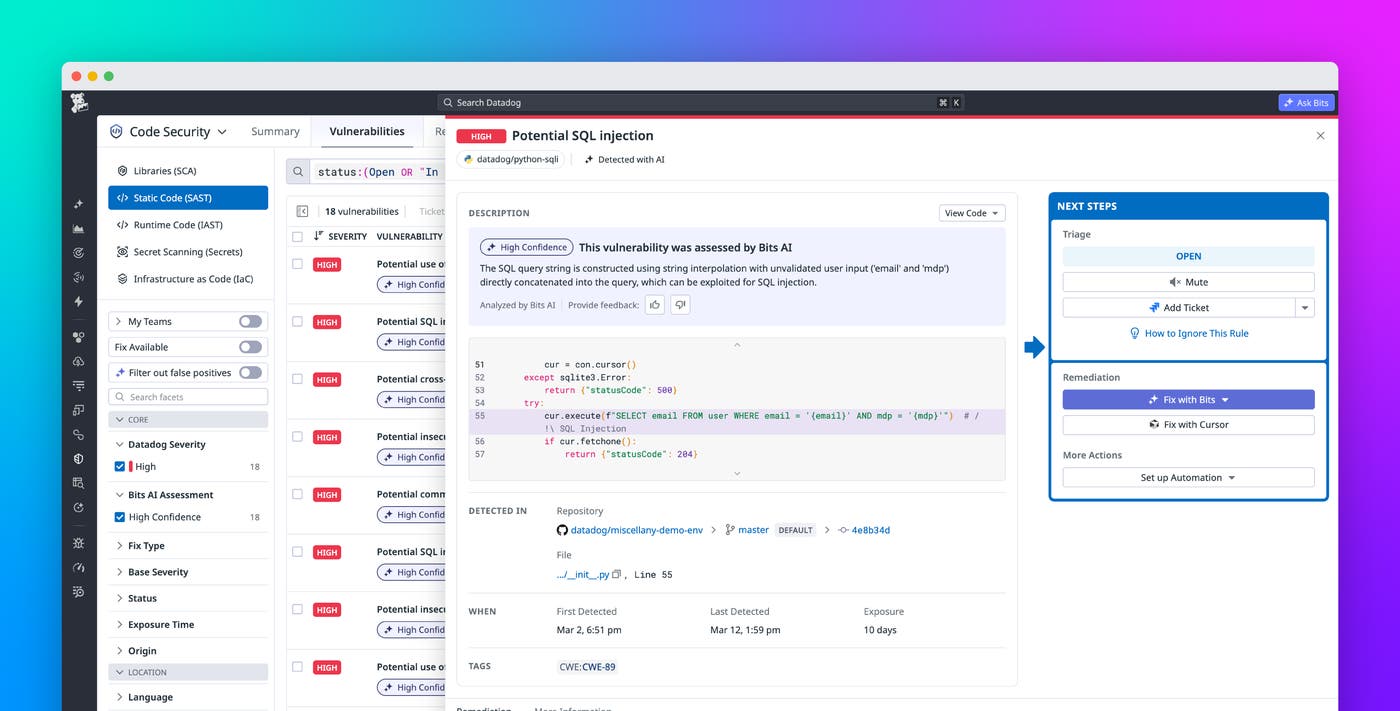

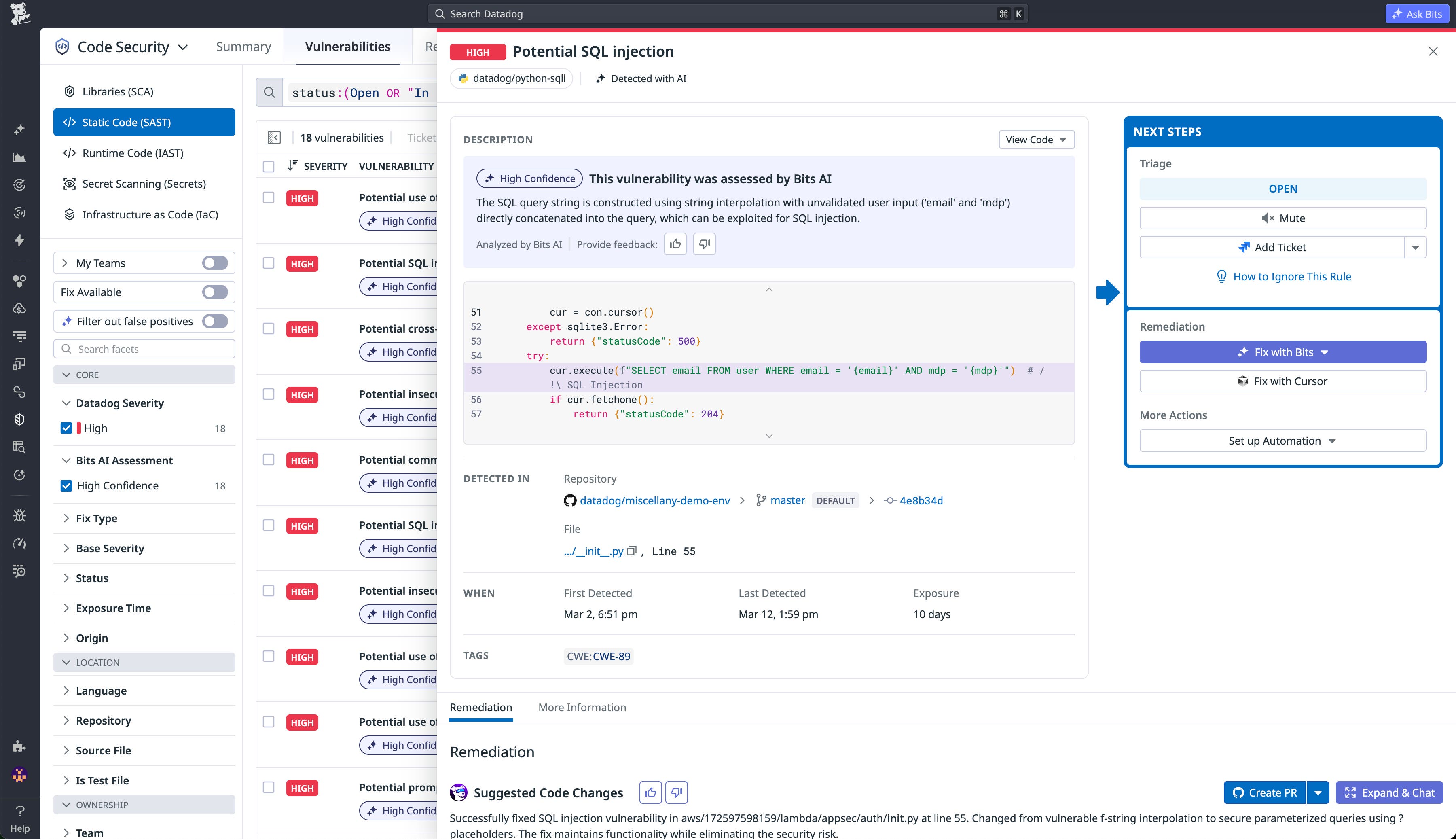

Once this process is finished, you’ll have a list of vulnerabilities and the corresponding code snippets they were found in. If you use Datadog Code Security, your SAST vulnerability findings will automatically be enhanced with the finalized AI assessments. For each vulnerability, you’ll see a clear explanation of how the code could be exploited along with a suggested fix.

Fine-tuned performance

Because the analysis process is repeated on each file in a repository—and each run requires multiple LLM calls—a full scan can be both slow and costly. Costs can increase further when using more advanced models with higher latency, such as Claude Opus.

To overcome this limitation, we run a complete repository scan at onboarding. Once this initial scan is done, Datadog only performs incremental analysis when a file’s content or context changes. Subsequent scans are faster and more cost effective as a result. To balance accuracy and cost, our AI-native SAST solution focuses on detecting high-impact, high-prevalence vulnerabilities rather than covering the broader rule set of traditional SAST tools.
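One way to decide when a file's content or context has changed is to fingerprint each file together with the files it depends on, and rescan only when the fingerprint differs from the cached one. The sketch below assumes that approach; the `fingerprint` and `files_to_rescan` helpers and the `context_of` mapping are hypothetical, and Datadog's in-platform implementation may work differently.

```python
import hashlib
from typing import Dict, List


def fingerprint(path: str, repo: Dict[str, str], context_of: Dict[str, List[str]]) -> str:
    """Hash a file's content together with the content of its context files."""
    digest = hashlib.sha256()
    for name in [path, *sorted(context_of.get(path, []))]:
        digest.update(name.encode())
        digest.update(b"\0")  # separator so names and contents cannot run together
        digest.update(repo[name].encode())
        digest.update(b"\0")
    return digest.hexdigest()


def files_to_rescan(
    repo: Dict[str, str],
    context_of: Dict[str, List[str]],
    cache: Dict[str, str],
) -> List[str]:
    """Return files whose fingerprint changed, and update the cache in place."""
    stale = []
    for path in repo:
        current = fingerprint(path, repo, context_of)
        if cache.get(path) != current:
            stale.append(path)
            cache[path] = current
    return stale


repo = {
    "app.py": "from helpers import get_name\n",
    "helpers.py": "def get_name():\n    return 'alice'\n",
    "util.py": "def noop():\n    pass\n",
}
context_of = {"app.py": ["helpers.py"]}  # app.py calls into helpers.py
cache: Dict[str, str] = {}

first = files_to_rescan(repo, context_of, cache)   # empty cache: full scan
repo["helpers.py"] = "def get_name():\n    return input()\n"
second = files_to_rescan(repo, context_of, cache)  # helpers.py and its dependent
third = files_to_rescan(repo, context_of, cache)   # nothing changed

print(first, second, third)
```

In this example, editing `helpers.py` marks both `helpers.py` and `app.py` as stale, because `app.py` lists it as context, while `util.py` is skipped.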

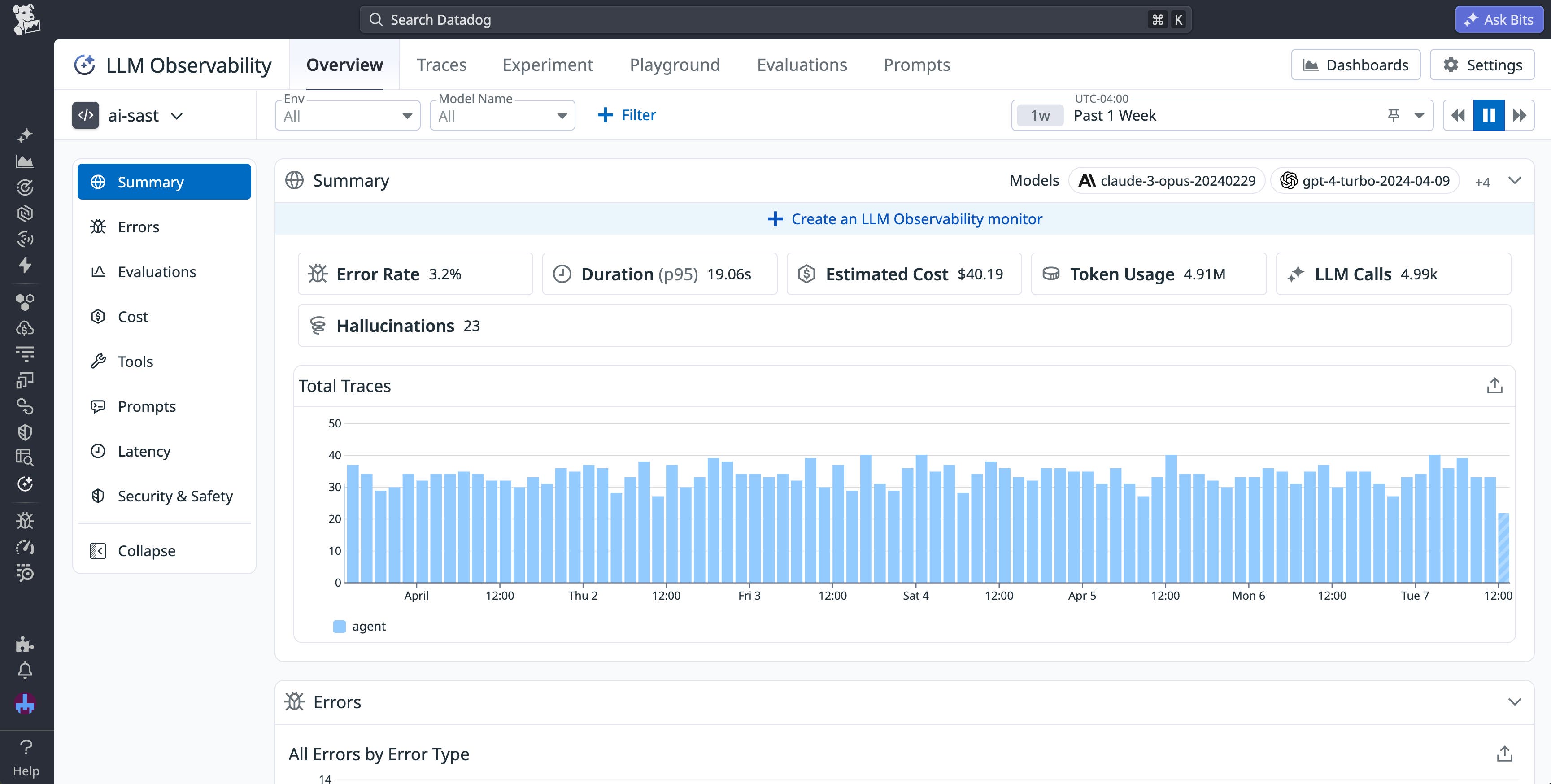

Additionally, we use Datadog LLM Observability to monitor both performance and cost for our AI-native SAST solution. Here, we’re able to track latency, token usage, LLM calls, and estimated cost. This data helps us ensure that our project continues to perform optimally throughout any refinements.
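As a rough illustration of how these four metrics relate, the sketch below aggregates per-call records into scan-level totals. It does not use the Datadog LLM Observability API. The `ScanUsage` class is hypothetical, and the per-token prices are placeholders, since real prices depend on the model.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical prices in USD per million tokens; real prices depend on the model.
INPUT_PRICE_PER_MTOK = 3.0
OUTPUT_PRICE_PER_MTOK = 15.0


@dataclass
class LLMCall:
    latency_s: float
    input_tokens: int
    output_tokens: int


@dataclass
class ScanUsage:
    calls: List[LLMCall] = field(default_factory=list)

    def record(self, latency_s: float, input_tokens: int, output_tokens: int) -> None:
        self.calls.append(LLMCall(latency_s, input_tokens, output_tokens))

    @property
    def call_count(self) -> int:
        return len(self.calls)

    @property
    def total_tokens(self) -> int:
        return sum(c.input_tokens + c.output_tokens for c in self.calls)

    @property
    def mean_latency_s(self) -> float:
        if not self.calls:
            return 0.0
        return sum(c.latency_s for c in self.calls) / len(self.calls)

    @property
    def estimated_cost_usd(self) -> float:
        input_tokens = sum(c.input_tokens for c in self.calls)
        output_tokens = sum(c.output_tokens for c in self.calls)
        return (
            input_tokens * INPUT_PRICE_PER_MTOK + output_tokens * OUTPUT_PRICE_PER_MTOK
        ) / 1_000_000


usage = ScanUsage()
usage.record(latency_s=1.2, input_tokens=1000, output_tokens=200)  # analysis call
usage.record(latency_s=0.8, input_tokens=2000, output_tokens=300)  # filtering call
print(usage.call_count, usage.total_tokens, usage.mean_latency_s, usage.estimated_cost_usd)
```

Because every analyzed file contributes several such records, the totals grow with the number of files scanned, which is why skipping unchanged files has a direct effect on cost.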

Evaluating accuracy against the OWASP Benchmark

To determine whether our AI-native project surfaces more accurate results, we used the OWASP Benchmark framework to compare its true positive rate against our traditional SAST solution (enhanced with LLM false-positive filtering).

The table below shows the true positive rate (recall) for each OWASP Benchmark vulnerability category.

| Vulnerability | Traditional SAST + LLM Filtering | AI-native SAST |
|---|---|---|
| Command Injection | 59% | 90% |
| Cross-Site Scripting (XSS) | 65% | 93% |
| Insecure Cookie | 87% | 100% |
| LDAP Injection | 70% | 85% |
| Path Traversal | 64% | 90% |
| SQL Injection | 63% | 86% |
| Trust Boundary | 46% | 81% |
| Weak Encryption Algorithm | 100% | 100% |
| Weak Hashing Algorithm | 69% | 54% |
| Weak Randomness | 100% | 99% |
| XPath Injection | 68% | 95% |

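Our AI-native solution outperforms our traditional SAST tool in 8 of the 11 categories, even when the latter has been augmented with LLM-based filtering. The two tie on weak encryption algorithms, and the traditional tool leads on weak randomness (100% versus 99%) and weak hashing algorithms (69% versus 54%).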

The results suggest that the AI-native solution provides more accurate detection for context-dependent vulnerabilities. For vulnerabilities like command injection or SQL injection, where data flow across files must be analyzed to accurately detect a vulnerability, the true positive rates from our AI-native solution are 31 and 23 percentage points higher, respectively, than those of our traditional SAST tool with LLM filtering. For context-independent vulnerabilities such as weak randomness or weak encryption algorithms, we see a similar level of accuracy across tools. Because our AI-native project surfaces findings alongside traditional SAST results, both approaches can be used together to identify true vulnerabilities more completely.
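The per-category comparison can be reproduced from the table with a few lines of Python. The recall values below are copied from the table. The `recall` helper shows the standard definition, true positives divided by all real vulnerabilities, and the counts passed to it are made up for illustration because the post does not report raw counts.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """True positive rate: the share of real vulnerabilities that were reported."""
    return true_positives / (true_positives + false_negatives)


# (traditional SAST + LLM filtering, AI-native SAST), in percent, from the table.
RECALL = {
    "Command Injection": (59, 90),
    "Cross-Site Scripting (XSS)": (65, 93),
    "Insecure Cookie": (87, 100),
    "LDAP Injection": (70, 85),
    "Path Traversal": (64, 90),
    "SQL Injection": (63, 86),
    "Trust Boundary": (46, 81),
    "Weak Encryption Algorithm": (100, 100),
    "Weak Hashing Algorithm": (69, 54),
    "Weak Randomness": (100, 99),
    "XPath Injection": (68, 95),
}

# Difference in percentage points (positive means AI-native is higher).
deltas = {name: ai - trad for name, (trad, ai) in RECALL.items()}
wins = [name for name, d in deltas.items() if d > 0]
ties = [name for name, d in deltas.items() if d == 0]
losses = [name for name, d in deltas.items() if d < 0]

for name, d in sorted(deltas.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:28s} {d:+d} pts")
print(len(wins), "wins,", len(ties), "tie,", len(losses), "losses")

# Illustrative counts only: 9 of 10 real issues found gives 90% recall.
example = recall(true_positives=9, false_negatives=1)
```

The largest gain is in the trust boundary category (35 points), and the only notable regression is weak hashing algorithms (15 points lower).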

Why open source it?

Since the early 2000s, many SAST tools, including Datadog SAST, have been released under an open source license. This helps the security community contribute toward building better tools and improve security posture across the industry.

We believe that LLMs open up new opportunities for creating more robust, efficient application security tools, and we want to help lead this change. By open sourcing our solution, we aim to increase transparency for our customers and invite collaboration from the security community.

The future of AI-enhanced SAST at Datadog

This first iteration of our AI-native SAST solution provides better accuracy than traditional tools. Its integration within Datadog allows it to run at scale for every code change. The current architecture relies on structured prompting rather than a full agentic approach, giving tighter control over resource usage and predictable execution costs.

In the coming months, we plan to explore more agentic scanning techniques to improve contextual reasoning and vulnerability discovery depth. You can view the latest version of the Datadog AI-native SAST project on GitHub.

Note that while the codebase is open source, incremental analysis is only available for Datadog customers. Our AI-enhanced incremental analysis feature is currently in Preview. To learn more about Datadog’s in-platform SAST feature, see the AI-enhanced Static Code Analysis documentation.

To explore Datadog’s security tooling, you can sign up for a 14-day free trial.