Candace Shamieh

Tony Redondo

Jorge Torres Martinez

Kyle Guske

Platform engineering teams have access to hundreds of metrics, yet over 40% of platform initiatives cannot demonstrate measurable value within the first year. Teams that cannot quantify their impact fail to secure executive sponsorship, risk losing funding, and ultimately face deprecation. To accurately calculate a platform’s ROI, platform engineering teams need to distinguish between signals that measure platform effectiveness and those that should be used solely for investigation.

In this post, we use a tiering system to categorize metrics and explain how to contextualize them. We clarify which metrics demonstrate the platform’s value, reveal pain points, or aid in investigations. We also describe common pitfalls to avoid when tracking and analyzing these metrics.

A hierarchy for understanding platform metrics

Whether you’re using DORA’s software delivery metrics, SPACE, DevEx, HEART, or the DX Core 4 framework, concrete recommendations on the types of platform metrics to collect and how to interpret them can guide your platform investments. Platform metrics can be organized into a three-tier hierarchy: outcomes, drivers, and diagnostics. Outcome metrics appear at the top of the hierarchy, providing a high-level view of overall platform health. Driver metrics explain the conditions that cause the outcomes, and diagnostic metrics help you trace issues to their source. The hierarchy reflects a sequence of dependency, where each tier relies on the one above. Without outcome metrics, driver metrics lack organizational context; without driver metrics, diagnostic metrics lack investigative direction. When combined, the metrics in the hierarchy enable you to make informed decisions and targeted interventions.

The relationship among the tiers can be best understood through an example. Suppose deployment frequency—an outcome metric—drops over a specified time period. While you cannot fix it directly, you can turn to driver metrics to identify the cause. In this case, an increase in PR review time may explain the drop, but what caused the increase? To find the answer, you dig into diagnostic metrics such as the number of times PRs were sent back for changes, the time between review cycles, and the number of approvals required before a merge. You identify a spike in a specific team’s review cycle count, which tells you who to consult to address the increase.
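To make the drill-down concrete, here is a minimal Python sketch that models the hierarchy as a tree and follows regressed metrics from an outcome down to a diagnostic. The `MetricNode` class, the 20% relative regression threshold, and the sample values are hypothetical illustrations, not a Datadog API. At each tier, the walk simply follows the first child that regressed.

```python
from dataclasses import dataclass, field


@dataclass
class MetricNode:
    """A metric in the outcome -> driver -> diagnostic hierarchy (illustrative only)."""
    name: str
    tier: str                     # "outcome", "driver", or "diagnostic"
    baseline: float
    current: float
    higher_is_worse: bool = True
    children: list["MetricNode"] = field(default_factory=list)

    def regressed(self, threshold: float = 0.2) -> bool:
        """True if the metric moved in the bad direction by more than `threshold` (relative)."""
        if self.baseline == 0:
            return False
        change = (self.current - self.baseline) / self.baseline
        return change > threshold if self.higher_is_worse else change < -threshold


def drill_down(node: MetricNode, threshold: float = 0.2) -> list[str]:
    """Follow the first regressed child at each tier and return the path of metric names."""
    path = [node.name]
    for child in node.children:
        if child.regressed(threshold):
            return path + drill_down(child, threshold)
    return path


# Hypothetical values mirroring the example above.
review_cycles = MetricNode("review cycles (team A)", "diagnostic", 1.5, 4.0)
approvals = MetricNode("approvals required", "diagnostic", 2, 2)
pr_review = MetricNode("PR review time (h)", "driver", 6, 14,
                       children=[approvals, review_cycles])
deploy_freq = MetricNode("deployments per week", "outcome", 20, 12,
                         higher_is_worse=False, children=[pr_review])

print(drill_down(deploy_freq))
# ['deployments per week', 'PR review time (h)', 'review cycles (team A)']
```

Following only the first regressed child keeps the sketch short; a real investigation would usually inspect every regressed branch.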

Use outcome metrics to confirm platform value

Outcome metrics answer a single question: Does the platform enable developers to ship code quickly and reliably? Reviewed monthly or quarterly by leadership, outcome metrics are the North Star signals that confirm whether platform investments deliver organizational value.

Without outcome metrics, local platform improvements can be wrongly credited with broader organizational impact, and optimization efforts can be misdirected. For example, an engineering team producing more builds looks productive, but if those builds don’t translate into increased deployment frequency, then build quantity has become decoupled from the organization’s goal of shipping quickly.

Three categories of data inform your outcome metrics: delivery performance, developer perception, and platform impact.

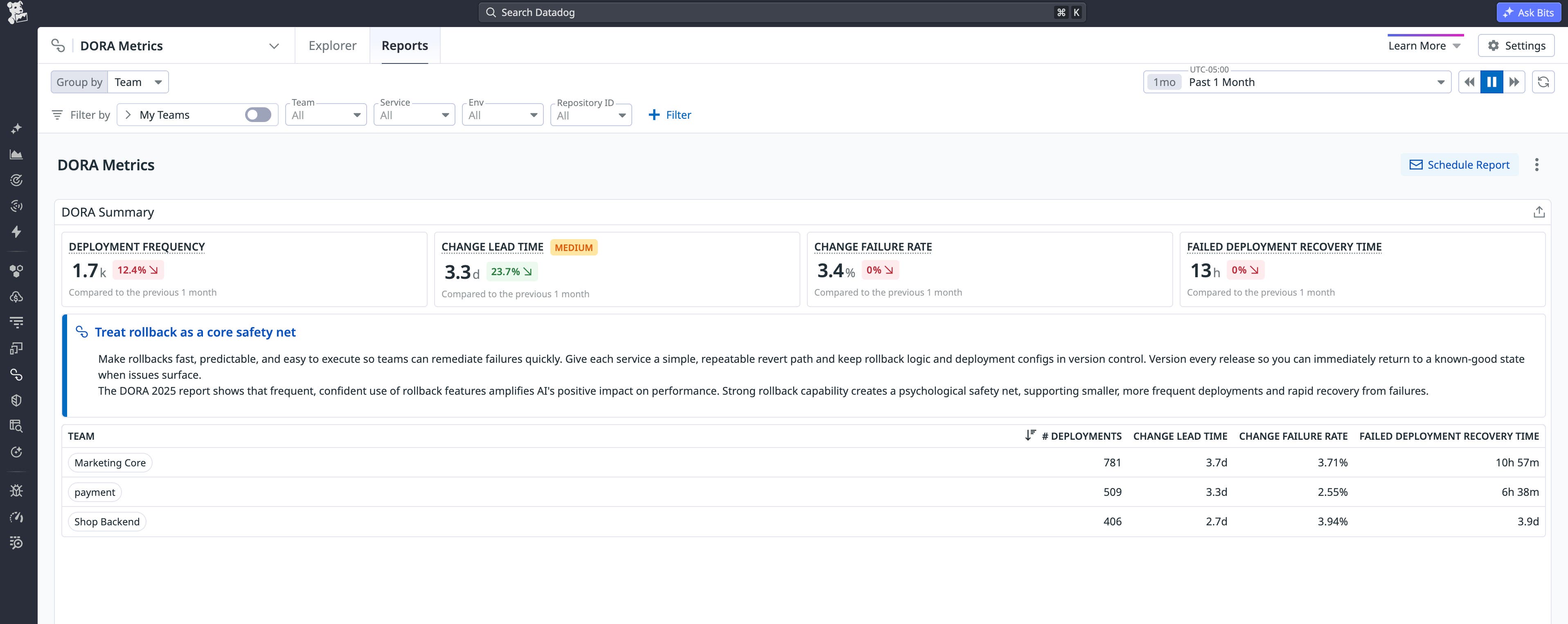

Track delivery performance with DORA metrics

The DORA framework is the leading standard for measuring software delivery performance. It defines five key metrics that assess how effectively an organization ships changes and maintains system reliability. DORA’s research demonstrates how these metrics influence overall organizational performance and team well-being, and shows that speed and stability are not trade-offs. DORA’s framework includes the following software delivery metrics:

- Change lead time: The time it takes for a change to go from committed to version control to deployed in production.

- Deployment frequency: The number of deployments over a given period or the time between deployments.

- Failed deployment recovery time: The time it takes to recover from a deployment that fails and requires immediate intervention.

- Change failure rate (CFR): The ratio of deployments that require immediate intervention after release, typically resulting in a rollback or a hotfix to quickly remediate any issues.

- Deployment rework rate: The ratio of deployments that are unplanned but happen as a result of an incident in production.
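As a rough illustration of how the five definitions above turn into numbers, the following Python sketch computes them from a list of deployment records. The `Deployment` record, its fields, the use of medians for the time-based metrics, and hour units are all assumptions made for this example. It is not how Datadog DORA Metrics calculates them.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record joined from VCS, CI/CD, and incident data."""
    deployed_at: datetime
    commit_times: list[datetime]            # when each included change was committed
    failed: bool = False                    # required immediate intervention (rollback/hotfix)
    recovered_at: Optional[datetime] = None
    unplanned: bool = False                 # shipped in response to a production incident


def dora_summary(deploys: list[Deployment], window: timedelta) -> dict[str, float]:
    total = len(deploys)
    # Change lead time: commit -> production, per change, in hours.
    lead_times = [(d.deployed_at - c).total_seconds() / 3600
                  for d in deploys for c in d.commit_times]
    # Failed deployment recovery time, in hours.
    recoveries = [(d.recovered_at - d.deployed_at).total_seconds() / 3600
                  for d in deploys if d.failed and d.recovered_at is not None]
    return {
        "deployments_per_week": total / (window / timedelta(weeks=1)),
        "median_change_lead_time_h": median(lead_times) if lead_times else 0.0,
        "change_failure_rate": sum(d.failed for d in deploys) / total if total else 0.0,
        "median_recovery_time_h": median(recoveries) if recoveries else 0.0,
        "deployment_rework_rate": sum(d.unplanned for d in deploys) / total if total else 0.0,
    }


t0 = datetime(2025, 1, 6, 9, 0)
deploys = [
    Deployment(t0 + timedelta(hours=4), [t0, t0 + timedelta(hours=2)]),
    Deployment(t0 + timedelta(days=1, hours=6), [t0 + timedelta(days=1)],
               failed=True, recovered_at=t0 + timedelta(days=1, hours=7)),
    Deployment(t0 + timedelta(days=3), [t0 + timedelta(days=2, hours=16)], unplanned=True),
    Deployment(t0 + timedelta(days=4), [t0 + timedelta(days=3, hours=22)]),
]
summary = dora_summary(deploys, window=timedelta(weeks=2))
print(summary)
```

With these sample records, the sketch reports 2.0 deployments per week, a 4-hour median lead time, and a 25% change failure rate.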

Collecting DORA metrics requires connecting three data sources: your version control system, CI/CD pipeline, and incident management platform. Datadog DORA Metrics automatically ingests data from Application Performance Monitoring (APM), CI/CD pipelines, Git providers, and incident management tools to help you calculate and generate reports for the metrics.

Each report contains Benchmark Thresholds that compare your software delivery performance to that of the broader Datadog community. You can also schedule and send automated reports to key stakeholders via email, Slack, or Microsoft Teams.

Datadog’s 2026 State of DevSecOps study found that teams that performed better on DORA metrics not only shipped code faster but also had a stronger security posture and recovered more quickly from failures. Frequent, stable deployments meant smaller windows of exposure for vulnerabilities and faster feedback loops for patching and upgrades.

Capture developer perception with survey data

Delivery metrics reflect results but not how developers experienced their workflows. Aggregated sentiment data from periodic surveys can capture developer satisfaction, well-being, and perceived productivity. At Datadog, we send our developer experience survey twice annually.

Developer perception data provides key context when combined with delivery metrics. High velocity paired with low developer satisfaction can signal burnout risk and accumulating technical debt well before either shows up in your outcome metrics. Similarly, a healthy CI latency metric may indicate that builds are running smoothly, but if survey data reveals that developers feel frustrated with builds, further investigation is warranted.
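One simple way to combine the two data sources is to flag teams whose delivery numbers look healthy while their survey scores are low. The sketch below assumes a hypothetical per-team record with deployments per week and a 1 to 5 build-satisfaction score. The thresholds are arbitrary placeholders to tune for your organization.

```python
from dataclasses import dataclass


@dataclass
class TeamSignal:
    team: str
    deploys_per_week: float      # delivery metric
    build_satisfaction: float    # mean survey score, 1 (frustrated) to 5 (satisfied)


def divergent_teams(signals, min_deploys=5.0, min_satisfaction=3.0):
    """Teams whose delivery looks healthy but whose developers report low satisfaction."""
    return [
        s.team for s in signals
        if s.deploys_per_week >= min_deploys and s.build_satisfaction < min_satisfaction
    ]


signals = [
    TeamSignal("payments", 12.0, 2.4),   # fast but frustrated: worth a closer look
    TeamSignal("search", 9.0, 4.1),
    TeamSignal("billing", 2.0, 2.2),     # slow and frustrated: already visible in delivery data
]
print(divergent_teams(signals))  # ['payments']
```

The sketch deliberately ignores teams that are both slow and unhappy, because delivery metrics already surface that problem. The divergent case is the one that perception data adds.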

Quantify platform impact with adoption and onboarding metrics

Platform impact metrics provide insight into platform usage and assess whether the platform reduces the cost, time, and effort required to get started. The following signals are two examples of platform impact metrics:

- Adoption rate measures the percentage of developers or services that have migrated to the platform. It tells you whether the platform has enough reach to influence DORA metrics and North Star signals. Without adoption, the platform cannot significantly impact software delivery.

- Onboarding velocity measures the time required for a new hire or service to complete the first deployment. It reflects both platform simplicity and the quality of the self-service tools. Slow onboarding increases cost and cognitive load rather than reducing them.
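Both impact metrics reduce to straightforward arithmetic. The sketch below assumes you already have a set of migrated services and, for each new hire or service, a start date and a first-deployment date. The function names and inputs are hypothetical, and using the median (rather than the mean) is a design choice that keeps one unusually slow onboarding from skewing the result.

```python
from datetime import date
from statistics import median


def adoption_rate(services_on_platform: set[str], all_services: set[str]) -> float:
    """Share of services that have migrated to the platform."""
    if not all_services:
        return 0.0
    return len(services_on_platform & all_services) / len(all_services)


def median_onboarding_days(start_and_first_deploy: list[tuple[date, date]]) -> float:
    """Median days from start (hire date or service creation) to first production deployment."""
    return median((first - start).days for start, first in start_and_first_deploy)


all_services = {"api", "web", "worker", "cron"}
on_platform = {"api", "web", "worker"}
onboarding = [
    (date(2025, 3, 3), date(2025, 3, 10)),   # 7 days
    (date(2025, 3, 3), date(2025, 3, 5)),    # 2 days
    (date(2025, 3, 17), date(2025, 4, 1)),   # 15 days
]
print(adoption_rate(on_platform, all_services))   # 0.75
print(median_onboarding_days(onboarding))         # 7
```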

Use driver metrics to identify bottlenecks

Reviewed weekly or biweekly by the platform team, driver metrics are system-level signals that help identify bottlenecks before they impact outcome metrics. For example, a spike in CI infrastructure overhead this week can degrade change lead time by next month if left unaddressed.

The table below maps each DORA metric to a corresponding driver metric and clarifies their relationship.

| DORA metric | Driver metric | Why it matters |

|---|---|---|

| Change lead time | CI infrastructure overhead | The “Black Box” delay: Aside from code efficiency, change lead time latency is influenced by a number of infrastructure-level tasks, including runner scheduling, source checkout, cache restoration, and queuing time. These systemic delays remain entirely outside of the developer’s ability to optimize. |

| Deployment frequency | Automated risk mitigation | Confidence is the bottleneck: Without automated error detection and rollbacks, teams have to babysit deployments. Developers have no choice but to ensure that their shipping speed doesn’t exceed a pace they can personally monitor. |

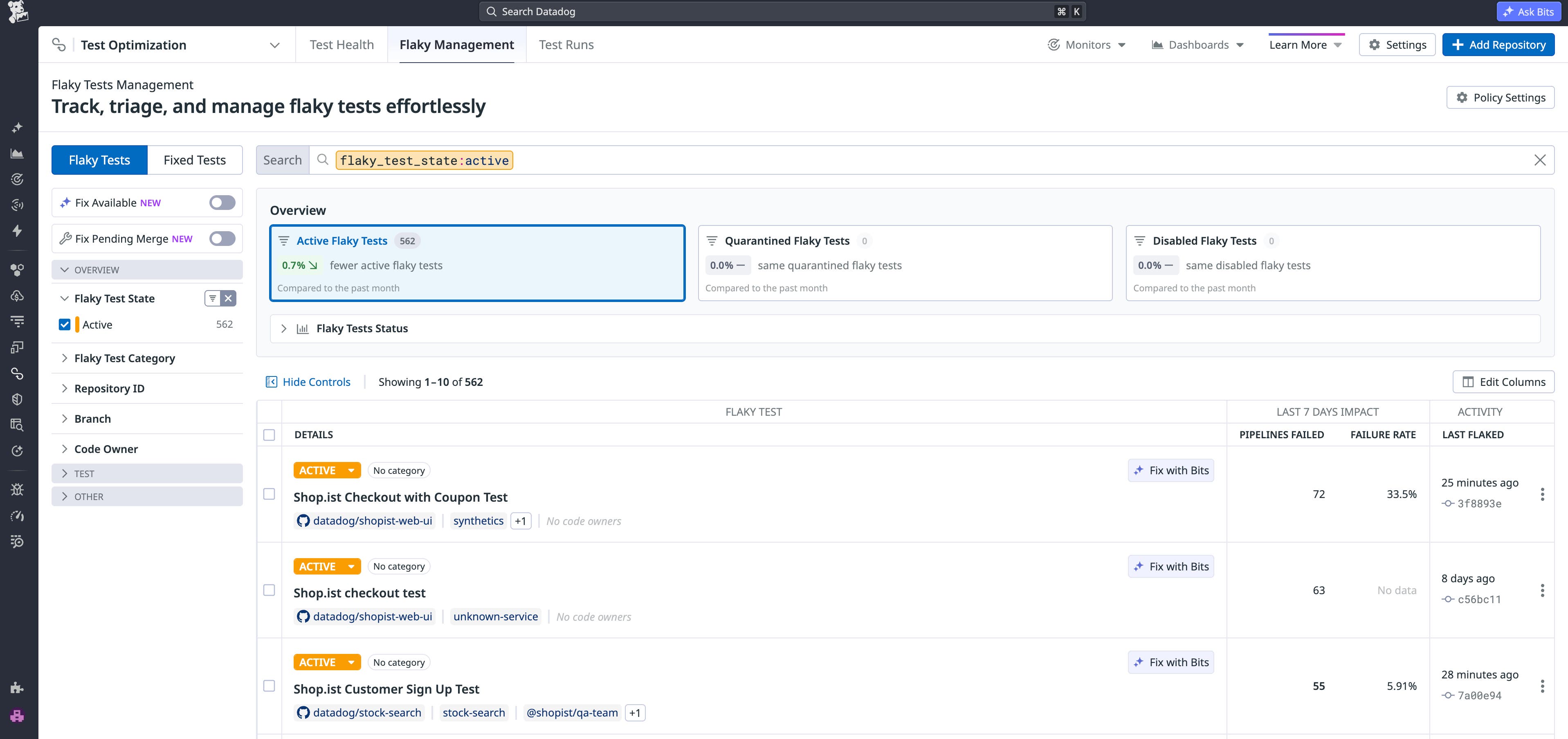

| Change failure rate (CFR) | Test flake rate | Erodes system trust: While a high test flake rate can impact change lead time and deployment frequency first, it can also affect CFR. High flakiness causes alert fatigue in the CI pipeline, which causes developers to think that pipeline flakes are just noise. If developers start to ignore failures or rerun jobs blindly until they pass, bugs can slip into production. |

| Failed deployment recovery time | Service catalog health | Extends orientation time: The biggest delay in a crisis is often finding the right owner or runbook. High documentation freshness increases recovery speed. |

| Deployment rework rate | Deployment batch size | Increases bug exposure: Large deployments are challenging to review thoroughly and increase the probability that bugs will reach production. When bugs result in incidents or user reports, engineers must trigger reactive deployments, which consume resources that could otherwise support new feature development. |

Additional examples of driver metrics include the following:

- CI queue time: The time that jobs spend waiting for a runner before pipeline execution. Undersized or oversubscribed runners cause job backlogs, increasing change lead time. This metric is distinct from CI infrastructure overhead, which measures execution time after the pipeline starts.

- Quality gate pass rate: The percentage of code changes that successfully pass automated quality checks before merge. A low pass rate indicates that defects are reaching later stages of the pipeline, where they are costlier to catch and more likely to reach production. Monitoring the quality gate pass rate offers platform teams an early warning before CFR is impacted.

- Environment provisioning time: The time required to provision a development or staging environment. Excessive provisioning time leads developers to share environments or skip parity checks, which increases CFR.

- Unplanned work ratio: The ratio of engineering time allocated to reactive tasks versus planned delivery. An increase in this ratio reduces deployment frequency because teams need to focus on incident response and rework rather than shipping.
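Because CI queue time and CI infrastructure overhead are easy to conflate, here is a minimal sketch that derives both from a single job record. The `CIJob` fields and the set of stage names treated as infrastructure overhead are assumptions; map them to whatever your CI provider actually reports. Queue time is measured before execution begins, and overhead is summed from stages after the job starts, matching the distinction drawn above.

```python
from dataclasses import dataclass
from datetime import datetime

# Stage names treated as infrastructure overhead; this set is an assumption to adapt.
INFRA_STAGES = {"runner_setup", "checkout", "cache_restore"}


@dataclass
class CIJob:
    queued_at: datetime
    started_at: datetime
    stage_seconds: dict[str, float]   # stage name -> duration, after the job started


def queue_time_s(job: CIJob) -> float:
    """Time the job waited for a runner before execution began."""
    return (job.started_at - job.queued_at).total_seconds()


def infra_overhead_s(job: CIJob) -> float:
    """Time spent on infrastructure stages rather than the developer's own build/test steps."""
    return sum(s for name, s in job.stage_seconds.items() if name in INFRA_STAGES)


job = CIJob(
    queued_at=datetime(2025, 5, 1, 10, 0, 0),
    started_at=datetime(2025, 5, 1, 10, 3, 30),
    stage_seconds={"runner_setup": 20.0, "checkout": 35.0, "cache_restore": 65.0, "tests": 240.0},
)
print(queue_time_s(job))       # 210.0
print(infra_overhead_s(job))   # 120.0
```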

Collecting driver metrics manually requires building and maintaining multiple integrations across your version control system, CI/CD pipeline, and incident management platform, and maintenance demands grow as toolchains evolve. Because Datadog DORA Metrics ingests this data automatically, signals like PR review latency and automated risk mitigation are captured as part of the change lead time and CFR analysis.

When you connect Datadog to a CI provider, like GitLab, Jenkins, or GitHub Actions, Datadog Continuous Integration (CI) Visibility automatically collects pipeline performance metrics, including CI infrastructure overhead. Test flake rate can be monitored through Datadog Test Optimization, which detects flaky tests at the commit level.
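To illustrate what commit-level flake detection means, the sketch below treats a test as flaky when it both passed and failed on the same commit. This is a common definition, but the `(commit_sha, test_name, passed)` tuple format and the function names are hypothetical, and the sketch does not describe how Test Optimization is implemented. A test that fails consistently on a commit is not counted as flaky, since that pattern more likely points to a genuine regression.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    `runs` is an iterable of (commit_sha, test_name, passed) tuples.
    """
    outcomes = defaultdict(set)
    for sha, test, passed in runs:
        outcomes[(sha, test)].add(passed)
    return {test for (sha, test), seen in outcomes.items() if seen == {True, False}}


def flake_rate(runs):
    """Share of distinct tests that were flaky on at least one commit."""
    runs = list(runs)
    all_tests = {test for _, test, _ in runs}
    return len(flaky_tests(runs)) / len(all_tests) if all_tests else 0.0


runs = [
    ("a1", "test_login", False), ("a1", "test_login", True),   # mixed results on a1: flaky
    ("a1", "test_cart", True),
    ("b2", "test_cart", False), ("b2", "test_cart", False),    # consistent failure: likely a real regression
    ("b2", "test_search", True),
]
print(flaky_tests(runs))   # {'test_login'}
print(flake_rate(runs))    # 1 of 3 distinct tests is flaky
```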

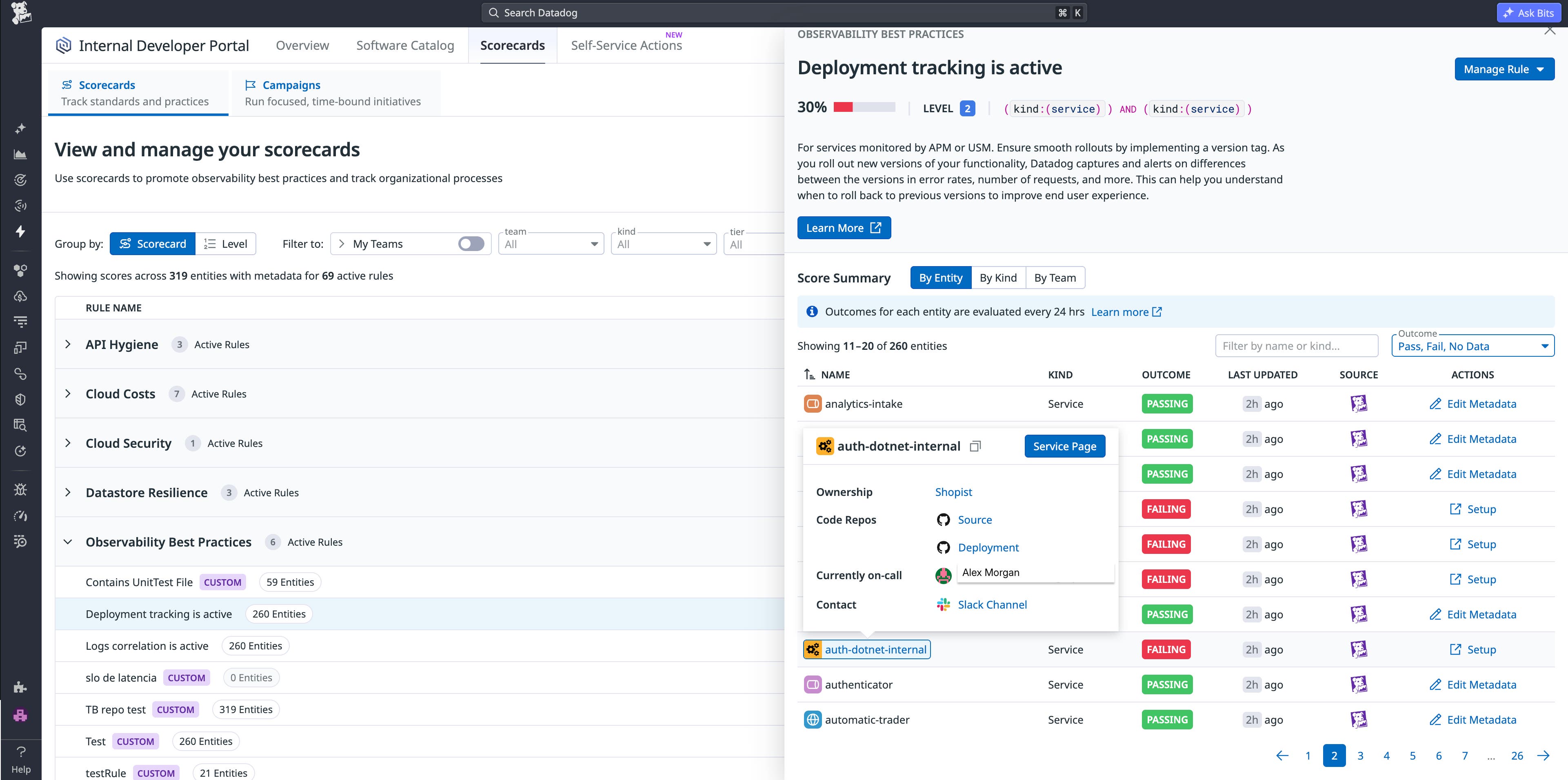

Service catalog health can be maintained in the Software Catalog of Datadog’s Internal Developer Portal, where Scorecards evaluate the freshness and completeness of service documentation, ownership responsibilities, and on-call information. Datadog DORA Metrics also tracks deployment batch size by monitoring commits per deployment over time.
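Datadog DORA Metrics tracks batch size for you; the sketch below only illustrates the underlying arithmetic. It assumes a linear main-branch history ordered from oldest to newest and a list of deployed commit SHAs in deployment order. Both inputs and the function name are hypothetical, and merge-heavy histories would need a graph-aware count instead.

```python
def commits_per_deployment(history: list[str], deployed_shas: list[str]) -> list[int]:
    """Batch size of each deployment, assuming a linear history ordered oldest -> newest.

    The first deployment counts every commit up to and including its SHA.
    """
    position = {sha: i for i, sha in enumerate(history)}
    sizes, previous = [], -1
    for sha in deployed_shas:
        current = position[sha]
        sizes.append(current - previous)   # commits shipped since the previous deployment
        previous = current
    return sizes


history = ["c1", "c2", "c3", "c4", "c5", "c6", "c7"]
deployed = ["c2", "c3", "c7"]
print(commits_per_deployment(history, deployed))   # [2, 1, 4]
```

A rising trend in these counts suggests that changes are queuing up between releases, which is the pattern the table above links to deployment rework rate.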

Use diagnostic metrics to investigate root causes

Diagnostic metrics are situational, investigative tools used by engineers and platform team individual contributors (ICs) such as platform product managers. Diagnostics are granular, point-in-time signals; they are not intended to identify trends. Examples of diagnostics include error logs, trace spans, resource utilization metrics, readiness scorecards, and AI-generated investigation outputs, like error summaries.

Standardize diagnostic metric coverage across services

Instrumentation libraries vary in maturity by language and framework, and individual service teams cannot predict which data an investigation will need across service boundaries. Without a platform-enforced standard, diagnostic metric coverage and quality will be inconsistent, and gaps tend to surface only during an incident.

Platform teams that embed auto-instrumentation into their golden paths and enforce observability coverage through production-readiness scorecards ensure that diagnostic data is consistently available across all services without manual effort. Datadog Scorecards can evaluate service observability coverage, providing platform teams with visibility into instrumentation gaps before incidents occur.
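A production-readiness scorecard is essentially a set of predicates evaluated against each service. The sketch below shows that idea with a hypothetical `Service` record and an illustrative rule set. It does not use the Datadog Scorecards API, and the rules are examples rather than Datadog defaults.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Service:
    name: str
    owner: Optional[str]
    runbook_url: Optional[str]
    tracing_enabled: bool
    log_trace_correlation: bool


# Each rule is a (name, predicate) pair; this rule set is illustrative.
RULES = [
    ("has owner", lambda s: bool(s.owner)),
    ("has runbook", lambda s: bool(s.runbook_url)),
    ("tracing enabled", lambda s: s.tracing_enabled),
    ("logs correlated with traces", lambda s: s.log_trace_correlation),
]


def evaluate(service: Service) -> tuple[float, list[str]]:
    """Return the share of rules passed and the names of the failing rules."""
    failing = [name for name, check in RULES if not check(service)]
    return 1 - len(failing) / len(RULES), failing


checkout = Service("checkout", "team-payments", "https://wiki.example.com/checkout", True, False)
print(evaluate(checkout))   # (0.75, ['logs correlated with traces'])
```

Running a check like this on every service, rather than waiting for an incident, is what turns instrumentation gaps into a visible backlog.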

As outlined previously, diagnostic metrics such as the number of times PRs were sent back for changes, the time between review cycles, and the number of approvals required before a merge are useful during investigations. Other examples of diagnostics include the following metrics:

- Test-level failure analysis: If the test flake rate increases, conducting test-level failure analysis helps distinguish genuine regressions from unreliable tests. Identifying failed tests, affected branches, and the commit that introduced the failure enables intervention and prevents disruption to other developer pipelines.

- CI pipeline stage analysis: If CI infrastructure overhead increases, pinpointing the stage responsible, such as runner scheduling, source checkout, cache restoration, or job execution, focuses remediation efforts.

- Deployment diff: When CFR increases, reviewing the specific commits within the failed deployment enables engineers to identify the code change causing the failure during an incident, so they can initiate a rollback or deploy a targeted fix.
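For CI pipeline stage analysis, one simple approach is to compare the median duration of each stage in a recent window against a baseline window and rank stages by how much they slowed down. The sketch below assumes each run is a hypothetical dict mapping stage name to duration in seconds. CI Visibility presents this breakdown directly, so the code only illustrates the comparison.

```python
from statistics import median


def stage_regressions(baseline_runs, recent_runs):
    """Rank pipeline stages by the increase in median duration (seconds), largest first.

    Each run is a dict mapping stage name -> duration in seconds.
    """
    stages = set().union(*baseline_runs, *recent_runs)
    deltas = {}
    for stage in stages:
        before = [r[stage] for r in baseline_runs if stage in r]
        after = [r[stage] for r in recent_runs if stage in r]
        if before and after:   # skip stages missing from either window
            deltas[stage] = median(after) - median(before)
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)


baseline = [
    {"runner_scheduling": 15, "checkout": 20, "cache_restore": 30, "job_execution": 300},
    {"runner_scheduling": 18, "checkout": 22, "cache_restore": 28, "job_execution": 310},
    {"runner_scheduling": 12, "checkout": 21, "cache_restore": 32, "job_execution": 290},
]
recent = [
    {"runner_scheduling": 16, "checkout": 21, "cache_restore": 140, "job_execution": 305},
    {"runner_scheduling": 14, "checkout": 23, "cache_restore": 150, "job_execution": 298},
    {"runner_scheduling": 17, "checkout": 20, "cache_restore": 135, "job_execution": 302},
]
ranking = stage_regressions(baseline, recent)
print(ranking[0])   # ('cache_restore', 110)
```

Medians are used so that a single unusually slow run does not dominate the comparison.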

All of these diagnostic metrics are accessible in Datadog. Test Optimization provides test-level failure details by commit and branch. CI Visibility breaks down pipeline execution time by stage and job, enabling you to identify the cause of latency. Datadog DORA Metrics reports the commits associated with a failed deployment, so you can quickly narrow the scope of an issue. Correlating the commits with error rates and performance changes in Datadog APM deployment tracking helps you determine the quickest path to remediation.

Recognize common platform metrics pitfalls

You need a robust feedback loop to measure platform effectiveness, but the way you treat the metrics is just as important as the metrics themselves. At every stage of platform maturity, teams are susceptible to common pitfalls that can undermine trust, misinform decision-making, or hold them accountable to service level objectives (SLOs) that no longer align with organizational goals.

Avoid optimizing driver metrics in isolation

Driver metrics are monitored to explain changes in your outcome metrics. Turning them into isolated targets invites Goodhart’s Law into your platform: when a measure becomes a target, it ceases to be a good measure.

For example, CI duration helps explain change lead time. If your team decouples CI duration from change lead time and sets an isolated goal of under 5 minutes, the team might hit it through legitimate improvements like caching and parallelization, or simply by cutting test coverage. Cutting coverage could let bugs reach production and increase deployment failures, which raises your CFR and, ultimately, degrades the user experience. Keeping driver metric goals anchored to outcomes makes it much harder to meet them in ways that harm the broader goals they are meant to serve.
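One way to anchor a driver goal to an outcome is to count it as met only when an outcome guardrail also holds. The sketch below pairs the hypothetical 5-minute CI duration target with a change failure rate guardrail. The function, its parameters, and the two-percentage-point tolerance are illustrative assumptions, not a prescribed policy.

```python
def driver_goal_met(ci_minutes: float, ci_target: float,
                    cfr_now: float, cfr_baseline: float,
                    max_cfr_increase: float = 0.02) -> bool:
    """A CI duration goal counts as met only if change failure rate has not regressed.

    `max_cfr_increase` is an absolute tolerance (0.02 = two percentage points).
    """
    driver_ok = ci_minutes <= ci_target
    outcome_ok = cfr_now <= cfr_baseline + max_cfr_increase
    return driver_ok and outcome_ok


# Faster CI, but CFR jumped from 5% to 9%: the goal is not considered met.
print(driver_goal_met(4.5, 5.0, cfr_now=0.09, cfr_baseline=0.05))   # False
# Faster CI with CFR roughly stable: the goal is met.
print(driver_goal_met(4.5, 5.0, cfr_now=0.06, cfr_baseline=0.05))   # True
```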

Don’t promote diagnostic metrics to KPIs

Diagnostic metrics are investigative in nature and can answer specific questions. You review and analyze them when issues arise to help you identify the cause.

For example, a team’s review cycle count can provide valuable insight into PR review latency. But if the metric is added to a quarterly dashboard, it will lose the context that makes it valuable. Making a diagnostic metric like this a key performance indicator (KPI) pressures engineers to optimize for arbitrary goals that do not holistically reflect platform efficiency.

Retire metrics that have served their purpose

Metrics have a life cycle. Monitoring CI slowdown may be useful during an incident, but once the incident is resolved, the metric should be retired. When stale metrics accumulate across dashboards, reports, and performance review cycles, they can start to obscure the most important signals. By auditing metrics periodically to deprecate what’s stale, you ensure that every metric has a clear reason for being tracked.
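A periodic audit can be as simple as flagging metrics that nobody can explain or that nobody has looked at recently. The sketch below assumes a hypothetical inventory in which each metric records its purpose and the date it was last viewed. The 90-day idle cutoff is an arbitrary default.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class TrackedMetric:
    name: str
    purpose: Optional[str]   # the question this metric answers; None if nobody can say
    last_viewed: date


def retirement_candidates(metrics, today: date, max_idle_days: int = 90):
    """Flag metrics with no stated purpose or no views within `max_idle_days`."""
    cutoff = today - timedelta(days=max_idle_days)
    return [m.name for m in metrics if m.purpose is None or m.last_viewed < cutoff]


metrics = [
    TrackedMetric("change lead time", "Are we shipping quickly?", date(2025, 6, 28)),
    TrackedMetric("CI slowdown during March incident", "Diagnose the incident", date(2025, 2, 10)),
    TrackedMetric("legacy build count", None, date(2025, 6, 1)),
]
print(retirement_candidates(metrics, today=date(2025, 6, 30)))
# ['CI slowdown during March incident', 'legacy build count']
```

Flagged metrics are candidates for review, not automatic deletion; an owner should confirm that each one has truly served its purpose.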

Put platform engineering metrics into practice

As platform engineering evolves into a standard organizational capability, selecting the right metrics becomes as important as the tools that produce them. Teams that track outcome metrics to gauge overall platform health, interpret driver metrics as explanations for those outcomes, and use diagnostics as investigative signals are better positioned to turn data into actionable insights and demonstrate the organizational value of platform investments.

To learn more, visit the DORA Metrics, Test Optimization, CI Visibility, and Internal Developer Portal documentation. If you’re new to Datadog, get started with a 14-day free trial.