Tim Knudsen

Teams use observability data to understand why a system behaved the way it did during an outage or a security incident. This visibility is traditionally associated with performance monitoring, but it is equally important for security investigations. Reconstructing an attack path requires knowing how cloud identities, services, and other resources interacted, and teams can obtain this context from observability data—metrics, events, logs, and traces (MELT).

With LLM applications and services now becoming a bigger part of distributed environments, the cost of fragmented tooling and infrastructure blind spots only multiplies. Practices like vibe coding can increase the number of application vulnerabilities, and emerging AI attack techniques can direct systems to respond in unintended ways. At the same time, AI can help teams move faster during investigations by identifying relationships across large volumes of security signals. Both cases stress the need for observability data when monitoring application performance and security.

In this post, we’ll look at how observability data improves the accuracy of security signals and investigations and the role it plays in environments that take advantage of AI’s capabilities.

How we use observability data for threat detection, investigation, and response

If unified visibility is the baseline for understanding cloud incidents, then security signals on their own are limited from the start. Incidents generate multiple streams of authentication, application, and infrastructure data, with each capturing a different moment in the timeline. Without access to both security and observability context, teams are left piecing together this evidence to understand what actually happened.

The Cloudflare breach in late 2023 illustrates the benefits of connecting operational data to security signals during an incident. Attackers used credentials and an access token exposed via a third-party breach to access internal systems—primarily Cloudflare’s self-hosted Atlassian environment. Teams observed unusual authentication flows, reconnaissance activity, and attempts to access other internal systems. No single datapoint told the whole story, but the breadth of available MELT data let responders correlate activity across their systems and reconstruct an accurate timeline of events.

As in the Cloudflare example, teams can use their existing data to answer key questions during security investigations, such as:

- What changed in the system?

- Who or what initiated the change?

- What else did this change affect?

- Which external endpoints were called?

- For AI-powered services, which prompt or tool call initiated the action?
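As a rough illustration of how these questions map onto data, the sketch below merges events from separate sources into one time-ordered view for a single identity. The `Event` fields, the stream names, and the sample records are hypothetical and are not taken from any Datadog schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    source: str   # hypothetical stream name, e.g. "auth", "audit", "network"
    actor: str    # identity that initiated the action
    action: str   # what changed or was attempted
    target: str   # resource or endpoint affected


def build_timeline(streams: Iterable[Iterable[Event]], actor: str) -> list[Event]:
    """Merge several event streams and return one actor's events in time order."""
    merged = [e for stream in streams for e in stream if e.actor == actor]
    return sorted(merged, key=lambda e: e.timestamp)


# Hypothetical sample records from three separate sources.
auth_events = [
    Event(datetime(2024, 1, 1, 9, 0), "auth", "svc-deploy", "login", "sso"),
    Event(datetime(2024, 1, 1, 9, 1), "auth", "alice", "login", "sso"),
]
audit_events = [
    Event(datetime(2024, 1, 1, 9, 5), "audit", "svc-deploy", "update-config", "wiki"),
]
network_events = [
    Event(datetime(2024, 1, 1, 9, 3), "network", "svc-deploy", "outbound-call", "203.0.113.50:443"),
]

timeline = build_timeline([auth_events, audit_events, network_events], "svc-deploy")
for e in timeline:
    print(f"{e.timestamp:%H:%M} [{e.source}] {e.actor} {e.action} -> {e.target}")
```

Each source alone shows one step. Only the merged view shows the login, the outbound call, and the configuration change as a single sequence.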

This need for shared context is why Datadog merged its internal SRE and security teams. Bringing architectural knowledge together with security best practices makes systems less prone to failure. This same principle carries into how we designed our security platform.

We treat cloud security monitoring as a continuation of how teams already observe and analyze their cloud environments, instead of a separate layer. This approach produces security signals that reflect real-time system behavior, which improves their accuracy and gives responders the context they need during incidents. It also supports AI-assisted triage by providing the additional data needed to summarize what changed, who was involved, and what systems were affected.

Applying observability data to threat analysis

When threat analysis is connected to existing observability data, teams can use the same context they already rely on for performance monitoring to link system behavior to an attack path or exploited vulnerability. Observability data also enables AI to play a more helpful role in generating investigation summaries and suggested remediation steps.

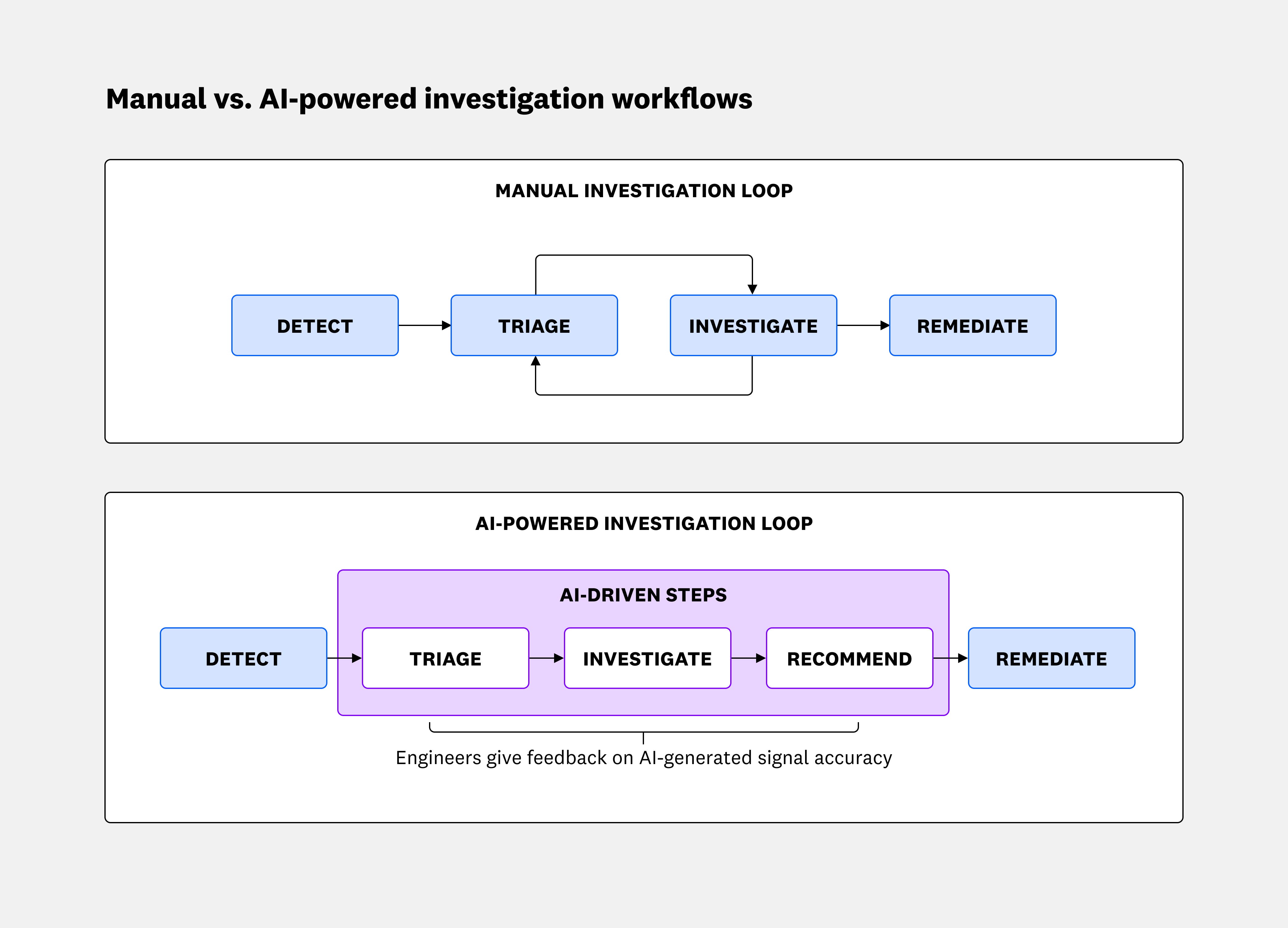

Combining observability data and security signals with AI-powered analysis has an immediate benefit: it creates an easy-to-follow map of events for an incident. This benefit is most visible during the triage and investigation stages, which typically require a slow, back-and-forth process for verifying signal accuracy and priority.

As an example, consider a SIEM signal that flags the attachment of a high-privilege AdministratorAccess policy to a service account, where the source IP is labeled as a “suspicious” residential proxy. A typical investigation could include the following steps:

- Confirm whether the IP belongs to an expected admin and whether the session matches normal sign-in patterns.

- Identify what else happened in the same time frame, such as additional policy changes, newly created access keys, failed authentications, or unusual API calls.

- Review impacted services and resources to understand which workloads and data stores that identity can reach.

- Check for related network behavior, such as activity from unusual geographic locations or spikes in outbound calls.
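The manual steps above can be approximated in a few lines once the relevant events sit in one place. The following is a minimal sketch under stated assumptions: the `triage` function, the dictionary keys, the allowlist, and the sample events are all hypothetical, and the action names are illustrative values only.

```python
from datetime import datetime, timedelta

# Hypothetical allowlist of IPs that admins are expected to use.
KNOWN_ADMIN_IPS = {"203.0.113.10"}


def triage(signal: dict, audit_events: list[dict],
           window: timedelta = timedelta(minutes=30)) -> dict:
    """Collect context for one signal: IP check plus same-identity activity nearby in time."""
    start, end = signal["time"] - window, signal["time"] + window
    related = [
        e for e in audit_events
        if e["identity"] == signal["identity"] and start <= e["time"] <= end
    ]
    return {
        "ip_expected": signal["source_ip"] in KNOWN_ADMIN_IPS,
        "related_actions": sorted({e["action"] for e in related}),
        "resources_touched": sorted({e["resource"] for e in related}),
        "countries": sorted({e["country"] for e in related}),
    }


# Hypothetical signal and audit events.
signal = {
    "time": datetime(2024, 1, 1, 12, 0),
    "identity": "svc-account",
    "source_ip": "198.51.100.7",
    "action": "AttachRolePolicy",
}
audit_events = [
    {"time": datetime(2024, 1, 1, 11, 55), "identity": "svc-account",
     "action": "CreateAccessKey", "resource": "iam:svc-account", "country": "US"},
    {"time": datetime(2024, 1, 1, 12, 10), "identity": "svc-account",
     "action": "GetObject", "resource": "s3:customer-exports", "country": "RO"},
    {"time": datetime(2024, 1, 1, 14, 0), "identity": "svc-account",
     "action": "ListBuckets", "resource": "s3:all", "country": "US"},
    {"time": datetime(2024, 1, 1, 12, 5), "identity": "other-user",
     "action": "CreateAccessKey", "resource": "iam:other-user", "country": "US"},
]

summary = triage(signal, audit_events)
print(summary)
```

The sketch covers only the lookups. Judging whether the combined picture is malicious still falls to a responder or a model.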

AI-powered analysis that uses an environment’s existing observability data can consolidate a complex, multi-step process into a single assessment. As a result, teams can complete security investigations in minutes instead of hours and focus on follow-up actions, such as disabling credentials or enforcing least-privilege controls.

Using observability data to prioritize risks

Teams need access to observability data at every stage of the software development lifecycle (SDLC). With code velocity increasing thanks to AI-assisted development workflows, this data is necessary for quickly prioritizing risks. Only a fraction of critical code vulnerabilities are even worth addressing, so teams need a better understanding of which changes introduce actual risk. Pairing code analysis findings with runtime behavior and connecting them to impacted services helps teams identify legitimate risks earlier in the SDLC. When AI models operate on that same data, they can further reduce false positives and unnecessary delays.
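To make the idea of pairing code analysis findings with runtime behavior concrete, here is a small sketch that re-ranks static findings using runtime context. The `Finding` and `RuntimeContext` types, the weights, and the sample services are assumptions chosen for illustration, not a description of how any product scores risk.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    id: str
    service: str
    severity: str  # as reported by static analysis


@dataclass
class RuntimeContext:
    in_production: bool
    internet_exposed: bool
    code_path_executed: bool  # vulnerable code path observed in traces


# Arbitrary illustrative weights.
SEVERITY_SCORE = {"critical": 4, "high": 3, "medium": 2, "low": 1}


def priority(finding: Finding, ctx: Optional[RuntimeContext]) -> int:
    """Start from static severity and add weight for each runtime risk factor."""
    score = SEVERITY_SCORE[finding.severity]
    if ctx is None:
        return score
    score += 2 if ctx.in_production else 0
    score += 2 if ctx.internet_exposed else 0
    score += 3 if ctx.code_path_executed else 0
    return score


# Hypothetical findings and per-service runtime context.
findings = [
    Finding("F1", "batch-report", "critical"),
    Finding("F2", "checkout-api", "high"),
    Finding("F3", "internal-admin", "medium"),
]
runtime = {
    "batch-report": RuntimeContext(False, False, False),
    "checkout-api": RuntimeContext(True, True, True),
    "internal-admin": RuntimeContext(True, False, True),
}

ranked = sorted(findings, key=lambda f: priority(f, runtime.get(f.service)), reverse=True)
for f in ranked:
    print(f.id, f.service, priority(f, runtime.get(f.service)))
```

In this sample, the finding rated critical by static analysis ends up last because its service is not deployed, exposed, or exercised at runtime.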

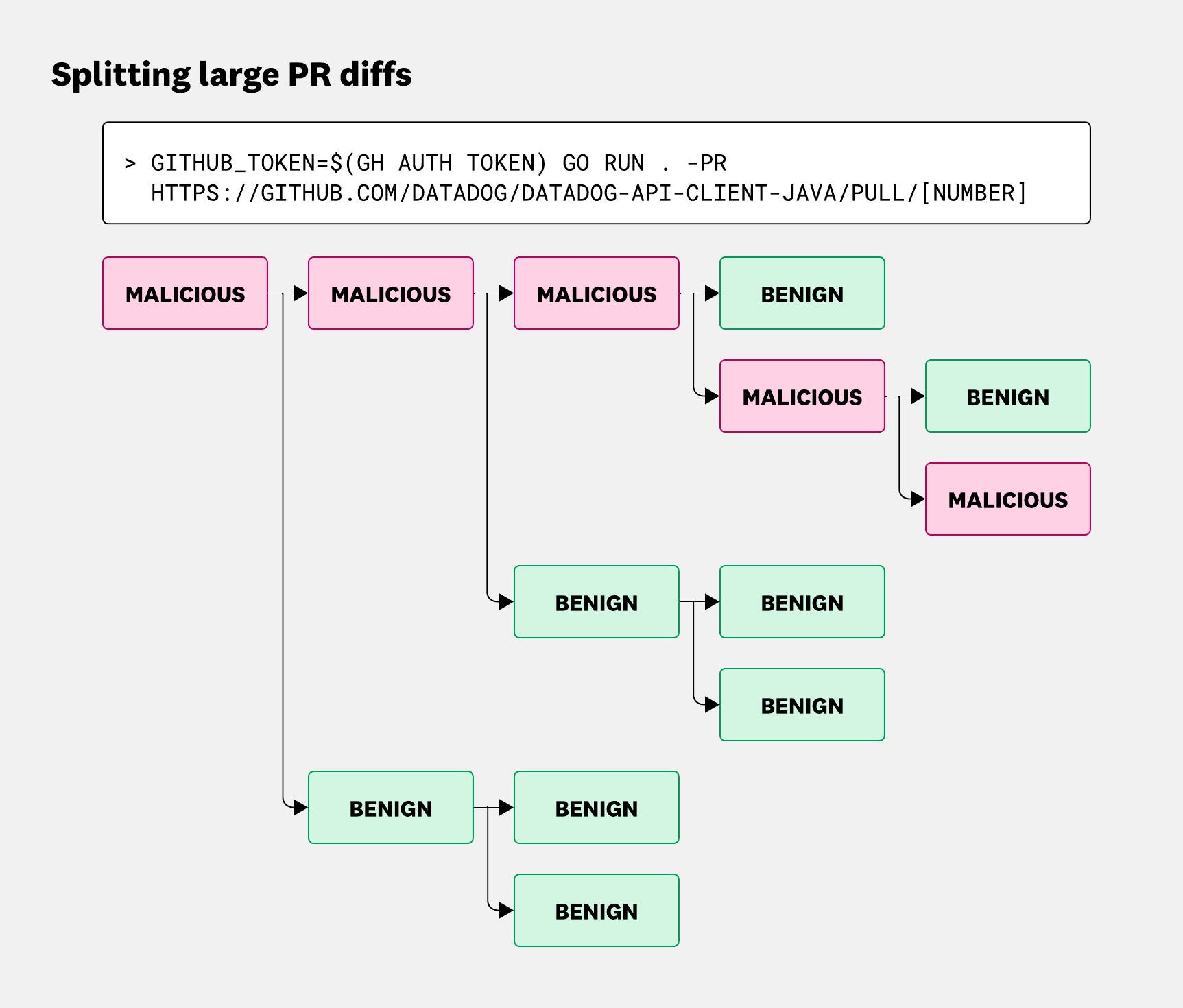

At Datadog, we’ve integrated observability data into code reviews through LLM-based analysis on pull requests. This process enables us to identify code that increases risk before it reaches production. AI is particularly useful for analyzing large PRs because malicious code is often buried under benign changes. We addressed this problem by building a system that methodically reviews code changes in smaller chunks.
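The post does not describe how that system divides a pull request, so the following is only a naive sketch of the general idea: split a unified diff by file, then cap each chunk at a fixed number of lines while repeating the file header for context. The `split_diff` function, the `max_lines` parameter, and the sample diff are hypothetical.

```python
def split_diff(diff_text: str, max_lines: int = 200) -> list[str]:
    """Split a unified diff into per-file sections, then into chunks of at most max_lines body lines."""
    sections: list[list[str]] = []
    for line in diff_text.splitlines():
        # Start a new section at each file boundary (or at the very first line).
        if line.startswith("diff --git ") or not sections:
            sections.append([])
        sections[-1].append(line)

    chunks: list[str] = []
    for section in sections:
        header, body = section[0], section[1:]
        # Repeat the file header so every chunk identifies the file it came from.
        for i in range(0, max(len(body), 1), max_lines):
            chunks.append("\n".join([header, *body[i:i + max_lines]]))
    return chunks


# Hypothetical two-file diff.
sample = "\n".join([
    "diff --git a/app.py b/app.py",
    "--- a/app.py",
    "+++ b/app.py",
    "@@ -1,2 +1,3 @@",
    " import os",
    "+import subprocess",
    " print('hi')",
    "diff --git a/README.md b/README.md",
    "--- a/README.md",
    "+++ b/README.md",
    "@@ -1 +1 @@",
    "-old",
    "+new",
])

chunks = split_diff(sample, max_lines=3)
for n, chunk in enumerate(chunks, start=1):
    print(f"--- chunk {n} ---\n{chunk}")
```

A fixed line cap can cut a hunk in half. A more careful splitter would break on hunk boundaries or function boundaries so that each chunk stays reviewable on its own.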

To distinguish malicious code from benign updates, models require context across both real-world exploits and typical development behavior. Therefore, we continually update datasets that include observed and simulated attacks as well as everyday PRs. This step helps reduce false positives so teams can efficiently surface malicious behavior from day-to-day code changes.

Build resilient systems by unifying observability and security data

Cloud environments will continue to evolve with the adoption of new technologies, and integrating AI systems only adds another layer of complexity. Each layer consequently creates additional monitoring blind spots and expands the cloud attack surface. This means that observability data should be treated as the central building block for cloud security monitoring, especially in environments that take advantage of AI’s capabilities.

We’ve taken this approach from the start by enriching security signals and AI-powered threat analysis with observability data. Datadog processes trillions of datapoints per hour across more than 32,000 customer environments worldwide, supporting more than 1,000 products and services. Over the past decade, we’ve seen how this visibility provides the context that teams need to accurately interpret, investigate, and remediate security events.

For more information about how we designed cloud security monitoring to take advantage of observability data, see our documentation. For a deeper look into AI-powered security workflows, read our post on how Bits AI Security Analyst automates Cloud SIEM investigations and our post on Datadog Code Security’s AI-driven vulnerability management.

And if you don’t already have a Datadog account, you can sign up for a free 14-day trial.