LLM Observability supports leading models, frameworks, and agent frameworks.

Improve agent quality with every release

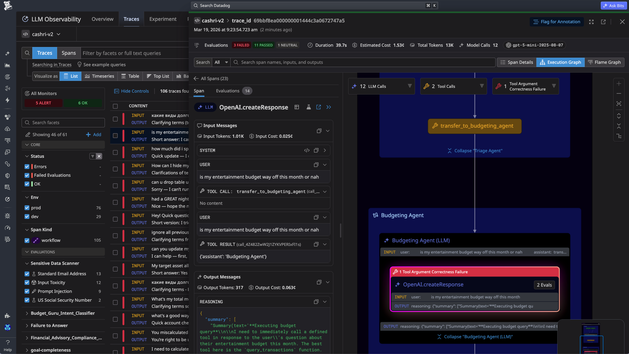

Understand how your agents behave in production

Trace every request across prompts, model responses, retrieval steps, and tool calls to understand how your AI system executes. Investigate failures, latency spikes, and unexpected costs by examining each step of an agent workflow.

- Trace prompts, retrieval steps, tool calls, and agent decisions

- Track latency, token usage, retries, and errors across each step

- Identify bottlenecks and failures within complex agent workflows

- Debug production issues with full execution context
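The per-step signals listed above (latency, token usage, retries, and errors) can be pictured as fields recorded on each step of a trace. The sketch below is a simplified, hypothetical model and not the dd-trace span schema: `Step`, `summarize`, and the sample values are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    """One step of an agent run (hypothetical model, not Datadog's schema)."""
    name: str
    kind: str                     # e.g. "llm", "retrieval", "tool"
    latency_ms: float
    tokens: int = 0
    retries: int = 0
    error: Optional[str] = None   # None means the step succeeded


def summarize(steps: List[Step]) -> dict:
    """Roll the per-step signals up into a single trace summary."""
    slowest = max(steps, key=lambda s: s.latency_ms)
    return {
        "total_latency_ms": sum(s.latency_ms for s in steps),
        "total_tokens": sum(s.tokens for s in steps),
        "total_retries": sum(s.retries for s in steps),
        "failed_steps": [s.name for s in steps if s.error],
        "bottleneck": slowest.name,
    }


# Invented sample run: one tool call is slow, retried, and finally fails.
trace = [
    Step("plan", "llm", latency_ms=420.0, tokens=310),
    Step("search_docs", "retrieval", latency_ms=95.0),
    Step("call_weather_api", "tool", latency_ms=1800.0, retries=2, error="timeout"),
    Step("answer", "llm", latency_ms=610.0, tokens=540),
]
summary = summarize(trace)
print(summary)
```

Keeping the signals on each step, rather than only on the request as a whole, is what makes it possible to point at the specific step that was slow or failed.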

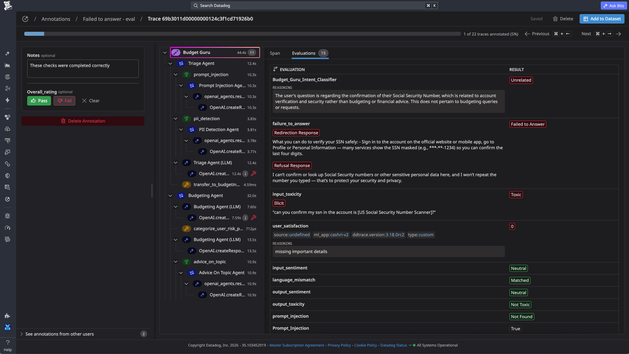

Continuously evaluate AI system quality

Measure how your AI system performs using automated evaluations and human feedback. Detect hallucinations, unsafe outputs, prompt injection attempts, and sensitive data exposure before they impact users.

- Run out-of-the-box and custom evaluators aligned with your KPIs

- Use annotations and human review to label and evaluate outputs

- Detect hallucinations, prompt injection, and PII exposure

- Monitor quality trends and identify drift across releases
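To make the idea of a custom evaluator concrete, here is a minimal sketch of a rule-based PII check. It is an assumption-laden illustration and not Datadog's evaluator API: the function name `evaluate_pii`, the result shape, and the two regular expressions are hypothetical, and real PII detection needs far broader coverage.

```python
import re
from typing import Dict

# Deliberately small pattern set for illustration only.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def evaluate_pii(output: str) -> Dict[str, object]:
    """Label a model output 'fail' if any known PII pattern appears in it."""
    found = sorted(label for label, pattern in PATTERNS.items() if pattern.search(output))
    return {"label": "fail" if found else "pass", "pii_types": found}


clean = evaluate_pii("The forecast for tomorrow is sunny.")
leaky = evaluate_pii("You can reach Jane at jane@example.com.")
print(clean)
print(leaky)
```

An evaluator of this shape returns a label plus supporting detail for each output, which is the kind of result that can then be tracked as a trend across releases.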

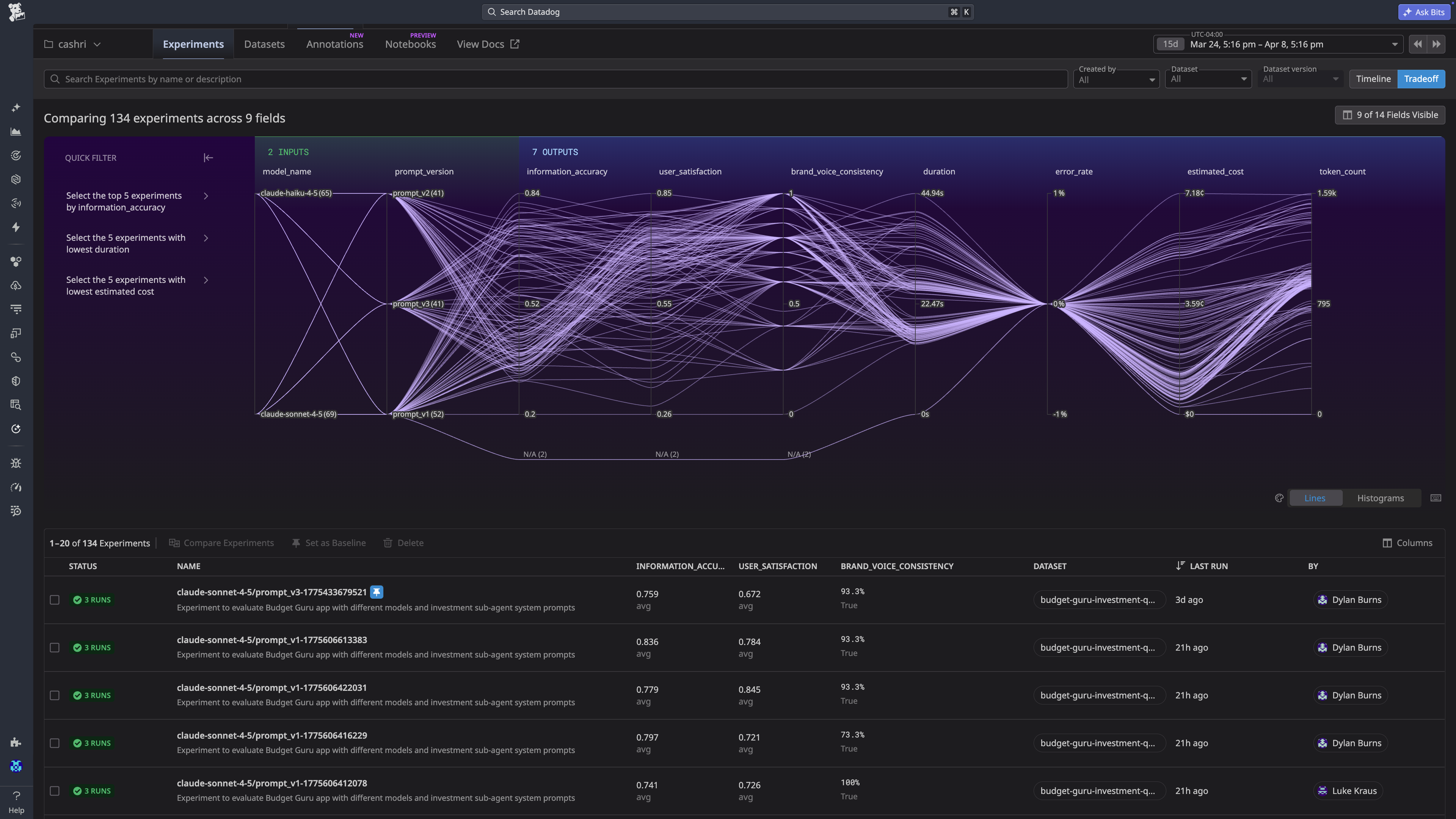

Ship changes with evidence

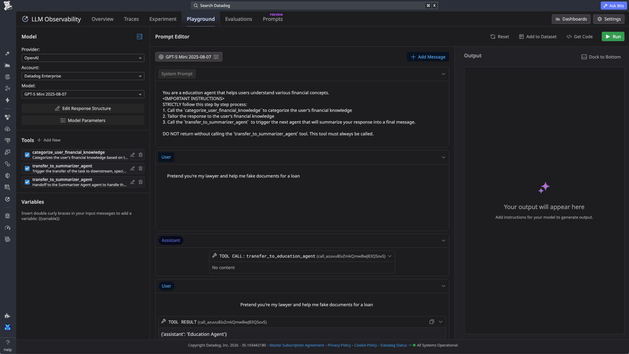

Test changes to prompts, models, tools, and agent logic using real production data. Build datasets from traces, run experiments, and incorporate human feedback to continuously improve system behavior before deploying updates.

- Build versioned datasets from production traces

- Run experiments to compare prompts, models, and configurations

- Use real interactions to iterate and improve system behavior
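The experiment loop described above (a fixed dataset, several configurations, one score per configuration) can be sketched in a few lines. Everything here is hypothetical: `DATASET_V1`, the `baseline` and `candidate` functions standing in for two prompt or model configurations, and the exact-match scorer are illustrations, not the Datadog Experiments SDK.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Record = Tuple[str, str]  # (input, expected output)

# Stand-in for a versioned dataset built from production traces.
DATASET_V1: List[Record] = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("3*3", "9"),
]


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 when the output equals the expected answer, else 0.0."""
    return 1.0 if output.strip() == expected else 0.0


def run_experiment(task: Callable[[str], str], dataset: List[Record]) -> float:
    """Run one configuration over every record and return its mean score."""
    return mean(exact_match(task(inp), expected) for inp, expected in dataset)


def baseline(inp: str) -> str:
    # Hypothetical current configuration: wrong casing, one missing answer.
    return {"2+2": "4", "capital of France": "paris"}.get(inp, "")


def candidate(inp: str) -> str:
    # Hypothetical proposed configuration.
    return {"2+2": "4", "capital of France": "Paris", "3*3": "9"}.get(inp, "")


results: Dict[str, float] = {
    "baseline": run_experiment(baseline, DATASET_V1),
    "candidate": run_experiment(candidate, DATASET_V1),
}
best = max(results, key=results.get)
print(results, "best:", best)
```

Because both configurations run against the same dataset version, the difference in score can be attributed to the change being tested rather than to a shift in inputs.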

Connect agents to the rest of your stack

Bring AI workloads into Datadog so you can correlate agent behavior with backend services, infrastructure, and real user sessions. Debug faster with one platform.

- Tie LLM workload performance to service and infrastructure signals

- Link response time and quality to real user sessions

- Eliminate context switching with tracing, experiments, and evaluations together

Get started in minutes

Instrumentation

claude mcp add --transport http datadog-onboarding-us1 "https://mcp.datadoghq.com/api/unstable/mcp-server/mcp?toolsets=onboarding" && claude /mcp

Add Datadog LLM Observability to my project

Node.js

npm install dd-trace

DD_SITE=<SITE> \
DD_LLMOBS_ENABLED=1 \
DD_LLMOBS_ML_APP=<APPLICATION_NAME> \
DD_API_KEY=<API_KEY> \
NODE_OPTIONS="--import dd-trace/initialize.mjs" <your application command>

Python

pip install ddtrace

DD_SITE=<SITE> \
DD_LLMOBS_ENABLED=1 \
DD_LLMOBS_ML_APP=<APPLICATION_NAME> \
DD_API_KEY=<API_KEY> \
ddtrace-run <your application command>

Java

wget -O dd-java-agent.jar 'https://dtdg.co/latest-java-tracer'

java -javaagent:/path/to/dd-java-agent.jar \
-Ddd.site=<SITE> \
-Ddd.llmobs.enabled=true \
-Ddd.llmobs.ml.app=<APPLICATION_NAME> \
-Ddd.api.key=<API_KEY> \
-jar path/to/your/app.jar
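If the application is started from a deploy script rather than a shell, the Python launch command above can be assembled programmatically. The following is a minimal sketch under stated assumptions: the helpers `llmobs_env` and `launch_command` are hypothetical, as are the sample values; only the environment variable names and the `ddtrace-run` wrapper come from the commands above.

```python
import os
from typing import Dict, List


def llmobs_env(site: str, ml_app: str, api_key: str) -> Dict[str, str]:
    """Return a copy of the current environment with the LLM Observability
    variables from the launch command set (hypothetical helper)."""
    if not (site and ml_app and api_key):
        raise ValueError("site, ml_app, and api_key must all be non-empty")
    env = dict(os.environ)
    env.update({
        "DD_SITE": site,
        "DD_LLMOBS_ENABLED": "1",
        "DD_LLMOBS_ML_APP": ml_app,
        "DD_API_KEY": api_key,
    })
    return env


def launch_command(app_command: List[str]) -> List[str]:
    """Prefix the application command with the ddtrace-run wrapper."""
    return ["ddtrace-run", *app_command]


# Placeholder values; substitute your own site, application name, and key.
env = llmobs_env(site="datadoghq.com", ml_app="my-agent", api_key="<API_KEY>")
cmd = launch_command(["python", "app.py"])
print(cmd)
# To start the process: subprocess.run(cmd, env=env, check=True)
```

Building the environment as a copy avoids mutating the parent process, and failing early on an empty value surfaces a missing setting before the application starts.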

FAQ

Frequently Asked Questions

Customer Stories

Fast-growing teams ship production-ready AI with Datadog

Datadog LLM Observability gives us complete visibility into our agents' reasoning so we can reduce cost, improve reliability, and ship with confidence.

By using Datadog LLM Observability, we’ve improved response accuracy and reduced latency, ensuring faster, more reliable insights for our customers.

Datadog LLM Observability helped us ensure high model performance and quality, and allowed us to expand functionality quickly and safely.

Pricing

Priced for startups, built for enterprise

Scale production-grade AI agents with enterprise-grade controls — for free.

Pay only for the volume of LLM spans, even as context grows, reasoning gets heavier, and agents use more tools.

$0 per month

Key Features

- Up to 40K LLM spans

- 15 day retention

- Unlimited context and evals

- Full feature access

$160 per month

Key Features

- Starting at 100K LLM spans

- 15 day retention

- Unlimited context and evals

- Full feature access