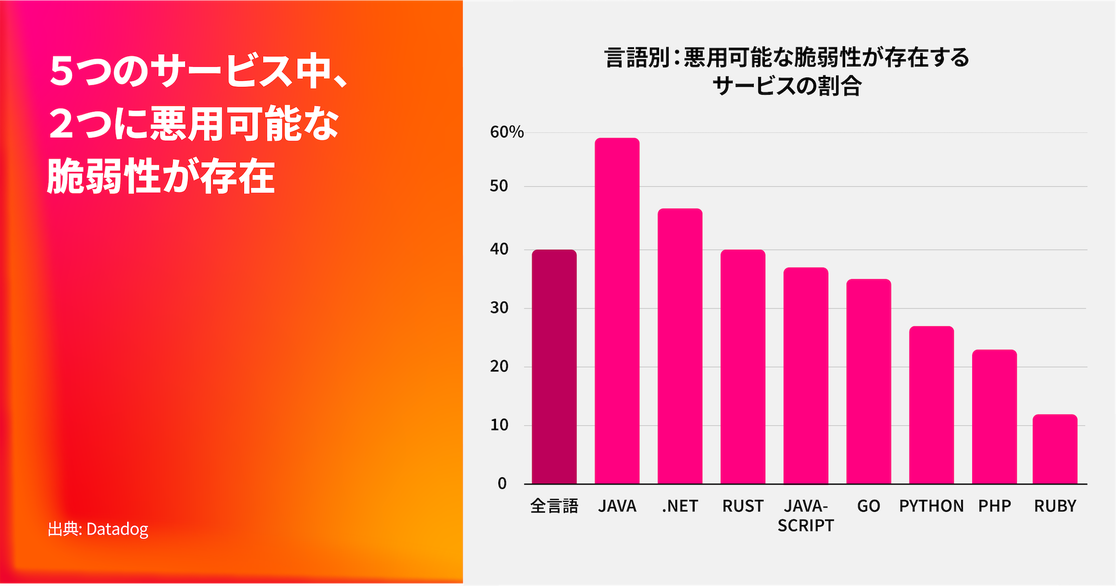

Nearly every organization has known, exploitable vulnerabilities in services running in production

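87% of organizations have at least one exploitable vulnerability, affecting **40% of all services**. These vulnerabilities are most common in Java services (59%), followed by .NET (47%) and Rust (40%). Vulnerable services are even more likely to be compromised when the vulnerability is known to be actively exploited in the wild.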

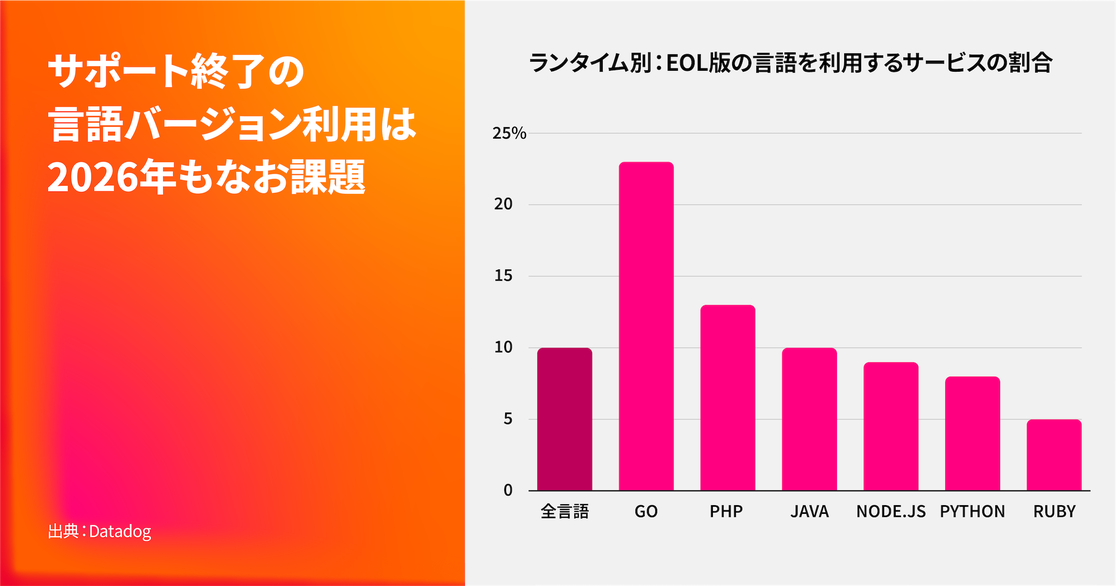

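Keeping languages and runtimes up to date is a basic defense against exploitable vulnerabilities in applications and services, and it also makes it easier to keep dependencies current. Once an application falls several versions behind, upgrading to the latest release takes substantial time, cost, and effort. As a result, the upgrade is often deferred, and many applications end up still running on an end-of-life (EOL) version.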

Globally, 10% of services run on at least one EOL version of a language or runtime. By language, Java sits in the middle at 10%, while Go is at 23% and PHP at 13%.

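A closer look suggests that how aggressive each language's EOL policy is may play a role. Go, for example, has a relatively strict EOL policy that limits support for each version to one year, whereas PHP versions are supported for four years. This difference is likely one reason the share is higher for Go than for other languages.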

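When an organization uses an EOL version of a language or runtime, 50% of its services built in that language have exploitable vulnerabilities. That is clearly higher than the 37% seen when no EOL version is in use.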
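To make the effect of support windows concrete, here is a minimal sketch of an EOL check. The window lengths are taken only from the figures quoted above (about one year for Go, about four years for PHP); the `SUPPORT_WINDOW_DAYS` table and the `is_eol` helper are hypothetical names, and real EOL policies have more nuance than a fixed number of days.

```python
from datetime import date, timedelta

# Assumption: support windows approximated from the figures quoted in the text.
# Real language policies are more nuanced than a fixed day count.
SUPPORT_WINDOW_DAYS = {"go": 365, "php": 4 * 365}


def is_eol(language: str, released: date, today: date) -> bool:
    """Return True if a version released on `released` is past its support window."""
    window = timedelta(days=SUPPORT_WINDOW_DAYS[language])
    return today > released + window
```

With the same release date, a Go version crosses the EOL line years before a PHP version does, which is consistent with the higher EOL share reported for Go.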

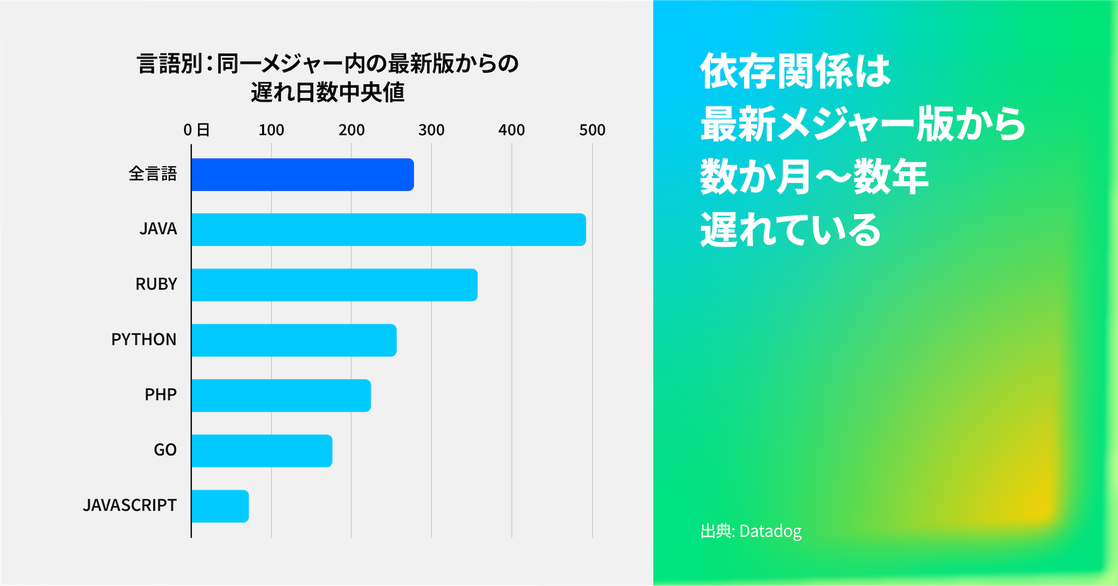

Keeping libraries up to date remains a major challenge for developers

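Modern applications rely on many third-party dependencies, each with its own update cycle and risk of breaking changes. In this study, we found that dependencies are a median of 278 days behind their latest major version, up from 215 days last year. By language, Java (492 days) and Ruby (357 days) both lag more than the overall median.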

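Dependencies in services deployed less than once a month tend to be about 70% more out of date than those in services deployed daily. At the median, they are 295 days behind the latest version, compared with 172 days for services deployed daily.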

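This shows that deployment frequency is more than a productivity metric: it also functions as a de facto security control. When we evaluate library upgrade status using DevOps Research and Assessment (DORA) metrics, organizations with higher development velocity and stability also show a better overall security posture than services with low DORA metrics.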
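The lag figures above can be reproduced in outline with a few lines of Python. This is a sketch under an assumption: lag is measured here as the number of days between the release date of the installed version and the release date of the latest version, which may differ from the report's exact methodology. The function names are illustrative.

```python
from datetime import date
from statistics import median


def median_lag_days(pairs):
    """Median lag in days.

    `pairs` is an iterable of (installed_release_date, latest_release_date).
    Assumption: lag is the gap between those two release dates.
    """
    return median((latest - installed).days for installed, latest in pairs)


def relative_increase(slow: float, fast: float) -> float:
    """How much larger `slow` is than `fast`, as a fraction of `fast`."""
    return (slow - fast) / fast
```

For the medians quoted above, `relative_increase(295, 172)` is roughly 0.72, in line with the "about 70%" figure.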

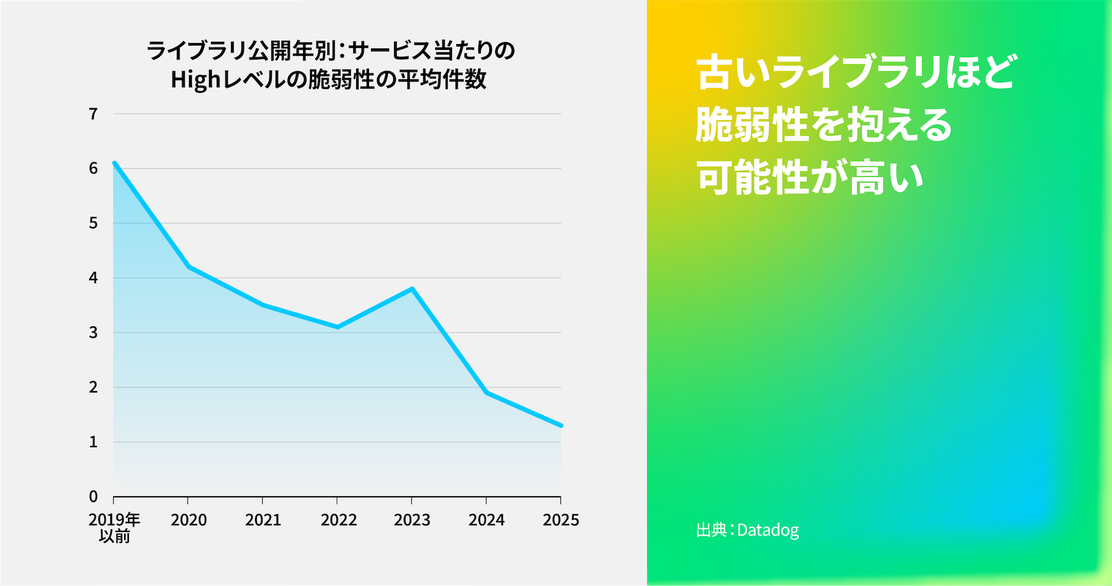

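As noted in Fact 1, keeping services and dependencies up to date lowers the likelihood of carrying vulnerabilities. This year, we analyzed the relationship between when a dependency was released and how many vulnerabilities it has. We found that newer libraries tend to have fewer discovered vulnerabilities.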

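At the service level, libraries released in 2025 have an average of 1.3 vulnerabilities, compared with 1.9 for 2024 and 3.8 for 2023. The spike for 2023 is mainly due to six CVEs in Spring Framework and Spring Boot that affected Java services. The tendency for Java to show more vulnerabilities is also consistent with the relatively old state of Java dependencies noted earlier.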

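Over the past year, the share of services using libraries that are not actively maintained and contain known vulnerabilities did not change significantly. This suggests that, given the pace of feature development and a growing backlog of vulnerability work, many companies still cannot prioritize these issues. Because security vulnerabilities, deprecations, and supply chain problems emerge quickly and pile up, it is not easy to tell which updates need urgent attention.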

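Library vulnerabilities are a concern because new ones can be discovered at any time, in any version. Updating libraries on a regular schedule is therefore worthwhile, and it also limits the risk of breaking changes and makes future updates smoother. Regression testing is essential, especially for major version upgrades. Increasing your application's test coverage first gives you more confidence that nothing has broken and makes it easier to pinpoint where a problem occurred.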

Updating within a day of release carries its own risks

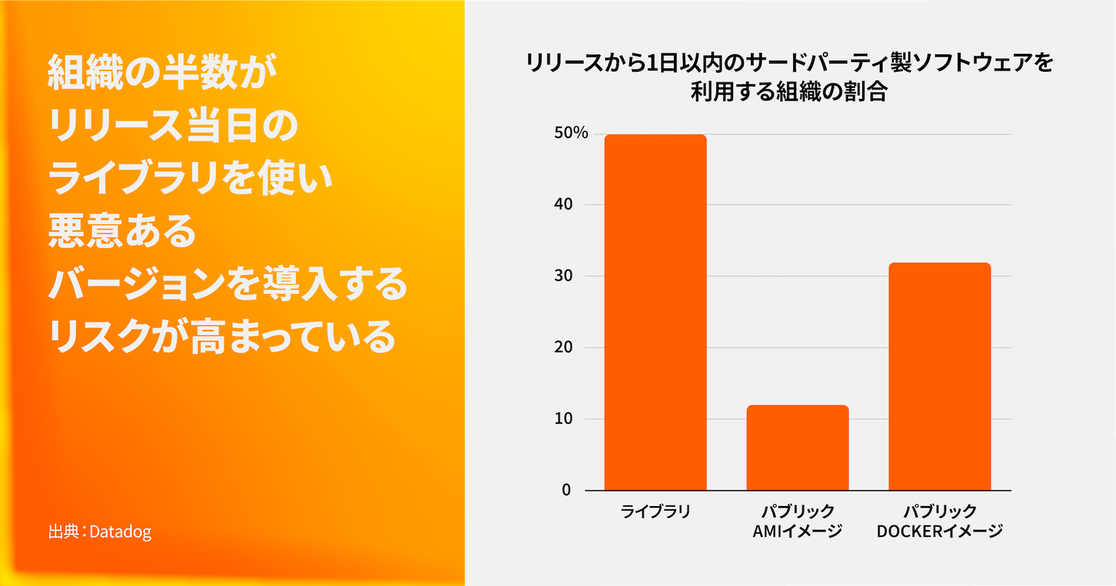

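Automatically updating software to new releases may seem like an easy way to keep libraries current and avoid the risks described in Fact 1 and Fact 2. Given the risk of supply chain compromise, however, updating to a new version within a day of its release can hurt the overall security of an application, because you may unknowingly install malicious software.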

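To quantify this risk, we looked at how quickly organizations adopt new releases and found that 50% of organizations use third-party libraries within a day of release. In addition, 12% of organizations use public AMIs, and 32% use public Docker images, less than a day after release.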

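Although fewer organizations adopt AMIs and Docker images immediately after release than adopt libraries, these images have broad access to the entire system, so a compromised image can have a larger impact than a compromised library.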

Libraries

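Over the past year, several large-scale supply chain attacks spread widely. Two in particular, s1ngularity and Shai-Hulud, spread in part because organizations adopted new library versions immediately after they were published.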

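To protect dependencies from malware, we recommend pinning dependency versions to a full commit SHA. If that is not practical, Yarn and pnpm, alternative package managers for JavaScript, provide a minimum release age setting that makes a new version wait for a set period after publication before it can be used. For the same purpose, GitHub's Dependabot lets you set a "cooldown" period for updates.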

This kind of buffer lets you keep packages up to date while leaving time before installation, which reduces the risk of pulling in malicious software. A cooldown of about a week, for example, can keep out many malicious dependencies.
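The cooldown idea can be sketched independently of any particular package manager. The example below is illustrative only: the `newest_allowed` function and the `(version, published_at)` tuple shape are assumptions made for this sketch, not the behavior or API of Yarn, pnpm, or Dependabot.

```python
from datetime import datetime, timedelta, timezone


def newest_allowed(releases, now, cooldown=timedelta(days=7)):
    """Pick the most recently published version that has aged past the cooldown.

    `releases` is a list of (version, published_at) tuples (hypothetical shape).
    Returns None when no release is old enough.
    """
    eligible = [
        (published_at, version)
        for version, published_at in releases
        if now - published_at >= cooldown
    ]
    if not eligible:
        return None
    # max() compares by publication time first, so the newest eligible release wins.
    return max(eligible)[1]
```

A release published yesterday is skipped in favor of the newest release that is at least a week old, so updates still flow, just with a delay that gives the community time to flag a compromised version.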

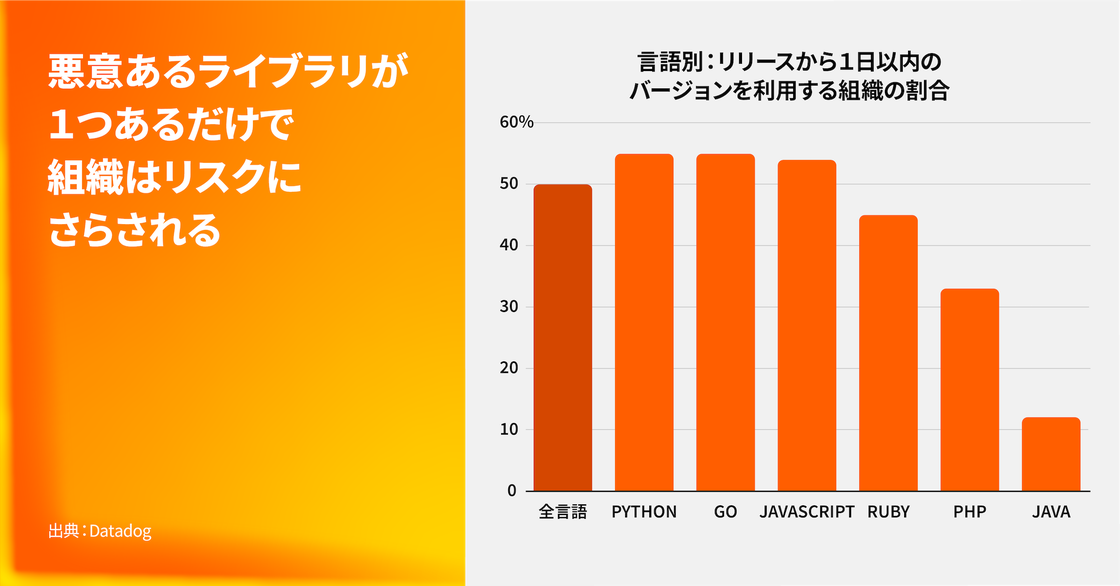

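Looking more closely, 54% of organizations using JavaScript and **55%** of organizations using Python use new library versions less than a day after release. We also found that **1.6%** of organizations using npm used at least one malicious dependency in the past year.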

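Given the scale of npm usage, that is not a small number: it suggests that tens of thousands of organizations may have run malicious code. In other words, without appropriate security measures, supply chain attacks are a real operational risk.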

Public AMIs

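In early 2025, Datadog published research on a cloud image name confusion attack affecting AWS Amazon Machine Images (AMIs). The research showed that simply retrieving the most recently created AMI without specifying its owner can lead you to use a malicious AMI without realizing it. Further investigation found that 12% of organizations use at least one AMI that is less than a day old.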

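In response to this research, AWS released a new feature called Allowed AMIs. It is an effective defense against this kind of attack, especially if you use community AMIs. To check whether unverified community AMIs are in use in your organization, you can generate a report with the open source tool whoAMI-scanner. Investigator.cloud is also a useful resource for investigating public AMIs and their lineage.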
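The name confusion issue described above comes down to choosing "the newest matching image" without checking who published it. The sketch below illustrates the safer selection logic on plain dictionaries. The field names (`ImageId`, `Name`, `OwnerId`, `CreationDate`) mirror the EC2 image description format, while `latest_ami`, the account IDs, and the image names are hypothetical. In a real lookup you would also pass the owner filter to the API call itself rather than filtering afterwards.

```python
def latest_ami(images, name_prefix, allowed_owners):
    """Return the newest image matching `name_prefix` from an allowed owner.

    `images` is a list of dicts with "Name", "OwnerId", and "CreationDate" keys.
    Assumption: CreationDate values are ISO 8601 strings in one consistent
    format, so comparing them as strings orders them chronologically.
    """
    candidates = [
        image
        for image in images
        if image["Name"].startswith(name_prefix)
        and image["OwnerId"] in allowed_owners
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda image: image["CreationDate"])
```

Without the `allowed_owners` check, a lookalike image published more recently by an unknown account would win the "most recent" comparison.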

Docker images

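Libraries and AMIs are not the only software used right after release. In fact, 32% of organizations use public Docker images within a day of their creation, which is also a security risk. Docker containers are not a new attack target, but attack research on libraries and AMIs has drawn more attention in recent years, so the long-standing problem of malicious container images has become comparatively less visible.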

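Whenever possible, pull container images from a trusted first-party source. For example, relying on Docker Hub's Trusted Content tags (Docker Official Images, Verified Publisher, Sponsored OSS, and so on) reduces the risk of using a malicious image. If a trusted first-party image is not available, consider a cooldown period that gives researchers time to vet the image, or perform your own analysis. No method is completely safe, but these measures reduce the risk of using public images.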

GitHub Actions remain exposed to supply chain attacks

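Over the past year, large-scale supply chain attacks that abuse GitHub Actions in various ways have increased. In these attacks, GitHub Actions are used as a means of initial access to repositories or for data exfiltration, and both custom actions and actions published on the Marketplace are affected. For example, the changed-files action provided by tj-actions was compromised, and the malicious payload the attacker inserted caused affected public repositories to write secrets to their workflow logs.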

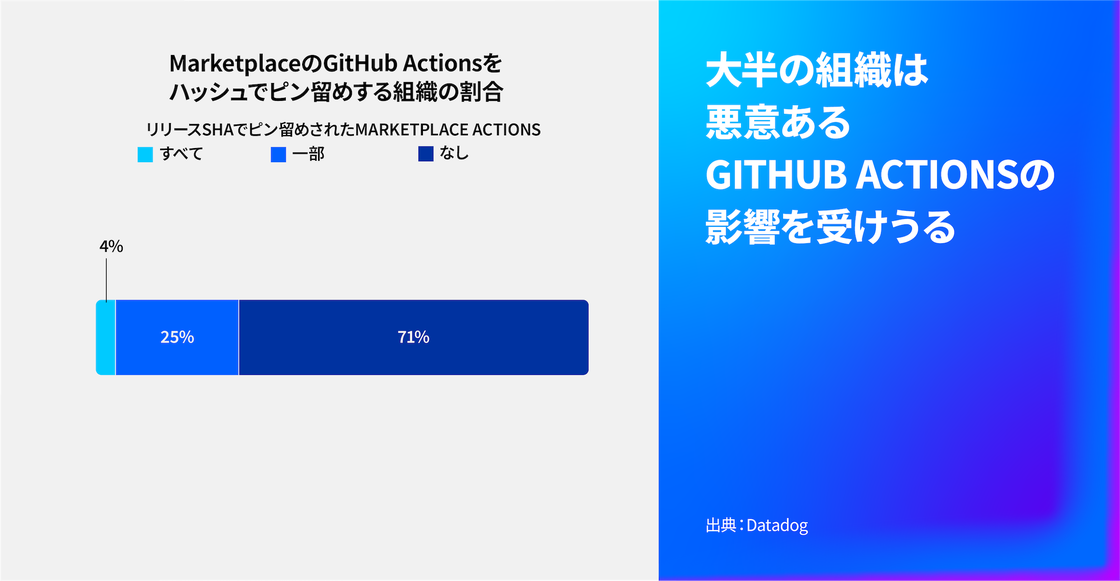

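In this report, a "Marketplace action" is a public action published on the GitHub Marketplace. The vulnerability landscape for GitHub Actions has not changed much in itself, but we found that while every organization using GitHub Actions uses at least one Marketplace action, only **4%** pin all of their Marketplace actions to a hash. Moreover, 71% of organizations do not pin any of the actions they use to a hash. This is a significant risk because, as noted in Fact 3, pinning an action to a full commit SHA is, according to GitHub, the only way to prevent automatic updates.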

Digging deeper, 80% of organizations use at least one Marketplace action that is not maintained by GitHub without pinning it to a commit hash. Possible reasons include a lack of awareness of the recommended practice and the extra effort of updating YAML files.

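In addition, **2%** of organizations using GitHub Actions use at least one action that has previously been compromised, again without pinning it to a commit hash. Because compromises of actions are on the rise, this share is likely to keep growing year over year unless commit hash pinning is widely adopted.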
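Checking whether a workflow pins its actions is straightforward to automate. The following is a minimal sketch, not a complete linter: it scans workflow text line by line for `uses:` references and reports those whose ref is not a full 40-character commit SHA. The function name `unpinned_actions` and the action names in the usage note are hypothetical, and the line-based regex is a simplification of real YAML parsing.

```python
import re

# Matches a `uses:` entry, with or without a leading list dash, and captures
# the reference up to the first whitespace, quote, or comment marker.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^\s'\"#]+)")
# A reference counts as pinned only if its ref is a full 40-character hex SHA.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references that are not pinned to a full commit SHA."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if not match:
            continue
        reference = match.group(1)
        # Local actions and Docker images are out of scope for this sketch.
        if reference.startswith("./") or reference.startswith("docker://"):
            continue
        _, _, ref = reference.partition("@")
        if not FULL_SHA_RE.match(ref):
            unpinned.append(reference)
    return unpinned
```

A reference such as `example-org/example-action@v4` would be reported, while the same action followed by `@` and a 40-character commit SHA (optionally with a trailing `# v4` comment for readability) would pass.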

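“GitHub Actions workflows routinely handle production credentials, produce release builds, and run third-party code in highly privileged contexts. Yet many organizations do not protect them with the same rigor as production infrastructure. The major GitHub Actions compromises of 2025 made it clear that attackers are systematically targeting this gap. Organizations need a principled approach to CI/CD security: understand the blast radius of every workflow, monitor runtime behavior, and treat build infrastructure as a high-value asset. That foundation is what allowed attacks such as tj-actions, Shai-Hulud, and s1ngularity to be detected early. In 2026, the industry as a whole needs to adopt this mindset broadly.”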

Co-founder and CTO, StepSecurity

Most vulnerabilities do not require immediate human attention

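Critical vulnerabilities should be fixed quickly, but only when they are truly critical. Severity is usually rated by the CVSS base score, but factoring in runtime context and CVE context lets you adjust that rating to reflect actual importance.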

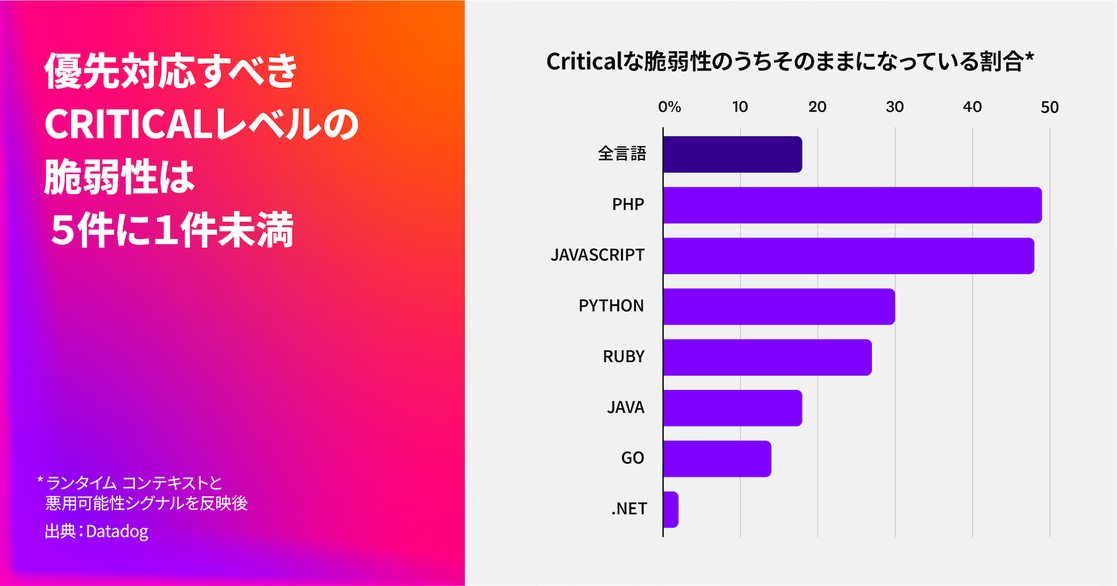

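In our research, only **18%** of dependency vulnerabilities classified as critical remained critical after their severity was adjusted, the same result as last year. In other words, the number of vulnerabilities that are truly critical drops by more than 80%, which significantly reduces the number of alerts.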

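This year we went a step further and analyzed how this trend differs by language. We found that 98% of critical vulnerabilities in .NET dependencies are downgraded from critical to a lower severity. Further investigation showed that the average Exploit Prediction Scoring System (EPSS) score for .NET vulnerabilities tends to be considerably lower than for other languages. One likely reason is that built-in security features such as Code Access Security keep the likelihood of real-world exploitation low. At the other end of the range is PHP, where 49% of dependency vulnerabilities remain critical. This is likely because public exploits exist for many PHP dependency vulnerabilities, making them more likely to be exploited in practice.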

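Datadog's severity score adjusts the initial CVSS score based on four factors. Two come from runtime context: whether the vulnerability is present in production and whether the affected service is currently under attack. The other two come from CVE context: whether an exploit is available and how likely the vulnerability is to be exploited.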
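To show how four such signals could combine, here is a purely illustrative sketch. It is not Datadog's scoring formula, which the text does not specify: the one-level adjustments, the `epss_threshold` default, and all names (`Context`, `adjusted_severity`) are assumptions invented for this example.

```python
from dataclasses import dataclass

LEVELS = ["low", "medium", "high", "critical"]


@dataclass
class Context:
    in_production: bool      # runtime context: is the vulnerability in production?
    under_attack: bool       # runtime context: is the service currently under attack?
    exploit_available: bool  # CVE context: does an exploit exist?
    epss: float              # CVE context: exploitation likelihood, 0.0 to 1.0


def adjusted_severity(base: str, ctx: Context, epss_threshold: float = 0.01) -> str:
    """Hypothetical rule: shift the base severity by one level per signal."""
    level = LEVELS.index(base)
    if not ctx.in_production:
        level -= 1  # not reachable in production: lower priority
    if not ctx.exploit_available and ctx.epss < epss_threshold:
        level -= 1  # no exploit and low likelihood: lower priority
    if ctx.under_attack:
        level += 1  # active attack on the service: raise priority
    # Clamp to the valid range of levels.
    return LEVELS[max(0, min(level, len(LEVELS) - 1))]
```

Under this made-up rule, a critical finding in a non-production service with no exploit and a very low EPSS score drops to medium, while the same finding in production with a public exploit stays critical.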

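Over-prioritizing every critical vulnerability puts a heavy burden on response teams and can cause alert fatigue. As a result, vulnerabilities that pose real business risk may be pushed back. With proper prioritization, teams can work on what matters most first, which saves developer time and ultimately reduces costs.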

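We also found that, among applications with at least one SCA vulnerability, the average number of High or Critical vulnerabilities fell from 13.5 in 2025 to 8 this year. Over the coming years, this downward trend will be an important indicator of whether security prioritization is improving.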