Elijah Andrews

Memcached is an open source, high-performance, distributed memory object caching system. It acts as an in-memory key-value store for arbitrary data, and is commonly used to speed up dynamic web applications. Since Memcached stores data in memory, writing to and reading from the store is extremely fast compared to disk-based operations. However, the data is volatile, meaning that Memcached should generally not be used as a permanent data store. Instead, as the name suggests, it is better suited for use as a cache.

This post is split into two parts. In part one, I’m going to show you a typical web application request that can be optimized using Memcached, and how to collect key metrics from Memcached using the Datadog Agent. Next week, in part two, we’ll dive a bit deeper into instrumenting your cache using DogStatsD.

Optimize web applications with Memcached monitoring

A common way to use Memcached in web applications is to cache the result of an expensive query made to a relational database. Subsequent requests that use the same query can read from the cache instead of the database, providing a significant boost in response time.

Here is some Python code for a simple request that can benefit from Memcached:

    def get_user(user_id):
        return query_database_for_user(user_id)

Here, query_database_for_user is our slow database query.

The Memcached protocol specifies the ability to set a value at a specific key, and then later get the cached value. When using Memcached as a cache, a time-to-live is usually set, which specifies the amount of time that the cache should store the data. By purging the value after a certain amount of time, our data is periodically refreshed from the database to reflect any changes that may have occurred since the value was last cached. The time-to-live also ensures that our cache only contains data that has been accessed recently (and hopefully will be accessed again soon).
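To make the set, get, and time-to-live behavior concrete, here is a minimal in-process sketch. It is not Memcached and does not speak its protocol; `TTLStore` and its injectable `clock` argument are hypothetical names used only for illustration.

```python
import time


class TTLStore:
    """Toy in-process stand-in for a cache with a per-key time-to-live.

    Hypothetical illustration only: it mimics the set/get/expire behavior
    described above, not the real Memcached server or protocol.
    """

    def __init__(self, clock=time.monotonic):
        self._clock = clock   # injectable so expiry can be shown without sleeping
        self._items = {}      # key -> (value, expiry timestamp or None)

    def set(self, key, value, ttl=0):
        # In this sketch, ttl=0 means "never expire".
        expires_at = self._clock() + ttl if ttl else None
        self._items[key] = (value, expires_at)

    def get(self, key):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at is not None and self._clock() >= expires_at:
            # Lazily purge on read once the time-to-live has passed.
            del self._items[key]
            return None
        return value


if __name__ == "__main__":
    now = [0.0]                        # fake clock we can advance by hand
    store = TTLStore(clock=lambda: now[0])
    store.set("user:42", {"name": "Ada"}, ttl=300)
    print(store.get("user:42"))        # still cached
    now[0] = 301.0
    print(store.get("user:42"))        # time-to-live has passed
```

Once the time-to-live has passed, the next read behaves like a miss, which is what forces the value to be refreshed from the database.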

We can start using Memcached in our get_user request by setting up a Memcached server, grabbing a client, then adding a few lines to our code:

    import memcache

    # Connect to a Memcached server listening on the default port.
    mc = memcache.Client(['127.0.0.1:11211'])

    def get_user(user_id):
        user = mc.get('user:%s' % user_id)
        if user is None:
            # Cache miss: run the slow query and cache the result for 300 seconds.
            user = query_database_for_user(user_id)
            mc.set('user:%s' % user_id, user, time=300)
        return user

When the request is first executed, no value will be present at key 'user:%s' % user_id. When this happens, mc.get returns None. Therefore, we run query_database_for_user as normal, and set the result in the cache, with a time-to-live of 300 seconds. The user is then returned.

If the request is then executed with the same user_id before the 300 seconds have passed, mc.get will return the cached result. Therefore, we can skip the costly database query, and simply return the user.
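The miss-then-hit flow described above can be exercised without a running server. The sketch below swaps the real client for a dictionary-backed stand-in, so `FakeClient`, `DB_CALLS`, and the body of `query_database_for_user` are assumptions made up for the demonstration; only the `get` and `set(..., time=...)` call shapes mirror the client used in this post.

```python
class FakeClient:
    """Dictionary-backed stand-in exposing the get/set calls used in this post.

    Hypothetical: it ignores the time-to-live, so entries never expire.
    """

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value, time=0):
        self._data[key] = value
        return True


DB_CALLS = []  # records every simulated database query


def query_database_for_user(user_id):
    # Placeholder for the slow query; a real one would talk to the database.
    DB_CALLS.append(user_id)
    return {"id": user_id, "name": "user-%s" % user_id}


def get_user(user_id, mc):
    key = "user:%s" % user_id
    user = mc.get(key)
    if user is None:                     # cache miss
        user = query_database_for_user(user_id)
        mc.set(key, user, time=300)      # cache for 300 seconds
    return user


if __name__ == "__main__":
    mc = FakeClient()
    get_user(7, mc)   # miss: queries the database
    get_user(7, mc)   # hit: served from the cache
    print(len(DB_CALLS))
```

Passing the client in as an argument is a choice made for this sketch so that a stand-in can be substituted; the version in the post uses a module-level client instead.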

Instrument Memcached with Datadog

Now that we’ve got a Memcached server running and optimizing our previously slow get_user request, we probably want to set up Memcached monitoring to ensure it’s functioning correctly. Does it have enough memory? How many gets are happening per second? How many of those are misses?

We can answer these questions, and many more, by enabling Datadog’s Memcached integration.

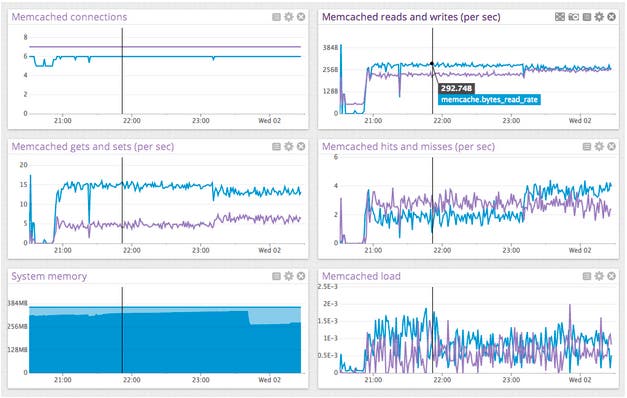

After you’ve installed the Memcached integration (don’t forget to run the Datadog Agent), head to the Memcached integration dashboard. Here, you’ll be able to monitor metrics indicative of the general health of your Memcached server.

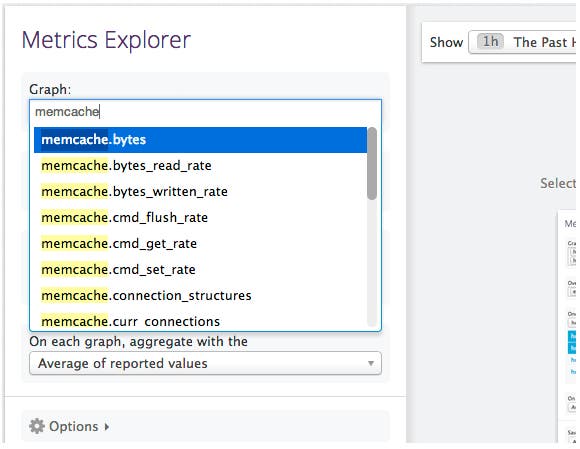

You can access even more Memcached metrics by heading to the Metrics Explorer and searching for metrics in the memcache namespace.

Top Memcached metrics to monitor

Now that we’ve got the Memcached integration running, here are some of the most important Memcached metrics to monitor:

System memory

Make sure that you’re not running out of physical memory on your Memcached machines. If memory pages used by Memcached start being swapped to disk, requests will be slowed significantly, and you may not benefit from using Memcached at all. Memcached will cap memory usage at 64 MB by default, but you’ll likely want to change this setting by using the -m option when starting the server. It’s important to note that Memcached will not immediately claim the amount of memory that you’ve configured; it will instead allocate and reserve physical memory as more items are stored, using the value of the flag as the upper bound for memory usage. Make sure that you have enough memory for Memcached at its maximum size.

Fill percent and evictions

It’s a good idea to keep track of the amount of free space in your cache. When the cache is full and a new item is stored, Memcached evicts an older, unused item to make room for it. If your cache is constantly full, you may want to increase the size of your cache, reduce the time-to-live for some records, or store less data. Similarly, if your cache is barely used, you could probably afford to increase your time-to-live values, or reduce the size of your cache.

At first glance, it appears that memcache.fill_percent tells us everything we need to know about the amount of free space in the cache. However, due to the way that Memcached assigns memory pages into slabs, it is possible for cache evictions to take place even when the cache is not 100% full. This is why it’s also important to keep an eye on the memcache.evictions_rate metric, which is also indicative of the amount of free space in the cache; if the frequency of evictions suddenly increases while fill percent is below 100%, a single slab class may be full.
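As a rough illustration of checking both signals together, the sketch below derives a fill percentage from the `bytes` and `limit_maxbytes` counters that Memcached reports in its `stats` output, and flags evictions that occur below 100%. That this formula matches how memcache.fill_percent is computed is an assumption, and the function names and sample numbers are invented for the example.

```python
def fill_percent(stats):
    """Return used bytes as a percentage of the configured memory limit.

    `stats` maps Memcached stat names to values; values may be strings,
    as they are when read from the text protocol.
    """
    used = float(stats["bytes"])
    limit = float(stats["limit_maxbytes"])
    if limit <= 0:
        return 0.0
    return 100.0 * used / limit


def evictions_below_full(stats, threshold=100.0):
    """True when items have been evicted although the cache is not full."""
    return int(stats["evictions"]) > 0 and fill_percent(stats) < threshold


# Sample values are made up for illustration (the limit is 64 MB).
sample = {"bytes": "50331648", "limit_maxbytes": "67108864", "evictions": "12"}

if __name__ == "__main__":
    print(fill_percent(sample), evictions_below_full(sample))
```

A true result from `evictions_below_full` is only a hint that one slab class may be full; confirming it would require looking at per-slab statistics.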

Hits and misses

The memcache.get_hits_rate and memcache.get_misses_rate metrics are useful for checking the general health and usage of your cache. They allow you to see how frequently the cache is being accessed, and the number of requests that result in cache hits and misses. In general, you want to have significantly more cache hits than misses in order for your cache to be effective. Keep an eye on the two metrics, and the ratio between the number of hits and misses; if any of the three starts to fall, something is likely wrong.
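Memcached reports `get_hits` and `get_misses` as cumulative counters, so per-second rates come from the difference between two samples. The following is a minimal sketch of that arithmetic; the function names and sample numbers are hypothetical, and it is not the Agent's implementation.

```python
def rates(previous, current, interval_seconds):
    """Per-second hit and miss rates between two cumulative counter samples."""
    hits = (int(current["get_hits"]) - int(previous["get_hits"])) / interval_seconds
    misses = (int(current["get_misses"]) - int(previous["get_misses"])) / interval_seconds
    return hits, misses


def hit_ratio(hits_rate, misses_rate):
    """Fraction of gets served from the cache, or None when there were no gets."""
    total = hits_rate + misses_rate
    if total == 0:
        return None
    return hits_rate / total


# Made-up samples taken 10 seconds apart.
before = {"get_hits": 1000, "get_misses": 200}
after = {"get_hits": 1900, "get_misses": 300}
hits_rate, misses_rate = rates(before, after, 10)

if __name__ == "__main__":
    print(hits_rate, misses_rate, hit_ratio(hits_rate, misses_rate))
```

Note that this sketch does not handle a server restart, after which the counters start again from zero and the computed difference would be negative.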

In conclusion, we’ve used Memcached to speed up a slow request and installed the Datadog Memcached integration to collect general metrics about our Memcached server. We’ve also covered the top three sets of Memcached metrics to monitor. Next week, we’ll dive deeper into the performance of our cache by instrumenting application-specific metrics with DogStatsD.

To speed up your web applications and monitor the key Memcached metrics discussed in this post, you can try Datadog for free for 14 days.

Happy monitoring!