David M. Lentz

This post is based on Mia Henderson’s talk titled “Deploying 30x a day safely at PagerDuty.” The video and transcript are available here.

At Datadog Summit San Francisco in 2017, Mia Henderson, site reliability engineer at PagerDuty, gave a talk describing how her team integrated automated metric checks into their deployment process.

Providing robust incident management

To maintain healthy and reliable infrastructure and applications, DevOps teams need to be able to detect and resolve any customer-facing issues as quickly as possible, ideally before they affect the user experience. PagerDuty enables teams to automatically notify the right people so that they can quickly respond to events and incidents that may affect their services. In order to provide a reliable, high-quality service themselves, the PagerDuty team has embraced canary releases, continuous deployment, and monitoring with Datadog.

Phase one: PagerDuty’s manual canary release

To limit the risk of introducing bugs and performance issues into production, PagerDuty incorporated a canary phase into their build pipeline. Named for the caged canary that alerted coal miners to unsafe air in the mine, a canary release helps an engineering team determine whether it’s safe to proceed with a deployment. In a canary release, the team deploys changes to a subset of its production environment, then watches for signs of trouble. If the team detects any issues with the canary release, they can easily revert the deployment before it affects users. If the canary release is successful, they can safely deploy those changes across the rest of the production environment.
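The general canary flow described above can be sketched in a few lines of Python. This is a generic illustration, not PagerDuty's pipeline: the function name `canary_release` and the `deploy`, `revert`, and `is_healthy` callables are hypothetical stand-ins for whatever tooling a team already has.

```python
from typing import Callable, Sequence


def canary_release(
    hosts: Sequence[str],
    canary_count: int,
    deploy: Callable[[Sequence[str]], None],
    revert: Callable[[Sequence[str]], None],
    is_healthy: Callable[[Sequence[str]], bool],
) -> bool:
    """Deploy to a small subset first; promote only if the subset stays healthy.

    All callables are hypothetical hooks supplied by the caller.
    Returns True if the change reached every host, False if it was reverted.
    """
    canaries = list(hosts[:canary_count])
    rest = list(hosts[canary_count:])

    # Step 1: expose the change to a small slice of production.
    deploy(canaries)

    # Step 2: watch for signs of trouble on the canary hosts.
    if not is_healthy(canaries):
        # Step 3a: revert before the change can reach the remaining hosts.
        revert(canaries)
        return False

    # Step 3b: the canary looks fine, so roll out to the remaining hosts.
    deploy(rest)
    return True
```

The key property is that the remaining hosts are only touched after the health check passes, so a bad change is contained to the canary subset.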

Phase two: PagerDuty adopts continuous deployment

Initially, each developer on the PagerDuty team would manually execute a deployment and then watch their Datadog dashboards and alerts to determine whether the canary was successful. But that was problematic, Mia explains, because “manual deploys slow down deployment and they make people very sad, because they don’t like having to wait to get their code out … waiting to deploy and babysitting the deploys themselves.”

The team adopted the practice of continuous deployment, automating the process of deploying and monitoring the canary code. If the canary phase triggered an alert, the team would halt the release. Otherwise, the changes were automatically released to production. According to Mia, “Continuous deployment has been great. Our developers really love it … they just merge and they go on with their day.” It’s good for PagerDuty users, too, Mia explains: “Fixes and features get to our customers more quickly.” But on a bad day, so do bugs.

A pivotal incident

Continuous deployment enabled the team to release more frequently, which meant that developers didn’t know when their queued changes would be released to the canary servers. The process intentionally decoupled the developers from the activity of deploying and evaluating the canary release.

“The developers were no longer watching the as-you-deploy dashboards, because they didn’t actually know when their deploys are going out,” Mia says. “It could go out right when they merge, or it could go out an hour and a half or two hours later.”

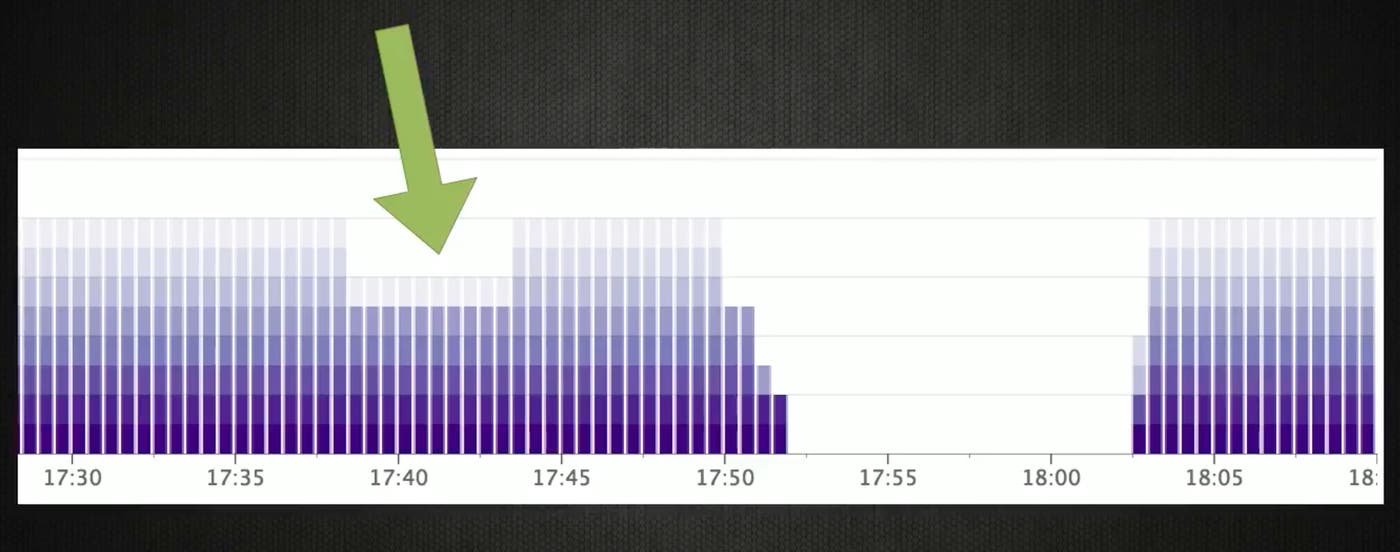

As a result, a pivotal incident occurred: “A developer at PagerDuty merged a change to the web repo that caused an issue with processing background tasks,” she says. This triggered an alert, but it wasn’t caught in time to halt the canary release. “So the developer was paged due to the canary causing issues, but by the time they got around to looking at the alert … the canary had been rolled back so the alert had actually auto-resolved.” The rollback was the team’s standard step between deploying the canary and moving to a full-scale production release, to ensure consistency across the environment.

In other words, the team had missed its window to correct the problem that was detected during the canary deployment. “So the deploy progressed past canary, went out to production,” Mia recalls. “So we lost all of our background task processing for about 10 minutes.”

Phase three: Automated canary checks

This incident led the team to automate the process of evaluating their canary releases. They started by creating a script to check the one key metric that had indicated the issue during this outage. “One thing that SRE had been talking about for quite a while, at least in concept, was doing canary checks for web,” Mia recalls. “So now we had actually a concrete case of why this was needed, and a concrete example of the metrics that we should be checking.”

They used Datadog’s Ruby client library to write a script that automatically checks the canary’s key metrics. “It was very easy to do,” Mia says. The first script in their revised process was “a simple script in Ruby that goes out to Datadog, checks the metric that indicated the issue during this outage, and makes sure that it’s not in a failure mode.” If the canary check fails, the deployment process is halted and the engineer who committed the code is alerted.
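A check of this kind might look roughly like the following. PagerDuty's script was written in Ruby and is not shown in the talk, so this is only a minimal Python sketch under stated assumptions: the metric query, the threshold, and the `fetch` callable are all hypothetical, and the response is assumed to be a dictionary with a `series` list whose entries carry a `pointlist` of `[timestamp, value]` pairs.

```python
from typing import Callable, Optional

# Hypothetical query and threshold; the talk does not name the real ones.
QUERY = "avg:background_tasks.failed{role:canary}"
MAX_ALLOWED = 5.0


def worst_value(response: dict) -> Optional[float]:
    """Return the highest non-null point across all series, or None if no data."""
    values = [
        point[1]
        for series in response.get("series", [])
        for point in series.get("pointlist", [])
        if point[1] is not None
    ]
    return max(values) if values else None


def canary_metric_ok(
    fetch: Callable[[str, int, int], dict],
    query: str,
    start: int,
    end: int,
    max_allowed: float,
) -> bool:
    """Return True only if every point in the window is at or below the threshold.

    `fetch` is a hypothetical hook that runs the metric query for the
    given time window and returns the parsed response.
    """
    worst = worst_value(fetch(query, start, end))
    # Assumption: missing data counts as a failure, since the absence of a
    # signal is not evidence that the canary is healthy.
    return worst is not None and worst <= max_allowed
```

In practice, `fetch` could be a thin wrapper around a Datadog client library's metric query call. Checking the worst value over the whole canary window, rather than only the most recent point, means a problem that has already cleared still fails the check.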

Ultimately, PagerDuty scripted checks of more metrics, and made automated canary checks part of the deployment process. “We integrated that into our build and deploy pipeline,” Mia says. “Once the canary has been out for about five minutes, we check the metrics.”
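One way to wire several checks into a pipeline step is sketched below. The names `run_canary_gate` and `gate_exit_code` and the shape of the `checks` mapping are assumptions made for illustration. Only the roughly five-minute wait comes from the talk.

```python
import time
from typing import Callable, Dict, List

SOAK_SECONDS = 5 * 60  # "about five minutes", per the talk


def run_canary_gate(
    checks: Dict[str, Callable[[], bool]],
    soak_seconds: float = SOAK_SECONDS,
    sleep: Callable[[float], None] = time.sleep,
) -> List[str]:
    """Let the canary run for a while, then return the names of failed checks.

    `checks` maps a hypothetical check name to a zero-argument callable
    that returns True when its metric looks healthy.
    """
    # Give the canary time to take traffic and emit metrics.
    sleep(soak_seconds)
    # Run every check so the report lists all failures, not just the first.
    return [name for name, check in checks.items() if not check()]


def gate_exit_code(failed: List[str]) -> int:
    """Map the result to a process exit code: nonzero halts the pipeline."""
    return 1 if failed else 0
```

A pipeline step could call `run_canary_gate`, print the failed check names for the engineer who committed the change, and exit with `gate_exit_code(failed)` so that most CI systems stop the deployment on a nonzero status. Passing `sleep` as a parameter keeps the gate easy to test without waiting.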

Advice from experience: Integrate canary checks

Recounting her team’s experience, Mia encourages others to adopt the same discipline. “Write a check script,” she advises. “Datadog has great libraries—Python and Ruby, a lot of languages to use—so this is actually one of the easiest things to do. Integrate the check into your continuous deployment.”

Her team estimated that the risk of adding canary checks to their deployment process was low. A false positive would incorrectly fail a canary, but that could be resolved with manual intervention and an update to the check script. “So, the easiest way to get this done is to just do it. … Make sure you just go out and just do continuous deploys, and use your existing Datadog metrics to make sure that the services are working when you canary them.”

Improve your deployment process with Datadog

With Datadog’s official and community-supported API libraries, you can write automated metric checks and more. If you’re not already using Datadog, you can sign up for a 14-day free trial to start monitoring key metrics in your deployment process.