Occasionally, clients who already use a cloud-based service that provides metrics, like Amazon Web Services (AWS) or HP Public Cloud, ask us why they should install our Agent. “Why do I need an agent on my server? Aren’t the metrics already being provided by the cloud service? Now I have another process I need to deploy and manage?”

These cloud-based hosting services collect metrics through their virtualization hypervisor and publish them to an API or dashboard system, such as CloudWatch in Amazon’s case, so the operator can inspect patterns, see some history, and make good decisions. So why should you add an agent to your server? You’re already getting all the metrics, right?

Well, not really.

As touched on in our article, “The Top 5 Ways to Improve Your AWS EC2 Performance”, the metrics produced through the hypervisor-based monitoring are only part of the whole picture.

The limitations of public cloud metric APIs

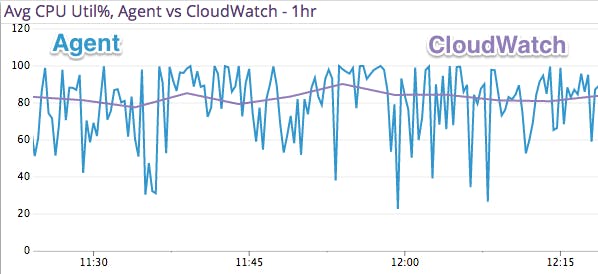

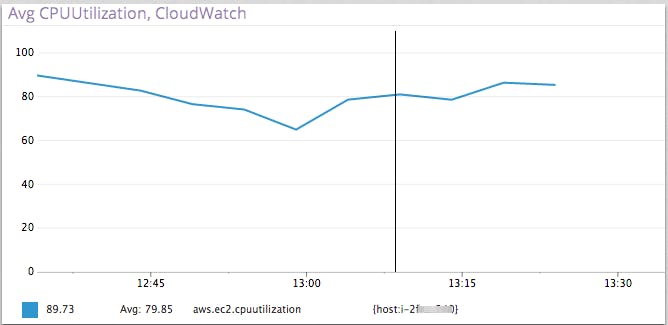

To begin with, the information provided is usually incomplete, and it is produced at intervals too coarse for debugging performance issues.

The metrics from a public cloud instance are published to the provider’s API at some interval, after which they must be retrieved by Datadog, processed, and then passed along for display. Every step has to contend with the availability of the provider’s metrics API, rate limiting, and other potential hazards of the Internet.
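To make the cost of that pipeline concrete, here is a minimal sketch of how the delays add up. The function name, its parameters, and the numbers are all hypothetical and chosen only for illustration; they are not measured values from any provider.

```python
def worst_case_delay(publish_interval_s, poll_interval_s, processing_s, failed_polls=0):
    """Upper bound, in seconds, on a data point's age when it reaches a graph.

    Hypothetical model: a value measured just after one publish waits a full
    publish interval, then up to one poll interval before it is fetched.
    Each poll that fails (for example, because of rate limiting) adds one
    more poll interval. Processing time is added at the end.
    """
    return publish_interval_s + poll_interval_s * (1 + failed_polls) + processing_s


# Illustrative numbers only: 5-minute publishing, 1-minute polling,
# 10 seconds of processing.
print(worst_case_delay(300, 60, 10))                  # no failed polls
print(worst_case_delay(300, 60, 10, failed_polls=2))  # two rate-limited polls
```

Even in this simplified model, the publish interval dominates, and every failed poll pushes the data further behind.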

Agent-based monitoring means faster results and higher resolution

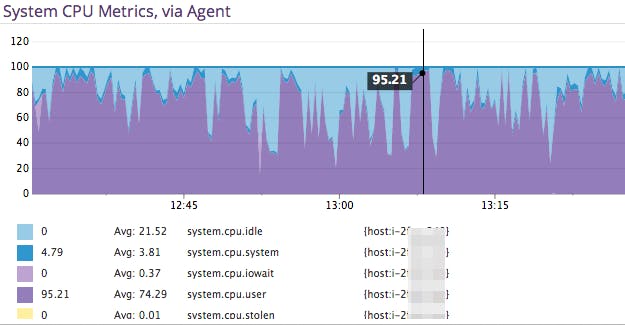

With Agent-based monitoring, you get the nitty-gritty details faster, because the Agent submits data directly instead of polling a service and relying on it to provide timely results. These details include metrics like memory consumption by process, and they typically arrive in a matter of seconds because they are not subject to the limits of the provider’s monitoring API.

Beyond the speed at which the details arrive, the resolution of the data is also higher, so you can find blips at sub-minute intervals. You can also tie in to a wide variety of server-based application integrations to get a more comprehensive picture of what’s going on.
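A small sketch shows why resolution matters. The sample values and the 15-second sampling interval below are assumptions made up for the example, and `downsample` is a hypothetical helper, not part of any Datadog or provider API. It simply averages fine-grained samples into coarser points, the way a longer reporting interval would.

```python
from statistics import mean


def downsample(samples, window):
    """Average consecutive groups of `window` samples into one point each."""
    return [mean(samples[i:i + window]) for i in range(0, len(samples), window)]


# Hypothetical CPU utilization (%), one sample every 15 seconds for 5 minutes.
# Twenty samples in total; two consecutive samples capture a 30-second spike.
cpu_15s = [10.0] * 20
cpu_15s[8] = 100.0
cpu_15s[9] = 100.0

one_minute = downsample(cpu_15s, 4)    # 4 x 15 s = 1 minute per point
five_minute = downsample(cpu_15s, 20)  # 20 x 15 s = 5 minutes per point

print("peak at 15 s resolution:", max(cpu_15s))       # 100.0
print("peak at 1 min resolution:", max(one_minute))   # 55.0
print("peak at 5 min resolution:", max(five_minute))  # 19.0
```

In this made-up series, a spike that pins the CPU for 30 seconds shows up as a modest 19% reading once it is averaged over five minutes, which is easy to overlook on a graph.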

Peek under the hood

Also, the cloud provider’s metrics only offer visibility from the “outside” into the operation of the virtualized server. It’s kind of like measuring a car’s performance by observing its external aspects, like the exhaust, how often it needs gas, and the time to go from 0–60. All of these are probably important, but they don’t provide the “under the hood” details.

So if all you care about are CPU cycles and Disk/Network I/O (and some others) produced at 5-minute intervals (or 1-minute intervals if you pay more), then by all means, don’t install anything on your servers. But if you want to see how the engine is working and what’s consuming the resources you’re already paying for, and if you want more detailed stats on what’s running, installing the Agent is how you get the full picture.

Open source for all to use

Of course, our Agent is fully open source, so you can read through every line of it before installing it on your server during a free trial of Datadog. We provide a few methods for deployment, and we maintain deployment support for popular configuration management tools like Puppet and Chef.

So go forth, and don’t fear the Agent.