With containerization now the norm in the modern cloud, your infrastructure is likely more distributed, and thus more exposed to networking issues, than ever before. When you troubleshoot application performance issues, this can make it difficult to link the symptoms you observe in the “golden signals” (requests, latency, and errors) of your application’s individual endpoints to their underlying root causes. In a highly distributed environment, network monitoring becomes a key piece of the puzzle in understanding how your networked services behave.

With Datadog, you can quickly pivot between network telemetry and application performance data to get a more comprehensive picture of your infrastructure’s health. Datadog APM lets you track distributed traces end to end across your entire stack, helping you identify and resolve application-level bugs and resource bottlenecks. Datadog Network Performance Monitoring (NPM) provides observability into network communication by aggregating granular network data into application-layer dependencies across your environment. NPM and APM are tightly integrated: with unified service tagging, you can aggregate and analyze both your application and network data across services, cloud regions, containers, and more. This makes it easy for your teams to fold network telemetry into their root cause analysis workflows.
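Unified service tagging is built on three reserved tags, `env`, `service`, and `version`, which Datadog’s documentation suggests setting through the `DD_ENV`, `DD_SERVICE`, and `DD_VERSION` environment variables. The helper below is a hypothetical sketch (not part of any Datadog library) that checks a service’s environment for all three and renders them as `key:value` tags, which can be a quick sanity check before you rely on cross-product correlation.

```python
import os
from collections.abc import Mapping

# Datadog's reserved unified service tags and the environment variables
# conventionally used to set them.
UNIFIED_TAG_VARS = {"env": "DD_ENV", "service": "DD_SERVICE", "version": "DD_VERSION"}


def unified_service_tags(environ: Mapping[str, str] = os.environ) -> list[str]:
    """Build "key:value" unified service tags from an environment mapping.

    Hypothetical helper: raises if any of the three variables is unset or empty,
    since a missing tag weakens correlation between APM and NPM data.
    """
    missing = [var for var in UNIFIED_TAG_VARS.values() if not environ.get(var)]
    if missing:
        raise ValueError("missing unified service tagging variables: " + ", ".join(missing))
    return [f"{tag}:{environ[var]}" for tag, var in UNIFIED_TAG_VARS.items()]


# Illustrative values only.
print(unified_service_tags({"DD_ENV": "prod", "DD_SERVICE": "web-store", "DD_VERSION": "1.4.2"}))
# prints ['env:prod', 'service:web-store', 'version:1.4.2']
```

Passing the mapping explicitly keeps the check testable; by default it reads the process environment.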

In this post, we will cover how to use Datadog to analyze application and network performance data in tandem to more efficiently troubleshoot the root cause of application issues. We’ll walk through:

- Correlating traces with network data to identify the source of application latency

- Troubleshooting network communication issues in Datadog APM

Debug application issues with NPM

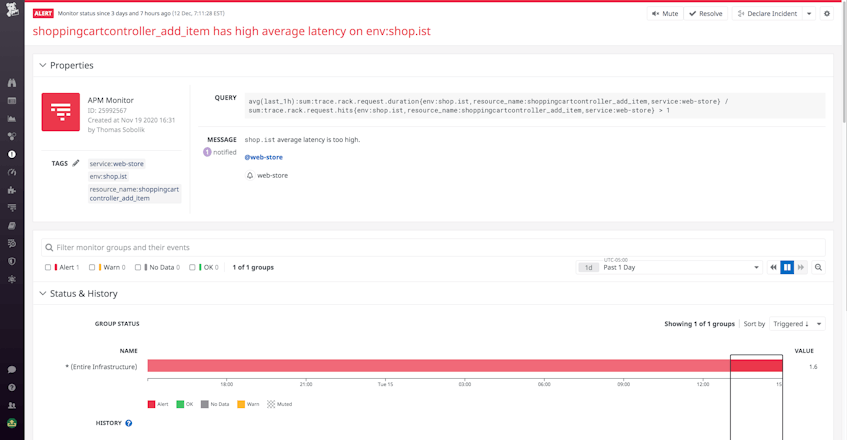

Let’s say we’ve received an alert reporting high average latency on one of our services, web-store. We can start by jumping directly from the alert to a list of web-store’s traces in APM to look for the slowest requests. From there, we can drill into a specific trace to examine spans that show runtime errors or have unusually long execution times, which may suggest that a particular process or function call needs to be optimized.
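One way to reason about “unusually long execution time” is a span’s self time: the part of its duration not covered by any of its direct children. The sketch below computes that over a hypothetical, simplified span record (the `Span` fields are assumptions, not Datadog’s trace schema) and ranks spans so the ones doing the most work of their own, along with their error flags, surface first. Children are merged as intervals so concurrent child calls are not double-counted.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    # Hypothetical, simplified span record; times are milliseconds from trace start.
    span_id: int
    parent_id: Optional[int]
    name: str
    start_ms: int
    duration_ms: int
    error: bool = False

    @property
    def end_ms(self) -> int:
        return self.start_ms + self.duration_ms


def union_length(intervals: list[tuple[int, int]]) -> int:
    """Total length covered by possibly overlapping (start, end) intervals."""
    total = 0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            cur_end = max(cur_end, end)  # overlapping child: extend the merged interval
    if cur_end is not None:
        total += cur_end - cur_start
    return total


def self_time_ms(span: Span, spans: list[Span]) -> int:
    """Duration of `span` not covered by its direct children (clipped to the parent)."""
    children = [
        (max(c.start_ms, span.start_ms), min(c.end_ms, span.end_ms))
        for c in spans
        if c.parent_id == span.span_id
    ]
    children = [(s, e) for s, e in children if e > s]  # drop children outside the parent
    return span.duration_ms - union_length(children)


def rank_spans(spans: list[Span]) -> list[tuple[str, int, bool]]:
    """(name, self time, error) for each span, longest self time first."""
    ranked = [(s.name, self_time_ms(s, spans), s.error) for s in spans]
    return sorted(ranked, key=lambda row: row[1], reverse=True)


spans = [
    Span(1, None, "web-store.request", 0, 1000),
    Span(2, 1, "db.query", 100, 200),
    Span(3, 1, "recommendations.fetch", 500, 300, error=True),
]
print(rank_spans(spans))
# prints [('web-store.request', 500, False), ('recommendations.fetch', 300, True), ('db.query', 200, False)]
```

The span names and timings here are illustrative only.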

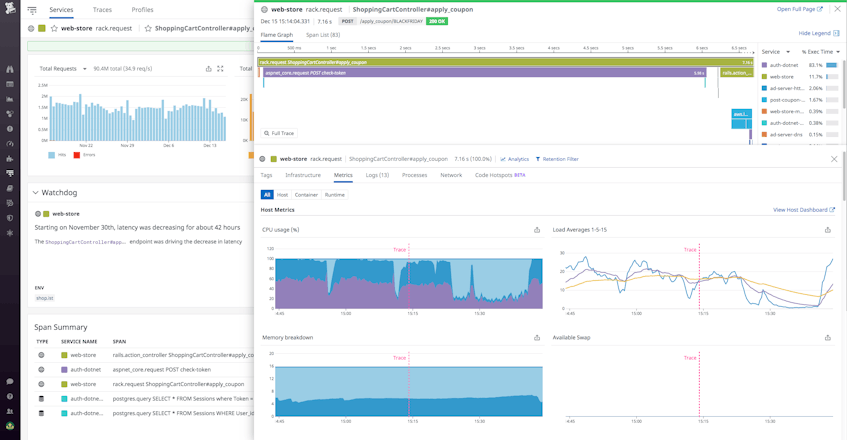

Datadog APM unifies telemetry from our application and the underlying infrastructure, so we can easily correlate the increased request latency with logs ingested from the service, which may also help us locate code errors.
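Log-to-trace correlation works because Datadog’s tracing libraries can inject the active trace and span IDs into log records (as `dd.trace_id` and `dd.span_id`). As a minimal sketch, assuming log records are plain dictionaries with a `dd.trace_id` attribute and a sortable `timestamp` field (a hypothetical shape), pulling the logs for one trace is a filter followed by a sort:

```python
from typing import Any


def logs_for_trace(logs: list[dict[str, Any]], trace_id: str) -> list[dict[str, Any]]:
    """Return the log records that carry `trace_id`, oldest first.

    Assumes each record stores the injected ID under "dd.trace_id" and has a
    sortable "timestamp" field (hypothetical record shape).
    """
    matching = [record for record in logs if record.get("dd.trace_id") == trace_id]
    return sorted(matching, key=lambda record: record["timestamp"])


# Illustrative records only.
logs = [
    {"timestamp": 3, "dd.trace_id": "111", "message": "checkout timed out"},
    {"timestamp": 1, "dd.trace_id": "111", "message": "checkout started"},
    {"timestamp": 2, "dd.trace_id": "222", "message": "unrelated request"},
    {"timestamp": 4, "message": "record without trace context"},
]
for record in logs_for_trace(logs, "111"):
    print(record["message"])
```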

To check whether a resource bottleneck is the cause, we can examine metrics for each span’s underlying host or container to see whether the latency may stem from a lack of available CPU or disk space. Resource contention can significantly slow down request execution if, for instance, a host is trying to process many requests concurrently. The Processes tab breaks down the resource consumption of every process running on the host to reveal any heavy colocated processes that may need to be streamlined.
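The kind of triage the Processes tab supports can be sketched as ranking a host’s processes by CPU usage and keeping only the heavy ones. The record shape (`name`, `cpu_pct`) and the 50% threshold are assumptions chosen for illustration, not values Datadog uses.

```python
from typing import Any


def heavy_processes(
    processes: list[dict[str, Any]], cpu_threshold_pct: float = 50.0, top_n: int = 3
) -> list[str]:
    """Names of up to `top_n` processes at or above the CPU threshold, heaviest first.

    Hypothetical record shape: {"name": str, "cpu_pct": float}.
    """
    ranked = sorted(processes, key=lambda p: p["cpu_pct"], reverse=True)
    return [p["name"] for p in ranked[:top_n] if p["cpu_pct"] >= cpu_threshold_pct]


# Illustrative values for processes colocated on one host.
processes = [
    {"name": "web-store", "cpu_pct": 35.0},
    {"name": "log-shipper", "cpu_pct": 88.5},
    {"name": "batch-report", "cpu_pct": 61.0},
    {"name": "sidecar", "cpu_pct": 4.0},
]
print(heavy_processes(processes))
```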

Turn to NPM to find the root cause

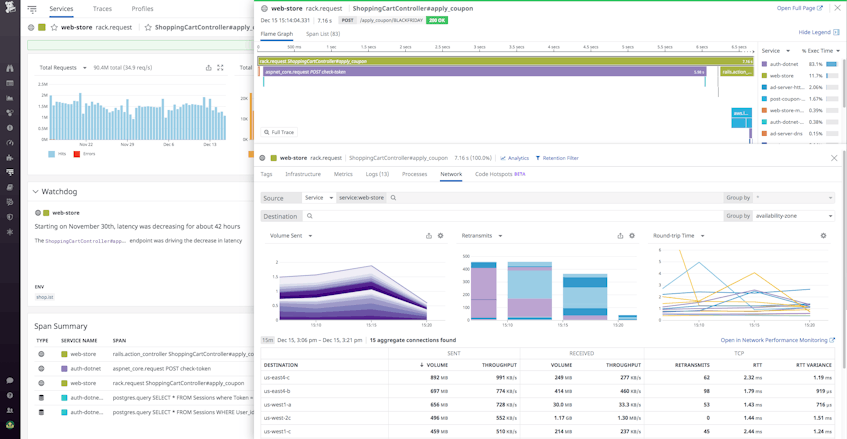

In our case, everything about our service that we can observe directly through the trace appears normal: we see no relevant code errors, and we have validated that the issue is not with our underlying infrastructure. We can use NPM’s Network tab within APM to refocus our analysis on network issues.

Examining the trace’s flame graph, we observe significant gaps between some of the spans, representing stretches of time when none of the instrumented services appear to be executing. We can infer that network communication between web-store and its dependencies is occurring during these gaps, which we can investigate further in the Network tab.
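Finding those gaps programmatically amounts to walking a parent span’s children in start order and recording every interval that no child covers. The sketch below assumes spans are reduced to `(start_ms, end_ms)` tuples (a hypothetical representation). A gap is only a candidate: it may be time on the network, but it could also be uninstrumented local work in the parent.

```python
def idle_gaps(
    parent: tuple[int, int], children: list[tuple[int, int]], min_gap_ms: int = 0
) -> list[tuple[int, int]]:
    """Intervals inside `parent` during which no child span was running.

    Spans are (start_ms, end_ms) tuples; only gaps longer than `min_gap_ms` are returned.
    """
    p_start, p_end = parent
    gaps = []
    cursor = p_start  # end of the latest activity seen so far
    for start, end in sorted(children):
        if end <= p_start or start >= p_end:
            continue  # child lies entirely outside the parent
        start, end = max(start, p_start), min(end, p_end)
        if start > cursor:
            gaps.append((cursor, start))
        cursor = max(cursor, end)
    if p_end > cursor:
        gaps.append((cursor, p_end))
    return [(s, e) for s, e in gaps if e - s > min_gap_ms]


# Illustrative trace: one request with two child calls.
print(idle_gaps((0, 1000), [(100, 300), (600, 900)], min_gap_ms=150))
# prints [(300, 600)]
```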

The Network tab shows aggregated network performance metrics for the selected service’s traffic, such as throughput, errors (TCP retransmits), and latency (TCP round-trip time). We can group destinations by the availability-zone facet to see which AZs web-store is communicating with. Sorting by received volume, we notice that our web-store endpoint is sending a significant amount of traffic to us-east4-c, even though we expect it to communicate most with us-east4-b. This unexpected cross-AZ traffic points to a potential client-side misconfiguration, which would explain the latency we observed on that endpoint.
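The group-and-sort step above is a simple aggregation. As a sketch, assuming hypothetical flow records with `source_service`, `dest_az`, and `bytes` fields (not NPM’s actual data model), we can total volume per destination AZ for one service and check whether the top destination matches the AZ we expect:

```python
from collections import defaultdict
from typing import Any


def volume_by_dest_az(flows: list[dict[str, Any]], source_service: str) -> list[tuple[str, int]]:
    """Total bytes per destination AZ for one source service, largest first."""
    totals: dict[str, int] = defaultdict(int)
    for flow in flows:
        if flow["source_service"] == source_service:
            totals[flow["dest_az"]] += flow["bytes"]
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


def top_az_is_unexpected(flows: list[dict[str, Any]], source_service: str, expected_az: str) -> bool:
    """True if the service's highest-volume destination AZ is not the expected one."""
    ranked = volume_by_dest_az(flows, source_service)
    return bool(ranked) and ranked[0][0] != expected_az


# Illustrative flows only.
flows = [
    {"source_service": "web-store", "dest_az": "us-east4-b", "bytes": 100},
    {"source_service": "web-store", "dest_az": "us-east4-c", "bytes": 300},
    {"source_service": "other", "dest_az": "us-east4-c", "bytes": 1000},
    {"source_service": "web-store", "dest_az": "us-east4-c", "bytes": 50},
]
print(volume_by_dest_az(flows, "web-store"))
print(top_az_is_unexpected(flows, "web-store", expected_az="us-east4-b"))
```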

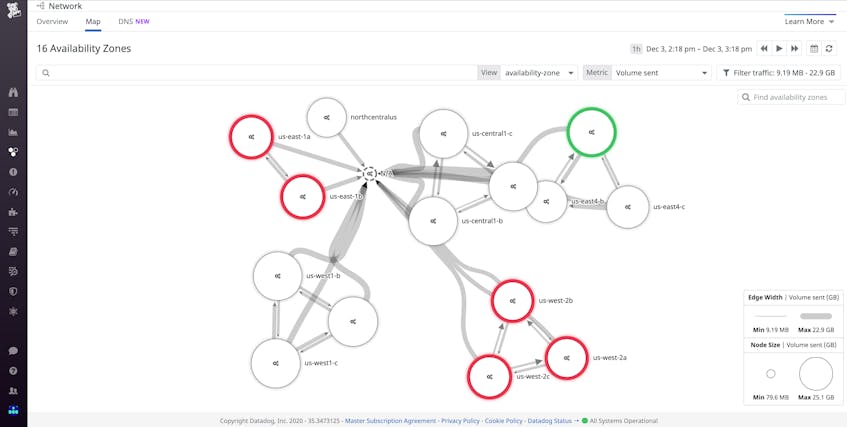

We can verify the hypothesis we developed in APM with the Network Map in NPM.

The Network Map visualizes network traffic between any tagged objects in our environment, from services to pods to cloud regions. Grouping by availability zone and selecting received volume as the metric for edge weights, we can see that us-east4-b and us-east4-c are communicating more than usual, which reflects the unusual traffic we observed from our web-store service to us-east4-c. Now that we’ve determined the root cause, we can focus our remediation efforts. By digging into our code’s deployment history, we can surface any recent deployments that line up with the spike in cross-AZ traffic volume and then audit those changes. A timely rollback will relieve our web-store service of the undue overhead and avert what could have been a significant increase in our cloud provider bill.
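“Communicating more than usual” implies a comparison against a baseline. The sketch below shows one way to express that under stated assumptions: flows are hypothetical `(src_az, dst_az, bytes)` tuples, edges are treated as undirected AZ pairs (same-AZ traffic is skipped), and an edge is flagged when its current weight is at least `ratio` times its baseline. The 2x default is an arbitrary illustration, not a Datadog threshold.

```python
from collections import defaultdict


def cross_az_edges(flows: list[tuple[str, str, int]]) -> dict[tuple[str, str], int]:
    """Aggregate (src_az, dst_az, bytes) flows into undirected cross-AZ edge weights."""
    edges: dict[tuple[str, str], int] = defaultdict(int)
    for src_az, dst_az, nbytes in flows:
        if src_az != dst_az:  # same-AZ traffic is not a cross-AZ edge
            a, b = sorted((src_az, dst_az))
            edges[(a, b)] += nbytes
    return dict(edges)


def unusual_edges(
    current: dict[tuple[str, str], int], baseline: dict[tuple[str, str], int], ratio: float = 2.0
) -> dict[tuple[str, str], float]:
    """Edges whose current weight is at least `ratio` times their baseline weight."""
    flagged: dict[tuple[str, str], float] = {}
    for edge, weight in current.items():
        base = baseline.get(edge, 0)
        if base == 0:
            if weight > 0:
                flagged[edge] = float("inf")  # new edge with no baseline traffic
        elif weight / base >= ratio:
            flagged[edge] = weight / base
    return flagged


# Illustrative volumes only.
baseline = cross_az_edges([("us-east4-b", "us-east4-c", 300), ("us-east4-a", "us-east4-b", 100)])
current = cross_az_edges([
    ("us-east4-b", "us-east4-c", 500),
    ("us-east4-c", "us-east4-b", 400),
    ("us-east4-a", "us-east4-b", 110),
    ("us-east4-b", "us-east4-b", 5000),
])
print(unusual_edges(current, baseline))
```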

Use Datadog APM to investigate observed network issues

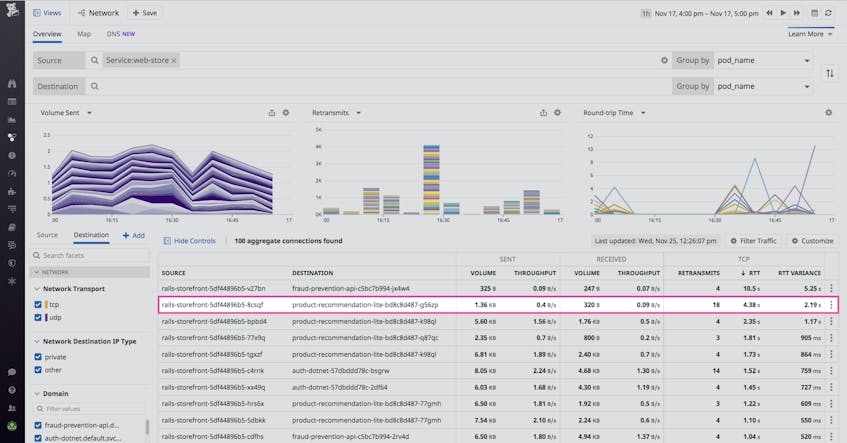

Thanks to unified tagging, we can also work in the other direction, using NPM to identify potential application issues to troubleshoot in APM. For example, we can start on the Network page to get a high-level view of pod communication and health across our environment. Sorting the table by TCP round-trip time, we observe that traffic from rails-storefront-5df44896b5-8csqf to product-recommendation-lite-bd8c8d487-g56zp is experiencing high latency as well as connectivity issues, indicated by a large number of TCP retransmits. This could mean that a misconfiguration or code error on either the source or destination endpoint is causing requests to hang, leading to packet loss.
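Sorting by round-trip time and checking retransmits can be sketched over a hypothetical `Connection` record. Apart from the two pods named above, the pod names and all metric values are illustrative, and the retransmit threshold of 10 is an arbitrary assumption.

```python
from dataclasses import dataclass


@dataclass
class Connection:
    # Hypothetical pod-to-pod connection summary.
    source: str
    dest: str
    rtt_ms: float
    retransmits: int


def worst_connections(
    conns: list[Connection], top_n: int = 5, min_retransmits: int = 10
) -> list[tuple[str, str, bool]]:
    """Highest-RTT connections first, each flagged if its retransmit count looks unhealthy."""
    ranked = sorted(conns, key=lambda c: c.rtt_ms, reverse=True)[:top_n]
    return [(c.source, c.dest, c.retransmits >= min_retransmits) for c in ranked]


conns = [
    Connection("rails-storefront-5df44896b5-8csqf", "product-recommendation-lite-bd8c8d487-g56zp", 420.0, 57),
    Connection("rails-storefront-5df44896b5-8csqf", "cart-db-0", 3.2, 0),  # hypothetical pod
    Connection("checkout-7f9c", "payments-1", 12.5, 2),  # hypothetical pods
]
for source, dest, unhealthy in worst_connections(conns, top_n=2):
    print(source, "->", dest, "retransmits high" if unhealthy else "ok")
```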

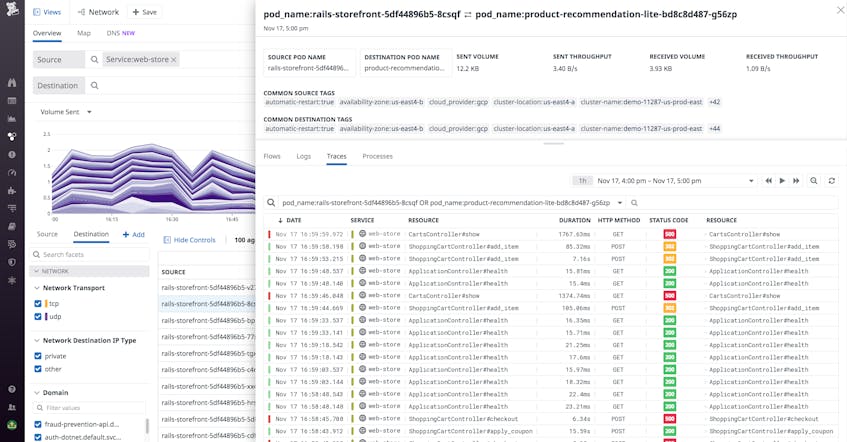

When inspecting one of these faulty dependencies, we can use the Traces tab to home in on traces that may be throwing errors or suffering from exceptionally long execution times.

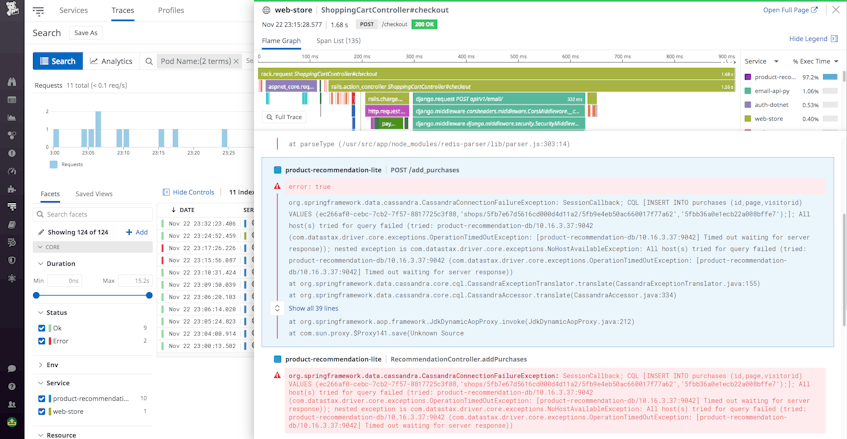

In the table shown, we can see that some requests are extremely slow, and some of them are timing out. NPM and APM are linked through this view, so we can open this list in APM to filter and sort the traces by other facets, including duration and status, to find the slowest traces or those throwing errors. We can then drill down into one of the erroring traces to diagnose the cause of its exceptionally long duration.
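Filtering by status and duration, then sorting, can be sketched as follows. `TraceSummary`, its fields, and the `"ok"`/`"error"` status values are hypothetical stand-ins for trace facets, and the 5,000 ms cutoff is an arbitrary example. Errored traces come first, then everything is ordered slowest first.

```python
from dataclasses import dataclass


@dataclass
class TraceSummary:
    # Hypothetical per-trace summary.
    trace_id: str
    duration_ms: int
    status: str  # "ok" or "error" (assumed values)


def slow_or_failing(traces: list[TraceSummary], min_duration_ms: int) -> list[TraceSummary]:
    """Traces that errored or ran at least `min_duration_ms`: errors first, then slowest first."""
    matches = [t for t in traces if t.status == "error" or t.duration_ms >= min_duration_ms]
    # False sorts before True, so errors lead; negated duration puts the slowest first.
    return sorted(matches, key=lambda t: (t.status != "error", -t.duration_ms))


# Illustrative traces only.
traces = [
    TraceSummary("a", 120, "ok"),
    TraceSummary("b", 15200, "error"),
    TraceSummary("c", 9800, "ok"),
    TraceSummary("d", 300, "error"),
]
print([t.trace_id for t in slow_or_failing(traces, min_duration_ms=5000)])
# prints ['b', 'd', 'c']
```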

By correlating logs with this trace, we observe a series of timeout errors thrown by requests to the ShoppingCartController#checkout endpoint. The trace surfaces spans for the function recommendationController.addPurchases that run for over fifteen seconds before timing out. This prevents the checkout process from completing and triggers TCP retransmits, so we can conclude that this issue may be a significant contributor to the connectivity issues we previously spotted in NPM. We’ve used APM to root out an issue on a particular endpoint of our service, and we can now work toward releasing a code fix.

Start debugging with Datadog NPM alongside APM

Together, Datadog NPM and APM give your team a number of workflows for quick, precise root cause analysis of application performance issues across your infrastructure, all from a single pane of glass. By making it easy to correlate application traces with network metrics, these tools make network telemetry accessible to every engineer in your organization, giving teams more context into problems and streamlining how they manage services and triage issues in production.

To get started with APM, follow the configuration steps to start tracing your application. Then, use Network Performance Monitoring to monitor the health of your application’s dependencies. Be sure to implement unified service tagging to get the most comprehensive correlation and the broadest set of facets. If you’re brand new to Datadog, sign up for a 14-day free trial.