Kruthi Vuppala

Brooke Chen

Senior Product Manager

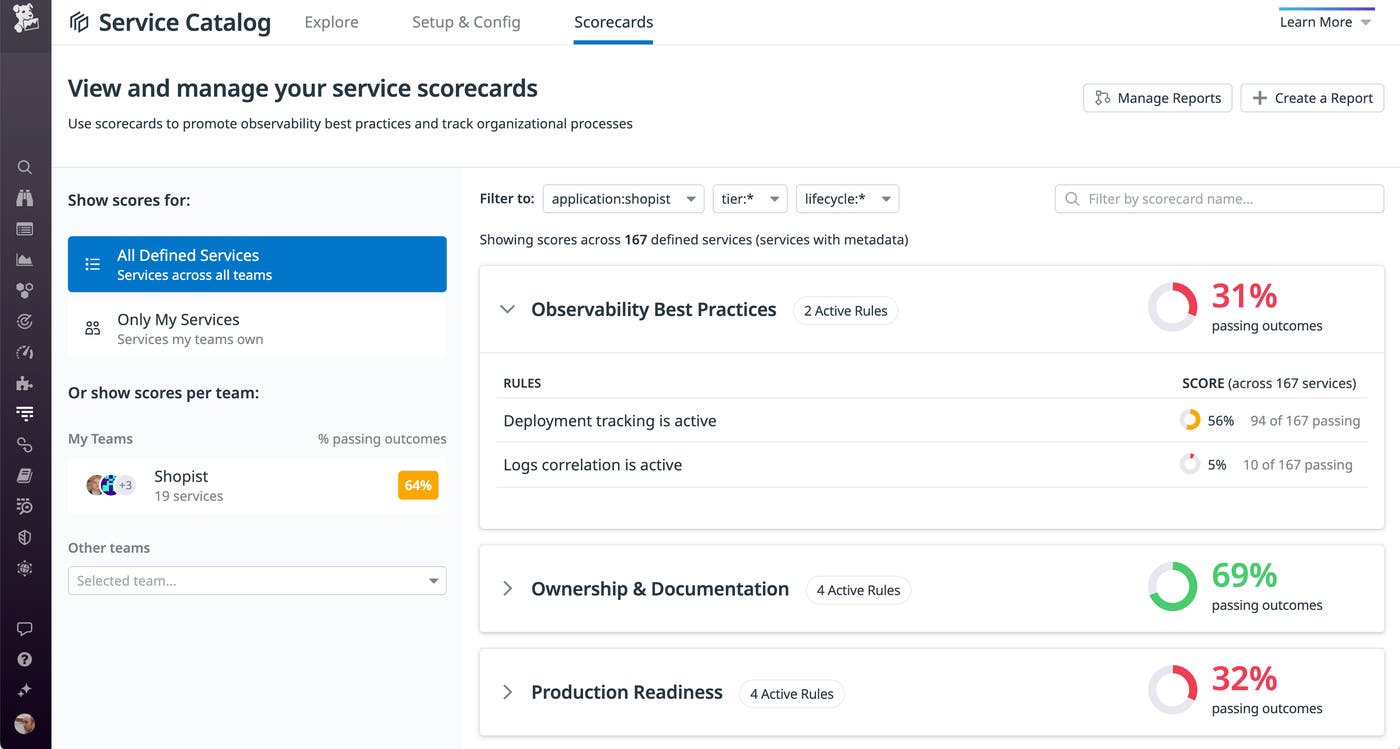

The Datadog Software Catalog consolidates knowledge of your organization’s services and shows you information about their performance, reliability, and ownership in a central location. The Software Catalog now includes Scorecards, which inform service owners, SREs, and other stakeholders throughout your organization of any gaps in observability or deviations from reliability best practices.

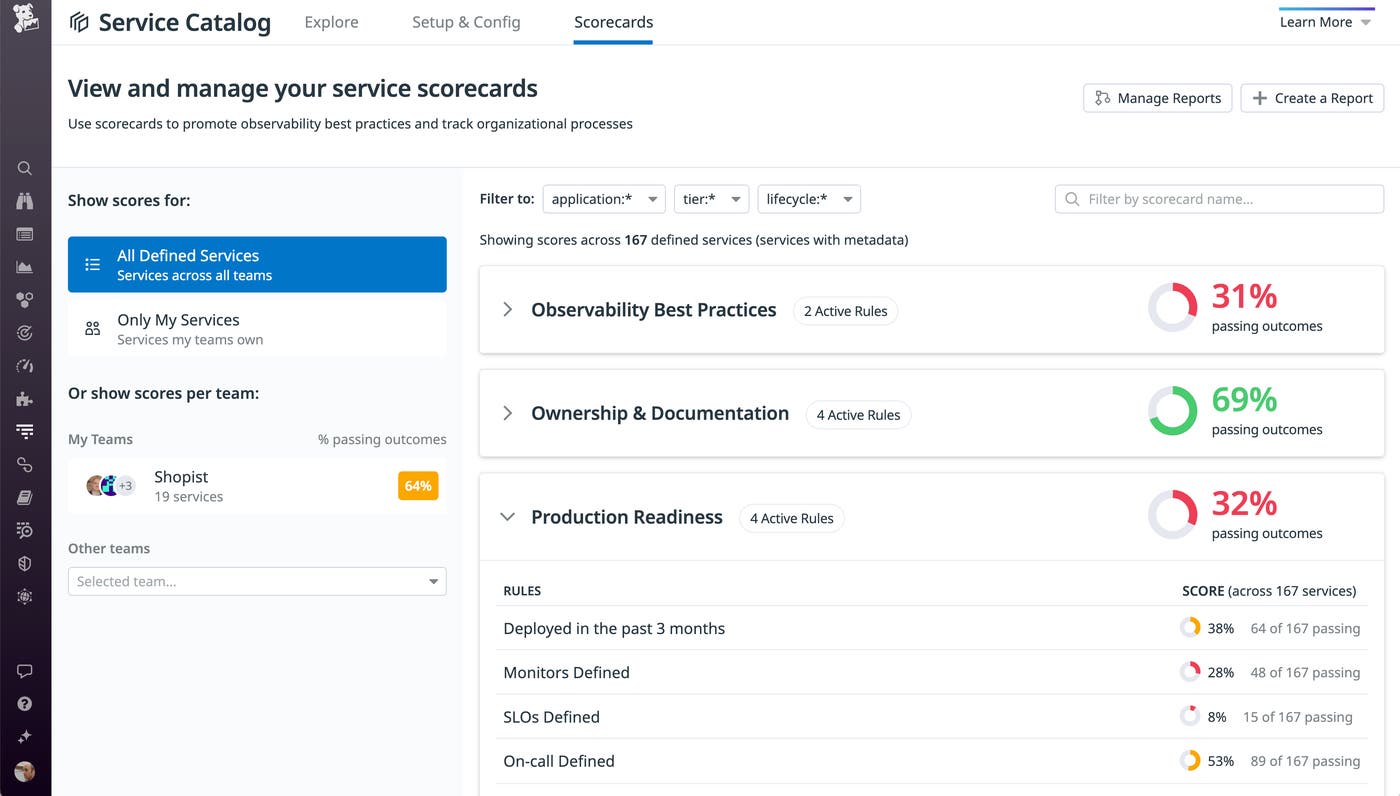

Datadog automatically evaluates each service against pass-fail rules in three categories: Production Readiness, Ownership & Documentation, and Observability Best Practices. Scorecards summarize how well each service meets these rules and give teams a starting point for learning about and prioritizing improvements to their services.

In this post, we’ll look at some of the ways in which Scorecards can help your team align with best practices, plan work to improve your services’ observability, and communicate and collaborate with stakeholders and other teams.

Ensure that teams have adopted best practices

The first scorecard category, Production Readiness, includes rules to help ensure that an SRE team’s processes have positioned them well to operate their services. The category includes a rule that evaluates whether a team has defined SLOs to track their services’ performance and another to check that they’ve created monitors so that they can quickly react to potential issues. Another rule checks that the Software Catalog record designates an on-call responder to enable collaboration between teams as they troubleshoot incidents that involve more than one service. The final rule in the category tests whether the team has deployed an updated version of the service within the last three months to make sure that they’re following agile best practices.
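To make the pass-fail model concrete, here is a minimal Python sketch of how the four Production Readiness rules described above could be expressed as checks. It is an illustration only, not Datadog's implementation or API: the `ServiceRecord` type, its field names, the rule labels, the `checkout-api` service, and the 90-day approximation of "three months" are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ServiceRecord:
    """Hypothetical snapshot of one service; field names are illustrative."""
    name: str
    slo_count: int
    monitor_count: int
    on_call_responder: Optional[str]
    last_deploy: Optional[date]


def production_readiness(service: ServiceRecord, today: date) -> dict[str, bool]:
    """Return a pass/fail outcome for each of the four rules."""
    # Assumption: "three months" is approximated as 90 days in this sketch.
    deploy_cutoff = today - timedelta(days=90)
    return {
        "SLOs defined": service.slo_count > 0,
        "Monitors defined": service.monitor_count > 0,
        "On-call responder designated": bool(service.on_call_responder),
        "Deployed in the last three months": (
            service.last_deploy is not None
            and service.last_deploy >= deploy_cutoff
        ),
    }


# Hypothetical service with monitors and an on-call responder but no SLOs.
checkout = ServiceRecord(
    name="checkout-api",
    slo_count=0,
    monitor_count=3,
    on_call_responder="checkout-oncall",
    last_deploy=date(2024, 1, 15),
)
results = production_readiness(checkout, today=date(2024, 3, 1))
for rule, passed in results.items():
    print(f"{rule}: {'pass' if passed else 'fail'}")
```

In this sketch each rule is a simple boolean, which mirrors the pass-fail framing: a service either meets the condition or it does not, and the failing rules point directly at the work to prioritize.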

The Observability Best Practices category helps teams track their adoption of Datadog monitoring capabilities. Rules in this category evaluate whether a team can correlate APM data with logs, which speeds up troubleshooting, and whether the team uses Deployment Tracking to correlate performance issues with code deployments.

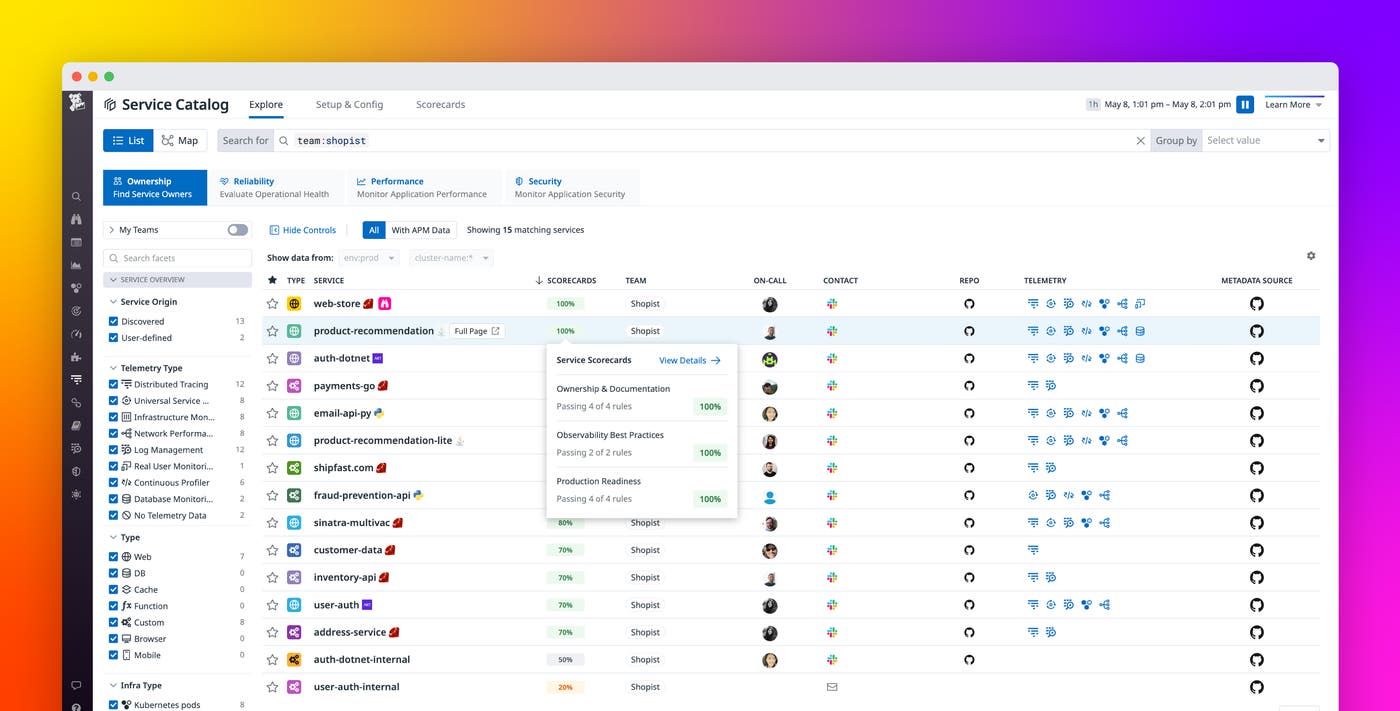

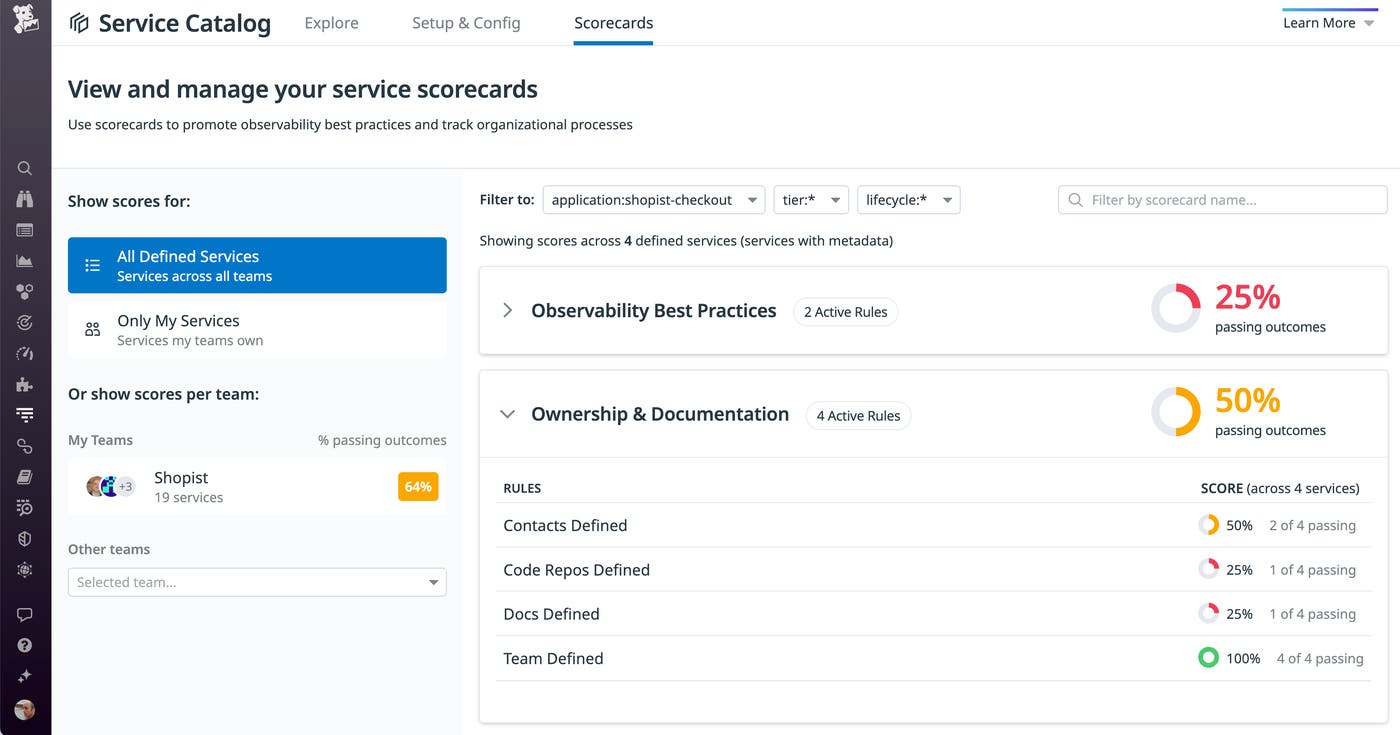

The screenshot below illustrates how engineering managers can track their teams’ scores by filtering the Scorecards view to show only relevant services. The scores column shows the percentage of services in the shopist application that are passing each Observability Best Practices rule. This can help managers ensure that all teams are consistently using Datadog features to get maximum visibility into their services.

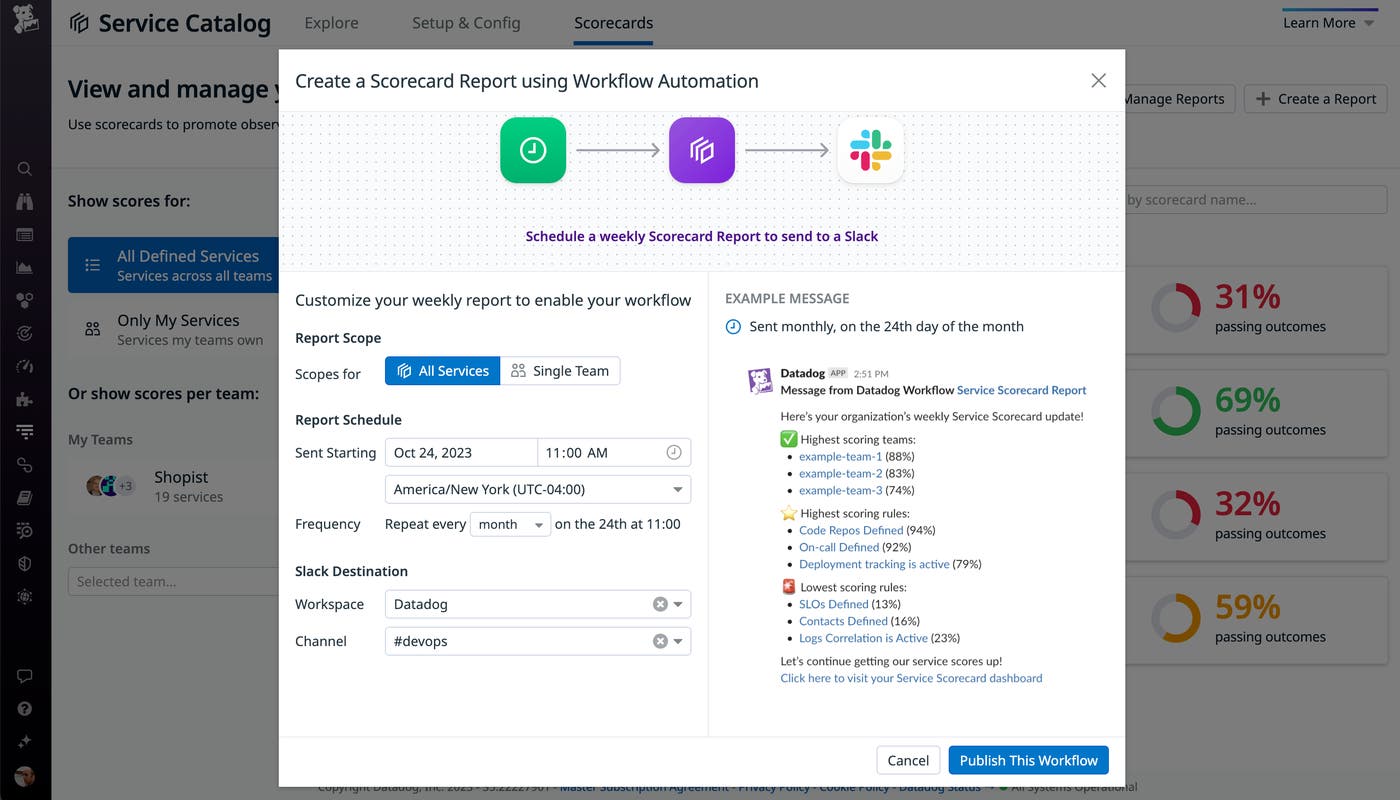
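The per-rule percentage in the scores column is simple arithmetic: the number of services passing a rule divided by the number of services evaluated against it. The Python sketch below shows that calculation under stated assumptions; the service names, rule labels, and the `rule_pass_rates` helper are hypothetical and do not come from a Datadog API.

```python
from collections import defaultdict


def rule_pass_rates(outcomes):
    """Map each rule name to the percentage of services passing it.

    `outcomes` is an iterable of (service, rule, passed) tuples.
    """
    passed = defaultdict(int)
    total = defaultdict(int)
    for _service, rule, ok in outcomes:
        total[rule] += 1
        if ok:
            passed[rule] += 1
    return {rule: round(100 * passed[rule] / total[rule]) for rule in total}


# Hypothetical outcomes for four services in one application.
outcomes = [
    ("web-store", "APM and logs correlated", True),
    ("checkout-api", "APM and logs correlated", False),
    ("cart-api", "APM and logs correlated", True),
    ("payments-api", "APM and logs correlated", True),
    ("web-store", "Deployment Tracking in use", True),
    ("checkout-api", "Deployment Tracking in use", False),
    ("cart-api", "Deployment Tracking in use", False),
    ("payments-api", "Deployment Tracking in use", False),
]
rates = rule_pass_rates(outcomes)
for rule, pct in rates.items():
    print(f"{rule}: {pct}% of services passing")
```

Aggregating by rule rather than by service is what lets a manager spot a capability that is under-adopted across an entire application, as opposed to a single service that is falling behind.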

To help managers, team members, and stakeholders proactively stay informed of the progress and risks identified by Scorecards, you can create recurring Scorecard reports. These reports leverage Workflow Automation to send users an overview of service scores for each team or for the organization as a whole. This makes it easy to continuously identify areas for improvement by illustrating how well services and teams are meeting production readiness standards.

The screenshot below shows how you can create a recurring Scorecard report that sends a monthly notification to the #devops Slack channel. The report identifies the teams with the highest scores and highlights the rules with the highest and lowest scores, so service owners can see the areas where teams are succeeding and where they need to make progress.

Identify and prioritize improvements

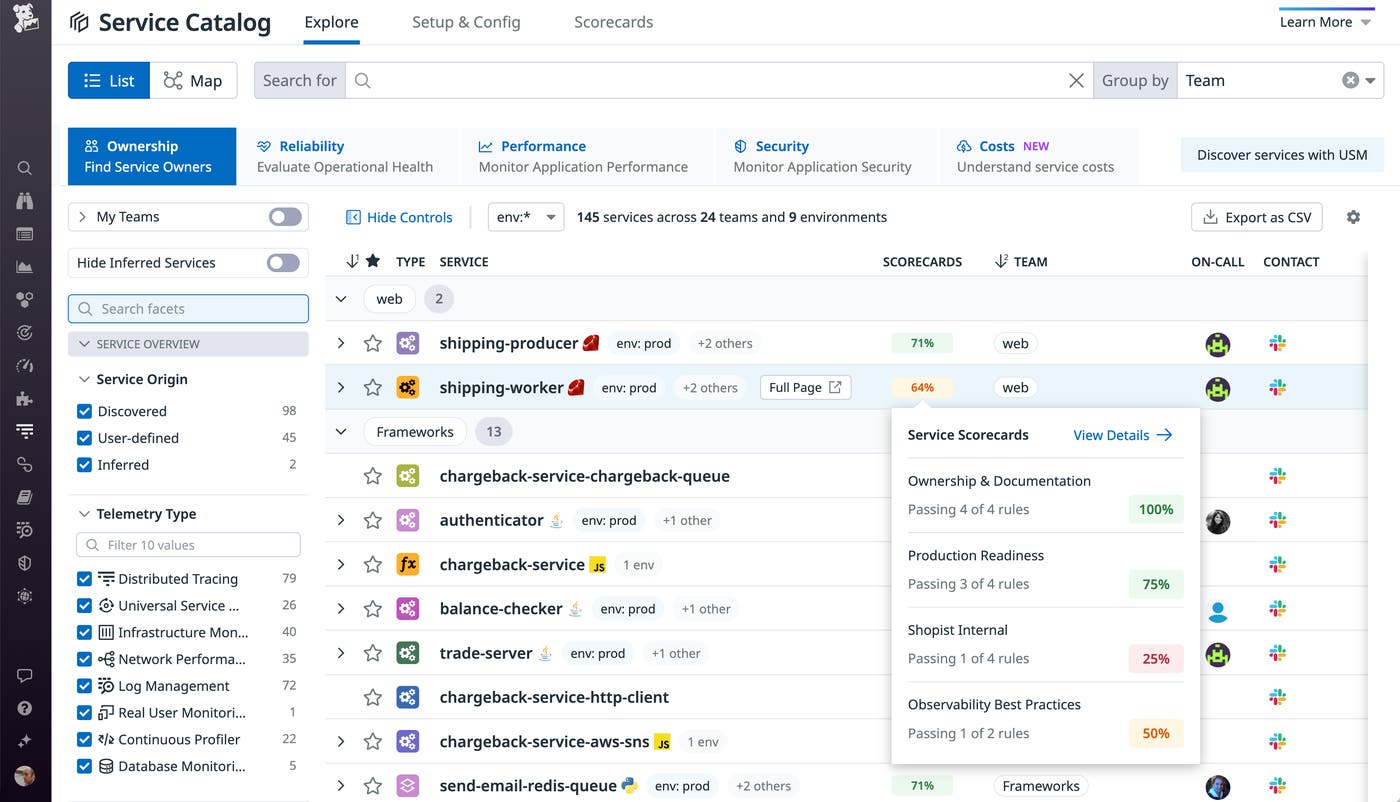

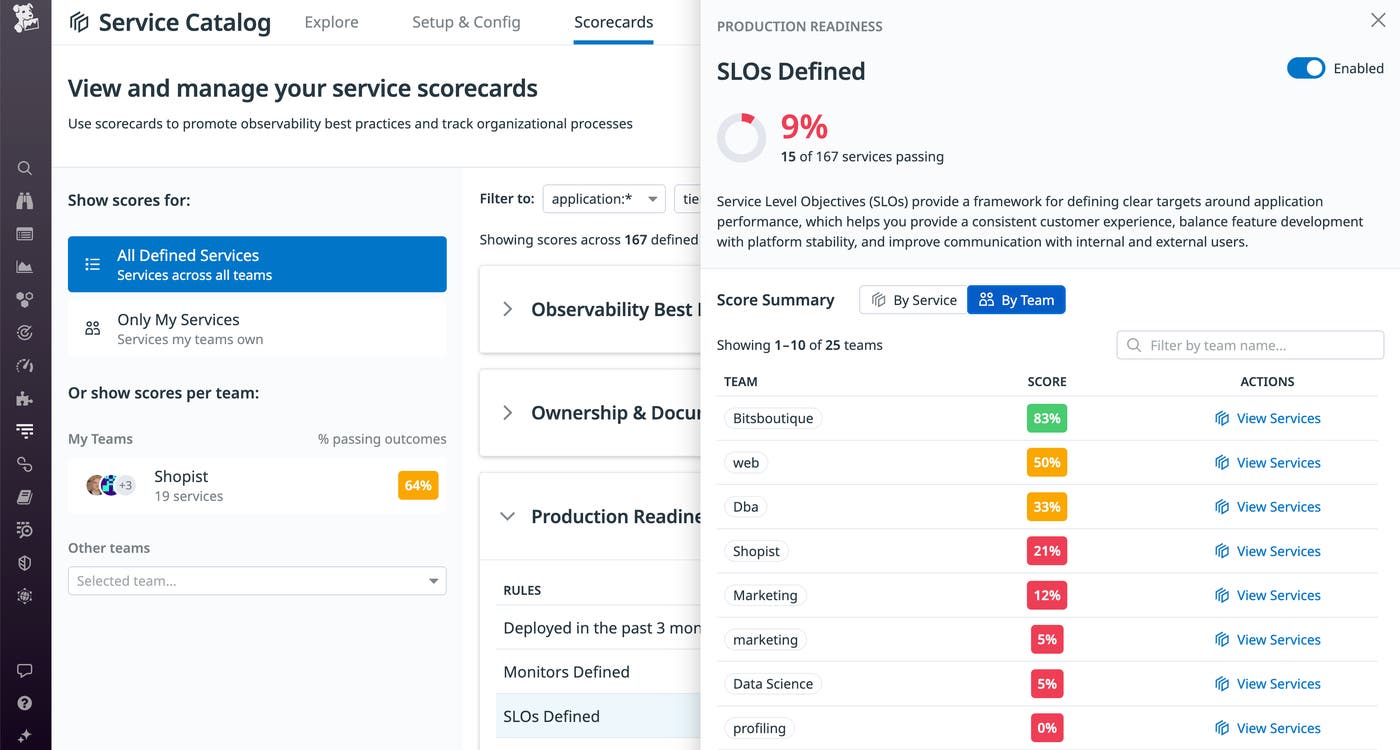

Scorecards give teams an up-to-date assessment of their services, which they can use to identify, prioritize, and plan improvements. The screenshot below shows that across all applications and teams, only 8 percent of services have passed the Production Readiness category’s SLOs Defined rule.

By clicking the scorecard, the service owner can see detailed information about any failed rules, plus relevant documentation to help their team make revisions to align with the conditions of the rule. In the screenshot below, the detail panel describes how SLOs help teams set clear targets for their service and ensure consistent performance.

Datadog automatically updates your scores once a day, so your scorecards always highlight the areas where your team should focus to gain visibility and maximize the service’s performance, reliability, and availability. As teams make progress mitigating the observability gaps in their services, service owners can use Scorecards to share that progress with stakeholders across the organization.

Communicate with stakeholders

The Software Catalog helps service owners build trust with stakeholders by giving them insight into the health and performance of their services. Scorecards extend this transparency by letting stakeholders identify actionable areas of improvement for these services, such as observability gaps or failure to adhere to standard team processes. And you can configure Scorecard reports to automatically and continually communicate how well each team adheres to preferred development practices. This information can help organizations recognize risks and prioritize the work necessary to remediate issues.

For example, an engineering manager can track the Scorecards of teams in their area to verify that they have created SLOs and designated on-call incident responders for their services. Based on that information, that manager may direct teams with failing scores to prioritize remediation over feature development until they have improved their services to comply with those rules.

Rules in the Ownership & Documentation category evaluate whether a team’s code repositories, documentation, runbooks, and dashboards are easily accessible to other teams whose services depend on theirs. By verifying that this information is available, these rules—as well as the Production Readiness rule that ensures that an on-call responder has been designated—help teams hold themselves accountable to other teams. The screenshot below shows that the team that operates the shopist-checkout application has provided documentation and code repositories for only 25 percent of their services. This communicates to stakeholders a risk that can hamper collaboration and highlights an improvement that the team can make.

The organization can track scorecards in these categories to understand how efficiently teams can troubleshoot and which remediations would improve their incident response and reduce mean time to resolution (MTTR).

Maintain visibility and track improvement with Scorecards

By allowing service owners, engineers, managers, and other stakeholders in your organization to continually track service observability, Scorecards help teams improve the performance, reliability, and availability of their services.

See the documentation to learn more about Scorecards and the Software Catalog. And if you’re not yet using Datadog, you can start right away with a 14-day free trial.