Jordan Obey

Senior Technical Content Writer

Machine learning (ML) platforms such as Amazon SageMaker, Azure Machine Learning, and Google Vertex AI are fully managed services that enable data scientists and engineers to easily build, train, and deploy ML models. Common use cases for ML platforms include natural language processing (NLP) models for text analysis and chatbots, personalized recommendation systems for e-commerce web applications and streaming services, and predictive business analytics. Whatever your use case, a managed ecosystem helps simplify and expedite each step of the ML workflow by providing a broad set of tools that let you automate and scale ML development tasks, from preprocessing data to training and deploying models, on a single platform.

To ensure that your managed platform can support your ML workloads, it’s crucial to monitor the availability and efficient utilization of cloud resources. Insufficient resources can result in prolonged training times, models that are retrained less often and grow stale, and slow inference. Stale models make less accurate predictions and slow inference degrades application performance, both of which diminish customer satisfaction and hurt your bottom line.

In this post, we will go over a typical ML workflow using managed platforms and the monitoring best practices that matter at each step of producing and maintaining ML models. Then we’ll look at the key rate, error, and duration (RED) metrics you should monitor to ensure that your ML-powered applications maintain peak performance.

The ML workflow

ML workflows may vary depending on your use case, but a typical workflow includes data preparation, model training, model evaluation, model deployment, and model monitoring.

Without the help of a managed platform, setting up and maintaining the infrastructure to support training and deploying ML models would be a complex and time-consuming task involving manually managing various components, such as servers, storage, networking configurations, and software dependencies.

As we dive into each step of a typical ML workflow, we will look at which resources you should pay attention to so that you can ensure managed platforms are supporting your ML models as expected.

Data preparation

The first step of an ML workflow is data extraction and preparation. This step involves standardizing, transforming, and ingesting the data you need to train your models. Access to this data, which is usually collected from an open source repository or an in-house database, enables models to analyze and recognize patterns so that they can then generate inferences.

For example, let’s say you want to integrate a customer service chatbot into your e-commerce website. In the data preparation phase, you may collect sample data that includes saved text transcripts of customer service phone calls, emails, or any other relevant data. You would then clean and standardize the text by removing all sensitive data, converting everything to lowercase, and lemmatizing or stemming the text. You might also label the text, giving words “positive” and “negative” weights so that your models can interpret customer sentiment.
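The cleaning and labeling steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a production pipeline: the two redaction regular expressions, the toy suffix-stripping `stem` function (a crude stand-in for a real stemmer such as Porter's, or for a lemmatizer), and the tiny `SENTIMENT_LEXICON` are all hypothetical.

```python
import re

# Hypothetical redaction patterns; real PII removal needs much broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Toy suffix list standing in for a real stemmer or lemmatizer.
SUFFIXES = ("ing", "ed", "es", "s")

# Hypothetical lexicon of stemmed words with positive (+1) or negative (-1) weights.
SENTIMENT_LEXICON = {"great": 1, "thank": 1, "help": 1, "broken": -1, "slow": -1, "refund": -1}


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("<email>", text)
    return PHONE_RE.sub("<phone>", text)


def stem(token: str) -> str:
    """Strip the first matching suffix, keeping a stem of at least three letters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text: str) -> list[str]:
    """Redact sensitive data, lowercase, tokenize, and stem a transcript."""
    text = redact(text).lower()
    tokens = re.findall(r"<\w+>|[a-z']+", text)
    # Placeholder tokens such as <email> are kept as-is; words are stemmed.
    return [tok if tok.startswith("<") else stem(tok) for tok in tokens]


def label_sentiment(tokens: list[str]) -> str:
    """Label a transcript by summing lexicon weights over its stemmed tokens."""
    score = sum(SENTIMENT_LEXICON.get(tok, 0) for tok in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


tokens = preprocess("My order is BROKEN, email me at jane.doe@example.com or call +1 (555) 123-4567!")
print(tokens)
print(label_sentiment(tokens))
```

Because redaction runs before any other step, sensitive values never reach the tokenizer or the labeled dataset.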

Additionally, modern ML workflows often incorporate feature stores, which are centralized repositories that store and serve the measurable attributes (features) used to train your models. Feature stores are particularly useful for serving features that must be precomputed from external data streams. For example, you could use a feature store to hold features precomputed from customer interaction data, such as sentiment scores or customer behavior indicators. Your models can then retrieve these precomputed features during both training and inference.
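To make the pattern concrete, here is a minimal in-memory sketch: features are computed ahead of time from raw interaction records, written under a customer ID, and later looked up by that ID. Managed offerings such as SageMaker Feature Store and Vertex AI Feature Store have their own APIs; the `InMemoryFeatureStore` class, `compute_customer_features`, and the record fields used here are hypothetical and only illustrate the precompute-then-lookup idea.

```python
from datetime import datetime, timezone


class InMemoryFeatureStore:
    """Hypothetical, minimal feature store keyed by entity ID (here, a customer ID)."""

    def __init__(self):
        # entity_id -> feature name -> (value, timestamp the value was written)
        self._rows = {}

    def write(self, entity_id, features, ts=None):
        ts = ts or datetime.now(timezone.utc)
        row = self._rows.setdefault(entity_id, {})
        for name, value in features.items():
            row[name] = (value, ts)

    def read(self, entity_id, names):
        """Return the latest value of each requested feature, or None if missing."""
        row = self._rows.get(entity_id, {})
        return {name: row[name][0] if name in row else None for name in names}


def compute_customer_features(interactions):
    """Precompute per-customer features from raw interaction records.

    Each record is assumed to be a dict with hypothetical keys
    "customer_id" and "sentiment" (a score between -1 and 1).
    """
    totals = {}
    for event in interactions:
        stats = totals.setdefault(event["customer_id"], {"sentiment_sum": 0.0, "count": 0})
        stats["sentiment_sum"] += event["sentiment"]
        stats["count"] += 1
    return {
        customer_id: {
            "avg_sentiment": stats["sentiment_sum"] / stats["count"],
            "interaction_count": stats["count"],
        }
        for customer_id, stats in totals.items()
    }


store = InMemoryFeatureStore()
events = [
    {"customer_id": "c1", "sentiment": 0.5},
    {"customer_id": "c1", "sentiment": -0.25},
    {"customer_id": "c2", "sentiment": 0.75},
]
for customer_id, features in compute_customer_features(events).items():
    store.write(customer_id, features)

# At training or inference time, the model looks up precomputed features by ID.
print(store.read("c1", ["avg_sentiment", "interaction_count"]))
```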

The data preparation step also often involves splitting data into different datasets for different purposes. For instance, as we will discuss more in the model evaluation section, you may use one dataset to train your models and another dataset to validate that training.
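A reproducible split can be sketched in a few lines. The 80/10/10 proportions and the fixed seed below are illustrative assumptions, not values the managed platforms prescribe; the point is that the three sets never overlap and the same seed always produces the same split.

```python
import random


def split_dataset(records, train_frac=0.8, val_frac=0.1, seed=42):
    """Shuffle records and split them into train, validation, and test sets.

    Whatever is left after the train and validation fractions becomes the test set.
    """
    if not 0 < train_frac < 1 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave a non-empty share for the test set")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split reproducible
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```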

Alert on high memory usage

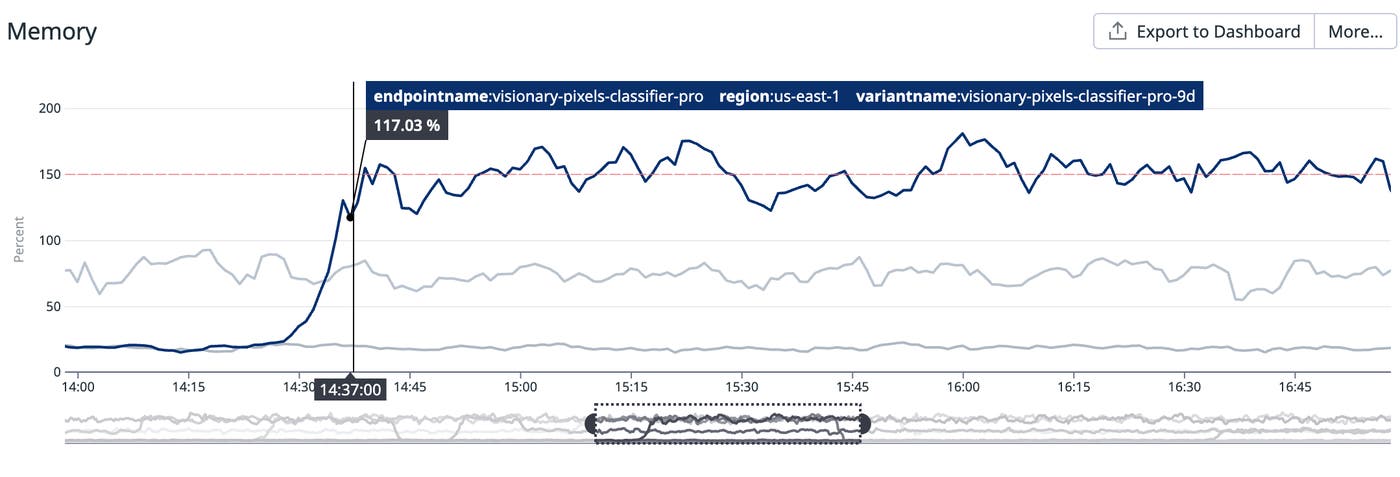

Alerting on memory utilization during data preparation is crucial because memory plays a vital role in storing and processing sample data before model training. Insufficient memory can hinder dataset loading, slowing preprocessing and potentially leading to reduced model performance. By monitoring memory utilization, you can proactively identify and address issues such as resource contention or memory leaks, ensuring smooth data preparation and optimal model training. You can also manage memory constraints by offloading preprocessing to distributed data processing services such as Google Cloud Dataflow, AWS Glue, and Azure Data Factory.

Alerting on high memory utilization can help you prevent potential performance issues with your ML models. For example, if our e-commerce customer service chatbot hosted on Amazon SageMaker endpoints is experiencing high memory usage, it could lead to resource contention and impact response times. In extreme cases, insufficient memory may result in out-of-memory (OOM) errors, causing the chatbot to become unresponsive or shut down abruptly.
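One way to alert on sustained memory pressure without paging on every brief spike is to evaluate the average of a recent window of samples. The sketch below assumes utilization samples in percent, oldest first; the 80% warning and 90% critical thresholds and the five-sample window are hypothetical values to tune for your workload.

```python
def memory_alert_state(samples, warn=80.0, critical=90.0, window=5):
    """Evaluate memory-utilization samples (percent, oldest first).

    Averages the most recent `window` samples so that a single spike does not
    trigger an alert, similar in spirit to alerting on an average over the
    last N minutes.
    """
    if len(samples) < window:
        return "NO DATA"
    recent_avg = sum(samples[-window:]) / window
    if recent_avg > critical:
        return "ALERT"
    if recent_avg > warn:
        return "WARN"
    return "OK"


print(memory_alert_state([60, 60, 60, 60, 100]))         # one brief spike
print(memory_alert_state([50, 50, 95, 96, 97, 98, 99]))  # sustained pressure
```

A wider window reduces noise but delays detection, so the right size depends on how quickly memory can climb toward an OOM error in your workload.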

Model training

You can begin to train your model on prepared data using built-in or custom algorithms. For example, Amazon SageMaker provides built-in algorithms for text classification, which organizes text into separate categories, and summarization, which is used to highlight the most important data within a given text. In our e-commerce customer service chatbot use case, these algorithms would provide insight into the experience of your customers and help models generate appropriate responses, such as a chatbot recognizing if users are frustrated with the checkout flow of your site and asking for feedback on how to improve it.

In addition to built-in algorithms and pretrained models, managed platforms such as SageMaker and Vertex AI provide you with high-performance computing resources including Graphics Processing Units (GPUs) and AI accelerators such as Google Cloud TPU and AWS Trainium, which enable you to train models quickly and at scale. GPUs are optimized for parallel processing and are particularly useful for handling complex computations and training large-scale deep learning models on massive volumes of data.

CPU is also important during model training and plays an essential role in hyperparameter tuning: the process of identifying the optimal external configuration settings of an ML model, such as the number of nodes in each layer of a neural network or the number of times an algorithm iterates over a dataset (epochs), to achieve the best performance.
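The simplest tuning strategy, exhaustive grid search, is sketched below. Because a one-variable linear model has no layers, this hypothetical example tunes the learning rate and the number of epochs instead of node counts; managed tuning services typically also offer smarter strategies such as random or Bayesian search. Every combination triggers a full training run, which is why tuning can consume so much compute.

```python
from itertools import product


def train_linear(data, lr, epochs):
    """Fit y = w*x + b with full-batch gradient descent on mean squared error."""
    w = b = 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def mse(params, data):
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def grid_search(train, val, grid):
    """Try every hyperparameter combination; keep the one with the lowest validation MSE."""
    best = None
    for lr, epochs in product(grid["learning_rate"], grid["epochs"]):
        params = train_linear(train, lr, epochs)
        score = mse(params, val)
        if best is None or score < best[0]:
            best = (score, {"learning_rate": lr, "epochs": epochs}, params)
    return best


train = [(i / 10, 2 * (i / 10) + 1) for i in range(11)]  # noiseless y = 2x + 1
val = [(x, 2 * x + 1) for x in (0.05, 0.55, 0.95)]
score, hyperparams, (w, b) = grid_search(
    train, val, {"learning_rate": [0.001, 0.1, 0.5], "epochs": [10, 500]}
)
print(hyperparams, round(w, 3), round(b, 3))
```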

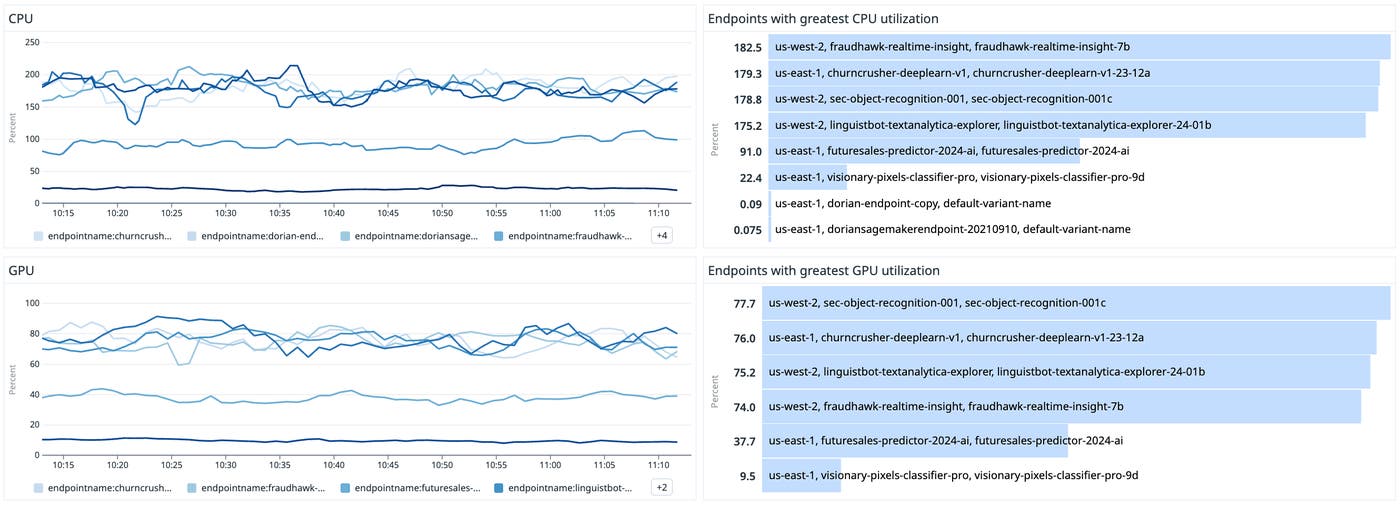

Rightsize CPU and GPU for performance and cost efficiency

Rightsizing CPU and GPU is important for model training from both a cost efficiency and performance optimization perspective. CPU and GPU impact infrastructure costs, and rightsizing ensures that you are using the optimal amount of resources necessary for your training without over-provisioning, which can lead to unnecessary expenses. For example, a more powerful GPU might speed up training but at a much higher cost, and the time saved may not justify the additional expense if the budget is a concern.
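The cost trade-off reduces to simple arithmetic: total cost is price per instance-hour times training hours times instance count. In this sketch, the instance names and prices are hypothetical, not real SageMaker or Vertex AI rates. A GPU that is four times as expensive but only about three times as fast costs more overall, so it is worth choosing only when a deadline requires it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceOption:
    name: str            # hypothetical label, not a real instance type
    hourly_price: float  # illustrative USD per instance-hour, not a real price
    est_hours: float     # estimated wall-clock training time on this option
    count: int = 1       # number of instances in the training job

    @property
    def cost(self) -> float:
        return self.hourly_price * self.est_hours * self.count


def cheapest_within_deadline(options, deadline_hours=None):
    """Return the lowest-cost option that finishes by the deadline, or None."""
    eligible = [
        opt for opt in options
        if deadline_hours is None or opt.est_hours <= deadline_hours
    ]
    return min(eligible, key=lambda opt: opt.cost, default=None)


small = InstanceOption("gpu-small", hourly_price=1.0, est_hours=10.0)
large = InstanceOption("gpu-large", hourly_price=4.0, est_hours=3.0)
print(small.cost, large.cost)
print(cheapest_within_deadline([small, large]).name)                    # no deadline
print(cheapest_within_deadline([small, large], deadline_hours=5).name)  # 5-hour deadline
```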

Striking the right balance between CPU and GPU resources can also lead to better model efficiency. While GPUs are designed to handle the parallel processing requirements of deep learning tasks, CPUs are essential for tasks like data preprocessing and serving the models’ results. An imbalance can lead to bottlenecks; for instance, a powerful GPU might be underutilized if the CPU cannot preprocess the data quickly enough, leading to idle GPU cycles.
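A back-of-the-envelope model shows how a CPU bottleneck leaves the GPU idle. This sketch assumes a steady-state two-stage pipeline in which preprocessing divides perfectly across CPU workers and, when prefetching is enabled, the next batch is prepared while the GPU works on the current one. The per-batch timings are hypothetical.

```python
def gpu_utilization(cpu_ms_per_batch, gpu_ms_per_batch, cpu_workers=1, overlapped=True):
    """Estimate the steady-state fraction of time the GPU spends computing.

    Assumes preprocessing work divides perfectly across CPU workers and, when
    `overlapped` is True, that the next batch is prepared while the GPU trains
    on the current one (prefetching).
    """
    cpu_ms = cpu_ms_per_batch / cpu_workers
    if overlapped:
        step_ms = max(cpu_ms, gpu_ms_per_batch)  # the slower stage sets the pace
    else:
        step_ms = cpu_ms + gpu_ms_per_batch      # stages run back to back
    return gpu_ms_per_batch / step_ms


# Preprocessing takes 120 ms per batch on one worker; the GPU needs only 40 ms.
print(gpu_utilization(120, 40, overlapped=False))  # GPU waits for every batch
print(gpu_utilization(120, 40))                    # prefetching alone is not enough
print(gpu_utilization(120, 40, cpu_workers=4))     # CPU keeps up; GPU stays busy
```

In this model, adding CPU workers raises GPU utilization from about 33% to 100% without touching the GPU at all, which is the kind of imbalance that rightsizing aims to catch.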

Model evaluation

Once models have been trained, you need to evaluate whether they are performing as expected and producing accurate inferences. Model evaluation for an e-commerce customer service chatbot may include testing whether models are able to identify user sentiment, generate appropriate responses to queries, and handle concurrent requests while maintaining performance. AWS, Google Cloud, and Azure each have useful model evaluation solutions; for instance, AWS customers can use SageMaker Canvas to visualize their model’s accuracy scores, and Google Cloud customers can evaluate models using Vertex AI.

ML practitioners often evaluate models through a validation technique called the holdout method, which involves splitting a dataset into two separate parts: a training set and a test set. Once a model is trained on the training set, its predictions are validated against the test set.
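The holdout method can be illustrated with a deliberately tiny model: a single decision threshold learned from the training set, then scored on a test set it never saw. The `frustration_score` feature and the data points are hypothetical. The model is perfect on its training data but only 75% accurate on the held-out data, and that gap is what the holdout method is designed to reveal.

```python
def accuracy(pairs):
    """Fraction of (prediction, label) pairs that match."""
    return sum(pred == label for pred, label in pairs) / len(pairs)


def fit_threshold(train):
    """Learn a decision threshold on a 1-D feature that maximizes training accuracy."""
    best_t, best_acc = None, -1.0
    for t in sorted({x for x, _ in train}):
        acc = accuracy([(int(x >= t), y) for x, y in train])
        if acc > best_acc:
            best_t, best_acc = t, acc
    return best_t


def holdout_evaluate(train, test):
    """Fit on the training set only, then score predictions on the unseen test set."""
    t = fit_threshold(train)
    return t, accuracy([(int(x >= t), y) for x, y in test])


# Hypothetical (frustration_score, escalated) pairs: 1 means the chat was escalated.
train = [(0.1, 0), (0.2, 0), (0.3, 0), (0.6, 1), (0.7, 1), (0.9, 1)]
test = [(0.15, 0), (0.55, 1), (0.65, 1), (0.95, 1)]
threshold, test_acc = holdout_evaluate(train, test)
print(threshold, test_acc)
```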

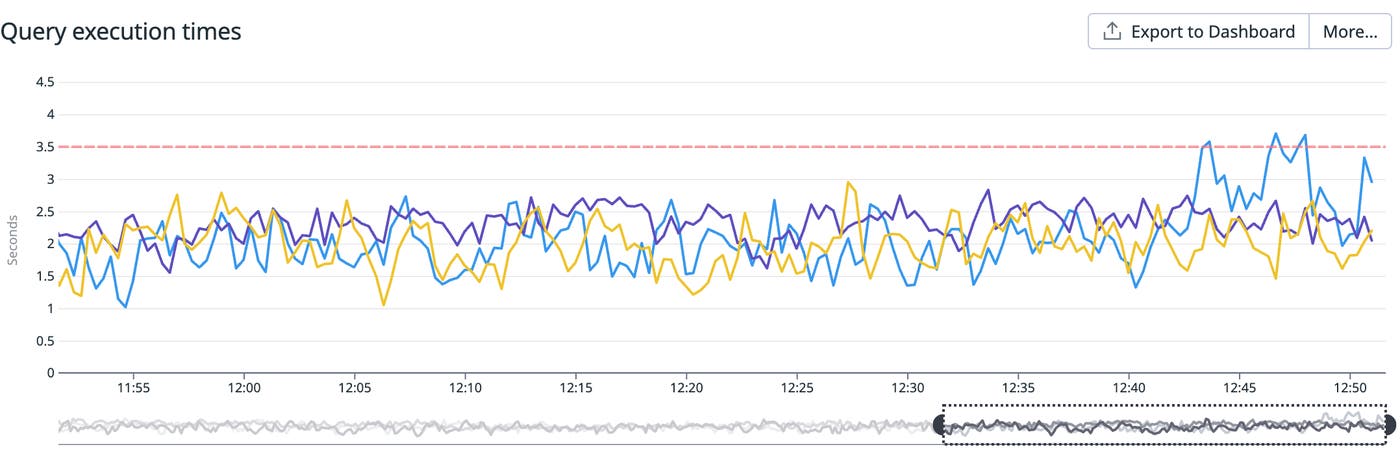

Monitor dataset query times and storage capacity

Test datasets are often saved in the managed platform provider’s cloud storage solution. For example, SageMaker users can save test datasets in Amazon S3, while Vertex AI users usually upload a model’s test dataset to a BigQuery table or a Cloud Storage object. Because these storage solutions house the datasets used during model evaluation, it’s important to monitor them alongside your managed ML platforms to ensure that they have sufficient capacity and remain performant enough to support timely and effective model assessment.

For instance, if you have opted to store your test dataset within BigQuery, you should alert on high query execution times (gcp.bigquery.query.execution_times.avg), which can lead to delays in receiving evaluation results and ultimately slow down your entire ML workflow.
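An alert like this can be expressed as a metric monitor query. The helper below only assembles the string, assuming Datadog’s metric monitor query format of `time_aggregation(time_window):space_aggregation:metric{scope} comparator threshold`. The `project_id:my-ml-project` scope and the threshold of 30 are hypothetical; check the metric’s unit before choosing a threshold.

```python
def metric_monitor_query(metric, threshold, *, scope="*", time_aggr="avg",
                         window="last_15m", space_aggr="avg", comparator=">"):
    """Build a metric monitor query string.

    Format: time_aggr(window):space_aggr:metric{scope} comparator threshold
    """
    # {{ and }} are literal braces in an f-string; {scope} is substituted.
    return f"{time_aggr}({window}):{space_aggr}:{metric}{{{scope}}} {comparator} {threshold}"


# Hypothetical scope tag and threshold.
query = metric_monitor_query(
    "gcp.bigquery.query.execution_times.avg",
    30,
    scope="project_id:my-ml-project",
)
print(query)
```

Generating queries from a helper like this keeps the window and aggregation consistent when you define the same kind of alert for several datasets or projects.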

Model deployment

ML platforms provide a wide variety of options for deploying your models so that they can start serving predictions. For instance, Azure Machine Learning enables you to deploy models to Azure Container Instances, Azure Functions, edge locations, and other targets. ML platforms also enable you to deploy models to other serving platforms such as TensorFlow Serving and MLflow.

Typically, models are either deployed as endpoints that client applications can send requests to or deployed for batch inference. Batch inference is best suited for use cases that involve large datasets that take longer to process, whereas deploying a model to an endpoint is best for real-time, low-latency inference, such as the inference our e-commerce customer service chatbot needs.

Track performance and resource usage of your model endpoints

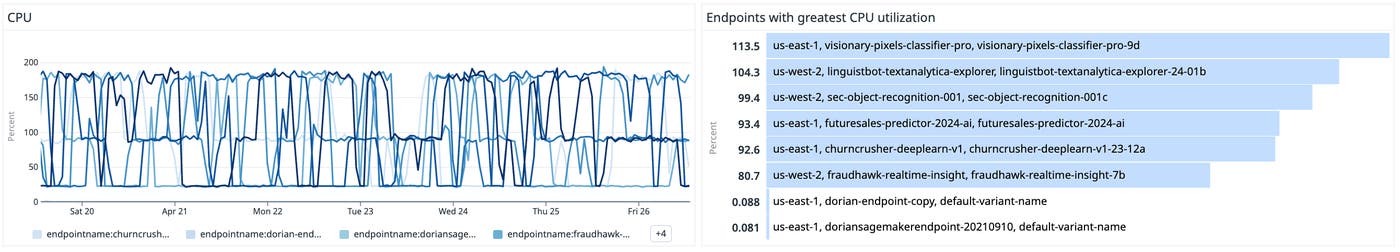

If you’re deploying ML models to endpoints for real-time inferencing, it’s important to ensure inferences are delivered quickly and maintain efficient resource usage. For example, we recommend that you alert on an unusually high percentage of CPU resources being used by an instance hosting a SageMaker model endpoint (aws.sagemaker.endpoints.cpuutilization). High CPU utilization may signal that the endpoint is under heavy load and experiencing performance bottlenecks. Conversely, consistently low CPU utilization might suggest that the endpoint is over-provisioned and not being used efficiently.

Similarly, you should set alerts on consistently high memory utilization (aws.sagemaker.endpoints.memory_utilization). High memory utilization can indicate potential performance issues. For instance, a model hosted on an endpoint with a memory leak may eventually crash or cause request timeouts.

If either CPU or memory utilization is consistently high, consider scaling up to a larger instance type with more CPU or memory, or optimize your model and application to use resources more efficiently.
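The scale-up and over-provisioning guidance above can be sketched as a small classifier over a window of samples. This sketch assumes that endpoint CPU utilization is summed across cores (so it can range up to 100% times the number of vCPUs, as the AWS CloudWatch documentation describes for SageMaker endpoint instances), so it normalizes per core first. The 80% and 20% thresholds are hypothetical. “Consistently” is modeled as every sample in the window crossing the threshold.

```python
def rightsizing_hint(cpu_samples, mem_samples, vcpus=1, high=80.0, low=20.0):
    """Classify an endpoint instance as 'scale_up', 'scale_down', or 'ok'.

    cpu_samples are assumed to be summed across cores (0 to 100 * vcpus percent),
    so they are normalized per core first; mem_samples are 0-100 percent.
    """
    cpu = [c / vcpus for c in cpu_samples]
    if min(cpu) > high or min(mem_samples) > high:
        return "scale_up"    # larger instance type, or optimize the model
    if max(cpu) < low and max(mem_samples) < low:
        return "scale_down"  # likely over-provisioned
    return "ok"


print(rightsizing_hint([340, 360, 350], [50, 55, 52], vcpus=4))
print(rightsizing_hint([100, 390, 100], [50, 50, 50], vcpus=4))  # brief CPU spike only
print(rightsizing_hint([20, 40, 30], [10, 12, 14], vcpus=4))
```

Without the per-core normalization, a four-vCPU instance at 340% CPU could be misread as far beyond capacity, or a reading of 80% could be mistaken for heavy load when each core is only 20% busy.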

Model monitoring

In addition to monitoring the health and throughput of your machine learning models, you need to ensure that your models remain reliable. While the behavior of conventional software systems is largely determined by their underlying code, the behavior of ML systems is driven by the data they learn from. This means any data quality issues or changes in input data can significantly impact your models’ performance, making it imperative to continuously monitor the quality of your models’ predictions.

SageMaker, Azure Machine Learning, and Vertex AI all offer model monitoring tools that allow you to automatically detect and alert on issues that can degrade model performance. Common issues include data drift, which occurs when a model’s input data changes significantly over time, and skew, which occurs when production data deviates from the data used to train a model. Monitoring tools can also help you flag bias drift, in which models develop unintended imbalances in their predictions due to erroneous data.
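To make “changes significantly” measurable, one common technique for a single numeric feature is the population stability index (PSI), which compares binned proportions of a baseline sample (for example, training data) with a production sample. This sketch is one illustrative drift measure, not necessarily what the platforms’ monitoring tools compute internally. A commonly cited rule of thumb treats PSI below 0.1 as little shift and above 0.25 as significant shift. The sample data is hypothetical.

```python
import math


def _bin_proportions(values, lo, width, bins, eps):
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)  # clamp the maximum value into the last bin
        counts[idx] += 1
    # eps keeps empty bins from producing log(0) or division by zero below
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline (e.g., training) sample and a production sample."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against all values being identical
    e = _bin_proportions(expected, lo, width, bins, eps)
    a = _bin_proportions(actual, lo, width, bins, eps)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


training = [i / 100 for i in range(100)]          # roughly uniform on [0, 1)
production = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values
print(round(population_stability_index(training, training), 4))
print(round(population_stability_index(training, production), 2))
```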

Though resources such as CPU, GPU, and memory all play a role in supporting model monitoring, the emphasis of this step of the ML workflow should be on validating the reliability and accuracy of models in production to ensure you are delivering accurate inferences to your customers.


Optimize model efficacy with standard RED (rate, error, duration) metrics

After your ML models have been successfully trained and are in production, you’ll want visibility into common RED (rate, error, duration) metrics:

Rate: Number of requests per second

Error: Percent of failing requests

Duration: Amount of time per request

These metrics are crucial because they directly impact your end users’ experience and can help you determine the overall performance of your deployment.
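As a rough illustration, all three RED metrics can be derived from per-request records collected over a time window. The `Request` record shape below is a hypothetical assumption. Duration is reported both as an average and as a nearest-rank 95th percentile, because an average can hide a tail of slow requests.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    duration_ms: float  # time taken to serve the request
    ok: bool            # False if the request failed


def red_metrics(requests, window_s, pct=95):
    """Compute rate, error, and duration metrics for requests seen in one window."""
    n = len(requests)
    if n == 0:
        return {"rate_rps": 0.0, "error_pct": 0.0, "avg_ms": None, f"p{pct}_ms": None}
    errors = sum(1 for r in requests if not r.ok)
    durations = sorted(r.duration_ms for r in requests)
    rank = math.ceil(n * pct / 100)  # nearest-rank percentile
    return {
        "rate_rps": n / window_s,          # Rate: requests per second
        "error_pct": 100 * errors / n,     # Error: percent of failing requests
        "avg_ms": sum(durations) / n,      # Duration: mean time per request
        f"p{pct}_ms": durations[rank - 1], # Duration: tail latency
    }


# 20 hypothetical requests in a 10-second window; two of them fail.
reqs = [Request(duration_ms=10.0 * (i + 1), ok=(i % 10 != 0)) for i in range(20)]
print(red_metrics(reqs, window_s=10))
```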

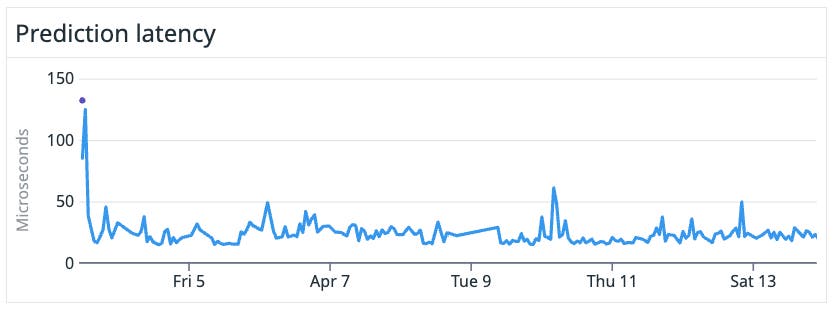

For example, let’s say your customer service chatbot is hosted on Amazon SageMaker. To make sure that it serves customer queries without delay, you should set up a threshold alert that notifies you if your model latency exceeds 1,000 milliseconds (1 second). If you are a Google Cloud Vertex AI customer, alerting on the “prediction latency” metric achieves the same result. It’s important to alert on these latencies because high model latency can negatively impact customer satisfaction: if an interactive chatbot takes too long to respond to a prompt, customers will lose patience and likely turn to another service.
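One detail worth getting right is units: Amazon CloudWatch reports SageMaker’s ModelLatency metric in microseconds, so a 1,000 ms threshold corresponds to 1,000,000 µs. This sketch assumes datapoints arrive in microseconds. It alerts only after several consecutive datapoints breach the threshold. Three consecutive breaches is a hypothetical design choice that trades a little detection speed for fewer false alarms.

```python
US_PER_MS = 1_000  # assumes datapoints are in microseconds, like CloudWatch's ModelLatency


def latency_alert(datapoints_us, threshold_ms=1000.0, consecutive=3):
    """Return True once `consecutive` datapoints in a row exceed threshold_ms.

    Requiring several breaches in a row avoids alerting on a single slow datapoint.
    """
    streak = 0
    for value_us in datapoints_us:
        if value_us / US_PER_MS > threshold_ms:
            streak += 1
            if streak >= consecutive:
                return True
        else:
            streak = 0  # any datapoint under the threshold resets the streak
    return False


print(latency_alert([400_000, 1_200_000, 1_500_000, 900_000, 1_100_000]))
print(latency_alert([1_200_000, 1_300_000, 1_250_000]))
```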

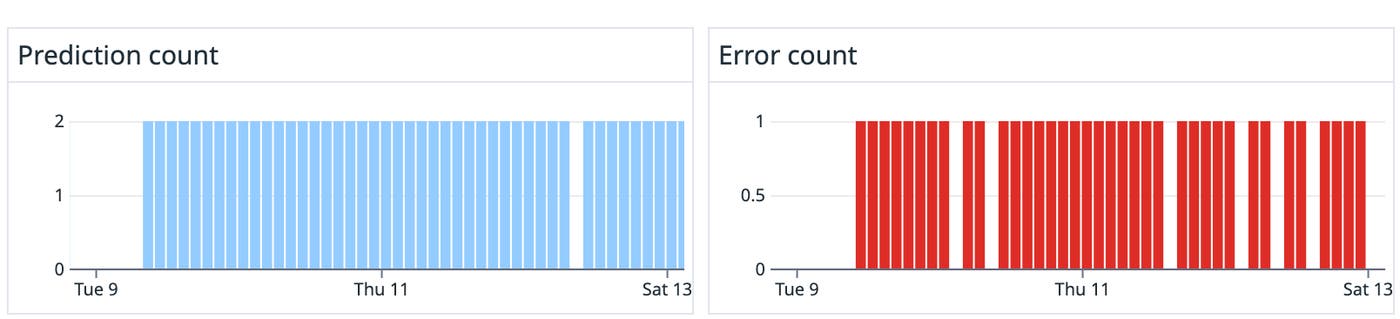

You should also pay close attention to the request load of your models so that you can better understand your demand. Azure Machine Learning, Vertex AI, and SageMaker all have quotas that define the maximum amount of cloud resources your workloads are allowed to use. Monitoring the volume of requests coming to your models helps you determine whether you are close to hitting your quotas; exceeding them can lead to errors and interrupted service.
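A simple headroom check compares observed demand with a quota and warns before the quota is reached. The per-minute request counts, the 1,000 requests-per-minute quota, and the 80% warning level below are hypothetical; actual quota names, units, and limits differ by platform and region.

```python
def quota_headroom(requests_per_minute, quota_per_minute, warn_at=0.8):
    """Compare peak observed demand with a request quota.

    Uses the busiest minute rather than the average, because a per-minute quota
    can be exceeded in a single busy minute even when the average is well below it.
    """
    if quota_per_minute <= 0:
        raise ValueError("quota must be positive")
    usage = max(requests_per_minute) / quota_per_minute
    if usage >= 1.0:
        status = "EXCEEDED"
    elif usage >= warn_at:
        status = "WARN"
    else:
        status = "OK"
    return status, usage


# Hypothetical per-minute request counts against a hypothetical 1,000 RPM quota.
print(quota_headroom([500, 650, 700], quota_per_minute=1000))
print(quota_headroom([500, 850, 600], quota_per_minute=1000))
```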

Lastly, monitoring errors ensures that your models remain responsive and continue to deliver predictions. For example, Vertex AI emits a “prediction error percentage” metric which tracks the rate of errors produced by a specific model. High prediction error rates point to issues such as resource contention or potential outages. You can also monitor prediction counts alongside error counts to quickly see the ratio of predictions to errors.

Start monitoring your managed ML platforms today

In this post, we walked through the different steps of an ML workflow within a managed platform and what metrics are important to monitor to ensure that ML-powered applications can perform as expected. Whether you work with AWS, Azure, or Google Cloud, you can use the Datadog suite of integrations to gain visibility into the health and performance of your ML workflows hosted on managed ML platforms. If you aren’t already using Datadog, sign up today for a 14-day free trial.